Concerned over Apple iPhone's third-party development and deployment model? Yep.

In a much ballyhooed media event, Apple released the iPhone SDK at a press conference last week. I've been watching the wire to see if other security researchers are as concerned about Apple's development and deployment model as I am. They are.

A good friend and colleague at Ernst & Young, Nitesh Dhanjani, posted about the subject on his blog this morning. If you haven't read and subscribed to Nitesh's blog, you are missing some really great content. Specifically, you should check out Nitesh's discussions on phishing. So after a shameless plug for a friend, back to the matter at hand. Nitesh brought up some relevant points, and it will be interesting to see how these concerns play out.

Nitesh pointed to a quote from Steve Jobs during the iPhone SDK Press Conference:

If they write a malicious application we [will] track them down and tell their parents.

As Nitesh mentioned, this means that the iPhone applications will need to be digitally signed by Apple and the developers will be required to register with Apple. What kind of information will Apple require from developers? How will this information be stored? How does Apple protect the intellectual property of those developing on their platform?

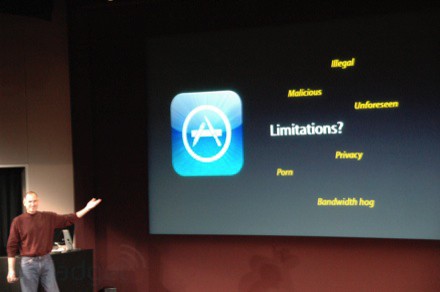

Further, as Nitesh mentions, Jobs points to a slide (shown above) where he describes what kind of apps will not be allowed on the iPhone:

- Apple may have a difficult time auditing applications to ensure they meet their criteria. What is the absolute definition of malicious in the given context? Malicious to whom? The end user, Apple, or AT&T? Perhaps all of the above. Now, how does Apple go about obtaining assurance whether a given application is malicious or not? Will someone try out every application that is submitted? Will someone at Apple review the source code of every application to ensure it does not invoke any malicious operations and only calls published APIs?

- Applications may not run in the background. This is quite likely to be a decision based upon processing resource constraints. Note that Apple's own iPhone applications such as Mail, iPod, and SMS do run in the background.

- The Unforeseen clause means that Apple reserves the right to ban any application at any time. Will they be reasonable with the developers? I don't see why they wouldn't be as long as it doesn't hurt their bottom line, for example:

11:32AM - We asked: Will SIM unlock software be considered software not allowed in the app store? A: Steve: (pause) "... yes." Laughter.

Perhaps the most interesting concern Nitesh brought up is how Apple goes about obtaining assurance that a given application is not malicious. Obviously some sort of source code audit must be performed to determine whether there are any backdoors, but who takes on that responsibility? Maybe more importantly, who is negligent if a backdoor gets through?

What concerns me personally is who is reviewing the security of these applications. With Apple signing off on these applications, can users reasonably expect the apps to be secure? Will the previously mentioned source code audit cover vulnerabilities as well as malicious code? One could make the case that vulnerable applications like QuickTime, which has been repeatedly targeted and exploited, are as threatening as malicious code. Will applications be reviewed for such vulnerabilities before being placed on the iPhone?

With mobile devices such as the iPhone being coupled more tightly with internal corporate resources, it's clear that there is a great bang-for-the-buck factor for attackers, not to mention that hacking Apple and hacking mobile devices seem to be two very sexy subjects for security researchers right now. It will be interesting to see how Apple's development and deployment model stands up to attacks.

-Nate