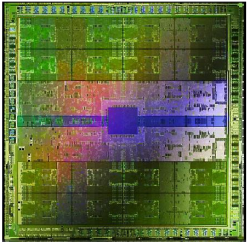

NVIDIA drip-feeds us more info on next-gen GF100 "Fermi" GPU

NVIDIA has decided to drip-feed us some more information on its upcoming GF100 "Fermi" next-generation GPU.

We already know quite a bit about the "Fermi" architecture. For example, we know that it will feature some 3 billion transistors (compare this to the little over 2 billion present in ATI's Radeon HD 5870) and make use of TSMC's 40nm process. The Fermi GPU will double the number of CUDA cores, to as many as 512. On top of that, Fermi will support error-correcting code (ECC) to guard against soft errors, which should allow NVIDIA to push the hardware to higher densities. Fermi GPUs will also offer better C++ support.


I could delve into a lot of technical stuff relating to Fermi and the GF100, but it's likely to bore you to tears, so I'll start by giving you the basics and then dig a little deeper into some of the more interesting tech.

The GF100 will have:

- 512 CUDA processors (Unified Shader cores)

- 16 geometry units

- 4 raster units

- 64 texture units

- 48 ROP engines

- 384-bit GDDR5 memory bus

- DirectX 11 support

Each GPU is made up of four separate Graphics Processing Clusters (GPCs), as shown in the following block diagram:


Breaking this down further, we can look at each of the streaming multiprocessor (SM) cores within the GPCs separately:

Each SM comprises 32 CUDA cores, 4 texture units, a polymorph engine, and 64KB of on-chip memory that can be configured as either 16KB of L1 cache with 48KB of shared memory or 48KB of L1 cache with 16KB of shared memory.

Notice how there are two types of engine - a raster engine which handles screen space processing (that is, on-screen display), and a polymorph engine for world space processing (this is the engine that conjures up the 3D environment that will be sent for on-screen display processing). These two engines offer greater performance and versatility.
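As a quick sanity check, the headline totals can be worked back from the per-SM figures. What follows is my own back-of-envelope arithmetic rather than anything published by NVIDIA. It assumes that the "geometry units" in the spec list are the per-SM polymorph engines and that each GPC carries a single raster engine; the function name `derive_totals` is made up for this sketch.

```python
# Back-of-envelope cross-check of the GF100 figures quoted in this article.
# Figures taken from the article:
TOTAL_CUDA_CORES = 512
GPC_COUNT = 4
CORES_PER_SM = 32
TEXTURE_UNITS_PER_SM = 4
POLYMORPH_ENGINES_PER_SM = 1

# Assumption (not stated in the article): one raster engine per GPC.
RASTER_ENGINES_PER_GPC = 1


def derive_totals():
    """Derive chip-wide unit counts from the per-SM and per-GPC figures."""
    sm_count = TOTAL_CUDA_CORES // CORES_PER_SM
    return {
        "sm_count": sm_count,
        "sms_per_gpc": sm_count // GPC_COUNT,
        "texture_units": sm_count * TEXTURE_UNITS_PER_SM,
        # Assumption: "geometry units" means polymorph engines.
        "geometry_units": sm_count * POLYMORPH_ENGINES_PER_SM,
        "raster_units": GPC_COUNT * RASTER_ENGINES_PER_GPC,
    }


if __name__ == "__main__":
    for name, value in derive_totals().items():
        print(f"{name}: {value}")
```

Under those assumptions the numbers line up with the spec list: 16 SMs spread four to a GPC, giving 64 texture units, 16 geometry units and 4 raster units.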

So what does this all mean? Well, it's all interesting technology, but there are still a lot of unanswered questions. First, there's practical stuff such as price, power consumption, clock speeds, and thermal issues. Then there's the all-important question of how much benefit this will offer end users, especially gamers. The problem as I see it is that games aren't pushing graphics anywhere near as hard as they once did, and for most people a $99 graphics card is more than enough. Those who want to side-step the whole PC gaming (and upgrading) bandwagon can buy a games console that'll give them years of gaming pleasure for the price of a high-performance GPU.

Until we see retail units, there are still more questions than answers regarding Fermi.
