MS Office 2007 versus OpenOffice.org 2.2 shootout

After yesterday's blog post about the relevance of feature bloat, I figured I would follow up with some quantitative analysis of performance to measure resource bloat. This isn't the first time I've measured the CPU and memory consumption of Microsoft Office and OpenOffice.org; I have a whole series on it dating back to 2005. This time, I'm pitting the Microsoft-backed OOXML (Office Open XML) format against the OASIS-backed ODF (OpenDocument Format), using Microsoft Office 2007 and OpenOffice.org 2.2.

Before I start, I'm going to disclose the hardware, OS, and software I'm using to measure these two office suites.

Hardware:

- Intel Core 2 Duo 2.13 GHz
- 2 GB DDR2-800
- ATI X800 PCI-Express video card
- 500 GB SATA-II hard drive housing the sample files

Software:

- Windows Vista
- Microsoft Sysinternals Process Explorer (resource measurement)
- Microsoft Office 2007
- OpenOffice.org 2.2

Baseline measurements for opening each application

| Application | Kernel CPU (ms) | User CPU (ms) | Total CPU (ms) | Peak memory (KB) | I/O reads | I/O writes | I/O other |
|---|---|---|---|---|---|---|---|
| MS Excel | 234 | 328 | 562 | 24308 | 14 | 10 | 1422 |
| OO.o Calc | 625 | 593 | 1218 | 47788 | 364 | 12 | 13106 |
| MS Word | 171 | 390 | 562 | 31776 | 136 | 13 | 1957 |
| OO.o Writer | 343 | 687 | 1031 | 46700 | 365 | 8 | 13120 |
| PowerPoint | 250 | 343 | 593 | 27796 | 14 | 10 | 1403 |
| Impress | 484 | 843 | 1328 | 52804 | 921 | 16 | 14849 |
| MS Access | 484 | 531 | 1015 | 25836 | 12 | 9 | 967 |
| OO.o Base | 781 | 906 | 1687 | 49984 | 1708 | 176 | 22832 |
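To put the baseline gap in relative terms, the totals in the table above can be turned into per-pair ratios. The short Python sketch below is only an illustration: the figures are copied from the table, while the pairing of applications and all variable names are my own assumptions and not part of the measurement setup.

```python
# Baseline figures copied from the table above:
# (application, total CPU time in ms, peak memory in KB).
# The pairing and the names used here are illustrative assumptions.
baseline_pairs = {
    "spreadsheet": (("MS Excel", 562, 24308), ("OO.o Calc", 1218, 47788)),
    "word processor": (("MS Word", 562, 31776), ("OO.o Writer", 1031, 46700)),
    "presentation": (("PowerPoint", 593, 27796), ("Impress", 1328, 52804)),
    "database": (("MS Access", 1015, 25836), ("OO.o Base", 1687, 49984)),
}

baseline_ratios = {}
for kind, (ms_app, oo_app) in baseline_pairs.items():
    # Ratio > 1 means the OpenOffice.org application used more of the resource.
    cpu_ratio = oo_app[1] / ms_app[1]
    mem_ratio = oo_app[2] / ms_app[2]
    baseline_ratios[kind] = (cpu_ratio, mem_ratio)
    print(f"{oo_app[0]} vs {ms_app[0]}: "
          f"{cpu_ratio:.2f}x CPU time, {mem_ratio:.2f}x peak memory")
```

On these numbers, every OpenOffice.org application lands between roughly 1.5 and 2.2 times its Microsoft counterpart for both startup CPU time and peak memory.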

Office 2007 base memory consumption went up significantly compared to the Office 2003 I measured last year, but it's still significantly less than OpenOffice.org 2.2: the Microsoft applications peak at about 24,000 to 32,000 KB at startup, versus about 47,000 to 53,000 KB for their OpenOffice.org counterparts. Some of the OpenOffice.org applications, like Base, require Java to run, and their memory consumption spikes to over 70 megabytes as soon as you start navigating the interface. However, the difference between Microsoft and OpenOffice.org base resource consumption has gotten smaller. Next, we test the CPU and memory utilization of Microsoft Excel and OpenOffice.org Calc when opening the same 16-sheet test file.

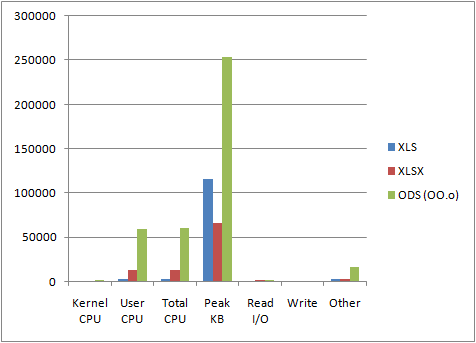

Opening large spreadsheet

| File format (application) | Kernel CPU (ms) | User CPU (ms) | Total CPU (ms) | Peak memory (KB) | I/O reads | I/O writes | I/O other |
|---|---|---|---|---|---|---|---|
| XLS (MS) | 265 | 2046 | 2312 | 115548 | 39 | 17 | 2376 |
| XLSX (MS) | 296 | 12406 | 12703 | 65548 | 687 | 19 | 1854 |
| ODS (OO.o) | 968 | 58875 | 59843 | 253680 | 899 | 22 | 15822 |

From these results, we can see that the OpenOffice.org ODF XML parser (while vastly improved) is still about 5 times slower than Microsoft's OOXML parser: roughly 59.8 seconds of total CPU time for the ODS file versus 12.7 seconds for the XLSX file. OpenOffice.org also consumes nearly 4 times as much RAM to hold the same data (253,680 KB versus 65,548 KB at peak). While OpenOffice.org continues to have fewer features than Microsoft Office, it continues to consume far more resources than Microsoft Office does.
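The "about 5 times" and "nearly 4 times" figures come straight from dividing the ODS row by the XLSX row. A minimal Python sketch of that arithmetic follows; the numbers are taken from the large-spreadsheet table, and the dictionary and variable names are hypothetical, chosen only for this illustration.

```python
# Figures copied from the "Opening large spreadsheet" table:
# file format -> (total CPU time in ms, peak memory in KB).
# Names are illustrative assumptions, not part of the test setup.
large_file = {
    "XLS (MS)": (2312, 115548),
    "XLSX (MS)": (12703, 65548),
    "ODS (OO.o)": (59843, 253680),
}

ods_cpu, ods_mem = large_file["ODS (OO.o)"]
xlsx_cpu, xlsx_mem = large_file["XLSX (MS)"]

# How much more CPU time and peak memory ODS needed than XLSX.
ods_vs_xlsx_cpu = ods_cpu / xlsx_cpu
ods_vs_xlsx_mem = ods_mem / xlsx_mem

print(f"ODS vs XLSX CPU time:    {ods_vs_xlsx_cpu:.2f}x")
print(f"ODS vs XLSX peak memory: {ods_vs_xlsx_mem:.2f}x")
```

Rounded, that works out to about 4.7 times the CPU time and about 3.9 times the peak memory.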

Even though these results still show drastic differences in CPU and memory consumption between MS Office 2007 and OpenOffice.org 2.2, the gap is not as extreme as in the results I measured last year. It would appear that OpenOffice.org 2.2 has gotten significantly better than version 2.0, but it still has a lot to work on. The official OpenOffice.org performance-tuning wiki is tracking some of these improvements. I praise the project's recent efforts and hope they keep it up, because it will only bring more competition to the table. So while I may still consider OpenOffice.org a resource pig, the pig has definitely lost some weight.