VMFS-3, How Do I Despise Thee

The VMWare Cluster Locking File System, version 3 (VMFS-3) is one of the core technologies used in VMWare ESX/vSphere 4 virtual infrastructure environments. Unfortunately, it's also a completely proprietary black box that makes interoperability nearly impossible.

One of the perils of being a practicing systems integration expert versus someone who strictly writes about or reports on technology is that when it comes to taking care of paying customers versus attending trade shows, my customers come first. So while I would love to attend every industry trade show that would allow me to network with other industry peers and touch base with the companies that I write about, it's not always possible.


VMWorld 2009 in San Francisco was a trade show that I would have loved to have attended this week, but the practical realities of having to actually use the technology in question -- VMWare vSphere -- prevented me from being able to cover the event. In this case, I had a customer that had to perform a business continuity recovery exercise over a 48-hour period, which required my assistance as the VMWare lead, working round-the-clock shifts in a cold datacenter, sleep deprived, bugged-out on fluorescent lighting and hopped up on dispenser machine coffee, Dunkin' Donuts Munchkins and MSG-laced snack foods.

As a best practice, I normally would recommend that production VMWare data be SAN replicated over a Wide Area Network, but in this case the customer, for whatever reason (probably as a cost-saving measure), chose to physically send us actual FAT32-formatted hard disks containing copies of the VM directories with the .VMX and .VMDK files.

No big deal, right? Just physically attach the USB/eSATA hard disks to the VMWare ESX server's USB 2.0 or eSATA ports and copy the files in. Won't be the fastest of restores, but it will work just fine.

Uh, hold on there cowboy. Sorry, you can't do that.

Had we been dealing with just about any other x86 operating system and/or hypervisor, the procedure I described above would work just fine -- on Windows Server 2008 Hyper-V and on a multitude of Linux distributions, UNIX, Xen flavors and KVM included. Hell, even on Macs. But on VMWare ESX, that just isn't the case.

You see, VMWare's ESX bare metal hypervisor is a black box. The only way you can move data in and out of its VMFS-3 file system is with the proprietary tools VMWare provides: either the VMWare vCenter Client, which is used to remotely administer an ESX box from a Windows workstation or server, or the Linux command line console. The console is only available on the full-blown VI3/ESX 3.5 or vSphere 4.0 product, not on the embedded ESXi version, which is becoming increasingly popular in environments using server blades.

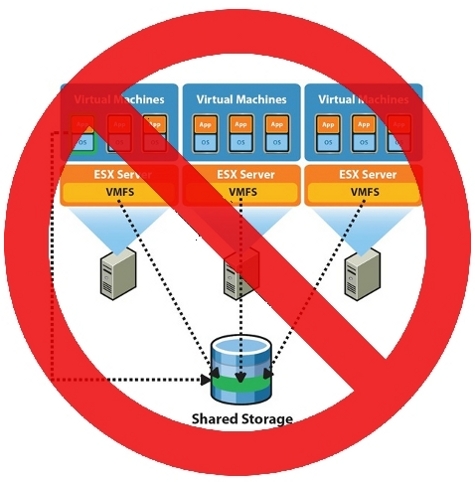

For those not familiar, the ESX hypervisor itself can talk to local VMFS-3 storage, or to iSCSI or fiber SAN-attached VMFS-3 storage. It can also talk to remote NFS storage through its vmkernel interface over the network. However, it CANNOT talk to the USB ports on the hardware the ESX server itself is running on, nor can it locally mount any file system other than its own VMFS, or media mounted in the CD-ROM or DVD drive.

So if someone provides you with a disk that contains copies of your VMWare virtual machines, how do you transfer it? Well, in our case, since we didn't have replicated VMFS-3 LUNs that we could just connect to as a regular VMWare ESX datastore using the SAN, we had to connect the eSATA drives to a PCI eSATA adapter hooked up to a Red Hat Linux server, export the storage over NFS, and use the vmkernel NFS interface on ESX to copy the files across the gigabit LAN using the Windows-based VMWare Infrastructure Client.

Typically, the vmkernel NFS interface is used in conjunction with fast NAS appliances with large RAID stripes, such as NetApp devices and the like, which cost tens of thousands of dollars, not with consumer grade hard disks that you buy at the local Staples or Best Buy hooked up to some random Linux server. So to say that this jury-rigged, cobbled-together solution was not optimal for data transfer would be a gross understatement.

Suffice it to say that it took us well over 10 hours to copy 1 Terabyte of data across the network using this method, and we had several false starts and several aborted transfers, including a few ESX crashes in the process due to network and contention problems, so it really took us about 12-14 hours to do the job and get all our VMs fired up before we could even begin the process of incremental tape restores. I was not a happy camper, and neither was the customer.
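To put that number in perspective, here is a rough back-of-the-envelope sketch of the effective throughput. It assumes a decimal terabyte (10^12 bytes), a flat 10 hours of copying, and a nominal 1000 Mbit/s for the gigabit LAN; the function names are made up for illustration, and the real figures surely varied with the false starts and aborted transfers.

```python
# Rough estimate only: assumes 1 TB = 10**12 bytes and a nominal
# 1000 Mbit/s gigabit link. Function names are illustrative.

def throughput_mb_per_s(total_bytes: float, hours: float) -> float:
    """Average transfer rate in megabytes per second (decimal MB)."""
    seconds = hours * 3600
    return total_bytes / seconds / 1e6


def link_utilization(mb_per_s: float, link_mbit_per_s: float = 1000.0) -> float:
    """Fraction of the link's nominal bandwidth that the transfer used."""
    return (mb_per_s * 8) / link_mbit_per_s


if __name__ == "__main__":
    rate = throughput_mb_per_s(1e12, 10)
    print(f"average rate: {rate:.1f} MB/s")
    print(f"share of a gigabit link: {link_utilization(rate):.0%}")
```

Under those assumptions the copy averaged somewhere under 30 MB/s, a small fraction of what a gigabit link can nominally carry.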

An alternative to restoring data by this method would have been to use the VMware Consolidated Backup (VCB) product, which is in effect a VMFS-3 file system driver for Windows.

VCB is used as a "gateway" so that a single Windows server with SAN connectivity to VMWare's clustered storage can be used for speedier out-of-band backup and restore of the VMDK files, instead of doing slower agent-based network backup and restore from within the virtual machines themselves in conjunction with popular network-based backup software such as IBM Tivoli Storage Manager, Networker, CA ArcServe or NetBackup. However, VCB costs (a lot of) money, and the customer in question didn't have a license for it.

Let's face it -- enterprises which choose VMWare as their Virtual Infrastructure environment of choice are trusting their data to a proprietary filesystem and a hypervisor which is a black box closed system.

Indeed, Microsoft's Hyper-V and their NTFS file system are also proprietary, but enough reverse engineering has been done over the last decade to expose NTFS so that it is read-writable by Linux and UNIX, and all modern Microsoft OSes as well as Linux and UNIX OSes through SAMBA can write to networked NTFS volumes without any problem whatsoever. Recently, through Microsoft's Open Specification Promise, SAMBA is also becoming more and more "kosher" as a fully certified CIFS/SMB networking solution with the full cooperation of Microsoft.

There are even Linux ext3 file system drivers for Windows should anyone really care to copy data in that direction without the use of SAMBA networking. With Windows and Linux, there are multiple methods for accessing and moving data stored on disk. Now, would I like to see NTFS's specifications fully opened or a Microsoft certified Linux NTFS driver in Open Source? Sure, but compared to VMWare, Microsoft is utter interoperability paradise.

Providing a network interface via VMWare ESX through NFS and VCB just isn't good enough. VMWare needs to expose as much of the hypervisor and the underlying file system as possible so that better tools for data transfer, data forensics and recovery can be created.

It doesn't help that VMWare is the industry leader in virtualization and that it abuses its market position by nickel-and-diming its customers for such things as simple file system interoperability tools like VCB. Backup/Restore software and other operating systems should be able to talk directly to VMFS, period.

It's time that VMWare's customers demand better interoperability from the company and its products -- or seek more open hypervisor solutions like Hyper-V, Xen and KVM.

Is VMWare abusing its position by locking you out of your own data? Are they making things unnecessarily difficult, proprietary and costly? Talk Back and Let Me Know.