For Apple, an inflection point

How do you follow a legendary act? By making sure the audience doesn't remember what came before you.

No, I'm not talking about Apple co-founder Steve Jobs, whose death in 2011 pushed chief operating officer Tim Cook into the limelight. I am referring to the unprecedented run of success that the company enjoyed between 2001 and 2011, a rough approximation of the span of time between when Apple introduced the iPod portable music player that would make it a household name and the death of its spiritual leader Jobs.

In that period, the company entered and dominated (to the point of synonymity) the portable media player market, then radically altered the business model for its content; set a visual and functional benchmark for what would come to be known as the "smartphone," then commanded the segment across continents; created a mainstream market for the slate-style tablet computer, then dominated it; introduced a laptop computer with market-leading thinness, then popularized it; and finally, knit them all together with a single, cloud-based account system that made it difficult indeed to use an Apple product without being a click or tap away from one of its various content, software or services stores. Through it all, the company built and burnished its tony reputation.

Jobs' passing coincided with a moment when the company's strategy was in full bloom. The swell (and product pipeline) would carry the company for more than a year after Jobs' death.

But what happens when the momentum begins to recede? What then?

Mark the end of a chapter, and start a new one.

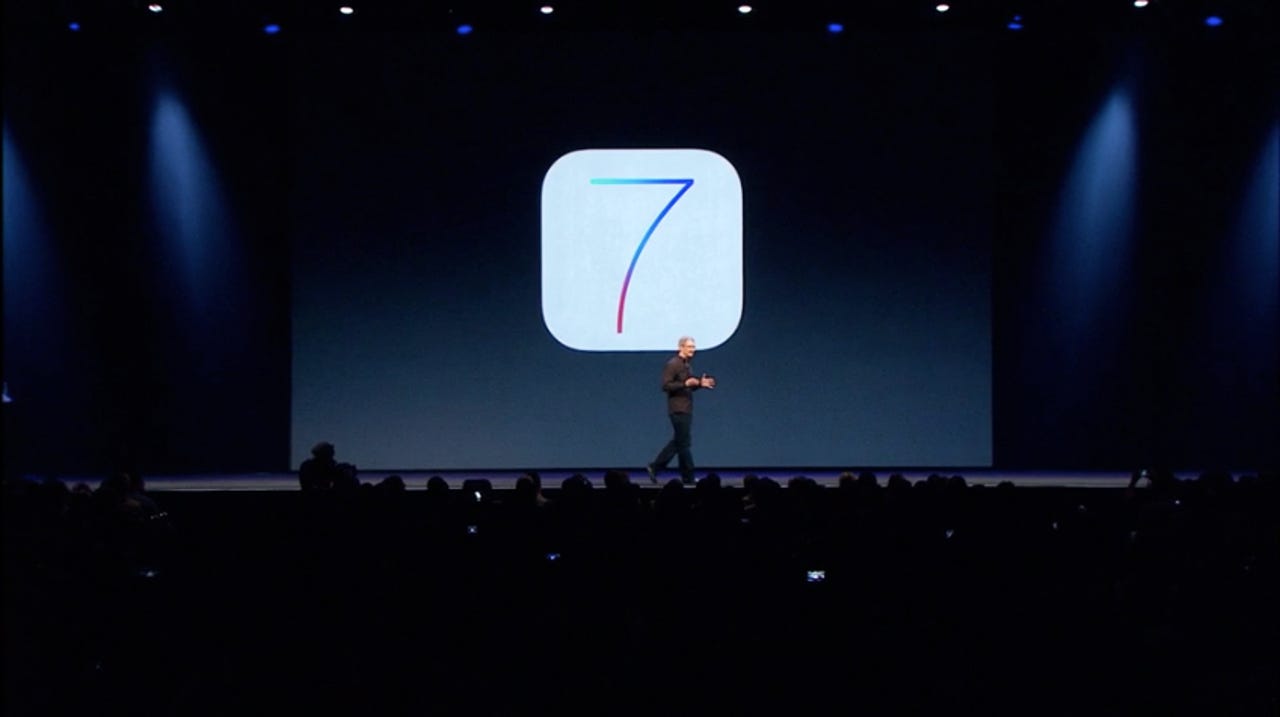

During the keynote of its annual Worldwide Developers Conference yesterday, Apple took what I believe to be its first real, albeit careful, steps into its next phase: it introduced a new, non-feline naming scheme for its desktop operating system, OS X, and it unveiled a redesign of its mobile operating system, iOS. (It also announced other things.)

The milestones matter.

For OS X, Apple's new California-styled naming scheme for the next versions of its 13-year-old operating system ("Mavericks," named after the popular U.S. surfing spot, will be the first) will likely see the desktop computer through its final years of broad consumer popularity and into an era of narrower, more work-oriented use.

For iOS, the new version represents the company's first attempt at reinvention—and I use that term without implied exaggeration, because much is the same—rather than refinement. It is a deliberate departure, and "the biggest change to iOS since the introduction of the iPhone," Cook said several times during the presentation, doing his best to draw a line between what came before, and what comes next.

Mark.

The aesthetic and functional changes in iOS 7 are a mixed bag, at best. ("Simply confusing," Josh Topolsky writes at The Verge, with some disgust.) Change is always a hard pill to swallow, but in this case, the new operating system's idiosyncrasies seem to reflect chief executive Cook's October 2012 management decision to dismiss the contentious Scott Forstall, formerly senior vice president of iOS, and assign his duties to other senior executives.

Mark.

"Without its Decider-in-Chief," Bloomberg Businessweek wrote in 2011, "the company must fundamentally change the way it works." That sentence was referring to Jobs, but you could say the same about Forstall. In both cases, Apple is finding its way through internal changes that ultimately impact how its products look and operate—chiefly, iOS. Consumers are left to feel for the seams.

"It's like getting an entirely new phone," SVP Craig Federighi said during his WWDC presentation.

Mark.

So how do you deal with all that baggage—the polarizing people, the landmark products, the unprecedented business success? By throwing it out the window and reframing the conversation. Turn the seam into a deliberate design decision, so to speak.

Cook may never be able to fully step out of Jobs' shadow, but he has certainly tried over the last year and a half to establish a new style of leadership that discourages comparison. Apple's iOS may never be able to make a clean break from its Forstall-led past, but its designers and engineers certainly tried in its introduction yesterday to signal a new direction that jettisons his favored skeuomorphic touches.

And with the company's stock price down 38 percent over three quarters, Apple's senior executive team will do its best in the next two quarters to show investors that they should measure the company's progress not against the price peak it enjoyed in September 2012, but against the valley it experienced in April 2013.

It's all relative. All that matters is perception.