At Microsoft, NUI goes beyond fun and games

This year's theme for Microsoft Research's TechFest research showcase is natural user interfaces (NUIs).

While the company is showing off some non-NUI-themed research at this year's event, most of the TechFest 2011 projects it is highlighting focus on gestures, touch, computer vision and speech as ways to interact with PCs and other computing devices.

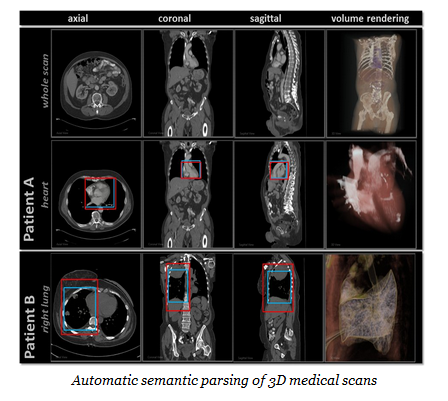

One of these projects, known as InnerEye, is focused on "the automatic analysis of patients' (medical) scans using modern machine learning techniques," like semantic navigation and visualization. The Microsoft researchers on the project are working with the Microsoft Amalga team on InnerEye, which is one of the demos being showcased at TechFest 2011.

Another area of NUI exploration, one that doesn't seem to be on the TechFest 2011 agenda, is interacting without touching. Microsoft researchers are publishing a couple of new papers on this topic this year: one on image-guided interventional radiology (a PDF of which is available now) and another on brain-computer interaction.

Here's a list of the TechFest 2011 projects, NUI and non-NUI both, that the Softies are playing up this year.

Today, March 8, is the semi-public TechFest day, when certain invited guests get to peek at some of the projects that will be on display for Microsoft employees from March 8 to 10.