Liquid-cooled search results

Google has a newly issued patent for dual-sided liquid-cooled motherboards. Are they ramping up density instead of building new data centers?

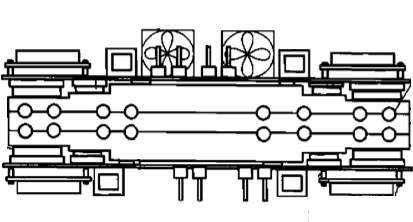

Patent 20080158818

Here's the basic idea:

- The mobo has the hot components on one side and the less hot ones on the other.

- The liquid cooling unit is sandwiched between 2 mobos.

- Disks are on the outside of the sandwich, as is the DRAM.

- Fans are mounted on the outside to air cool the external low thermal load components.

- The hot stuff is next to the cold stuff.

Here's a drawing from the patent:

The patent was filed in December 2006. It responds to issues Google saw in mid-2005: hot CPUs that were expected to get hotter; concern that hotter (80F+) data centers would cause CPU malfunctions; and uncertainty over future cooling strategies, such as fresh air.

The Storage Bits take

Google won't adopt this technology. Why?

- The cost of the cooling module and the associated plumbing and chillers. Huge! Especially in existing data centers. Fresh air is cheaper.

- Intel dropped the smoking-hot NetBurst architecture. They have quad-core chips today that use half the power of a slower, single-core Pentium 4.

- Despite popular folklore, IT equipment, disks included, is not sensitive to ambient temperatures below 90F. Accelerated life testing is done at high temperatures, so vendors have figured it out.

I'll bet the protos made for hellacious gaming!

Two months after the patent was filed, Google released a paper on its disk failure experience. It found that, for the SATA drives Google uses, drive temperatures below 104F did not affect reliability.

Intel started shipping more efficient Core-based CPUs in 2006. Their thermal load was a quarter or less per core. Power efficiency is driving Intel strategy, not clock speed.
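As a back-of-the-envelope check on the per-core figure, here is a minimal sketch. It assumes only the claim made earlier in the post, that a quad-core chip draws half the power of a single-core Pentium 4. The function name and the normalized ratios are illustrative, not measured numbers.

```python
from fractions import Fraction

def per_core_power_ratio(chip_power_ratio, cores):
    """Power per core, relative to a single-core baseline chip."""
    return Fraction(chip_power_ratio) / cores

# Assumption: the quad-core chip draws half the power of the
# single-core Pentium 4 baseline (ratio 1/2, four cores).
ratio = per_core_power_ratio(Fraction(1, 2), 4)
print(ratio)  # 1/8
```

Under that assumption each core draws about one-eighth of the old chip's power, which sits comfortably inside "a quarter or less."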

There might be an economic argument for liquid-cooled, overclocked CPUs if free cold water were available. But Google rarely pushes the technology that way. Forced to pick 2 of faster, better or cheaper, they take faster and cheaper.

Comments welcome, of course. For another take, check out Rich Miller at Data Center Knowledge.