NASA outlines technical roadmaps: A heavy dose of cloud, big data, robotics

NASA's technical bets for the next 20 years will have a heavy dose of cloud supercomputing, cognitive computing, big data as well as robots that'll interact better with humans.

The 2015 technical roadmap from NASA is a sprawling series of documents designed to support the space agency's missions---including a trip to Mars---as well as develop new technologies to make computers that can withstand radiation.

NASA is seeking comments on its technology roadmaps, which are an element of its strategic technology investment plan. The roadmaps are designed to highlight needs for 2015 to 2035. NASA said in its introduction:

NASA's ambitious missions require that we expand the frontiers of our technological capabilities to tackle our difficult problems. For space exploration, creating an environment for humans to live and work in space, navigating and traveling to distant locations, manufacturing products in space, landing on and departing from planetary surfaces, and quickly communicating between the Earth and space systems are some of the formidable technology hurdles to be conquered before the first boot print is left on Mars. For Aeronautics, the challenges are equally daunting as we create high-fidelity, integrated, distributed simulation systems; next generation air traffic control; and next generation vehicles; all working to ensure the Nation and world safely and efficiently accommodate the ever increasing commercial air traffic while reducing noise and carbon output.

As for measuring the returns on these technologies, NASA is using TechPort, a web-based system that compares the technology portfolio and projects to the agency's goals and objectives.

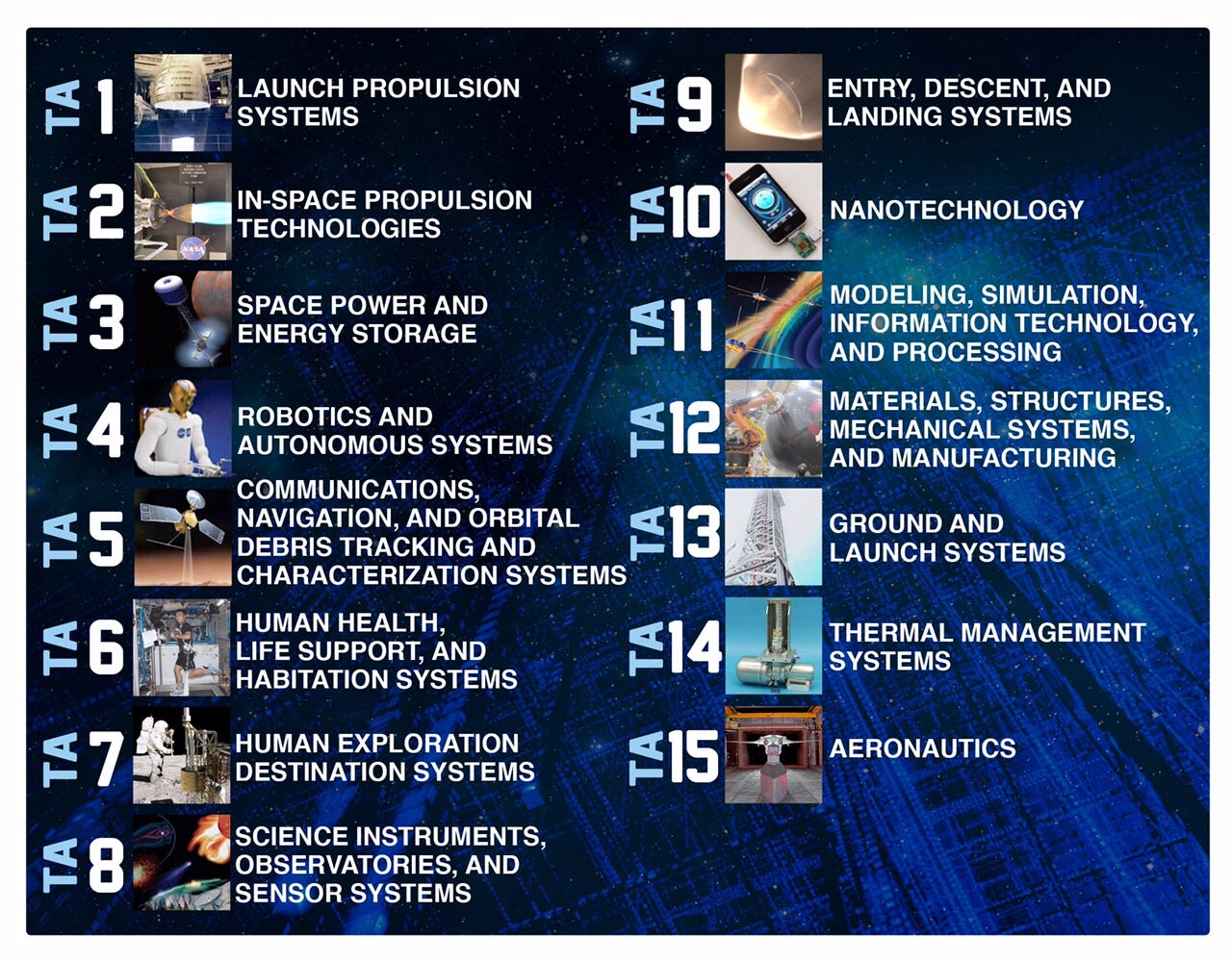

In a graphic, the technology areas in NASA's roadmap go like this:

For our purposes we'll home in on modeling, simulation and information technology as well as robotics and autonomous systems.

On the information technology front, NASA indicated that it will continue to have a hybrid environment. NASA noted that broad use of cloud computing---especially supercomputing---is cost-prohibitive for now. NASA said:

While some NASA applications have been hosted in the cloud, broader NASA use of the cloud requires the technology to achieve transparency of use and information technology (IT) security equivalent to that of moderate-impact NASA systems. Achieving these technology objectives will enable NASA to use the cloud for surge supercomputing when needed to meet mission-critical time constraints by transparently sending computations to public clouds. Experiments in surge supercomputing show that it is currently too labor intensive and expensive to move supercomputer computations to the cloud and that the maximum surge capacity is too small to make a meaningful difference. With technology to automatically package computations for the cloud, many of these challenges can be overcome within five years, enabling NASA computing to surge at least 50% above NASA's supercomputing capacity, with a time penalty of only 10% for those jobs sent to the cloud. If these goals can be met, cloud supercomputing may impact near-term space exploration missions. However, the usefulness of these technologies requires cloud usage to become cost competitive with internal NASA supercomputing.
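To make the surge targets concrete, here is a rough back-of-the-envelope sketch. The 50% surge and 10% time penalty come from NASA's statement; the baseline capacity and job length are made-up illustrative numbers, and reading "time penalty" as a 10% longer run time is an assumption.

```python
# Back-of-the-envelope sketch of NASA's stated surge targets.
# base_capacity and job_hours are hypothetical inputs, not NASA figures.
def surge_estimate(base_capacity, job_hours, surge_fraction=0.5, time_penalty=0.10):
    """Return (peak capacity with cloud surge, run time of a job sent to the cloud)."""
    # Surging 50% above in-house capacity means 1.5x the baseline at peak.
    peak_capacity = base_capacity * (1 + surge_fraction)
    # Assumption: a 10% time penalty means the job takes 10% longer in the cloud.
    cloud_job_hours = job_hours * (1 + time_penalty)
    return peak_capacity, cloud_job_hours


peak, cloud_hours = surge_estimate(base_capacity=100.0, job_hours=20.0)
print(f"Peak capacity: {peak:.0f} units; cloud job time: {cloud_hours:.0f} hours")
```

Under those assumptions, a center with 100 units of in-house capacity could reach 150 units at peak, and a 20-hour job pushed to the cloud would take about 22 hours.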

The supercomputing effort is critical to NASA because it has to simulate missions repeatedly. NASA is hopeful that cognitive computing may fill a void, but not today. NASA noted:

Cognitive computing based on artificial neurons and synapses could be an efficient means of demonstrating the human ability to learn from examples and observation, interact verbally, find complex relationships in data, and adapt to circumstances without reprogramming. This will enable a dramatic acceleration in the advancement of NASA science missions through Big Data analysis and will enable more adaptable deep space robotic probe missions. Cognitive computers must have up to 40 million times more neurons and synapses than current models and use far less power.

On the compute front, here are a few technologies NASA needs:

NASA also noted that it will have to use exascale computing to simulate and integrate the moving parts of a mission.

Most current simulation capabilities are based on heuristics and similitude and are often insufficient to meet NASA's increasingly aggressive mission demands. Because these existing simulations are not grounded in an understanding of the underlying physical processes, they often do not have applicability beyond the test conditions for which their various coefficients and parameters were tuned. Application of most of these simulations results in large but undetermined uncertainties, sub-optimal performance, and increased cost and risk.

The IT roadmap for NASA is a bit overwhelming. Everything from speedier networks to security frameworks and analytics toolsets is needed.

Needless to say, NASA's IT backbone will help meet the agency's robotics needs. In a nutshell, NASA needs more autonomous systems.

In the robotics document, NASA noted:

The goal of robotics and autonomous systems is to extend our reach into space, expand our planetary access capability and our ability to manipulate assets and resources to help us understand planetary bodies using remote and in-situ sensors, prepare them for human arrival, support our crews in their space operations, support the assets they leave behind, and enhance the efficacy of our operations. Advances in robotic sensing and perception, mobility and manipulation, rendezvous and docking, onboard and ground-based autonomous capabilities, and human-systems integration will drive these goals.

To hit those objectives, NASA said better sensors, vision and algorithms will be needed. Robots will also have to navigate extreme terrain better and move below surfaces.

In a graphic, NASA's robotics needs go like this:

The so-called human-system interaction technologies will matter because, in many cases, autonomy will be the only way to coordinate.

NASA noted:

Since coordinating a heterogeneous team of humans and autonomous systems is complex, a key challenge is to develop tools and techniques that allow each agent to have multiple command paths and degrees of autonomy. Another key challenge is to develop advanced user interfaces that enable humans (both ground control and astronauts) and autonomous systems to communicate clearly about their goals, abilities, plans, and achievements; collaborate to solve problems, especially when situations are beyond autonomous capabilities; and interact via multiple modalities (dialogue, gestures), both locally and remotely.