Pluggd makes podcasts and video scannable

The Internet is breeding a new species of human: people who are very adept at scanning, absorbing and cataloging information from the Web at broadband speed. Sitting in front of or holding a screen, whether pursuing a particular information-gathering goal or merely surfing, individuals cruise along at their own scanning speed, processing the data flow in real time and sifting out what is useful in a few seconds or even nanoseconds.

You become accustomed to consuming dozens of pages of mostly text in minutes, scanning the text and firing off searches for pieces that trigger a connection to some embedded memory and attach themselves to your own data clusters. However, when it comes to scanning audio and video content, you are dealing with a black hole. With text you can scan, read ahead and get the point relatively efficiently; text has a great 'compression' algorithm. Audio and video content, by contrast, is mostly opaque to scanning and searching. More people would listen to podcasts and watch Web video if they could figure out where, in a 5-minute, 10-minute, 40-minute, or 5-hour show, their interests intersect with the content stream.

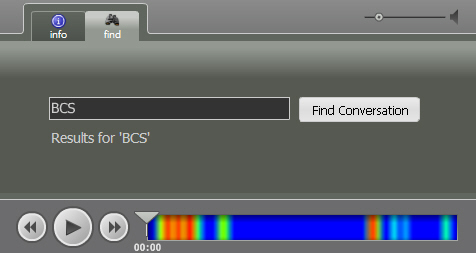

Matt Marshall at VentureBeat blogged about Pluggd's HearHere technology, a possible solution to scanning and searching multimedia content. I first saw it several months ago and was impressed by the demo. Since then the company has improved the technology and cataloged a lot of content, and it is ready to go to market. Pluggd also claims that it has "perfected the user experience" for audio and visual search. I'll settle for just a "dramatically improved" user experience.

Pluggd preprocesses the content and then creates a heat map to indicate where the relevant content can be found in the stream.
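
To make the heat map idea concrete, here is a minimal sketch of how one could be built. It is not Pluggd's algorithm, which isn't described here. It assumes the preprocessing step has already produced a timestamped transcript, and the names `heat_map` and `render`, the `(start_seconds, text)` input format and the one-minute bucket size are all hypothetical choices made for illustration.

```python
from collections import Counter


def heat_map(segments, query, bucket_seconds=60):
    """Count query-term hits per time bucket of a timestamped transcript.

    segments: iterable of (start_seconds, text) pairs. This input format is
    an assumption for illustration, not something Pluggd has documented.
    Returns a list of hit counts, one entry per bucket from the start of
    the show to the last segment.
    """
    terms = {t.lower() for t in query.split()}
    hits = Counter()
    last_bucket = 0
    for start, text in segments:
        bucket = int(start // bucket_seconds)
        last_bucket = max(last_bucket, bucket)
        # Strip basic punctuation so "capital." still matches "capital".
        words = (w.strip(".,!?;:\"'()").lower() for w in text.split())
        hits[bucket] += sum(1 for w in words if w in terms)
    return [hits[b] for b in range(last_bucket + 1)]


def render(heat):
    """Render the counts as a one-line text strip; denser marks mean more hits."""
    shades = " .:*#"
    peak = max(heat, default=0)
    if peak == 0:
        return " " * len(heat)
    top = len(shades) - 1
    # Ceiling division so any non-zero count gets a visible mark.
    return "".join(shades[(h * top + peak - 1) // peak] for h in heat)


if __name__ == "__main__":
    show = [
        (0, "Welcome to the show."),
        (70, "Today we discuss venture capital."),
        (130, "More on venture funding."),
        (200, "Goodbye."),
    ]
    heat = heat_map(show, "venture capital")
    print(heat)
    print("[" + render(heat) + "]")
```

Each character in the rendered strip stands for one minute of the show, so a listener could jump straight to the densest stretch instead of playing the whole stream. A real system would need speech recognition to produce the transcript and a better relevance measure than raw term counts.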