Researchers invade Facebook with socialbots, grab 250GB of data

Four researchers, Yazan Boshmaf, Ildar Muslukhov, Konstantin Beznosov, and Matei Ripeanu, are claiming Facebook's security systems are "not effective enough" at stopping automated identity theft. Their 10-page paper, titled "The Socialbot Network: When Bots Socialise for Fame and Money", describes how they managed to collect private data from thousands of Facebook users by leveraging socialbots. The paper is scheduled to be presented at next month's Annual Computer Security Applications Conference (ACSAC) in Orlando, Florida.

Here's the abstract:

> Online Social Networks (OSNs) have become an integral part of today's Web. Politicians, celebrities, revolutionists, and others use OSNs as a podium to deliver their message to millions of active web users. Unfortunately, in the wrong hands, OSNs can be used to run astroturf campaigns to spread misinformation and propaganda. Such campaigns usually start off by infiltrating a targeted OSN on a large scale. In this paper, we evaluate how vulnerable OSNs are to a large-scale infiltration by socialbots: computer programs that control OSN accounts and mimic real users. We adopt a traditional web-based botnet design and built a Socialbot Network (SbN): a group of adaptive socialbots that are orchestrated in a command-and-control fashion.
>
> We operated such an SbN on Facebook—a 750 million user OSN—for about 8 weeks. We collected data related to users' behavior in response to a large-scale infiltration where socialbots were used to connect to a large number of Facebook users. Our results show that (1) OSNs, such as Facebook, can be infiltrated with a success rate of up to 80%, (2) depending on users' privacy settings, a successful infiltration can result in privacy breaches where even more users' data are exposed when compared to a purely public access, and (3) in practice, OSN security defenses, such as the Facebook Immune System, are not effective enough in detecting or stopping a large-scale infiltration as it occurs.

I wrote about their findings briefly last month in a post about the Facebook Immune System (FIS):

> Researchers at the University of British Columbia in Vancouver, Canada, recently used 102 socialbots to make a combined 3,000 friends in eight weeks. The bots began by sending friend requests to random users, 20 percent of whom accepted, and then to their mutual friends, which resulted in the acceptance rate jumping to almost 60 percent. Such an attack means it doesn't matter if users hide their personal information from public view as long as they let their friends have visibility. The social bots thus managed to extract some 46,500 email addresses and 14,500 physical addresses from users' profiles.

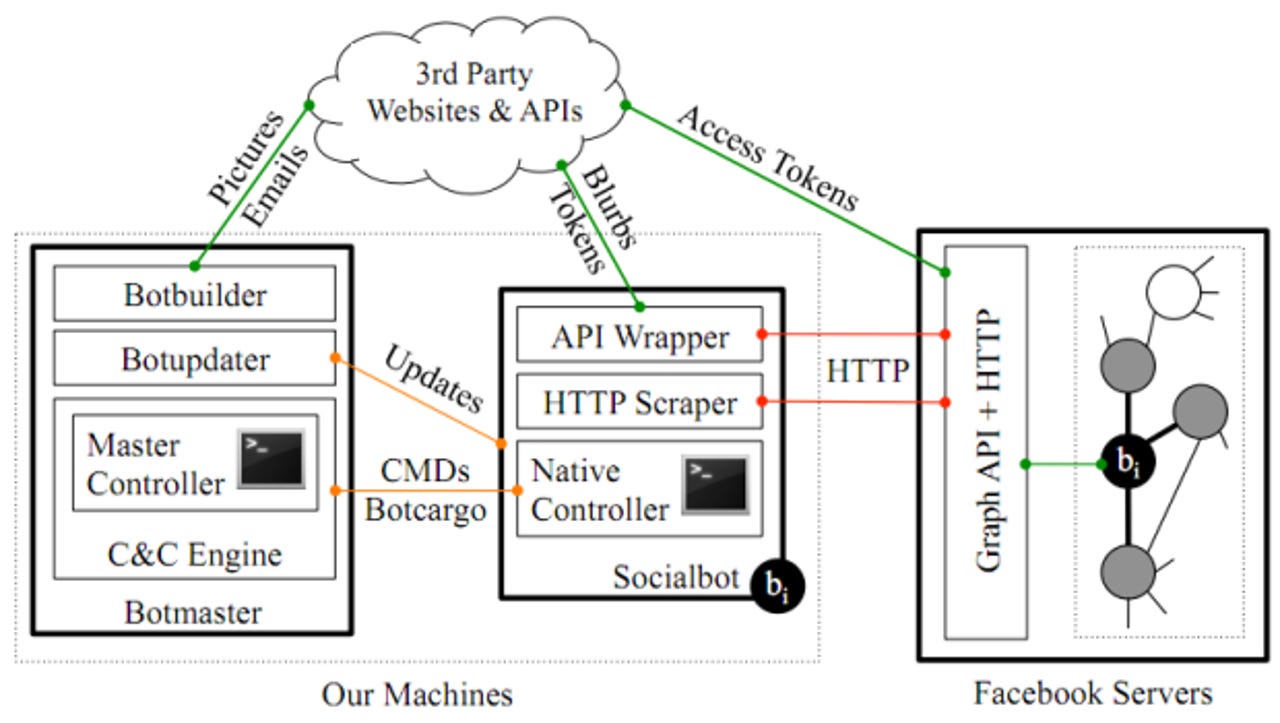

Now that the paper is available, however, many more details have come to light. First, let's define a socialbot: a piece of software that controls a social networking account and tries to pass as a human being by performing basic tasks such as posting messages and sending friend requests.

Although FIS should be able to stop the automated creation of such accounts, the researchers got around it by using online services to break CAPTCHAs, and they gave their bogus accounts attractive profile pictures taken from HotOrNot. They also generated fake Facebook status updates using an API provided by iheartquotes.com.

The researchers warn that socialbots can be used not only to infiltrate friend networks and harvest personal information such as e-mail addresses and phone numbers, but also to spread misinformation and propaganda to influence others. Many socialbots working together form an SbN, which just one person can easily control.

The researchers' SbN consisted of 102 socialbots and a single botmaster. In eight weeks, the SbN sent 8,570 friend requests, 3,055 of which were accepted. Its extended network, the friends of the bots' friends, totaled 1,085,785 profiles, which matters because some users let friends of friends see their profiles.
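The overall acceptance rate implied by these figures is easy to check. The short Python snippet below simply divides the two counts reported above; the function name `acceptance_rate` is a hypothetical helper for illustration, not something from the paper.

```python
def acceptance_rate(accepted: int, sent: int) -> float:
    """Return the fraction of friend requests that were accepted."""
    if sent <= 0:
        raise ValueError("sent must be positive")
    return accepted / sent


# Counts reported for the eight-week SbN run.
rate = acceptance_rate(3_055, 8_570)
print(f"{rate:.1%}")  # prints "35.6%"
```

That works out to roughly 35.6 percent across the whole run, which sits between the 20 percent seen for requests to random users and the nearly 60 percent seen once the bots shared mutual friends with their targets.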

Each time a socialbot successfully friended someone, it would then try to befriend that person's friends as well. As the bots became more embedded within friend networks, the acceptance rate of their friend requests reached 60 percent. The researchers attributed the increase to the "triadic closure principle," which holds that if two users have a friend in common, they are three times more likely to accept a friend request from each other. Unsurprisingly, the more friends a given Facebook user had, the more likely they were to accept a friend request from a socialbot.
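To make the triadic closure idea concrete, here is a minimal Python sketch, not the researchers' code, that ranks a bot's friends of friends by how many mutual friends they share with the bot. The toy graph, the account names, and the function `rank_by_mutual_friends` are all hypothetical; the paper's actual target-selection logic may differ.

```python
from collections import Counter


def rank_by_mutual_friends(graph: dict[str, set[str]], bot: str) -> list[tuple[str, int]]:
    """Rank friends of friends of `bot` by their number of mutual friends with it.

    `graph` maps each account to the set of its friends (undirected).
    The bot itself and accounts it is already friends with are skipped.
    """
    friends = graph.get(bot, set())
    mutual: Counter[str] = Counter()
    for friend in friends:
        for candidate in graph.get(friend, set()):
            if candidate != bot and candidate not in friends:
                mutual[candidate] += 1
    # Most mutual friends first; ties broken alphabetically for a stable order.
    return sorted(mutual.items(), key=lambda item: (-item[1], item[0]))


# Hypothetical toy network: the bot has already befriended alice and bob.
graph = {
    "bot": {"alice", "bob"},
    "alice": {"bot", "carol", "dave"},
    "bob": {"bot", "carol", "erin"},
    "carol": {"alice", "bob"},
    "dave": {"alice"},
    "erin": {"bob"},
}
print(rank_by_mutual_friends(graph, "bot"))
# prints [('carol', 2), ('dave', 1), ('erin', 1)]
```

Here carol shares two mutual friends with the bot, so under the triadic closure principle a request to carol would be expected to have the best chance of being accepted.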

The researchers were able to gather 35 percent of all the personally identifiable information found in their direct networks and 24 percent of that found in their extended networks. All of this profile information was recorded, including 46,500 e-mail addresses and 14,500 home addresses. On average, each socialbot collected 175 new "chunks" of publicly inaccessible user data per day.

The researchers discovered that FIS blocked only 20 percent of the accounts used by the socialbots, and that it did so only because users who grew suspicious flagged those accounts. "In fact, we did not observe any evidence that the FIS detected what was really going on other than relying on users' feedback, which seems to be an essential but potentially dangerous component of the FIS," the researchers wrote.

After the eight-week period was up, the researchers voluntarily dismantled the SbN. They did so not because Facebook's security team had discovered it, but because of the amount of Internet traffic it had generated: approximately 250GB inbound and 3GB outbound.

For its part, Facebook said the socialbots' results were unrealistically successful because the researchers used a trusted university IP address. The company also claimed that its records showed a higher success rate for FIS than the one the researchers reported, although it did not provide a figure.

"We have numerous systems designed to detect fake accounts and prevent scraping of information," a Facebook spokesperson said in a statement. "We are constantly updating these systems to improve their effectiveness and address new kinds of attacks. We use credible research as part of that process. We have serious concerns about the methodology of the research by the University of British Columbia and we will be putting these concerns to them. In addition, as always, we encourage people to only connect with people they actually know and report any suspicious behavior they observe on the site."
