Trust Backblaze's drive reliability data?

Let me begin by noting that I've never accepted money or services from Backblaze. I admire what they're doing, how they've bootstrapped the company and their willingness to ignore storage industry taboos.

So TweakTown's (TT) critique of Backblaze (BB) caught my attention. I worked for a drive manufacturer and have worked with multiple qual and support teams, so I hoped TT would shed some light on this arcane topic. They didn't.

The first fail

The post title is "Dispelling Backblaze's HDD Reliability Myth - The Real Story Covered." But it isn't a myth: it's real-life data.

Then TT questions BB's motives. Of course a small company wants publicity.

That they do it by providing statistically significant information about their hard drive experience – something millions of consumers are hungering for – strikes me as a fair exchange. Why don't we stick to what they said rather than throwing mud?

Sourcing

Backblaze is criticized for buying disk drives the way you and I do. They take advantage of pricing oddities and often purchase USB hard drives and remove the cases.

This does subject drives to extra handling. But since most BB drives appear to be bought this way, they all get the same handling, which should only affect the more delicate drives.

Enclosures and drive age

Backblaze's enclosures are also criticized because they put 45 drives in a 4U box, with mountings improved over three generations. TweakTown says:

. . . we can see that the drives in use the longest suffer the highest failure rates. One likely reason is simple: these older drives are in revision 1.0 of their storage enclosures, which suffer from significant vibration issues that merited a redesign.

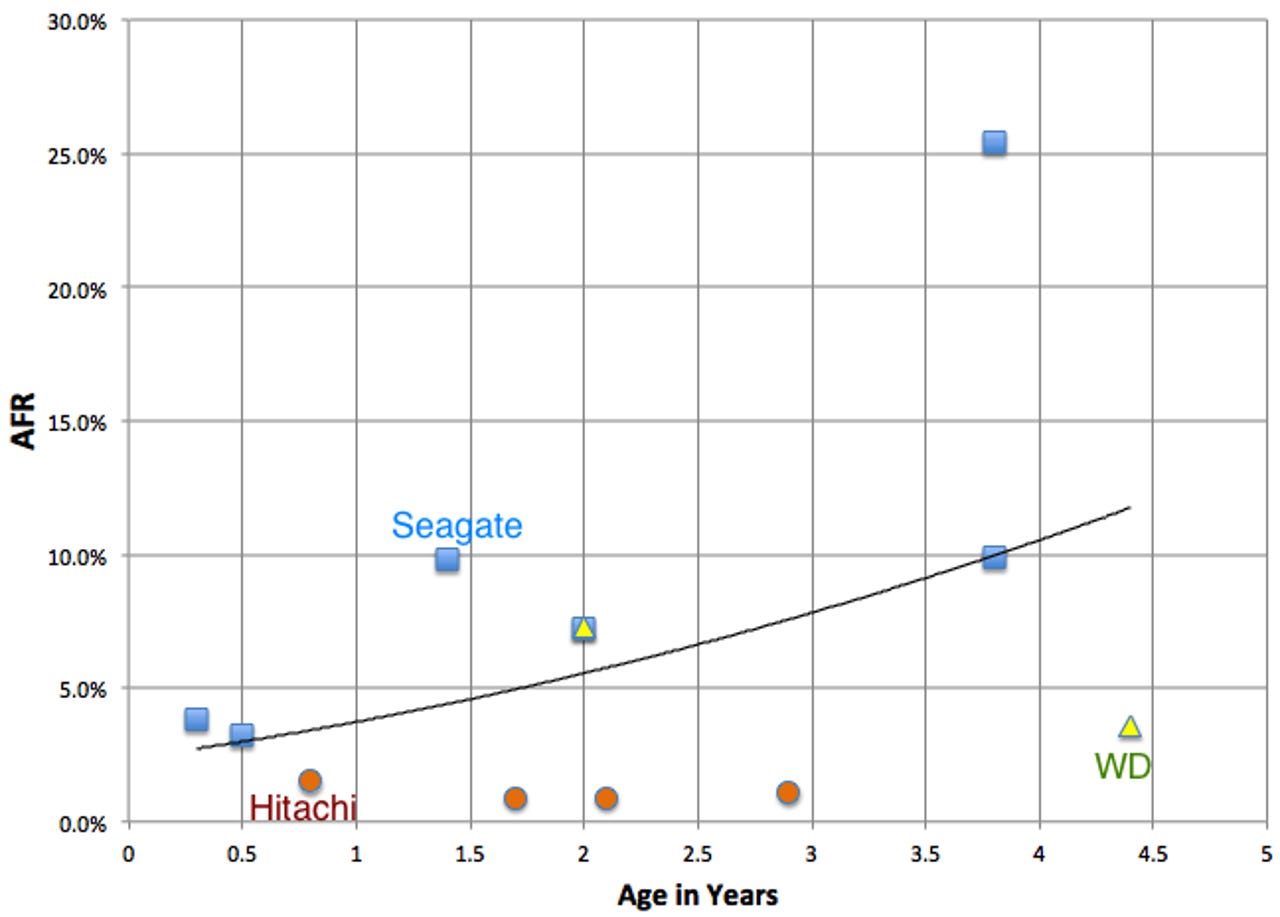

But here's a graph of AFR and age, by manufacturer, of BB's data (leaving out the Seagate drive with the 120% AFR to keep the graph readable). I'm not seeing the effect TweakTown is.
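An AFR above 100% looks impossible until you remember that the rate is annualized: failures are divided by drive-years of service, not by the number of drives. Here is a minimal sketch of that arithmetic, assuming the common failures-per-drive-year definition. The function name and the sample numbers are hypothetical illustrations, not Backblaze's code or data.

```python
def annualized_failure_rate(failures: int, drive_days: float) -> float:
    """Return AFR as a percentage: failures per drive-year of service.

    Assumes AFR = failures / (drive_days / 365) * 100.
    """
    if drive_days <= 0:
        raise ValueError("drive_days must be positive")
    drive_years = drive_days / 365.0
    return 100.0 * failures / drive_years


# Hypothetical fleet: 100 drives, each in service a full year, 3 failures.
steady = annualized_failure_rate(failures=3, drive_days=100 * 365)

# Hypothetical small batch: 10 drives, each in service only 73 days, 3 failures.
# That is just 2 drive-years of exposure, so the annualized rate tops 100%.
short_lived = annualized_failure_rate(failures=3, drive_days=10 * 73)

print(f"steady fleet AFR: {steady:.1f}%")
print(f"short-lived batch AFR: {short_lived:.1f}%")
```

A small population with a short service history can therefore post a dramatic AFR from a handful of failures, which is one reason to read any single outlier with care.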

Vibration is an issue with drives, especially when seek rhythms synchronize, creating harmonics. However, with large block writes as the main BB workload, the vast majority of seeks will be track-to-track, with very low mechanical impact.

BB acknowledges that their workload is unique and not suited to every drive, and they leave the results for the unsuited drives out of the report. But wouldn't you like to know if one vendor is extra sensitive to vibration?

Temperature

TweakTown further states:

Backblaze claims that drive temperature doesn't affect drive life. That is counter to the observations of many others, including drive manufacturers.

In fact, the large-scale Google and CMU studies (links below) found that Backblaze is correct: temperatures have to be much higher than spec before drive life is hurt.

I attribute this to accelerated life testing: operation at high temperature. Engineers aren't stupid: drives are designed to work at higher than spec temperatures to give marketing the numbers they want.

Workloads

Later, TweakTown asserts:

Backblaze procures the cheapest possible HDD on the market at all times, regardless of its workload rating, and then subjects them to a harsh environment that is virtually guaranteed to destroy the drive. This leads to higher failure rates than observed in the wild.

The aggregate AFRs BB reported are in the ballpark of the other large-scale studies. And the Google and Carnegie Mellon studies found almost no AFR difference between "enterprise" and "consumer" drives in 24/7 server use. At worst, BB can be accused of running accelerated life testing and finding that some drives do better than others.

TT also asserts that "Random data requires more movement, and thus creates more wear and tear on delicate HDD heads." Heads ride on air bearings over the platter, so it is more accurate to say that the head actuator assembly might sustain more wear. But again, there is no evidence from large-scale studies that consumer drives can't handle frequent seeks.

Summary

TweakTown concludes with:

The data from Backblaze should not influence a purchasing decision by any consumer, regardless of what type of drive they are purchasing. . . . Even for the winners, the results aren't good; the failure rates are exponentially higher than those observed in the real-world.

Except, of course, that while some of the rates are way higher than have been reported in published studies, most of them aren't. Further, BB is careful to give drive model numbers, and it may very well be that today, for example, Seagate's high-capacity drives are competitive.

The Storage Bits take

I understand a test engineer's desire for controlled environments and workloads for testing. But that isn't the real world: some drives are busier; some have higher ambient temps; some come from a bad run or get banged around in shipment.

But rather than bash Backblaze for giving consumers the benefit of their experience, TweakTown should be asking, as I do, for other major drive users to come clean. I'm looking at you, Google, Amazon and Microsoft.

Google says they want to organize the world's information, but when it comes to something they have unique expertise in, they clam up. Amazon sells disks, but do you see reviews from their maintenance team?

I'd much rather have the results of millions of drive years over many more models. But we don't because the folks who know won't talk.

So yes, as a consumer, I would look at Backblaze's results. If I were upgrading my arrays tomorrow, I'd make an extra effort to buy Hitachi per the Backblaze experience. What they found squares with what I've heard from insiders over the last 10 years.

TweakTown repeatedly objected to the media attention Backblaze's post got. If other players had already spoken, it wouldn't be an issue, would it? I'll take Backblaze's info over nothing any day.

Comments welcome, of course. How about it, readers: would you prefer no info to the limited data Backblaze released?