Next-generation networks: An overview

Examine almost any emerging trend in the way people work, IT departments deliver applications or companies collect and process business information, and it'll translate into increasing pressure on today's wired and wireless networks at all scales of operation — data centre, LAN, campus, WAN.

That's because the new enterprise IT landscape of cloud services, mobility and BYOD, social media usage and big data analytics creates very different types of network traffic to the traditional mix of in-house client-server enterprise workloads. Not only is more bandwidth required to accommodate the richer workloads involved, but lower latency — particularly over wide-area networks — is also needed to keep response times for key cloud-based applications and services down to usable levels. As 'cloud-era' workloads become more complex and more mission-critical, IT managers will need increasingly sophisticated tools to manage network traffic and deliver an acceptable quality of service to the business. CIOs will also have to ensure that their security measures keep pace with these developing next-generation networks.

Are today's networks ready for 'next-generation' traffic?

If you're seeking an up-to-date 'state of the network' report, the recently released 2013 Cisco Global IT Impact Survey is a good place to start. This survey, which canvassed 1,321 IT decision makers in 13 countries (Australia, Brazil, Canada, China, France, Germany, India, Japan, Mexico, Russia, Spain, the UK and the US), seeks to assess the impact of IT departments on business-shaping decisions, and measure the relevance of the network to the business.

A key headline finding from Cisco's 2013 survey is that 78 percent of respondents feel that their networks are more critical to delivering applications than they were a year ago. That should come as no surprise, given the increasing prevalence of SaaS, mobile applications, social media and 'big data' analysis in the enterprise. However, the survey also reveals significant networking issues, with 41 percent of the IT professionals admitting that they are not ready to support BYOD (Bring Your Own Device) and 38 percent feeling unprepared for cloud deployments.

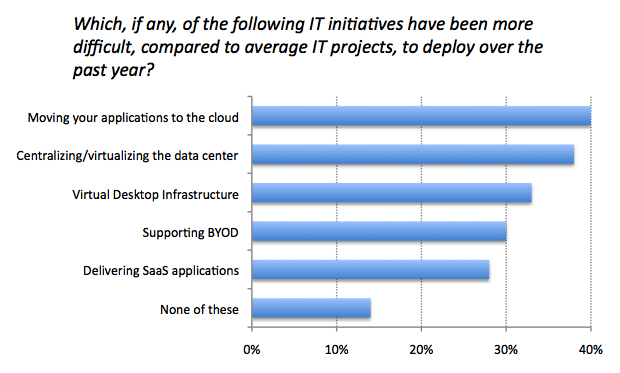

When asked to rank the most difficult IT initiatives to deploy over the past year, IT professionals point the finger at the following: moving applications to the cloud (40%); data center centralisation/virtualisation (38%); Virtual Desktop Infrastructure, or VDI (33%); BYOD support (30%); and SaaS deployment (28%).

Delving into the regional variation, the biggest perceived difficulty in the US is BYOD support, while in the UK it's VDI: IT professionals in all other regions list either cloud migration or data center consolidation as the most difficult IT initiative. The most confidence, IT-wise, is shown by the UK, Germany, Australia and France: in each of these countries, more than 20 percent of IT professionals rate none of the highlighted initiatives as any more difficult than average.

IoT and SDN awareness

When it comes to cutting-edge networking topics like the Internet of Things (IoT) and Software-Defined Networking (SDN), there's clearly some way to go before mainstream familiarity — let alone business impact — is achieved.

Although half of Cisco's respondents claim to be 'very familiar' with the Internet of Things, 42 percent are only 'somewhat familiar' while 9 percent are 'not familiar at all'. The most IoT-aware countries are Brazil, China, Mexico, Spain, the US and Germany; curiously, a quarter of the French IT professionals surveyed claim no familiarity at all with the IoT. Among those who are au fait with it, nearly half (48 percent) believe the IoT will open up new business possibilities, with particularly strong support for this view in China and Mexico.

Software-Defined Networking (the decoupling of the network's data layer from the control layer) is in a similarly transitional phase: although almost half of respondents are currently evaluating SDN solutions, 34 percent are not, and a surprisingly large proportion (19 percent) know nothing about it. China shows a particularly strong affinity for SDN, along with the US, Brazil and Mexico. Among the current evaluators of SDN solutions, nearly three-quarters (71 percent) plan to implement them within the next 12 months. Clearly, SDN's time is imminent.

The main drivers for implementing SDN solutions identified in Cisco's survey are operational cost savings and fast infrastructure scaling via automated provisioning, followed by traffic engineering opportunities, and custom forwarding and other custom applications.

Let's look in more detail at some of the components of the next-generation network.

Faster Ethernet

Increasing amounts of ever-richer network traffic require more bandwidth at the data link layer. Ethernet is the most prevalent data link protocol, and its top speed has grown exponentially, Moore's Law fashion, from 10Mbps in 1983 to the current limit of 100Gbps.
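As a rough check on the 'Moore's Law fashion' comparison, the growth from 10Mbps to 100Gbps can be turned into an average doubling time. This is a back-of-envelope sketch: the 1983 start comes from the text, while the 2010 end year (when the 100GbE standard, IEEE 802.3ba, was ratified) is an assumption added here, and `doubling_time_years` is a hypothetical helper name.

```python
import math


def doubling_time_years(start_rate_bps, end_rate_bps, start_year, end_year):
    """Average time for the rate to double, assuming steady exponential growth."""
    doublings = math.log2(end_rate_bps / start_rate_bps)
    return (end_year - start_year) / doublings


# 10Mbps in 1983 (from the text) to 100Gbps; the 2010 end year is an assumption
years = doubling_time_years(10e6, 100e9, 1983, 2010)
print(f"{years:.1f} years per doubling")  # prints "2.0 years per doubling"
```

On those assumptions, Ethernet's top speed has doubled roughly every two years, which is in the same range as the doubling period usually quoted for Moore's Law.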

Today, desktop client devices and servers generally connect to enterprise networks over Gigabit Ethernet (1GbE), with 10GbE links from the network edge to the switching core, often via an aggregation layer. Over the next few years, more servers will connect to the network over 10GbE, with 40GbE and 100GbE taking over the edge-to-aggregation and aggregation-to-core links. Cabling is a complicating factor: unlike 10GbE, which can use copper (Category 6a) cables and standard RJ-45 connectors, 40GbE and 100GbE will generally need to use optical multi-mode fibre (MMF) with MPO connectors for links up to 100m (MMF/OM3) or 150m (MMF/OM4). The fastest 100GbE technology will also increasingly be used as inter-LAN connections on campus networks and by carriers and service providers for wide-area network (WAN) links.

Demand for network bandwidth can only continue to increase, which is why the IEEE 802.3 Ethernet Working Group has already begun the process of defining the next step — 400 Gigabit Ethernet, which is expected to be ratified in 2017. After that, we'll be into the realm of Terabit Ethernet.

In August last year, telecoms and networking researchers Dell'Oro Group forecast that the 40GbE and 100GbE switch market would be worth $3 billion by 2016. Much of this growth, according to Dell'Oro, will be driven by the adoption of flexible cloud technology in data centres. High-end 40Gbps and 100Gbps Ethernet switches are now available from leading vendors including Cisco, Arista, Juniper Networks, Extreme Networks, Brocade and Huawei.

These high-end proprietary network switches are expensive, and the software that provides their intelligence is often neither customisable nor replaceable. This is the impetus behind the recent move by the Facebook-backed Open Compute Project (OCP) to develop a specification and a reference box for an open, OS-agnostic top-of-rack switch. The first serious work on this initiative took place in May at the first OCP Engineering Summit at MIT.

The OCP's initiative echoes the recent trend for mega-enterprises such as Google, Facebook, Amazon and Microsoft to buy inexpensive 'white box' network switches from Asian manufacturers and deploy their own control software — all indicative of an industry-wide need for more flexible and cost-efficient network infrastructure.

The extended enterprise

Building a next-generation enterprise network involves a lot more than installing faster wired Ethernet connections between clients, servers, routers and switches. For a start, the network needs to extend well beyond the confines of the in-house data centre and local area network to include the campus, branch offices, employees on the road and at home, and key partners, customers and/or clients. Network access needs to be available on a range of client (often 'bring your own') devices — PC, notebook, tablet, smartphone — over Wi-Fi and cellular links as well as wired connections. And with network traffic routinely including voice and video as well as data, application-specific traffic management becomes an increasingly important issue — particularly over bandwidth-limited wide-area networks — in order to ensure acceptable performance.

Overlaid on all these demands is the need for more efficient utilisation of network resources, and for those resources to be rapidly deployable and easily scalable. These are, of course, the essential characteristics of highly virtualised 'cloud' computing, which will be implemented as private (in-house), public (outsourced) or hybrid clouds.

All this, when properly executed, should result in consolidated, more power- and space-efficient data centres and flexible networks with 'anytime, anywhere' access for mobile-device-toting employees. Needless to say, next-generation networks also pose serious management and security challenges, particularly for IT professionals used to overseeing traditional (largely wired) enterprise networks and a limited roster of locked-down (mostly desktop) client devices.

Wired/wireless unification

A key concern in enterprise networking in recent years has been the unified management of wired and wireless networks — the latter often having emerged as a piecemeal addition to the wired LAN. With the focus moving inexorably from desktop to mobile devices, high-speed and high-quality wireless network access is becoming increasingly important, and ad hoc solutions are no longer acceptable.

Wi-Fi networks have evolved rapidly in recent years, with the current 2009-ratified standard being 802.11n, which operates in the 2.4GHz and 5GHz bands, supports MIMO and delivers data rates of up to 600Mbps per radio. The next standard, currently in draft (5.0) and expected to be ratified in 2014, is 802.11ac, which is restricted to the less crowded 5GHz band. It offers a number of improvements over 802.11n (including wider channel widths, more MIMO spatial streams, downlink multi-user MIMO and enhanced modulation) to deliver a theoretical maximum data rate of 6.9Gbps per radio (8 spatial streams with 160MHz channels). Draft-802.11ac-compliant kit, which has been available since 2012, currently delivers 1.3Gbps per radio (3 spatial streams with 80MHz channels).
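The headline data rates quoted above can be reproduced from basic OFDM parameters. The sketch below is a back-of-envelope check rather than anything taken from the standards text verbatim: the data-subcarrier counts, modulation orders, 5/6 coding rate, the 3.6µs symbol time (short guard interval) and the four-stream, 40MHz configuration for 802.11n are assumptions drawn from the 802.11n/ac specifications, not from this article, and `phy_rate_mbps` is a hypothetical helper name.

```python
def phy_rate_mbps(data_subcarriers, bits_per_symbol, coding_rate,
                  spatial_streams, symbol_time_us=3.6):
    """Peak PHY data rate in Mbps for an OFDM Wi-Fi link.

    symbol_time_us=3.6 assumes the short (400ns) guard interval.
    """
    bits_per_ofdm_symbol = (data_subcarriers * bits_per_symbol
                            * coding_rate * spatial_streams)
    return bits_per_ofdm_symbol / symbol_time_us  # bits per microsecond == Mbps


# 802.11n maximum: 40MHz channel (108 data subcarriers), 64-QAM (6 bits), 4 streams
n_max = phy_rate_mbps(108, 6, 5 / 6, 4)
# Draft-802.11ac kit: 80MHz (234 data subcarriers), 256-QAM (8 bits), 3 streams
ac_today = phy_rate_mbps(234, 8, 5 / 6, 3)
# 802.11ac maximum: 160MHz (468 data subcarriers), 256-QAM (8 bits), 8 streams
ac_max = phy_rate_mbps(468, 8, 5 / 6, 8)

print(round(n_max), round(ac_today), round(ac_max))  # prints "600 1300 6933"
```

These are raw physical-layer rates; real-world throughput is considerably lower once protocol overhead and contention are taken into account.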

As mentioned earlier, 41 percent of the IT professionals canvassed in Cisco's 2013 Global IT Impact Survey admitted that they were not ready to support BYOD. Although the survey didn't pursue the matter, a major cause of this unreadiness is likely to be the absence of a 'single pane of glass' console for managing both wired and wireless networks. This is changing, however — witness the fact that analyst firms are beginning to combine what used to be separate reports on wired and wireless infrastructure vendors. Gartner's latest Magic Quadrant identifies Cisco, HP and Aruba as the leaders in wired/wireless integration.

In the future, software-defined networking may provide an answer to wired/wireless unification, since the management of 'dumb' wireless access points via a separate wireless LAN controller has been common practice for years. Combining wired and wireless LAN control in a single SDN appliance could deliver the seamless integration and granular control that network managers are going to need in the mobile/BYOD-dominated enterprise.

For more on next-generation wireless technologies see the separate article by Rupert Goodwins.

Software-Defined Networking

The hottest topic in networking right now is SDN, or Software-Defined Networking, which provides an abstraction layer for the network in much the same way that hypervisors and virtual machines do for servers and desktop PCs. SDN is the trend of the moment because, after servers and storage, network virtualisation represents the missing link in the ability to define, provision and manage networks, and the applications and services that run on them, in a flexible and scalable manner.

SDN is a relatively new field, and the IT community is still getting to grips with it. In Cisco's survey mentioned earlier, 19 percent of the IT professionals canvassed were unaware of SDN, while the main drivers for the 47 percent who were evaluating it were operational cost savings and fast infrastructure scalability via automated provisioning. Another recent survey from Swedish company Tail-f Systems, focusing specifically on SDN, reports broadly similar top-level findings: while 11 percent of the 237 enterprises surveyed are not discussing SDN at all, the main drivers among the 89 percent who are considering it are the increased number and importance of apps and services, the move to automating network management and the faster pace of new app/service deployment.

Perhaps the most telling finding in Tail-f's survey is the fact that although 92 percent of respondents felt they had a "pretty good" or "complete" knowledge of SDN, only 51 percent actually gave the correct definition in a four-way multiple choice question. Respondents proved better at aligning their reported and actual knowledge of the Open Networking Foundation's OpenFlow standard, with 75 percent claiming pretty good or complete understanding and 70 percent choosing the correct definition.

OpenFlow is an open standard that defines the communications interface between the control and infrastructure layers of an SDN architecture. It's curated by the Open Networking Foundation (ONF), whose membership includes most of the leading network infrastructure vendors.

An OpenFlow-compliant network device such as a (physical or virtual) switch or router has an open interface to its forwarding plane, allowing control to move from the device to centralised control software (see diagram above). OpenFlow uses programmable Flow Tables to determine how to handle incoming packets — perform a predetermined action or forward to the controller. This provides a very granular level of control over different kinds of network traffic, based on multiple parameters.
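The flow-table idea can be illustrated with a toy model. This is a minimal sketch, not the OpenFlow wire protocol or any vendor's API: the `FlowEntry` and `FlowTable` classes, the field names (`ip_src`, `ip_dst`, `tcp_dst`) and the action strings are all hypothetical, and the choice to send unmatched packets to the controller simply follows the description above.

```python
from dataclasses import dataclass, field


@dataclass
class FlowEntry:
    match: dict        # header fields that must all equal the packet's values
    action: str        # illustrative action string, e.g. "output:2" or "drop"
    priority: int = 0  # higher number = checked first


@dataclass
class FlowTable:
    entries: list = field(default_factory=list)

    def add(self, entry):
        self.entries.append(entry)
        # Keep the highest-priority entries first so the first hit wins
        self.entries.sort(key=lambda e: e.priority, reverse=True)

    def handle(self, packet):
        for entry in self.entries:
            if all(packet.get(k) == v for k, v in entry.match.items()):
                return entry.action
        return "send_to_controller"  # table miss: let the controller decide


table = FlowTable()
table.add(FlowEntry({"ip_dst": "10.0.0.5", "tcp_dst": 80}, "output:2", priority=10))
table.add(FlowEntry({"ip_src": "192.168.1.66"}, "drop", priority=20))

# Web traffic to 10.0.0.5 matches the first rule added
print(table.handle({"ip_src": "10.0.0.9", "ip_dst": "10.0.0.5", "tcp_dst": 80}))  # output:2
# Nothing matches, so the packet is handed to the controller
print(table.handle({"ip_src": "10.0.0.9", "ip_dst": "10.0.0.7", "tcp_dst": 22}))  # send_to_controller
```

Matching on several header fields at once, with priorities to resolve overlaps, is what gives the controller its fine-grained control over different kinds of traffic.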

The ONF declared OpenFlow 1.3.x a stable protocol in May 2012, delivering incremental upgrades over the past year. Although OpenFlow has now received wide support from infrastructure vendors, for whom it is typically no more than a simple firmware upgrade, production deployments remain thin on the ground. The ONF, for example, has just two case studies on its website: an NEC project to revamp a Japanese hospital's complex and difficult-to-manage network infrastructure using its OpenFlow-based ProgrammableFlow technology; and, on a much larger scale, Google's widely-reported migration of its internal datacenter-to-datacenter WAN to an SDN infrastructure.

Google's 'G-scale' WAN is the search giant's largest production network, and it was built using 'white box' network switches and open-source OpenFlow-compliant routing stacks. According to the company, the benefits of SDN include: a unified view of the network fabric; high network resource utilisation (see graph above); faster failure handling; faster time to deployment; 'hitless' upgrades (without packet loss or capacity degradation); a high-fidelity environment for testing and running 'what-if' scenarios; and elastic compute thanks to the use of external servers for running control and management software.

OpenFlow is not the only enabling technology for software-defined networking, although it's likely to be widely supported. The closely related virtual network overlay is also talked about, particularly by network infrastructure vendors, as a complementary, and in some cases alternative, technology to OpenFlow-mediated SDN. Virtual network overlays partition the physical network into multiple logical networks, and a control plane protocol (which could be OpenFlow, or alternatives that operate along similar lines to MPLS VPNs) installs the flows that direct packets to their destinations. At the moment, network vendors use a mixture of control plane protocols for overlay networks, including OpenFlow, XMPP and OVS-DB.

Another widely supported initiative is the recently formed OpenDaylight Project, which is overseen by the Linux Foundation. OpenDaylight seeks to create an open-source SDN framework embracing the network application layer, the control layer and the (physical and virtual) infrastructure layer. The control layer uses the OSGi framework and REST API for the 'northbound' interface to the application layer, and supports multiple protocols — including OpenFlow — for the southbound interface to the infrastructure layer. Like the ONF, the OpenDaylight Project has an impressive roster of leading-vendor members, but it's too early to determine how effectively these players — most of them fierce competitors — will work together.

Security in next-generation networks

In traditional enterprise networks, where largely desk-bound users connect to IT resources within the company firewall in a client/server architecture, security policies are relatively straightforward to implement. Next-generation networks, by contrast, need to accommodate mobile users logging on, from a diverse array of devices and locations, to highly virtualised IT resources that may reside in the company's or a cloud service provider's data centre — or some combination of both. This more complex attack surface makes for a major security headache.

Little wonder, then, that security concerns rank highly when IT professionals are considering implementing cloud services and mobility on their networks.

Clearly, next-generation networks require integrated next-generation security systems rather than increasingly unmanageable add-on solutions. In the absence of such security systems, network managers will continue to struggle to move applications to the cloud, support BYOD and implement other 'modern' network services.

A key weapon at the enterprise network manager's disposal will be the so-called 'Next-Generation Firewall', or NGFW, that implements advanced features such as deep packet inspection, intrusion detection, application identification and fine-grained policy control. According to Gartner, firewall/VPNs and Intrusion Prevention Systems (IPS) are converging into NGFWs at the enterprise level, while UTM (Unified Threat Management) appliances hold sway in the SME market. Gartner's recent Magic Quadrant for Enterprise Network Firewalls identifies Check Point Software Technologies and Palo Alto Networks as the leaders in this emerging market.

Security is another area that could benefit from the advent of software-defined networking. Read our case study to discover how an Australian school upgraded its HP switches with OpenFlow firmware and created a rule on the SDN controller to catch DNS traffic and pass it to a server running HP's TippingPoint IPS, which then analyses the DNS requests for malicious activity.

WAN optimisation

Next-generation enterprise networks need to accommodate geographically dispersed locations such as branch offices, as well as homeworkers and mobile users, with employees increasingly accessing SaaS and other cloud services, running virtual desktops and transmitting other bandwidth-heavy traffic such as voice and video.

All this puts pressure on wide-area network (WAN) links, which is why WAN optimisation, employing a variety of techniques to squeeze the maximum performance and reliability from limited-bandwidth connections, will remain an important field for the foreseeable future. The longer you can postpone a connection speed upgrade, and the more you can consolidate network service provision, the more cost-effective your IT operation.

WAN optimisation controllers — either physical or virtual appliances — are the current tools for this job. Gartner's 2013 Magic Quadrant identifies a single clear leader in this market: Riverbed Technology, with Cisco (inevitably) as the main challenger. Open-source WAN optimisation tools such as TrafficSqueezer and WAN Proxy are also available for those with the skills to implement them.

As with security, WAN optimisation can be built into a software-defined network — witness the traffic engineering component of Google's SDN described above. Here, data centre-to-data centre WAN traffic is divided by the SDN controller into high-priority and low-priority classes, the former getting the bandwidth required to deliver high quality of service and the latter having to put up with various transmission impairments.
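The two-class split described above can be sketched as a strict-priority allocator. This is an illustrative simplification, not Google's actual traffic engineering algorithm: the function name, the flow names and the bandwidth figures are all hypothetical, and strict priority is just one simple way to favour the high-priority class.

```python
def allocate_bandwidth(link_capacity_mbps, demands):
    """Strict-priority allocation: serve the most important flows first.

    demands: list of (name, priority, demand_mbps) tuples, where a lower
    priority number means a more important flow.
    Returns a dict mapping each flow name to its allocated bandwidth in Mbps.
    """
    remaining = link_capacity_mbps
    allocation = {}
    # sorted() is stable, so flows of equal priority keep their input order
    for name, _priority, demand in sorted(demands, key=lambda d: d[1]):
        granted = min(demand, remaining)
        allocation[name] = granted
        remaining -= granted
    return allocation


# Hypothetical demands on a 1,000Mbps inter-data-centre link
demands = [
    ("bulk-backup", 1, 800),   # low priority
    ("user-facing", 0, 400),   # high priority
    ("index-copy", 1, 300),    # low priority
]
print(allocate_bandwidth(1000, demands))
# prints {'user-facing': 400, 'bulk-backup': 600, 'index-copy': 0}
```

The high-priority flow gets everything it asks for, while the low-priority flows share whatever is left and may be throttled or starved when the link is busy.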