What race is your AI? Obama discussion adds politics to tech

Joi Ito, Director of the prestigious MIT Media Lab, told President Obama he is concerned that core Artificial Intelligence technology is being built by a "mostly white" and "predominately male gang of kids."

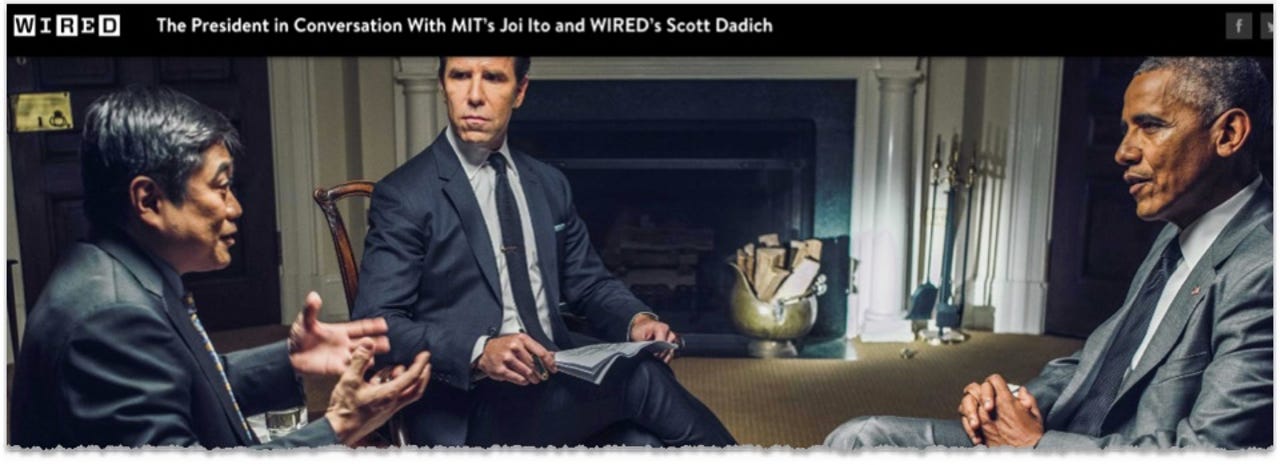

Ito's remarks were made at a recent meeting with Barack Obama and Scott Dadich, Editor-in-Chief of Wired Magazine. They were published by Wired under the title "Barack Obama on Artificial Intelligence, Autonomous Cars, and the Future of Humanity."

Ito said:

This may upset some of my students at MIT, but one of my concerns is that it's been a predominately male gang of kids, mostly white, who are building the core computer science around AI.

They don't know how to communicate with people outside of their group. "They're more comfortable talking to computers than to human beings."

They think AI is an answer to "all the messy stuff like politics and society. They think machines will just figure it all out for us... Everybody needs to understand how AI behaves is important... because the question is, how do we build societal values into AI?"

Foremski's Take: Raising the issue of race, privilege and the demands of civil society in how artificial intelligence (AI) systems are designed and deployed is a political and cultural powder keg.

President Obama said nothing and quickly switched the conversation to self-driving cars, and Scott Dadich did not pursue the subject.

But these issues of ethnicity, gender, income and much more will undoubtedly affect the future success of AI systems.

Your AI is not my AI...

As artificial intelligence systems touch more of us throughout the day, and in increasingly important areas such as healthcare, how do we know the system understands us?

If a healthcare AI system is designed by white males, will it skew towards protecting the health of that group?

If the government uses AI systems for future services, will it be fair to everyone?

"Everybody needs to understand how AI behaves," says Ito. The subtext: if we don't understand how AI behaves, we will suspect it is working against us.

Profiling bans...

AI systems learn from studying human behavior, but there are large differences between people. For example: AI systems serving a Hispanic community will need to be trained on that population. That will require ethnic profiling.

Similarly, the best AI systems will understand women's needs and will learn from profiling that population.

But will understanding how AI behaves allow society to accept profiling by AI systems when it's not OK elsewhere?

Is there a backlash building, as suggested by Ito, against the notion that mostly white male technologists can design AI systems that are fair to all?

Will AI systems for African-Americans have to be developed by African-Americans in order to be accepted? Women for women, etc?

These incredibly sticky cultural issues will delay the use of AI, and that might be a good thing. My concern is that our heady rush into AI will scale potentially bad decisions and make them worse.

We have enough trouble finding and deploying human intelligence, so why will artificial intelligence be any easier?

Dumb plus dumber does not add up to a genius. IQ doesn't work that way.