AMD 690G versus Intel G965 PC shootout

The integrated graphics market is one of the least glamorous segments of desktop computing, but it is probably one of the most important, since integrated graphics dominate 90% of the PC market. The chipset itself is a valuable prize to AMD and Intel, and it also determines which CPU goes into the system. This article compares AMD's latest 690G integrated graphics chipset to Intel's G965 integrated graphics chipset.

The AMD 690G launched back in March 2007. The Intel G965 is more than a year old, and its successor, the G35, is due later this quarter. That raises the question of why bother with this comparison when the Intel G35 is due in a month or two. I'm releasing this now because there is a lot of contention over which CPU is more energy efficient, which chipset does better at gaming, and which chipset is better at playing back video. The results of this test will also give us a general idea of what to expect from Intel's G35 chipset. They also give much-needed coverage to AMD's integrated graphics chipsets, since many people are still under the false impression that AMD doesn't make motherboard chipsets.

As soon as I can get my hands on a G35 chipset or updated drivers that resolve some of the problems, I will post a follow-up to this shootout. I'll also be able to quickly test AMD's upcoming energy-efficient BE-2350 low-power processors and some of Intel's newer, refined Core 2 CPUs.

Hardware and software configuration:

| AMD 690G platform | Intel G965 platform |
| --- | --- |
| MSI K9AGM2-FIH motherboard | Intel DG965WH motherboard |
| Size = MicroATX | Size = ATX |
| X2 5600+ 2.8 GHz dual-core CPU | E6600 2.4 GHz dual-core CPU |
| Two 512 MB DDR2-533 DRAM | Two 512 MB DDR2-533 DRAM |
| ATI X1250 integrated graphics | Intel GMA 3000 integrated graphics |
| 330 Watt SeaSonic power supply | 330 Watt SeaSonic power supply |
| Seagate 160 GB SATA 7200 RPM | Seagate 160 GB SATA 7200 RPM |
| Vista x86 32-bit | Vista x86 32-bit |
| ATI Catalyst 7.7 display driver | Intel Graphics driver v15.4.3 |
| Default Vista DVD CODEC | Default Vista DVD CODEC |
| Windows Media Player 11 for DVD playback | Windows Media Player 11 for DVD playback |

| AMD 690G platform | Intel G965 platform |
| --- | --- |
| The MSI K9AGM2-FIH 690G motherboard has an integrated HDMI digital video output in addition to VGA analog output. However, the digital HDMI output isn't going to fix the terrible DVD video playback quality. | The Intel DG965WH motherboard lacks built-in HDMI and requires an SDVO ADD2 add-on card. While the card may cost large manufacturers and PC makers only $10 or less, end users who build their own computers will have a hard time finding these add-on boards. Some of the newer G35-based motherboards being released this quarter will come with HDMI ports. |
| The AMD 690G has only 4 SATA ports, with RAID 0, 1, and 1+0 support. None of these RAID levels is suitable for storage servers, but they are more than sufficient for most PC users. | The Intel G965 motherboard has one of the finest integrated RAID storage controllers on any motherboard. It has 6 SATA ports that support RAID 0, 1, 1+0, and 5, and its storage performance numbers are extremely good. |
| The AMD X2 5600+ costs $150. | The Intel E6550 costs $185 (it replaces the E6600, with a 1333 MHz FSB and a newer manufacturing process). |
| Low-cost motherboard at $70. | Modestly priced motherboard at $115. |

AMD versus Intel on Energy Efficiency

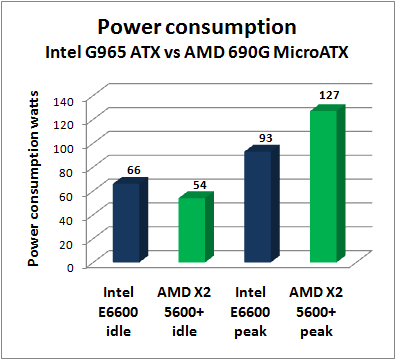

Energy efficiency is one of the most contentious and difficult things to compare between AMD and Intel because system components beyond the CPU vary so much. The motherboard alone can be enough to flip the winner from one company to the other, which is why so many conflicting benchmarks are posted on the Internet: some declare AMD the energy efficiency winner while others declare Intel the winner. I've run my own series of tests and brought in some external data for comparison so I can explain why the results disagree so much. First, here are my power consumption numbers. Because I used a very high-efficiency power supply, my measurements are some of the lowest of any benchmarks I've seen posted on the Internet. For typical desktop use, AMD's X2 5600+ and Intel's E6600 deliver roughly equivalent performance on average, though there is WIDE variation depending on the application. Some applications, such as scientific "High Performance Computing" workloads, favor AMD, while media encoding and gaming workloads favor Intel. I chose these two processors to get the closest apples-to-apples comparison.

Note: The "peak" power usage results were generated with wPrime v1.53 running two manually set threads (it must be run as administrator), which drove both CPU cores to 100% utilization. Playing back 1080i HD video or gaming full screen at 1680x1050 generated only a little more than half the load I was looking for, since those tasks pushed the CPU to just over 50% utilization. Only highly optimized multi-threaded applications can drive a multi-core CPU to 100% utilization.

As you can see, the power consumption results are mixed, with good news and bad news for both AMD and Intel. The good news for AMD is that it cuts clock speeds and voltages more drastically. The result is a silent PC that dropped to a measly 54 watts at idle, 12 watts lower than the Intel system's idle state. The AMD system can mostly stay in this low power state while the user is typing documents or playing MP3s and other digital audio streams. Slightly more stressful tasks take the AMD 690G-based PC up to 70 to 80 watts. The bad news for AMD is that its peak power consumption is substantially higher than Intel's. Anyone who constantly plays games on an AMD-based PC or leaves Folding@home running 24x7 will end up with a much bigger electric bill.

The good news for Intel is that its system maintains very reasonable power consumption even under peak loads: the Intel G965 system with an E6600 CPU gets by with 93 watts under full load. The bad news for Intel is that its idle state, while respectable, isn't as low as it could be had Intel been more aggressive in cutting clock speeds. The Intel G965 system ran at 66 watts idle. However, this result might be slightly unfair to Intel, since the Intel system uses a full ATX motherboard with twice the number of memory DIMM slots, twice the number of PCI-E connectors, and 50% more SATA connectors. The AMD system uses a stripped-down MicroATX motherboard, so we might be comparing the mileage of a four-seat vehicle to that of a two-seat vehicle.
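The electric-bill point and the idle gap between the two systems can be put in concrete terms. Below is a minimal sketch that converts a constant wall-socket draw into kilowatt-hours per year, using the wattages measured in this review. The $0.10/kWh electricity rate is a hypothetical figure, not something I measured. The sketch also assumes the PC draws the same power around the clock, as it would when running Folding@home 24x7.

```python
# Hypothetical electricity rate in US dollars per kWh; substitute your own.
ASSUMED_PRICE_PER_KWH = 0.10


def annual_kwh(watts: float, hours_per_day: float = 24.0) -> float:
    """Energy drawn in one year by a constant load, in kilowatt-hours."""
    return watts * hours_per_day * 365 / 1000.0


def annual_cost(watts: float, price_per_kwh: float = ASSUMED_PRICE_PER_KWH,
                hours_per_day: float = 24.0) -> float:
    """Yearly electricity cost of a constant load at the given rate."""
    return annual_kwh(watts, hours_per_day) * price_per_kwh


# Wall-socket figures measured in this review.
AMD_IDLE_W = 54.0
INTEL_IDLE_W = 66.0
INTEL_PEAK_W = 93.0

idle_gap = INTEL_IDLE_W - AMD_IDLE_W  # 12 W in AMD's favor at idle
print(f"12 W idle gap: {annual_kwh(idle_gap):.1f} kWh/year, "
      f"${annual_cost(idle_gap):.2f}/year")
print(f"Intel at 93 W peak, 24x7: {annual_kwh(INTEL_PEAK_W):.1f} kWh/year, "
      f"${annual_cost(INTEL_PEAK_W):.2f}/year")
```

Each watt of continuous draw works out to 8.76 kWh a year. The 12 W idle gap is therefore about 105 kWh, or roughly $10.51 a year at the assumed rate. To compare the two systems under a 24x7 load, plug AMD's peak figure from the chart into `annual_cost()`.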

Furthermore, Scott Wasson's article at TechReport.com shows the opposite result for idle power consumption: his Intel E6600 system uses 121 watts at idle while his AMD X2 5600+ system uses 126 watts. Wasson used the exact same video card in both systems, so the only differences are the motherboard and CPU. Take a look at the chart I compiled from Wasson's report. The difference here comes down to the motherboards: the Asus M2N32-SLI is a more fully loaded motherboard than the Intel D975XBX2.

Intel's new 3-series chipsets will also bring significant improvements in energy efficiency. The P35 chipset, for example, shaves 8 watts off idle power compared to similarly equipped 965 motherboards. Furthermore, reliable sources tell me that Intel's latest "Stepping G" revision of the Core 2 processor line, due this quarter, promises to cut power consumption significantly. The new Stepping G manufacturing process lets Intel shift its entire processor line down one notch on the TDP (Thermal Design Power) scale. For example, Intel will be able to take its 3.0 GHz "Clovertown" quad-core processor (used in Apple workstations as of April 2007) from a 150 watt maximum TDP down to 120 watts so that it can be used in 8-core server configurations. The existing 65 watt TDP CPUs will most likely use even less power. When I get my hands on a G35 motherboard and/or a Stepping G CPU, I'll do a follow-up to this article.

So the lesson here is that power consumption depends heavily on the motherboard and its feature set. Larger motherboards with more sockets and connectors naturally consume more power, while smaller MicroATX boards like the MSI K9AGM2-FIH consume less. If we factor out the motherboards, Intel and AMD processors have roughly the same idle power consumption, but AMD processors consume far more power under peak loads.
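To make the motherboard effect concrete, the sketch below tabulates the idle figures quoted in this article, both mine and those from Wasson's TechReport article. It shows how the lower-idle "winner" flips with the motherboard pairing. It is only arithmetic on numbers already given, not a new measurement.

```python
# Idle wall-socket power in watts, as quoted in this article.
IDLE_WATTS = {
    "This review (MSI K9AGM2-FIH vs. Intel DG965WH)": {"AMD X2 5600+": 54, "Intel E6600": 66},
    "TechReport (Asus M2N32-SLI vs. Intel D975XBX2)": {"AMD X2 5600+": 126, "Intel E6600": 121},
}


def idle_winner(readings: dict) -> tuple:
    """Return (system with the lower idle draw, margin in watts) for one pair of readings."""
    winner = min(readings, key=readings.get)
    margin = max(readings.values()) - min(readings.values())
    return winner, margin


for source, readings in IDLE_WATTS.items():
    winner, margin = idle_winner(readings)
    print(f"{source}: {winner} idles lower by {margin} W")
```

The same two CPUs produce opposite verdicts: AMD wins by 12 W on my boards, and Intel wins by 5 W on Wasson's.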

To figure out how AMD is able to cut its power consumption so drastically, let's look at what happens to the voltage and CPU clock speed. Here are screenshots of the CPU-Z utility showing the difference between AMD's Cool'n'Quiet technology and Intel's SpeedStep technology.

AMD is willing to slash the clock speed from 2.8 GHz (a 14x clock multiplier) all the way down to a 5x multiplier, which results in a 1 GHz processor. This happens in real time depending on the workload, and as soon as the computer needs more performance, the multiplier jumps back up to 14x. This lets AMD eliminate most of its CPU's power consumption when the system is idle.

Intel Enhanced SpeedStep in action:

Intel does something similar with SpeedStep, cutting the FSB multiplier from 9x to 6x. This takes the clock speed from 2.4 GHz to 1.6 GHz and yields much more modest power savings. That may be good enough to almost match AMD on idle power consumption, but my question to Intel is: why not make it even better? If the computer is going to sit there doing nothing most of the time, Intel should try to cut the idle power consumption of a PC using its mainstream CPUs to 40 watts or below. An efficient sleep state isn't good enough, because users don't want their computers to fall asleep; they want to be able to access their files and desktop remotely at any time. We can also forget about asking end users to configure wake-on-LAN, because even a former professional network engineer like me has a hard time overcoming some of the difficulties.
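Each clock speed above is simply a base clock multiplied by a multiplier. Below is a minimal sketch of that arithmetic. It assumes the published base clocks for these parts, which this article does not state: a 200 MHz reference clock for the Athlon 64 X2, and a 266.67 MHz bus clock (a 1066 MT/s quad-pumped FSB) for the Core 2 Duo E6600.

```python
from fractions import Fraction

# Base clocks from the CPUs' published specifications (not stated in this article).
AMD_REF_CLOCK_MHZ = Fraction(200)       # Athlon 64 X2: 200 MHz reference clock
INTEL_BUS_CLOCK_MHZ = Fraction(800, 3)  # Core 2 Duo E6600: 266.67 MHz (1066 MT/s quad-pumped FSB)


def core_clock_mhz(base_mhz: Fraction, multiplier: int) -> Fraction:
    """Core clock = base (reference or bus) clock x multiplier."""
    return base_mhz * multiplier


# (name, base clock, full-speed multiplier, idle multiplier) as described in the text
STATES = [
    ("AMD X2 5600+ (Cool'n'Quiet)", AMD_REF_CLOCK_MHZ, 14, 5),
    ("Intel E6600 (SpeedStep)", INTEL_BUS_CLOCK_MHZ, 9, 6),
]

for name, base, full_mult, idle_mult in STATES:
    full = core_clock_mhz(base, full_mult)
    idle = core_clock_mhz(base, idle_mult)
    print(f"{name}: {float(full):.0f} MHz -> {float(idle):.0f} MHz "
          f"({float(idle / full):.0%} of full speed)")
```

AMD idles at about 36% of its full clock, while Intel stays at 67%. Clock speed is only part of the story. CMOS dynamic power scales roughly with frequency times voltage squared, and both technologies also lower the core voltage, so the actual savings depend on the voltages shown in the CPU-Z screenshots.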

AMD versus Intel on Video Playback

Video playback quality has also been one of the more contentious issues, with some people accusing Intel of rigging its demos at CeBIT earlier this year to make AMD/ATI look bad. I wrote on this topic back then, explaining that it was most likely not a rigged test, but my poll showed that at least a simple majority of people thought Intel rigged it. I've now run a series of tests using the latest graphics drivers from both AMD and Intel. I can assure you that nothing was rigged, and I've included actual screenshots so you can see for yourself. The DVD video playback quality of ATI video cards (even my higher-end X800 card) leaves a lot to be desired. Anyone who doubts these results can replicate the test themselves, since I've fully disclosed the methodology, driver versions, software, and hardware.

To test DVD video playback quality, I used the HQV benchmark from Silicon Optix and took screenshots of what I saw. We'll start with the diagonal filter test, which shows how well each chipset handles "jaggies".

Diagonal filter "Jaggies" test:

One test I couldn't really show in a screenshot was the roller coaster test. During that test run, the AMD 690G mysteriously stuttered during playback.

Since a lot of DVDs are converted from 24 fps film, the 3-2 pull-down film conversion test can reveal ugly patterns and show how well fine detail is rendered.
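For readers unfamiliar with 3-2 pull-down: NTSC DVDs carry about 60 interlaced fields per second. To fit 24 fps film into that, each film frame is alternately held for 3 fields and then 2. The sketch below is a textbook illustration of that cadence, not Silicon Optix's test code. It shows why a player that fails to detect the cadence ends up weaving together fields from two different film frames.

```python
from itertools import cycle


def pulldown_32(film_frames):
    """Spread progressive film frames over interlaced fields with a 3:2 cadence.

    Returns (film_frame, field_parity) tuples; parity alternates top/bottom
    as it does in an interlaced video stream.
    """
    parity = cycle(["top", "bottom"])
    fields = []
    for frame, repeats in zip(film_frames, cycle([3, 2])):
        for _ in range(repeats):
            fields.append((frame, next(parity)))
    return fields


def weave_pairs(fields):
    """Pair consecutive fields into video frames, the way a naive player shows them."""
    return [(fields[i][0], fields[i + 1][0]) for i in range(0, len(fields) - 1, 2)]


fields = pulldown_32(["A", "B", "C", "D"])
print(len(fields), "fields from 4 film frames")  # 10 fields, so 24 fps becomes 60 fields/s
for video_frame in weave_pairs(fields):
    print(video_frame)
```

The five video frames come out as (A, A), (A, B), (B, C), (C, C), (D, D). Two of every five mix fields from different film frames. A good deinterlacer recognizes the cadence and reassembles the original progressive frames (inverse telecine). A poor one shows combing artifacts on exactly those mixed frames.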

3-2 pull-down film conversion test:

The next test is a color bar and resolution test. It checks whether the DVD player can play back very fine detail and whether color is rendered faithfully. Once again, Intel passes while AMD fails.

Intel G965 - Color bar and resolution test:

Of course, I could have ended my video playback testing right here and declared Intel's G965 perfect for DVD playback, but I took the testing a bit further. I tried some DVDs with animated content and found that even the G965 had interlacing problems with certain video content. Unfortunately, the Silicon Optix HQV benchmark doesn't test any animated content, and the video card manufacturers and DVD makers don't put in the right optimizations for it. Most of the time the quality was OK, but there were definitely problems with 4:3 full screen content, and I'm going to work with Intel to see if they can resolve them. I first reported this problem with NVIDIA and ATI video cards back in January when Vista launched, and Microsoft has confirmed it. Note that this isn't just a Vista problem; interlacing artifacts have plagued video playback on all platforms for a long time.
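Interlacing artifacts come from the fact that the two fields making up an interlaced video frame are captured about 1/60 of a second apart. The sketch below is a textbook illustration, not how the G965 or X1250 actually deinterlace. It builds a made-up 6x8 frame containing a moving vertical bar and compares "weave" (interleave both fields) with "bob" (line-double a single field).

```python
def frame_with_bar(height, width, x):
    """A tiny grayscale frame: black except for a 1-pixel vertical bar at column x."""
    return [[255 if col == x else 0 for col in range(width)] for _ in range(height)]


def weave(top_field, bottom_field):
    """Interleave two fields line by line into one frame (no motion handling)."""
    out = []
    for top_row, bottom_row in zip(top_field, bottom_field):
        out.append(top_row)
        out.append(bottom_row)
    return out


def bob(field):
    """Line-double a single field: no combing, but half the vertical detail."""
    out = []
    for row in field:
        out.append(row)
        out.append(list(row))
    return out


def bar_columns(frame):
    """Column of the bar on each line of the frame."""
    return [row.index(255) for row in frame]


# The bar moves 3 pixels between the instants the two fields were captured.
frame_t0 = frame_with_bar(6, 8, x=2)
frame_t1 = frame_with_bar(6, 8, x=5)
top = frame_t0[0::2]     # even lines sampled at t0
bottom = frame_t1[1::2]  # odd lines sampled at t1

print("weave:", bar_columns(weave(top, bottom)))  # [2, 5, 2, 5, 2, 5] -> combing
print("bob:  ", bar_columns(bob(top)))            # [2, 2, 2, 2, 2, 2] -> no combing
```

Weave keeps full vertical resolution on still content, but it produces comb-like jagged edges on anything that moves. Bob avoids combing at the cost of half the vertical detail. Better deinterlacers choose between these approaches based on detected motion. Content with hard, high-contrast edges, such as animation, is where a wrong choice is likely to be most visible.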

One test the AMD ATI X1250 integrated graphics adapter did do well in was playing back the HDV (1920 by 1080, 1080i) content I captured with my consumer-grade Sony HDR-HC1 camcorder. Note that the file has a ".dvr-ms" extension in Windows Vista. The video played back smoothly without any stuttering. That was a pleasant surprise, since I still can't get an NVIDIA 6600 128 MB PCI-Express discrete graphics adapter to play that HDV footage back smoothly at full screen; it only works at 50% size. Intel's G965 did slightly better than the NVIDIA 6600, but it still stuttered a little at full screen. All of these systems will smoothly play back 1080i HD MPEG2 content captured from over-the-air ATSC broadcasts, and they will all play back 1080i Windows Media Video content. The irony is that the ATI X1250 can play back HD video smoothly but won't play back regular DVDs smoothly. As soon as I get an HD DVD drive (maybe the cheap Xbox 360 add-on drive), I'll try the HD DVD version of the HQV test.

AMD 690G versus Intel G965 gaming performance

Doing a gaming benchmark on integrated graphics solutions is kind of like comparing dumb and dumber. But since we're in the mood to compare, I want to give users some idea of what kind of gaming experience they can expect. To test one of the more popular games, I copied over my Valve folder, which contains all of my Steam games, including Half-Life 2 and Counter Strike Source.

Everyone is using LCDs these days instead of CRT monitors, and even the cheapest $110 17" LCD displays have 1280x1024 resolution, which makes decent gaming performance very difficult to achieve. Sure, you can run lower resolutions, but they look absolutely ugly on an LCD display, which has a fixed native resolution and must scale anything else. I tested at 1680 by 1050, a very popular resolution because 20-22 inch LCDs costing $200 to $400 are common in new computers. I ran the following two test scenarios with different detail levels.

High quality settings for Counter Strike Source torture test:

- Resolution - 1680x1050

- Model detail - High

- Texture detail - High

- Shader detail - High

- Water detail - Reflect world

- Shadow detail - High

- Color correction - Disabled

- Antialiasing mode - None

- Filtering mode - Anisotropic 2x

- VSync - Disabled

- High Dynamic Range - Full (if available)

Low quality settings for Counter Strike Source torture test:

- Resolution - 1680x1050

- Model detail - Low

- Texture detail - Low

- Shader detail - Low

- Water detail - Simple reflections

- Shadow detail - Low

- Color correction - Disabled

- Antialiasing mode - None

- Filtering mode - Trilinear

- VSync - Disabled

- High Dynamic Range - None
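Both presets run at 1680x1050. The sketch below roughly shows why that is demanding by comparing it with the 1280x1024 resolution of the cheapest 17" LCDs. It relies on a simplifying assumption: that rendering work scales with the number of pixels on screen.

```python
def pixel_count(width: int, height: int) -> int:
    """Pixels on screen per frame (overdraw would multiply the real fill work)."""
    return width * height


cheap_lcd = pixel_count(1280, 1024)  # native resolution of the cheapest 17" LCDs
tested = pixel_count(1680, 1050)     # resolution used for both presets

print(f"1280x1024: {cheap_lcd:,} pixels per frame")
print(f"1680x1050: {tested:,} pixels per frame ({tested / cheap_lcd - 1:.0%} more)")
print(f"1680x1050 at 60 fps: {tested * 60:,} pixels per second")
```

That is about 35% more pixels per frame than 1280x1024. At 60 fps it comes to roughly 106 million pixels per second, before counting overdraw or texture filtering.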

As you can see, these are pretty pathetic results, though AMD's newer 690G is the winner. You need an average of at least 60 fps (which means it can still drop to 30 fps or lower in extreme situations) for gaming to be enjoyable. Ideally you would want VSync turned on so that only full frames are drawn, avoiding ugly tearing effects. The only way to sustain the 60 fps needed to match an LCD's refresh rate is to have average frame rates in the 100 fps range. Unfortunately, you can't get those results unless you're willing to spend $150 on an ATI X1950GT or at least $119 on an NVIDIA 8600 GT. Most PC shoppers won't spend that much on a video card, so they're stuck with whatever the computer retailer bundles in the system.
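The reasoning about VSync and ~100 fps averages can be sketched with a simple model. It assumes double-buffered VSync on a 60 Hz LCD; triple buffering behaves differently. Under double buffering, a frame that isn't ready at a refresh has to wait for the next one, so every slow frame stays on screen for a whole extra refresh period.

```python
import math


def displayed_fps(render_ms: float, refresh_hz: float = 60.0) -> float:
    """On-screen frame rate under double-buffered VSync (a simplified model).

    A frame that takes longer than one refresh period is held until the
    next refresh, so its display time rounds up to whole refresh periods.
    """
    refresh_ms = 1000.0 / refresh_hz
    periods = max(1, math.ceil(render_ms / refresh_ms))
    return refresh_hz / periods


# Frame render times in milliseconds: ~100 fps, just under 60 fps (~59), ~29 fps.
for render_ms in (10.0, 17.0, 34.0):
    print(f"{render_ms:4.1f} ms per frame -> {displayed_fps(render_ms):.0f} fps on screen")
```

A frame rendered in 17 ms, barely slower than the 16.7 ms refresh period, is shown at an effective 30 fps. A game averaging 60 fps spends a good share of its frames above 16.7 ms, which is why the average needs headroom well above the refresh rate.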

Conclusions

| Breakdown of scoring (1 to 10 scale) | AMD | Intel |
| --- | --- | --- |
| CPU idle power consumption | 8 * | 7 ** |
| CPU peak power consumption | 4 * | 8 ** |
| Motherboard power consumption | 8 *** | 6 **** |
| HQV DVD benchmarks | 2 | 9 |
| DVD (Animation content) | 4 | 6 |
| HDV 1080i Camcorder ".dvr-ms" | 8 | 3 |
| Counter Strike Source torture test | 3 | 2 |
| Motherboard price | 8 *** | 5 **** |
| Storage capability | 5 *** | 8 **** |
| Digital Video HDMI output | 9 *** | 5 **** |
| CPU performance/dollar | 8 * | 6 ** |
| CPU Overclocking potential | 3 * | 9 ** |

The results are what they are, and it's hard to declare a winner from such mixed results because the choice depends entirely on an individual's requirements. If you're looking for a simple low-cost PC and you don't care about DVD video playback quality or serious gaming, the AMD 690G may be the perfect solution for you. Those who do care about DVD video playback quality for a Media Center PC, or about storage capability, should wait a few more months for the Intel G35 chipset, which is superior in every way to the Intel G965. Then again, you may look at the gaming results and decide you want nothing to do with integrated graphics when the NVIDIA DirectX 10 8400GS 256 MB discrete graphics adapter is available for $60, or you may opt for something better. The thing about integrated graphics is that it is essentially a free video card, and you can always add a video card later if you decide it isn't good enough.

These results contain praise and criticism for AMD and Intel. I'm hoping that they will respond with better drivers and better products which will ultimately result in happier customers and more sales.