Build a $2,500 supercomputer

Supercomputing Costco-style

In 1997, IBM's Deep Blue supercomputer beat world chess champion Garry Kasparov. Today you can build a more powerful machine for less than $2,500 in an 11" x 12" x 17" box. That works out to less than $100 per gigaflop as of January 2007.

More good news: pricing out the components today, the machine would cost only $1,300!

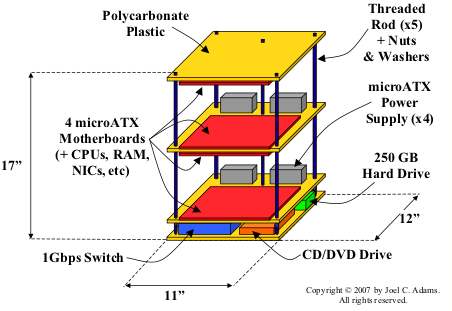

The recipe

Professor Joel Adams and undergraduate Tim Brom built the machine at Calvin College in Grand Rapids, MI. Using the Beowulf cluster model, the Microwulf design includes:

- 4 microATX motherboards with dual-core AMD Athlon 64 X2 3800+ AM2 processors

- 8 GigE ports - 1 built-in port on each motherboard, plus 1 added GigE PCI-Express NIC per motherboard

- 8 GB RAM - half of what a balanced system should have, but 16 GB would have busted their budget.

- 4 microATX power supplies

- 1 8-port GigE switch

- 250 GB hard drive & a CD/DVD drive

- 3 polycarbonate plastic shelves to mount the kit on plus 5 threaded rods to support the shelves

Here's a schematic diagram:

The architecture

Beowulf clusters are built on a message-passing infrastructure (MPI, the Message Passing Interface) that uses a network to interconnect the nodes. Some Beowulf clusters have hundreds of nodes and scale nicely with the right workloads.

Microwulf has an economical version of the same architecture, built on Ubuntu Linux and MPI libraries.
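To make the message-passing idea concrete, here is a minimal sketch of the scatter-and-reduce pattern that MPI programs commonly use. It is not MPI and not the Microwulf team's code: threads and queues from Python's standard library stand in for the four nodes and the network, and the names `worker` and `sum_of_squares` are made up for illustration.

```python
import queue
import threading

NUM_NODES = 4  # assumption: one worker per Microwulf motherboard


def worker(rank, inbox, outbox):
    """Receive one chunk of work, compute a partial sum, send it back."""
    chunk = inbox.get()                       # blocking receive
    partial = sum(x * x for x in chunk)       # local computation
    outbox.put((rank, partial))               # send result to the coordinator


def sum_of_squares(values, num_nodes=NUM_NODES):
    """Scatter values across workers, then reduce their partial sums."""
    inboxes = [queue.Queue() for _ in range(num_nodes)]
    results = queue.Queue()
    threads = [
        threading.Thread(target=worker, args=(rank, inboxes[rank], results))
        for rank in range(num_nodes)
    ]
    for t in threads:
        t.start()

    # Scatter: worker r gets every num_nodes-th element, starting at r.
    for rank in range(num_nodes):
        inboxes[rank].put(values[rank::num_nodes])

    # Reduce: collect exactly one partial result per worker and add them.
    total = sum(results.get()[1] for _ in range(num_nodes))

    for t in threads:
        t.join()
    return total


if __name__ == "__main__":
    print(sum_of_squares(list(range(1, 101))))
```

On a real cluster the queues would be replaced by network messages between separate machines, which is why the interconnect matters so much to how well a workload scales.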

The result

Performance is a many-splendored thing. In the world of supercomputing the standard benchmark is Linpack, which solves a dense system of linear equations in 64-bit double precision arithmetic. Learn more about Linpack, HPL and their parameters here.
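Because the benchmark works on a dense N x N matrix of 64-bit values, the problem size is bounded by RAM. The following is a rough back-of-the-envelope sketch, assuming the 8 GB total from the parts list (read here as 8 x 2^30 bytes) and a hypothetical `usable_fraction` of 0.8 to leave room for the operating system. That fraction is an illustrative guess, not a figure from the Microwulf team.

```python
import math

BYTES_PER_DOUBLE = 8          # 64-bit double precision
TOTAL_RAM_BYTES = 8 * 2**30   # assumption: "8 GB" means 8 GiB across the cluster


def matrix_bytes(n):
    """Memory needed to hold a dense n x n matrix of doubles."""
    return BYTES_PER_DOUBLE * n * n


def max_problem_size(ram_bytes, usable_fraction=0.8):
    """Largest n whose matrix fits in the usable share of RAM.

    usable_fraction is a hypothetical allowance for the OS and buffers.
    """
    usable_bytes = int(ram_bytes * usable_fraction)
    return math.isqrt(usable_bytes // BYTES_PER_DOUBLE)


if __name__ == "__main__":
    n = 30_000
    print(f"N={n}: {matrix_bytes(n) / 1e9:.1f} billion bytes")
    print(f"Largest N at 80% of RAM: {max_problem_size(TOTAL_RAM_BYTES)}")
```

Under these assumptions a 30,000 x 30,000 matrix needs 7.2 billion bytes, which is most of the cluster's 8 GB before the operating system takes its share.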

It is worth noting that the 250 GB SATA drive is not a bottleneck here, because HPL doesn't do much I/O. The benchmark tests floating-point performance on an in-memory problem. Above a problem size of 30,000, the machine ran out of memory. Here are Microwulf's stats:

While unexceptional today, this performance would have made Microwulf the world's 6th fastest supercomputer in 1993, at less than $100 per gigaflop. Update: at today's prices, about $50 per gigaflop.
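The price-performance figures can be cross-checked with simple arithmetic. This sketch uses only the dollar amounts quoted in this post and treats the $2,500 budget as the actual cost, which is an assumption. The function names are made up for illustration.

```python
def implied_gflops(cost_usd, dollars_per_gflop):
    """Performance implied by a cost and a price-per-gigaflop figure."""
    return cost_usd / dollars_per_gflop


def dollars_per_gflop(cost_usd, gflops):
    """Price-performance ratio in dollars per gigaflop."""
    return cost_usd / gflops


if __name__ == "__main__":
    # Assumption: the machine cost the full $2,500 at $100 per gigaflop.
    floor_gflops = implied_gflops(2500, 100)
    print(f"Implied performance: about {floor_gflops:.0f} gigaflops")

    # Same performance at the updated $1,300 component price.
    updated = dollars_per_gflop(1300, floor_gflops)
    print(f"Updated price-performance: ${updated:.0f} per gigaflop")
```

At those figures the machine would need to sustain roughly 25 gigaflops, and the $1,300 repricing lands at $52 per gigaflop, consistent with the "about $50" in the update.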

The Storage Bits take

Humans aren't very good at forecasting exponential functions like Moore's Law. Microwulf is a good excuse to take stock of just how much computing has advanced in the last 15 years.

Millicomputing is the name of a related initiative to build powerful clusters out of very power-efficient processors and low-cost components. In another 10 years you'll be able to have the equivalent of a 5,000-node Google cluster in your den. Cluster-based virtual reality, anyone?

Update: Lots of great comments from some very experienced people. Thanks! A couple of folks pointed to a detailed tutorial written by Professor Adams - who graciously permitted me to use his copyrighted diagram - that I'd linked to but without flagging its importance.

Let me rectify that oversight. If you want to get into the details of the hardware and software, this article on the Microwulf architecture and construction should suffice.

Comments welcome. Personally, I'm very happy with my quad-core Xeon, but I don't do much with computational fluid dynamics or protein folding.