A guide to server efficiency

With prices plummeting, there’s never been a better time to buy server hardware. Which is good, because it means you can get a lot more computing for your money. However, demand for processing power continues to rise, and despite the introduction of ever more powerful hardware, datacentre managers continue to cram yet more servers into already overcrowded racks. And that’s bad, because it leads to higher energy and cooling bills. So much so that it won’t be long before it costs more to simply power and cool a server than it does to buy it in the first place.

Fortunately there are measures that can be taken to alleviate the situation, and a number of new technologies to help; we’ll be outlining these in this guide to server efficiency.

Performance per what?

When it comes to consuming power, server processors are one of the biggest culprits, so it’s the processor vendors who are leading the present efficiency drive. Indeed, when illustrating how their products stack up against the competition, the likes of AMD, IBM, Intel and Sun have all but abandoned clock speed in favour of 'performance per watt'. This metric is designed to tell you how much performance you can expect from a processor for each watt of power drawn from the AC socket.

Unfortunately, performance per watt is something of a nebulous concept: there's no standard way of calculating such figures and no proper benchmarks to enable objective comparisons to be made.
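To make the arithmetic concrete, here is a minimal sketch of how a performance per watt figure might be worked out. The benchmark scores, wattages and function name are all made up for illustration; in practice the answer depends entirely on which benchmark you run and where you measure the power draw.

```python
def performance_per_watt(benchmark_score: float, watts: float) -> float:
    """Return benchmark units delivered for each watt drawn."""
    if watts <= 0:
        raise ValueError("power draw must be positive")
    return benchmark_score / watts


# Hypothetical figures, not measurements of any real product
servers = {
    "server_a": {"score": 12000.0, "watts": 400.0},
    "server_b": {"score": 15000.0, "watts": 600.0},
}

ratings = {
    name: performance_per_watt(spec["score"], spec["watts"])
    for name, spec in servers.items()
}
best = max(ratings, key=ratings.get)

for name, rating in ratings.items():
    print(f"{name}: {rating:.1f} benchmark units per watt")
print(f"Most efficient on these numbers: {best}")
```

Note that the server with the higher raw score comes out behind here, which is exactly why the choice of benchmark and measurement point matters so much.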

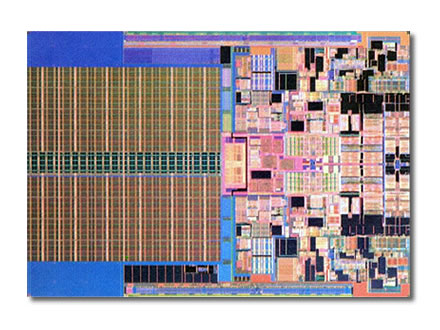

Still, it does show a desire on the part of processor vendors to make their chips more efficient, one of the principal techniques being to move to thinner dies (the wafer in which the transistors are etched). The thinner the die the less power is required to move electrons around it, so the more efficient the processor should be and the less excess heat is likely to be generated. At least that’s the theory; however, it’s not always translated into practice.

When Intel moved from 130nm to 90nm dies, for example, the power requirements for some of its chips actually rose. Likewise it had further problems when it moved to the 65nm silicon from which its Xeon server chips are now made.

Fortunately for Intel, the company has now sorted out most of the power issues, partly by moving to a totally new architecture (known as Intel Core), enabling it to match, if not exceed, the low energy claims AMD has been making for its Opteron processors for a number of years. Indeed, Intel has been getting very bullish over energy efficiency, with Intel CEO Paul Otellini recently predicting a 310 per cent increase in performance per watt by the end of the decade. By that time, the company's Xeon server processors (or whatever they’re called by then) should be based on even thinner 32nm dies, with interim 45nm chips set to be released in 2007/8.

The multi-core dilemma

On the face of it then, all you have to do to keep the lid on your energy and cooling bills is to upgrade to the newest, thinnest and, hopefully, most energy-efficient processors. In particular you should look for low TDP (Thermal Design Power) ratings which, although not quite a performance per watt measure, will at least tell you whether a processor has been designed with power efficiency in mind.

However, as is so often the case, it’s not that simple, with a number of complicating factors waiting to upset the equation, such as the introduction of first dual-core then quad-core processors, with 8-core and above likely to follow very soon.

Multi-core ought to be good news when it comes to efficiency. It enables, for example, one 2-way quad-core server (effectively 8 processor cores) to replace four 2-way servers fitted with old-fashioned single-core chips. This means just one power supply and one motherboard generating heat instead of four. There's also just one system disk spinning away, one set of cooling fans and so on — leading to quite clear and measurable gains in terms of reduced power and cooling requirements.
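The consolidation sum above can be sketched in a few lines. The per-server wattages are assumptions chosen purely for illustration, as are the class and function names; only the socket and core counts come from the example in the text.

```python
import math
from dataclasses import dataclass


@dataclass
class ServerSpec:
    sockets: int
    cores_per_socket: int
    watts: float  # assumed average draw per server, illustrative only

    @property
    def cores(self) -> int:
        return self.sockets * self.cores_per_socket


def servers_needed(target_cores: int, spec: ServerSpec) -> int:
    """Smallest number of servers of this type providing target_cores."""
    return math.ceil(target_cores / spec.cores)


old = ServerSpec(sockets=2, cores_per_socket=1, watts=350.0)  # 2-way, single-core
new = ServerSpec(sockets=2, cores_per_socket=4, watts=450.0)  # 2-way, quad-core

old_count = 4
target_cores = old_count * old.cores           # 8 cores to replace
new_count = servers_needed(target_cores, new)  # servers required after upgrade

old_power = old_count * old.watts
new_power = new_count * new.watts
print(f"{old_count} old servers: {old_power:.0f} W")
print(f"{new_count} new server(s): {new_power:.0f} W")
print(f"Saving under these assumptions: {old_power - new_power:.0f} W")
```

The saving only holds if the workload can actually use all eight cores in one box.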

Unfortunately, as already pointed out, demand for server processing continues to rise. So you could soon find that you’re back up to four servers, each with a pair of quad-core processors. Moreover, because a lot of legacy server applications are single threaded they are unable to take advantage of SMP (Symmetric Multi-Processing), let alone extra cores. As a result, you could end up with lots of extra processor cores consuming power and generating heat while doing very little in the way of real work.

Even if you do have the latest in multi-threaded software, you’re unlikely to be using all of the processing power all the time. Which is why it’s worth looking for additional power management features built into the chips involved.

Power management

Again it’s down to the processor vendors to provide this, an example being AMD's PowerNow! technology with Optimized Power Management (OPM), available on the latest multi-core Opteron chips. OPM can fine-tune clock speed and voltage requirements automatically depending on CPU workloads; AMD claims efficiency gains of up to 75 per cent during idle periods using this technology.

Intel, naturally, has something similar called Demand Based Switching (DBS), which is an extension of the SpeedStep technology long available on Intel's mobile processors, for which similar claims are made.
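Why lowering clock speed and voltage together pays off can be seen from the textbook approximation for dynamic CMOS power, P ≈ C × V² × f. The sketch below applies that approximation to two hypothetical operating points; the voltages and frequencies are illustrative rather than AMD or Intel specifications, and the model ignores static leakage power altogether.

```python
def relative_dynamic_power(voltage: float, freq_ghz: float,
                           ref_voltage: float, ref_freq_ghz: float) -> float:
    """Dynamic power relative to a reference point, using P ~ C * V^2 * f.

    The capacitance term C cancels out because the same chip is being
    compared with itself at two operating points.
    """
    return (voltage / ref_voltage) ** 2 * (freq_ghz / ref_freq_ghz)


# Hypothetical operating points, not vendor figures
FULL_SPEED = {"voltage": 1.25, "freq_ghz": 2.6}
LOW_POWER = {"voltage": 1.00, "freq_ghz": 1.3}

ratio = relative_dynamic_power(
    LOW_POWER["voltage"], LOW_POWER["freq_ghz"],
    FULL_SPEED["voltage"], FULL_SPEED["freq_ghz"],
)
saving_percent = (1 - ratio) * 100
print(f"Low-power state draws {ratio:.2f}x the full-speed dynamic power")
print(f"Estimated dynamic power saving: {saving_percent:.0f} per cent")
```

Because voltage enters as a square, halving the clock while also dropping the voltage saves considerably more than halving the clock alone.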

Motherboard vendors are also helping here, incorporating additional remote monitoring and management functionality into their designs. And if you look in detail at the latest operating system products you’ll find an increasing emphasis on the ability to make efficient use of hardware resources: turning off unwanted processes, for example, or, on the latest Windows Server 2008 and most Linux distributions, not even installing them in the first place.

Elsewhere, server and network management tools are being adapted to better monitor and control power and cooling, while vendors such as Dell are now offering customers capacity planning tools to better estimate power, cooling, airflow and other requirements.

Form factor

Another complication is the form factor, with servers seemingly getting smaller by the month. Rack-optimised products have now all but replaced old-style tower servers, at least as far as the enterprise datacentre is concerned. But these in their turn are now being displaced. Although rack-optimised servers are still being deployed, blade servers are the way forward for maximum processor density and the ultimate in potential efficiency.

Essentially single-board computers, blade servers are designed to slot into a special rack-mount chassis to provide maximum processing power in the minimum space. Power and cooling are handled through the enclosure rather than on the blades themselves, minimising the components involved and making blade solutions much more efficient than their ordinary rack-optimised equivalents. Even so, it’s possible to get much the same specification on a blade as in a rack-optimised server.

That’s partly down to the fact that a blade doesn’t need its own power supply, cooling fan or bulky air flow ducting. But equally, the introduction of multi-core processors means more processing power in less space. You can also now get high-capacity memory modules and much smaller disk drives — HP, for example, is standardising on 2.5in. SAS (Serial Attached SCSI) drives across its entire ProLiant server range, with other server vendors taking a similar approach.

Of course you can encounter similar problems with blades to those outlined previously for multi-core processors. Blade servers let you do more with less, but growth in demand can mean moving from cabinets packed with rack-optimised servers to enclosures of similar size chock-full of blades. Moreover, first-generation blades emphasised performance, with little consideration given to power management or cooling. That's now changing, with the likes of HP, Dell and IBM increasingly keen to push the power management features of their latest blade products.

Thermostatically controlled fans able to react to changing conditions are now very much the order of the day in the latest products. Indeed, some vendors have even gone as far as patenting their fan technology, as with HP and the fans in its latest c-Class ProLiant blade enclosures. You’ll also find additional circuitry dedicated to monitoring and managing power and cooling, not only on the blades themselves but also through additional controllers to manage complete blade enclosures and racks plus custom software for secure remote management.
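As a rough illustration of what thermostatic control means in practice, the sketch below maps a temperature reading to a fan duty cycle using a simple linear curve. The thresholds, duty limits and function name are hypothetical; this is not HP's patented design or any vendor's actual algorithm.

```python
def fan_duty_percent(temp_c: float,
                     min_temp_c: float = 30.0,
                     max_temp_c: float = 70.0,
                     min_duty: float = 20.0,
                     max_duty: float = 100.0) -> float:
    """Return a fan duty cycle (per cent) for a given temperature.

    Below min_temp_c the fan idles at min_duty, above max_temp_c it runs
    flat out, and in between the duty rises linearly with temperature.
    """
    if temp_c <= min_temp_c:
        return min_duty
    if temp_c >= max_temp_c:
        return max_duty
    fraction = (temp_c - min_temp_c) / (max_temp_c - min_temp_c)
    return min_duty + fraction * (max_duty - min_duty)


for reading in (25.0, 50.0, 80.0):
    print(f"{reading:.0f} C -> fan at {fan_duty_percent(reading):.0f} per cent")
```

The point is simply that a fan running at a fraction of full speed for most of the day draws far less power than one spinning flat out regardless of load.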

IBM and blades

By Bill Holtby

IBM is betting the farm on blades. For IBM, blades are the way forward for servers. They're space-efficient, modular and cost less to run than a standard tower or even a rack server.

IBM's blade server VP Doug Balog reckons that 'Customers want more integration, simplicity, performance and longer-lasting products'.

According to Balog, IBM came to blades by focusing on what it calls server-class technology. 'We then doubled the density for high-performance computing: applications such as the computations required by financial trading houses, oil and gas, and pharmaceutical companies. Blade technology is now moving into the enterprise infrastructure.'

IBM is also not shy of taking pot-shots at HP, its major competitor in the blade market. 'While we're looking at new ways of using blades, HP, with its c-Class products, is only now in the process of moving customers from racks to blades. They have fewer than 10 per cent of their customers buying blades, while 25 per cent of IBM's Intel/AMD x86 server sales by revenue are blades', says Balog.

So why is everyone buying blades? According to Balog, as recently as three years ago, no customers were talking about power and cooling. 'But two years ago, customers suddenly started talking about this as the big issue and it continues today. Now everyone is interested in green computing.'

Blades aren't just for big enterprises either. 'We see the SMB market ready to explode with blades', says Balog.

Consequently, the company is also preparing to launch a blade server chassis aimed at small to medium-sized businesses — a 'small business' in IBM-speak is one with 1,000 employees or fewer. Expect the product to be a half-sized version of the company's entry-level BladeCenter chassis — perhaps with seven server slots rather than the 14 of its bigger brother, and fewer redundancy features.

Balog says that the IBM advantage is that it can tap into its mainframe heritage to deliver high levels of integration, reduce energy consumption, simplify interconnectivity and deliver central management.

BladeCenter: the heart of the matter

IBM's blade server product line is based around the 14-slot BladeCenter chassis, which it launched in 2002. Last year, IBM launched an upgrade, known as BladeCenter H, which added support for high-speed connectivity such as InfiniBand, along with larger power supplies and cooling systems. It also makes a ruggedised version, the BladeCenter T, which it sells largely to telcos and military establishments, in both standard and high-bandwidth versions.

Into the chassis plug not just servers, but also blades from IBM and others to add features such as communications technology and management. For example, Blade.org founder member Blade Network Technologies sells 10Gbit Ethernet products for the BladeCenter.

Although the BladeCenter chassis have been tweaked since launch to bring them up to date (adding support for DVD drives, for example), they've remained substantially unchanged to ensure backwards compatibility. By contrast HP's server blades are not interchangeable between its two chassis products, the p-Class and c-Class; HP says that this allows users to deploy the latest technology, and not be stuck with older, less capable kit.

IBM's innovation goes into the plug-in devices, which will work inside any BladeCenter chassis. IBM sells blades containing four types of processor. Its x86 devices contain AMD Opterons and Intel Xeons — Xeon-flavoured blades are available only as two-CPU servers, while you can buy Opteron-based servers in both two- and four-way variants. It makes an IBM POWER-based blade that sells into the slow-moving Unix market, plus a Cell processor-based device for multimedia and high-performance computing applications.

Blade.org

IBM sets great store by its Blade.org initiative. It set up the organisation in February 2006 in a bid to win the hearts and minds of hardware and software developers, and encourage them to develop products for its BladeCenter platform. At the heart of the initiative is IBM's free publication of the specifications of its BladeCenter chassis, allowing others to develop products around it. The organisation now consists of 92 vendors including AMD, Brocade, Cisco, IBM, Nortel, Intel, Red Hat, VMware and a number of other high-visibility industry names.

According to IBM's Balog at least, Blade.org is achieving its objective, and is now self-funding.

HP, by contrast, only set up its equivalent Blade Connect initiative in April this year. HP reckons it consists of an online community of customers, partners and others with the aim of allowing them to interact and collaborate around its BladeSystem products.

Dell, Sun and others currently share the 20 per cent of the blade market that IBM and HP don't own. But it appears that IBM will have its work cut out to keep up with HP, whose product portfolio appears to have more resonance with enterprise buyers at the moment.

IBM knows that chassis sales today generate sales for blades and accessories tomorrow, so expect a vigorous response. If you're in the market for a blade server system, such intense competition can only be a good thing.

The virtualisation story

We’ve left virtualisation until almost last, not because it’s unimportant — far from it — but to allow space to do justice to this highly influential technology. And it really is worth it, virtualisation being one of the best ways to really ramp up server efficiency.

That’s because by virtualising the processors, memory and other hardware resources you’re able to configure and run multiple virtual machines (VMs) on the same physical server platform. Each of these VMs can then run an independent guest operating system, with the virtualisation software sharing out access to physical resources so that they’re effectively utilised rather than sitting idle while still consuming power and generating heat. If you beef up your hardware using the latest 64-bit multi-core processors, server consolidation takes on a whole new meaning.

Virtualisation also helps to address issues with older single-threaded applications as otherwise unemployed processors or processor cores can still be used by other VMs. Plus it’s possible to take advantage of the latest 64-bit processor technologies even when still running predominantly 32-bit applications and operating systems.
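Consolidation of this kind can be thought of as a packing problem: fit the VMs onto as few physical hosts as their resource demands allow. The sketch below uses a simple first-fit decreasing heuristic on memory alone. The VM names, sizes and host capacity are hypothetical, and real virtualisation products also weigh CPU, I/O and failover requirements.

```python
from typing import Dict, List


def pack_vms(vm_memory_gb: Dict[str, int], host_capacity_gb: int) -> List[List[str]]:
    """Place VMs on hosts using first-fit decreasing, by memory only."""
    hosts = []  # each entry tracks free memory and the VMs placed so far
    # Largest VMs first, so the big ones are not left stranded at the end
    by_size = sorted(vm_memory_gb.items(), key=lambda item: item[1], reverse=True)
    for name, need in by_size:
        if need > host_capacity_gb:
            raise ValueError(f"{name} does not fit on any single host")
        for host in hosts:
            if host["free"] >= need:
                host["free"] -= need
                host["vms"].append(name)
                break
        else:
            # No existing host has room, so power up another one
            hosts.append({"free": host_capacity_gb - need, "vms": [name]})
    return [host["vms"] for host in hosts]


# Hypothetical workloads that each used to sit on a dedicated server
vms = {"mail": 8, "web": 4, "db": 12, "file": 6, "test": 2}
placement = pack_vms(vms, host_capacity_gb=16)

for index, names in enumerate(placement, start=1):
    print(f"host {index}: {', '.join(names)}")
```

On these numbers five lightly loaded machines collapse onto two hosts, which is where the power and cooling savings come from.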

There are several different types of virtualisation, but in the industry-standard server market two approaches predominate. The first is where virtual machines running guest operating systems share access to server hardware via a host operating system (typically either Windows or Linux) through a virtual machine monitor (VMM), which is run much like any other application. Examples of this include VMware Server from market leader VMware, and Microsoft’s Virtual Server 2005, both of which can be downloaded for free and used to host both Windows and Linux VMs.

The other approach involves the use of a virtualisation 'hypervisor', which does away with the host operating system. Because it doesn’t use any host OS services, the hypervisor can be better tuned to the virtualisation role and, in theory, should enable guest VMs to make more efficient use of the host hardware resources.

Again, the market leader here is VMware with its ESX Server product, now part of the VMware Infrastructure 3 (VI3) solution. This can be used to host a variety of guest VMs running Windows, Linux and Solaris with dynamic load balancing of resources to make the most efficient use of the supporting hardware, plus live migration of virtual machines for failover and maintenance.

Microsoft is also planning a hypervisor solution that will form part of Windows Server 2008 (it will be called Windows Server Virtualisation), although this won’t ship with the new server OS, arriving instead up to 180 days after its release. It will also lack the load balancing and live migration facilities that were originally planned, which will now appear in a later release.

XenSource is another increasingly popular virtualisation vendor, with its open-source Xen products. The processor vendors have been doing their bit too, adding hardware support for virtualisation to their latest chips. To this end you should look for processors with either Intel VT or AMD-V capabilities. However, note that while some virtualisation solutions are reliant on these processor extensions, others can get by without them, with no real impact on overall effectiveness.

A holistic view

There are many other areas to examine if you want to improve server efficiency. Do you really need all those redundant power supplies, for instance? Similarly, you might want to look at reducing the number of internal disks dedicated to each machine. Direct-attached storage is both inefficient and hard to manage, whereas a SAN (Storage Area Network) can drastically cut the number of drives you have spinning away, as well as making it a lot easier to expand, protect and generally manage your storage requirements.

Operating systems can also have a bearing, as can applications — especially older programs which, if re-engineered using new technologies, can be made to do a lot more on fewer, less-powerful, servers. Indeed, if efficiency really is important to you, it’s worthwhile taking a much more holistic view of your servers and everything to do with them. Consider everything from the processor up, and you may be surprised at the savings you can make.