Siri: Will Microsoft's Bing give me quality search results?

When Apple officials announced in passing this week that Siri will use Bing instead of Google as its default Web search engine with iOS 7, I saw more than a few skeptics wondering aloud how the Bing results would compare, quality-wise.

I don't have any inside information as to how/why this collaboration came to pass, but it does seem Apple is intent on finding ways to reduce its dependence on Google, even if it requires teaming with another "enemy." Did comparative quality of search results have any bearing on the decision at all? Again, I have no idea, but I'm doubtful.

Just because I tend to believe politics trumps the "best" solution doesn't mean I am down on the quality of Bing's search results these days. As I've noted before on this blog, I've been using Bing with good success on my PCs, tablets and laptops in the past several months; on my phone, I haven't been as happy with the quality of my local Bing search results.

Microsoft officials are confident they can match, if not surpass, other search engines in terms of the amorphous concept of "search quality." In March 2012, Microsoft launched a "Bing Search Quality Insights" series of blog posts to explain what the company has been doing to improve overall search quality in Bing.

The latest installment in that series, today's June 12 post on experimentation and continuous improvement, focuses on techniques that Bing's research team is using to improve the quality of search results. The post highlights a technique called "optimized interleaving," which combines result lists and tracks clicks to gauge the relative quality of search results. It also summarizes the findings of a research paper on measuring search quality that was presented at the WSDM 2013 conference in February 2013.

Bing's research team, which includes a "few dozen" people, has been working on search for about a decade, said Bing Corporate Vice President of R&D Harry Shum. It's taken much of that time for Microsoft to improve its search quality so that it can stand against anyone else's, Shum said.

Exactly how one measures search quality remains a thorny problem, Shum said. Just because users get a ranked list of results doesn't ensure that those at the top are the most relevant.

The machine-learning systems powering Bing's back end already can handle tasks ranging from correcting the spelling of queries and interpreting search intent to separating out junk pages and ranking indexed pages. But these kinds of systems need to be optimized for user satisfaction, Shum noted in today's blog post. That remains problematic because there's no objective way to measure user satisfaction, which is where "surrogate measures," such as the aforementioned interleaving algorithm, fit in.

"Surprisingly, once your machine learning systems are powerful enough, your choice of surrogate measure has a strong influence on the kind of results you return," Shum blogged.
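To make Shum's point about surrogate measures concrete, here is a toy illustration. It is not Bing's method; it uses two standard offline ranking metrics, precision at 1 and discounted cumulative gain (DCG), on made-up relevance grades to show that two reasonable surrogates can disagree about which ranking is better. A system tuned to maximize one of them would return different results than a system tuned to maximize the other.

```python
import math

def precision_at_1(rels):
    """Surrogate 1: is the top result relevant at all (grade > 0)?"""
    return 1.0 if rels and rels[0] > 0 else 0.0

def dcg_at_k(rels, k=5):
    """Surrogate 2: discounted cumulative gain over the top k results,
    using the common (2^grade - 1) / log2(rank + 1) form."""
    return sum((2 ** g - 1) / math.log2(i + 2) for i, g in enumerate(rels[:k]))

# Hypothetical graded relevance labels (0 = junk, 3 = perfect) for two
# rankings of the same query; the numbers are invented for illustration.
ranking_a = [3, 0, 0, 0, 0]  # one great hit on top, nothing useful below
ranking_b = [0, 3, 3, 3, 2]  # weak top hit, many strong results just below

for name, rels in [("A", ranking_a), ("B", ranking_b)]:
    print(name, precision_at_1(rels), round(dcg_at_k(rels), 2))
```

Precision at 1 prefers ranking A, while DCG prefers ranking B, so which list "wins" depends entirely on the surrogate chosen.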

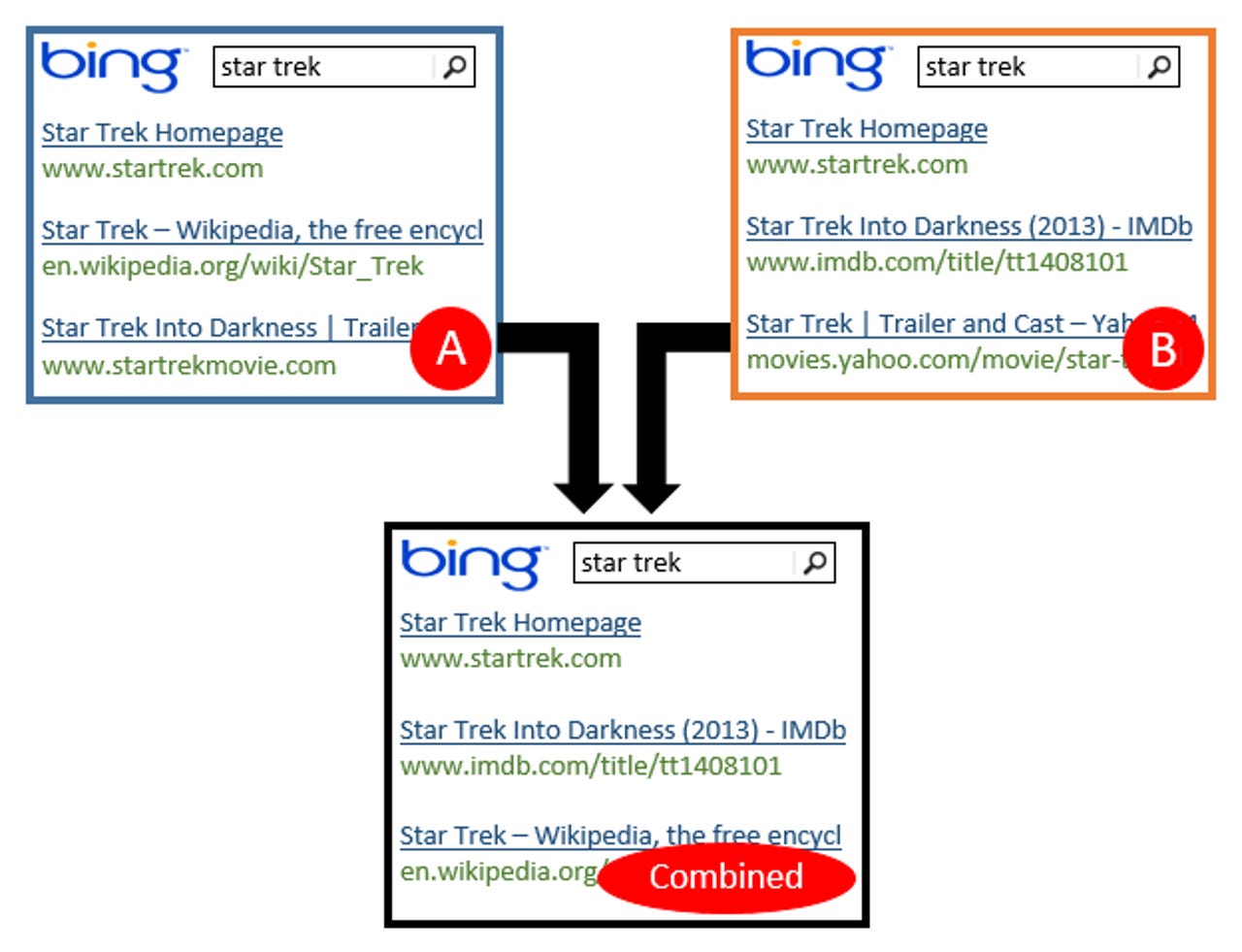

Bing researchers used a variant of A/B testing, the randomized two-variant experiments often used in the medical field, to create a technique that allows Microsoft to move the search results users prefer higher up in the rankings. (The "interleaving" comes into play because users see a single list that mixes A results and B results, rather than being shown one set or the other; their clicks then indicate which ranking they prefer.)
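The general idea of interleaving can be sketched in a few lines. Note that this is not the "optimized interleaving" described in Microsoft's post; it is team-draft interleaving, an earlier and simpler published method, used here as a stand-in. The two rankers' results are merged into one list, each shown result is tagged with the ranker that contributed it, and clicks are credited back to that ranker. The function names and document IDs are hypothetical.

```python
import random

def team_draft_interleave(ranking_a, ranking_b, length, rng=random):
    """Merge two rankings into one list using team-draft interleaving.

    Returns the interleaved list plus a dict mapping each shown document
    to the ranker ("A" or "B") that contributed it.
    """
    rankings = {"A": ranking_a, "B": ranking_b}
    picks = {"A": 0, "B": 0}
    shown, team = [], {}
    while len(shown) < length:
        # The ranker with fewer picks goes next; ties are broken by a coin flip.
        if picks["A"] < picks["B"] or (picks["A"] == picks["B"] and rng.random() < 0.5):
            order = ("A", "B")
        else:
            order = ("B", "A")
        for side in order:
            # Each ranker contributes its highest-ranked document not yet shown.
            doc = next((d for d in rankings[side] if d not in team), None)
            if doc is not None:
                shown.append(doc)
                team[doc] = side
                picks[side] += 1
                break
        else:
            break  # neither ranking has anything left to contribute
    return shown, team


def credit_clicks(clicked_docs, team):
    """Credit each click to the ranker that contributed the clicked document."""
    wins = {"A": 0, "B": 0}
    for doc in clicked_docs:
        if doc in team:
            wins[team[doc]] += 1
    if wins["A"] == wins["B"]:
        return "tie"
    return "A" if wins["A"] > wins["B"] else "B"


# Hypothetical document IDs from two competing rankers for the same query.
ranker_a = ["d1", "d2", "d3", "d4"]
ranker_b = ["d3", "d5", "d1", "d6"]
shown, team = team_draft_interleave(ranker_a, ranker_b, length=4, rng=random.Random(7))
print(shown)
print(credit_clicks(["d5"], team))  # d5 can only have come from B
```

Aggregated over many queries, the share of comparisons each ranker wins serves as the preference signal. Because both rankers appear in the same list, each user effectively compares them side by side.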

Microsoft already uses these techniques with Bing today, meaning users regularly take part in A/B experiments when they use Microsoft's search engine, Shum noted in the blog post.

Microsoft as a whole often uses A/B tests to hone products and services, but because Bing has millions of users and billions of clicks, results from these tests are far more likely to be statistically significant than they are in other product groups, Shum said.
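Why scale matters can be shown with a back-of-the-envelope sign test. This is only an illustration: the 51 percent preference rate and the comparison counts are invented, and it does not describe how Bing actually analyzes its experiments. Under the null hypothesis that users have no preference, each non-tied comparison is a fair coin flip, so the same small edge becomes more convincing as the number of comparisons grows.

```python
import math

def two_sided_p_value(wins_a, wins_b):
    """Sign test via the normal approximation to the binomial: under the null
    hypothesis, wins_a ~ Binomial(n, 0.5), with mean n/2 and variance n/4."""
    n = wins_a + wins_b
    z = (wins_a - n / 2) / math.sqrt(n / 4)
    return math.erfc(abs(z) / math.sqrt(2))

# The same hypothetical 51/49 preference split at three traffic levels.
for n in (1_000, 10_000, 100_000):
    wins_a = n * 51 // 100
    p = two_sided_p_value(wins_a, n - wins_a)
    print(f"{n:>9,} comparisons: p = {p:.3g}")
```

At a thousand comparisons a 51-to-49 split is indistinguishable from noise (p is roughly 0.5); at a hundred thousand it is overwhelmingly significant.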

More specifics about how the interleaving algorithm works and how Microsoft is using it to hone Bing's results are in today's post.