Facebook reveals guide for dealing with spam, explicit content reports

In an effort to do a little damage control over potentially harmful content found on Facebook, the world's largest social network has published a guide revealing how it deals with user-submitted reports.

For starters, Facebook asserts that it handles reports around the clock, with hundreds of employees spread across offices worldwide "to ensure that a team of Facebookers are handling reports at all times."

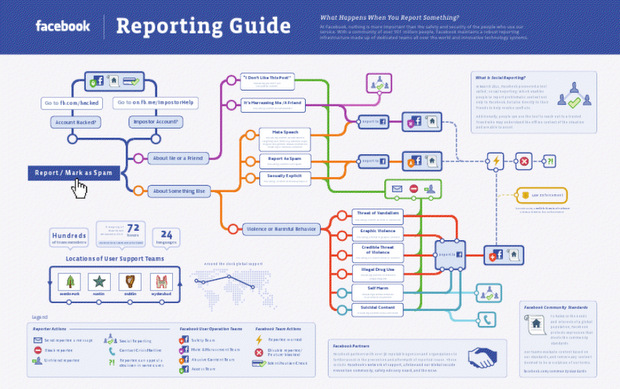

Beyond that, we now have the guide's flow chart to reference for how those reports are addressed.

Facebook's User Operations unit is divided into four teams, and a report is routed to one of them depending on what is reported, whether spam or worse: safety, hate and harassment, access issues, and abusive content.

From there, a few things could happen. If the content is found to violate Facebook policies, it would be removed and the user who posted it would be warned.

But if it's really, really bad (e.g. explicit photos, impostor accounts, or offensive content), the report could escalate all the way up to being passed on to law enforcement agencies. This isn't out of the ordinary by any means, as most major search and social networking companies already have infrastructure like this in place. But Facebook is taking the opportunity to be more transparent by presenting this guide as a simple flow chart that all users should be able to comprehend.

Facebook has a few more motives for publishing this guide. First of all, it should remind users not to be too casual about what content they report to Facebook as harmful. But it is also meant to reassure the 900 million and counting users that Facebook is taking their reports seriously.

Chart via Facebook
