How to make your website really, really fast

Steve Souders knows how to make a website speed through a web browser.

And he works at Google, one of the fastest websites around.

Web performance is a two-pronged beast: efficiency and response time. Efficiency deals with the scalability challenges of building a top-100 global website. You have millions of users and billions of page views, and it's awe-inspiring to understand the full scope of the backend architecture of something that large.

Response time is a different story. The instructions the HTML document gives the browser (which resources to fetch, and in what order) largely determine how fast the page loads.

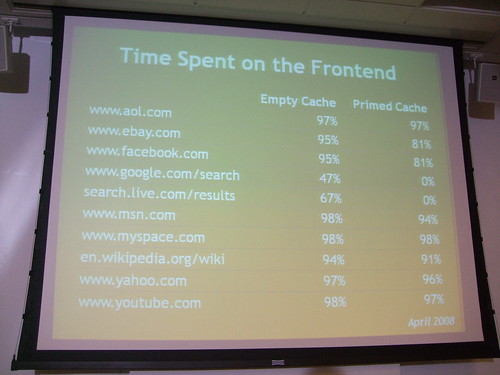

On iGoogle, for example, only 17% of the load time goes to backend, non-cached data that has to be requested each time. The rest is front-end processing.

80-90% of end-user response time is spent on the front end. Start there when you want to figure out how to make your site faster.

If you can cut the front-end time in half, your users will notice. The front end offers greater potential for improvement, and its fixes tend to be simpler than performance tweaks on the backend (which are still worth making).
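A quick back-of-the-envelope calculation shows why the front end is the better place to start. It assumes a hypothetical 1,000 ms page load with the iGoogle-style split (17% backend, 83% front end); the numbers are illustrative, not measurements.

```python
# Where does halving the work pay off most?
# Assumption: a hypothetical 1000 ms page load, 17% backend / 83% front end.
TOTAL_MS = 1000
BACKEND_SHARE = 0.17


def savings_ms(total_ms: float, share: float, speedup: float = 0.5) -> float:
    """Milliseconds saved by cutting `share` of the load time by `speedup`."""
    return total_ms * share * speedup


backend_saving = savings_ms(TOTAL_MS, BACKEND_SHARE)       # 1000 * 0.17 * 0.5
frontend_saving = savings_ms(TOTAL_MS, 1 - BACKEND_SHARE)  # 1000 * 0.83 * 0.5

print(f"Halve the backend:   save {backend_saving:.0f} ms")   # save 85 ms
print(f"Halve the front end: save {frontend_saving:.0f} ms")  # save 415 ms
```

The exact split varies from site to site, but as long as most of the time sits on the front end, the same effort buys several times more there.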

14 tips for performance

- Make fewer HTTP requests

- Use a CDN

- Add an Expires header

- Gzip components

- Put stylesheets at the top

- Put scripts at the bottom

- Avoid CSS expressions

- Make JS and CSS external

- Reduce DNS lookups

- Minify JS

- Avoid redirects

- Remove duplicate scripts

- Configure ETags

- Make AJAX cacheable
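Three of these rules (Add an Expires header, Gzip components and Configure ETags) come down to the response headers your server sends for static files. Below is a minimal sketch assuming a hypothetical `static_response()` helper that your web framework would call for each asset, with request header names in canonical casing. The one-year lifetime and the SHA-256-based ETag are illustrative choices, not values from the talk.

```python
from __future__ import annotations

import gzip
import hashlib
from datetime import datetime, timedelta, timezone
from email.utils import format_datetime

ONE_YEAR = timedelta(days=365)  # illustrative far-future lifetime


def static_response(body: bytes, request_headers: dict,
                    now: datetime | None = None) -> tuple[int, dict, bytes]:
    """Build (status, headers, payload) for a static asset (hypothetical helper)."""
    now = now or datetime.now(timezone.utc)

    # Configure ETags: derive the validator from the file's content rather than
    # server-specific data, so every server in a cluster sends the same value.
    # It is weak (W/) because one ETag covers both gzipped and plain bytes.
    etag = 'W/"%s"' % hashlib.sha256(body).hexdigest()[:16]

    headers = {
        # Add an Expires header: a far-future date (plus the HTTP/1.1
        # Cache-Control equivalent) lets browsers reuse the file without asking.
        "Expires": format_datetime(now + ONE_YEAR, usegmt=True),
        "Cache-Control": "public, max-age=%d" % int(ONE_YEAR.total_seconds()),
        "ETag": etag,
        "Vary": "Accept-Encoding",
    }

    # Conditional request: the browser's cached copy is still current.
    if request_headers.get("If-None-Match") == etag:
        return 304, headers, b""

    # Gzip components: compress only when the client advertises support.
    if "gzip" in request_headers.get("Accept-Encoding", ""):
        body = gzip.compress(body)
        headers["Content-Encoding"] = "gzip"

    headers["Content-Length"] = str(len(body))
    return 200, headers, body
```

A first visit gets a 200 with a gzipped body; a revalidation that sends back the ETag in `If-None-Match` gets an empty 304.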

YSlow is a Firebug extension that analyzes your pages, finds the slow parts and grades them against the 14 rules above.

O'Reilly Velocity is a web performance and operations conference co-founded by Souders and Tim O'Reilly. There should be some really good talks this year.

Souders also taught a class at Stanford called High Performance Web Sites.

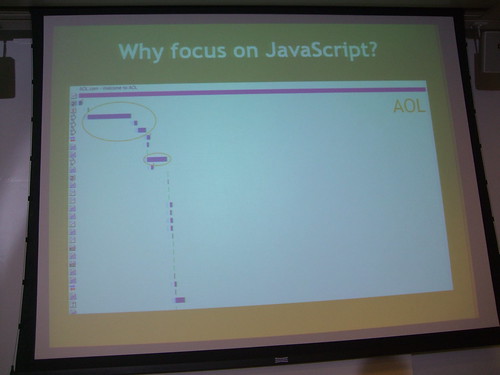

Why focus on JavaScript? Scripts have a huge impact on page load time.

On AOL, about five scripts account for roughly 60 to 80% of the load time.

Facebook has about a megabyte of JavaScript.

In many browsers, external scripts download one at a time and block other downloads, even after the HTML document itself has arrived. The browser also won't render anything below a script until that script has finished downloading and executing.
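A toy model makes the cost concrete. The sketch assumes made-up download times and compares fetching scripts one after another with fetching them all at once; it ignores connection limits and bandwidth, which real waterfalls also involve.

```python
from __future__ import annotations


def sequential_finish(durations: list[float]) -> float:
    """Blocking: each script starts only after the previous one finishes."""
    return sum(durations)


def parallel_finish(durations: list[float]) -> float:
    """Non-blocking: all scripts start together; we wait for the slowest."""
    return max(durations, default=0.0)


scripts = [300, 250, 400, 150, 200]  # hypothetical ms for five scripts
print(sequential_finish(scripts))  # 1300
print(parallel_finish(scripts))    # 400
```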

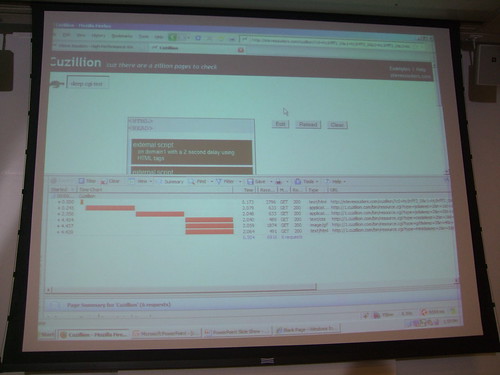

Cuzillion is a tool for quickly building test pages to see how components such as scripts, stylesheets and images interact in the browser.

HttpWatch is his preferred HTTP sniffer.

If you can split the JavaScript into what's needed to render the page and "everything else", you will dramatically improve your page load time. Microsoft has a whitepaper on doing this automatically with a tool called Doloto. Look at the source code of MSN.com to see how they do it.
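The core idea of the split fits in a few lines. This is not how Doloto works internally; it is a simplified illustration that assumes you have already recorded which functions run before the page renders (for example, by profiling a page load). The function names and sources are hypothetical.

```python
from __future__ import annotations


def split_bundle(functions: dict[str, str],
                 used_at_startup: set[str]) -> tuple[str, str]:
    """Partition JS function sources into a render-critical and a deferred bundle.

    `functions` maps a function name to its JavaScript source text (dict order
    is kept, so each bundle preserves the original definition order);
    `used_at_startup` holds the names observed running before first render.
    """
    critical = [src for name, src in functions.items() if name in used_at_startup]
    deferred = [src for name, src in functions.items() if name not in used_at_startup]
    return "\n".join(critical), "\n".join(deferred)


# Hypothetical page code: only drawPage runs before the first render.
functions = {
    "drawPage": "function drawPage() { /* builds the visible page */ }",
    "openSettings": "function openSettings() { /* runs on click */ }",
    "sendFeedback": "function sendFeedback() { /* runs on submit */ }",
}
critical_js, deferred_js = split_bundle(functions, {"drawPage"})
# critical_js ships with the page; deferred_js can be fetched after onload.
```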

But even if you split the initial page load, you will still have external scripts that affect your page.

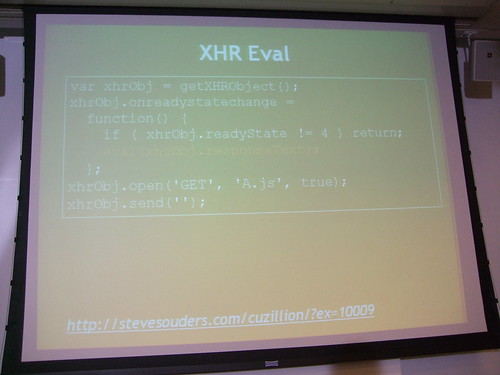

There are several ways to make scripts download in parallel. XHR eval (fetching a script with XMLHttpRequest and running it with eval()) is one option, but it opens you up to XSS attacks, and every script must come from the same domain as the page.

Putting a script in an iframe causes the JS to be downloaded in parallel with other resources on the page. You can also use the DOM: create a script element with createElement, set its src, and append it to the head element.

Try `<script defer src="file.js">`. This works in IE and FF 3.1, but it's not the best method. On the plus side, the script can live on a different domain and you don't have to refactor your code.

Don't even use the document.write method. It's terrible for many reasons.

Show busy indicators whenever the user needs feedback. Lazy-loading code makes for a clunky experience, but at least the user should know the page isn't done yet.

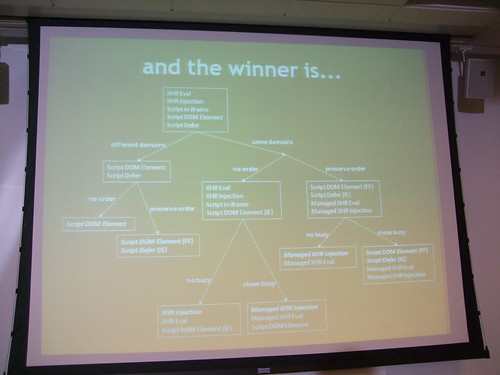

Ask yourself three questions:

- What's the URL of the script?

- Do I want to trigger busy signals?

- Does this script have to be executed in order or not?

Sometimes the user is waiting for their inbox to load, and you need the scripts to load in order. Other times it won't matter.
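Even when order matters, downloads can still happen in parallel; only execution has to wait. The sketch below assumes hypothetical finish times for three scripts requested at the same moment and computes when each may run if they must execute in document order. It models the scheduling idea only, not any particular browser or loader.

```python
from __future__ import annotations


def ordered_exec_times(finish_ms: list[float], run_ms: float = 0.0) -> list[float]:
    """Earliest start time for each script when execution must follow list order.

    finish_ms[i] is when script i finished downloading; run_ms is how long each
    script takes to execute (assumed constant to keep the model simple).
    """
    starts: list[float] = []
    ready_at = 0.0  # when the previous script finished executing
    for done in finish_ms:
        start = max(done, ready_at)  # need our own bytes AND our predecessor
        starts.append(start)
        ready_at = start + run_ms
    return starts


# Script 1 arrives first, but it still waits for script 0 to arrive and run.
finish = [300.0, 120.0, 250.0]
print(ordered_exec_times(finish))             # [300.0, 300.0, 300.0]
print(ordered_exec_times(finish, run_ms=10))  # [300.0, 310.0, 320.0]
```

Without the ordering constraint, each script could simply run at its own finish time (120 ms, 250 ms, 300 ms), which is why it pays to ask whether order really matters.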

The best part: none of these techniques are that hard to implement.

Don't let scripts block other downloads either.

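Stylesheets download in parallel with other resources, but if a stylesheet is followed by an inline script, parallel downloads are broken: the browser waits for the stylesheet before running the script, and downloads after the script wait too.

Also, use `<link>` instead of `@import`.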
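Both problems are easy to spot mechanically. Here is a rough lint built on Python's standard `html.parser`; the class name, `lint()` helper and warning messages are hypothetical. It only inspects static markup, so it misses anything added by JavaScript, and it conservatively flags every inline script after a stylesheet, even harmless ones at the end of the page.

```python
from __future__ import annotations

from html.parser import HTMLParser


class LoadOrderLint(HTMLParser):
    """Flags @import in <style> blocks and inline scripts after stylesheets."""

    def __init__(self) -> None:
        super().__init__()
        self.warnings: list[str] = []
        self._seen_stylesheet = False
        self._in_style = False

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        rel = (attrs.get("rel") or "").lower().split()
        if tag == "link" and "stylesheet" in rel:
            self._seen_stylesheet = True
        elif tag == "script" and "src" not in attrs and self._seen_stylesheet:
            self.warnings.append("inline <script> after a stylesheet may block downloads")
        elif tag == "style":
            self._in_style = True

    def handle_endtag(self, tag):
        if tag == "style":
            self._in_style = False

    def handle_data(self, data):
        if self._in_style and "@import" in data:
            self.warnings.append('@import in <style>; use <link rel="stylesheet"> instead')


def lint(html: str) -> list[str]:
    parser = LoadOrderLint()
    parser.feed(html)
    parser.close()
    return parser.warnings


page = """<html><head>
<link rel="stylesheet" href="main.css">
<script>var t0 = new Date();</script>
<style>@import url("extra.css");</style>
</head><body><img src="logo.png"></body></html>"""
for warning in lint(page):
    print(warning)
```

Moving the inline script above the `<link>` and replacing the `@import` with a second `<link>` clears both warnings.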

Here is a link to Souders' UA Profiler. It charts how each browser handles the features that matter for fast-loading pages. Or, as Souders puts it, it's a "community-driven project for gathering browser performance characteristics".

He also built something called Hammerhead, which adds a little tab to Firebug that tells you the load time of the page. It clears the cache between loads, and you can compare websites side by side too.

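HTTP 1.1 supports chunked transfer encoding, so the server can send the top of the HTML document before the rest is ready. Your browser can un-gzip even a partial HTML document and start parsing it, and fetching the stylesheets it references, before the rest of the page arrives. CNET.com does this.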
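Here is a sketch of the server side, assuming a hypothetical page split into a head and slower body parts, and a response sent with `Transfer-Encoding: chunked` and `Content-Encoding: gzip`. It compresses with `zlib` in gzip mode and uses `Z_SYNC_FLUSH` after each piece, so every chunk ends on a decodable boundary; the function names are illustrative.

```python
from __future__ import annotations

import zlib


def http_chunk(data: bytes) -> bytes:
    """Frame bytes as one HTTP/1.1 chunk: hex length, CRLF, data, CRLF."""
    return b"%x\r\n%s\r\n" % (len(data), data)


def stream_page(head_html: str, body_parts):
    """Yield gzip-compressed, chunk-framed pieces of an HTML page."""
    gz = zlib.compressobj(wbits=31)  # wbits=31 selects the gzip container
    # Send the <head> immediately. Z_SYNC_FLUSH emits everything compressed so
    # far, so the browser can decode it and start fetching CSS and scripts.
    yield http_chunk(gz.compress(head_html.encode()) + gz.flush(zlib.Z_SYNC_FLUSH))
    for part in body_parts:  # e.g. markup produced after slow backend calls
        out = gz.compress(part.encode()) + gz.flush(zlib.Z_SYNC_FLUSH)
        if out:
            yield http_chunk(out)
    yield http_chunk(gz.flush())  # finish the gzip stream (final block, trailer)
    yield b"0\r\n\r\n"            # zero-length chunk ends the response
```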

Optimize images with smush.it.

IE7 opens only two connections per hostname, or four if the server speaks HTTP 1.0.
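The connection limit is simple arithmetic. Assuming equal-sized files and ignoring everything else on the wire, N files from one hostname need about ceil(N / connections) waves of downloads. The `hosts` parameter is an illustrative extra showing that spreading files over more hostnames raises the cap, at the cost of the extra DNS lookups the rules above warn about.

```python
import math


def download_rounds(n_files: int, conns_per_host: int = 2, hosts: int = 1) -> int:
    """Waves of downloads needed if every file takes the same time (a simplification)."""
    return math.ceil(n_files / (conns_per_host * hosts))


print(download_rounds(10))                    # 5  (two connections, one hostname)
print(download_rounds(10, conns_per_host=4))  # 3  (four connections, HTTP 1.0)
print(download_rounds(10, hosts=2))           # 3  (two connections each, two hostnames)
```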

Takeaways

Focus on the front end. Many front-end engineers are learning on the job, kind of teaching themselves. It's an under-represented but critical part of the web community. Everything is going JavaScript. It's the most painful thing to deal with on the page, and we need to identify and adopt some best practices in that space.

Speed matters. If you are waiting, you get bored and frustrated. When Google's results got 500ms slower, traffic dropped 20%. When Yahoo's pages got 400ms slower, full-page traffic dropped 5-9%. Amazon ties every 100ms of latency to a 1% loss in sales. A faster page has an impact on revenue and cost savings.
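To turn the Amazon figure into a rough estimate for your own site, here is a tiny calculator. It assumes, purely for illustration, that the 1%-per-100ms relationship scales linearly, and it uses a made-up revenue figure; real sites should measure their own curve.

```python
def revenue_at_risk(annual_revenue: float, added_latency_ms: float,
                    loss_per_100ms: float = 0.01) -> float:
    """Estimated yearly sales lost to extra latency, assuming a linear effect."""
    return annual_revenue * loss_per_100ms * (added_latency_ms / 100)


# Hypothetical store doing $10M a year whose pages get 300 ms slower:
print(f"${revenue_at_risk(10_000_000, 300):,.0f}")  # $300,000
```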

Here is a link to Steve's presentation »

Souders wrote High Performance Web Sites in 2007.