Machine learning: Mainstream tools for your business

We're living in a sea of information, bathing in a firehose of data from across our businesses. We know we need to use it, but the question is how? How can we get value from that information, and at the same time get ready for the faster, bigger, deeper streams that are going to come from tomorrow's Internet of Things?

While analytics allow us to gain insights from what happened in the past, we need ways of predicting what's going to happen based on the information we're getting. Is that customer a fraudster? Will the compressor on a refrigerated delivery truck fail today or next week? Will there be a lot of traffic on my usual route home tonight? What did my colleague in Shanghai say over that Skype call just now?

They're all common questions that appear to have no answer. But if we take advantage of the data we have and the data we're getting, along with the masses of data in the wider world, we can get reasonably accurate answers. That's where machine learning (ML) comes into play: a set of technologies that can offer predictive analytics to help you make the right business decision -- and often, to automate the process. In many cases, of course, you're already using machine-learning tools without realising it: in the autocorrecting keyboard on your smartphone or tablet; in Siri and Cortana's speech recognition; every time you scan and OCR a document; and when you read your Facebook feed, for example.

But the real benefit comes when you build machine learning into your own tools and applications, taking advantage of the cloud-scale services currently available from Microsoft, Google, IBM, and Amazon. Then there's the option of rolling your own tooling with the open-source tools that Facebook's AI Research group uses to explore how textual analysis can infer context. This approach can help with identifying mood in email messages, for example, which is useful when you're building customer relations systems.

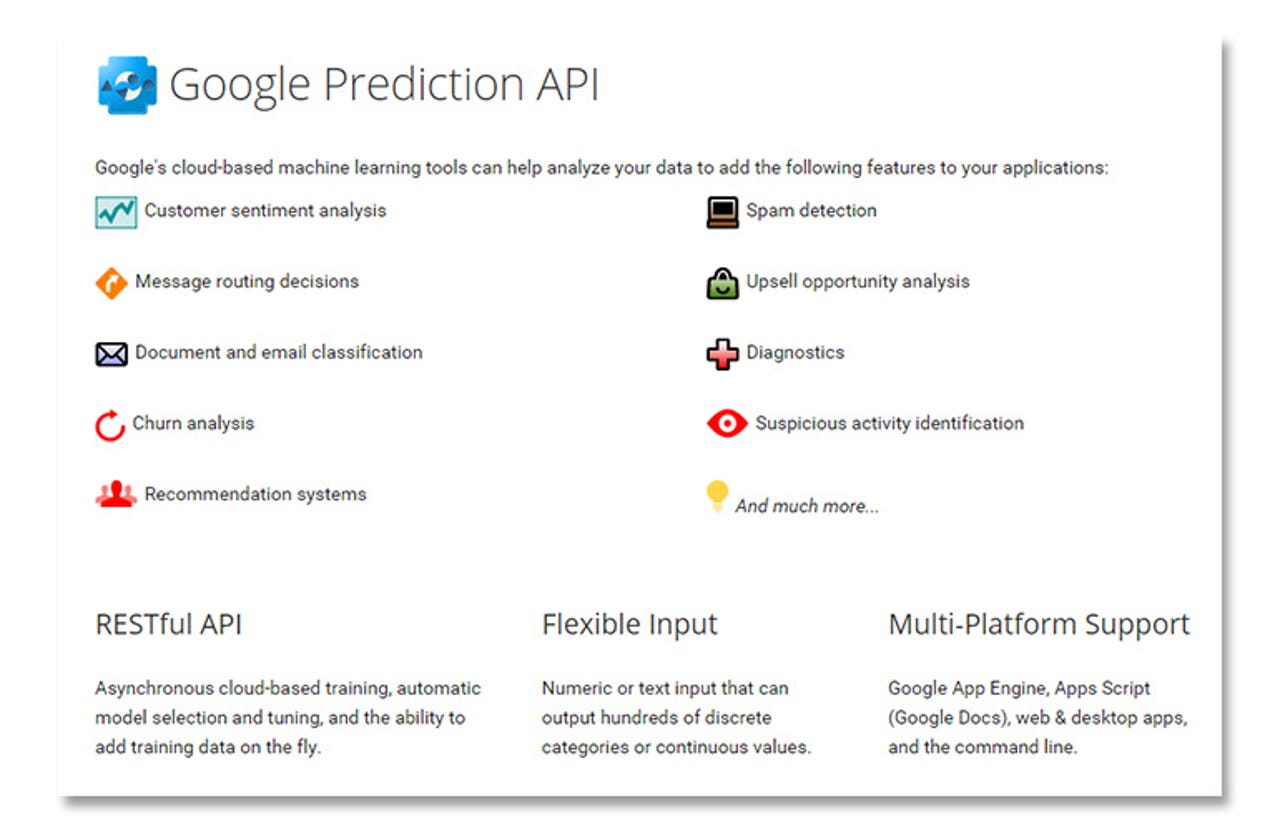

Google

One of the first public cloud-scale ML systems was Google's Prediction API. Designed to predict trends based on large amounts of historical data, it's a fast and relatively cheap way of getting insights from your data. As with all ML systems, the key to getting good results from your data is having a good model to start with. That means having a large sample set in your training data (in this case up to 2.5GB), plus a well-designed column structure for the data you're using, which needs to be replicated in your queries. To speed things up, there's also support for the standard Predictive Model Markup Language (PMML), so you can bring models from on-premises machine learning and analytic tools to the cloud.
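The point about queries replicating the training data's column structure can be sketched in a few lines of Python. This is only an illustration, not Google's client library: the column names, the sample rows, and the `validate_query` helper are all hypothetical, and the sketch assumes the first column holds the value to be predicted.

```python
# Hypothetical column layout: the first column is the value to predict,
# the remaining columns are the features used to predict it.
TRAINING_COLUMNS = ["label", "amount", "country", "hour_of_day"]

# Made-up training rows that follow the layout above.
training_rows = [
    ["fraud", 950.0, "US", 3],
    ["ok", 12.5, "GB", 14],
    ["ok", 40.0, "US", 10],
]


def validate_query(query, columns=TRAINING_COLUMNS):
    """Check that a query supplies every feature column, in training order.

    A query carries no label (that is what we want predicted), so it must
    have exactly one value fewer than a training row.
    """
    feature_columns = columns[1:]
    if len(query) != len(feature_columns):
        raise ValueError(
            f"expected {len(feature_columns)} values "
            f"({', '.join(feature_columns)}), got {len(query)}"
        )
    # Pair each value with its column name so mistakes are easy to spot.
    return dict(zip(feature_columns, query))


query = validate_query([700.0, "US", 2])
print(query)
```

A query with a missing or extra column raises an error before it is ever sent, which is cheaper than discovering the mismatch from a poor prediction.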

The Prediction API is designed to take advantage of the rest of Google's cloud services, working with its BigQuery and Cloud Storage platforms. There's also support for it in Google's PaaS App Engine developer tools. With a free six-month trial, you can start to build predictive applications relatively quickly -- although you're limited to 100 predictions a day and only 5MB of training data a day. In production it's still relatively cheap, with 1000 predictions costing just 50 cents.
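To see what those figures mean for a real workload, here is a rough cost calculation in Python. It assumes the quoted 50 cents per 1000 predictions is the only charge and ignores any other fees; the daily workload is a made-up number.

```python
PRICE_PER_1000_PREDICTIONS_USD = 0.50  # production price quoted above
FREE_TRIAL_DAILY_LIMIT = 100           # predictions per day on the free trial


def prediction_cost_usd(predictions):
    """Cost of a number of predictions at the quoted production price."""
    return predictions / 1000 * PRICE_PER_1000_PREDICTIONS_USD


def fits_free_trial(predictions_per_day):
    """True if a daily workload stays within the free-trial prediction limit."""
    return predictions_per_day <= FREE_TRIAL_DAILY_LIMIT


daily_predictions = 20_000  # hypothetical workload
print(fits_free_trial(daily_predictions))           # False
print(prediction_cost_usd(daily_predictions))       # 10.0 (dollars per day)
print(prediction_cost_usd(daily_predictions * 30))  # 300.0 (dollars per 30 days)
```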

Google provides a library of hosted prediction models to help you get started. These include tools to help you identify languages and understand social media sentiment. Because these models are pre-trained, you can use them to write your first Prediction API apps before you've trained a model of your own. A REST API means it's easy to include the Prediction API in your applications, whether they're running on Google's servers or in your data center.
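Calling a hosted model over REST usually comes down to posting a small JSON document. The Python sketch below builds such a request with the standard library but does not send it. The endpoint URL, the payload shape, and the token are placeholders invented for illustration, not the Prediction API's actual contract, so check the service documentation for the real values.

```python
import json
import urllib.request

# Placeholder endpoint: substitute the real URL of the model you are calling.
ENDPOINT = "https://example.com/prediction/models/sentiment/predict"


def build_prediction_request(features, token="YOUR_ACCESS_TOKEN"):
    """Build (but do not send) a JSON POST request for one prediction.

    The {"input": {"features": [...]}} body is a hypothetical shape.
    """
    body = json.dumps({"input": {"features": features}}).encode("utf-8")
    return urllib.request.Request(
        ENDPOINT,
        data=body,
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {token}",
        },
        method="POST",
    )


request = build_prediction_request(["I love this product"])
print(request.get_method(), request.full_url)
print(request.data.decode("utf-8"))
# To send it, pass the request to urllib.request.urlopen() and
# decode the JSON response the service returns.
```

Because the request is plain HTTP and JSON, the same call works from any platform that can reach the endpoint, which is what makes these services easy to use from your own data center.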

Microsoft

Microsoft's Azure Machine Learning platform takes things a lot further, building on the frameworks developed by Microsoft Research's machine learning scientists. Again taking a data-driven approach to application development, the Azure ML tools are designed to help extract patterns from your data, using a series of different algorithms that can be mixed and matched to provide the learning tools your problem needs. A modular architecture means it's easy for Microsoft to add additional ML tools in the future, without affecting the code you're using.

At the heart of the service is Machine Learning Studio, which helps you design your machine learning system. It provides tools for preprocessing and preparing learning sets and live data, exploring the data and building a model, and testing the model before deploying it in the Azure cloud. Finally, it gives you the APIs you'll need to call the model from your code. ML Studio gives you a drag-and-drop visual programming environment in your browser, with the option of adding your own code modules alongside Azure ML's set of ML algorithms. Preprocessing tools in Azure ML include the ability to fill in missing values in your data, as well as mathematical preprocessing in languages like R.
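Filling in missing values is the kind of preprocessing step that's easy to picture in code. The Python sketch below replaces gaps in a column with the mean of the values that are present. It illustrates the general technique rather than Azure ML's own module, and the temperature readings are invented.

```python
from statistics import mean


def fill_missing_with_mean(values):
    """Replace None entries with the mean of the values that are present."""
    present = [v for v in values if v is not None]
    if not present:
        raise ValueError("cannot impute a column with no observed values")
    column_mean = mean(present)
    return [column_mean if v is None else v for v in values]


# Hypothetical sensor readings with two gaps.
temperatures = [4.0, None, 5.0, 6.0, None]
print(fill_missing_with_mean(temperatures))  # [4.0, 5.0, 5.0, 6.0, 5.0]
```

Mean substitution is only one option. Depending on the data, the median or a dedicated "missing" marker may be a better fit.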

Azure ML's experiment option lets you try out different learning algorithms, building candidate models that can be used with a subset of the training data to ensure that appropriate results are produced. You can choose different algorithms for different types of problem, with support for classification, regression, and clustering problems. Once built and tested, models can be tuned to help control the number of false positives and negatives produced.
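Tuning for false positives and false negatives usually means moving the score threshold at which a prediction counts as positive. The Python sketch below shows that trade-off on a handful of made-up scores and labels. It is a generic illustration, not Azure ML's tuning tool.

```python
def confusion_counts(scores, labels, threshold):
    """Count outcomes when scores at or above the threshold are flagged positive."""
    counts = {"tp": 0, "fp": 0, "tn": 0, "fn": 0}
    for score, is_positive in zip(scores, labels):
        predicted_positive = score >= threshold
        if predicted_positive and is_positive:
            counts["tp"] += 1   # correctly flagged
        elif predicted_positive:
            counts["fp"] += 1   # false alarm
        elif is_positive:
            counts["fn"] += 1   # missed case
        else:
            counts["tn"] += 1   # correctly ignored
    return counts


# Hypothetical model scores and the true outcomes they should predict.
scores = [0.95, 0.80, 0.65, 0.40, 0.30, 0.10]
labels = [True, True, False, True, False, False]

for threshold in (0.3, 0.5, 0.7):
    print(threshold, confusion_counts(scores, labels, threshold))
```

On this sample, a threshold of 0.3 misses nothing but raises two false alarms, while 0.7 raises no false alarms but misses one real case. Which end you favour depends on what each kind of mistake costs your business.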

Finalised Azure ML code can be exposed to the rest of your applications, using a standard set of APIs. Apps can work with streamed data, using Azure Event Hubs, or with data sets from other applications, and will scale with demand as they run on the Azure compute fabric. There's even an option to sell models in the Azure Marketplace, giving you an additional revenue stream.

Amazon

It's no surprise to learn that Amazon runs its own machine learning systems, which are used to drive its fraud detection and recommendation services, among others. The same code is now available on AWS, as the Amazon Machine Learning service. Like Azure ML, it offers a visual tool for building models, along with tooling for managing your data.

Amazon ML works on data stored on Amazon's various storage services, including its S3 storage buckets and its Redshift cloud data warehouse. Training data sets are brought in, allowing you to choose appropriate schema and targets. The learning model is chosen based on the target data type, and the service then automatically builds the model from your data set. Although options are available to tune models, it's best to start with the defaults. Testing is handled by a 70-30 split of your training data, with 30 percent reserved for testing. Test results show not just the outcome, but also how the various inputs contributed to it. Once complete, a model can be used for batch predictions on data sets, or for continuous predictions on a real-time data feed.
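The 70-30 split is simple to reproduce if you want to experiment outside the service. The Python sketch below holds back 30 percent of a data set for testing. Shuffling before the split, and the `split_70_30` helper itself, are assumptions of this example rather than a description of how Amazon ML divides your data.

```python
import random


def split_70_30(rows, seed=0):
    """Return (training, testing) lists holding roughly 70% and 30% of the rows."""
    shuffled = list(rows)
    # A seeded shuffle keeps the split repeatable between runs.
    random.Random(seed).shuffle(shuffled)
    cut = len(shuffled) * 7 // 10  # integer arithmetic avoids rounding surprises
    return shuffled[:cut], shuffled[cut:]


records = list(range(10))  # stand-in for ten rows of training data
training, testing = split_70_30(records)
print(len(training), len(testing))  # 7 3
```

The model is built only from the training portion, so its performance on the held-back rows gives an estimate of how it will behave on data it hasn't seen.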

Both options have APIs, so you can call them from your own code. While Amazon ML isn't yet available as a free trial, it's cheap enough to use in development, and robust enough for production. Once a model has been built, you can use the service's graphical tools to tune for false positives and false negatives, in line with your business needs. Amazon provides a set of SDKs for most common development environments and platforms to simplify building apps that work with its ML tooling.

IBM

IBM's Watson is perhaps best known for its TV quiz show appearances, but IBM Research has been moving it to IBM's Bluemix cloud platform, with a range of what IBM calls 'cognitive services'. Focusing more on prebuilt solutions than on free-form machine learning tools, the Watson Developer Cloud gives you endpoints where you can include its services in your applications -- quickly adding natural-language tools, or analytics and recognition tools.

Each service in the Watson Developer Services catalogue gives you a quick link to the service API, as well as sample code and a live demo. Applications are built using Bluemix's tooling, either on the web or in the Eclipse IDE. A set of command-line tools lets you include Watson APIs in Cloud Foundry as well. Node.js tools also make it easier to include Watson services in your microservice web applications.

Watson's task focus makes it less flexible than other machine learning services, but you do get a quick on-ramp that simplifies the process of building AI techniques into your code.

If you want a very different approach to machine learning, with a focus on natural language and text analysis, you can now take advantage of work done by the Facebook AI Research (FAIR) group. FAIR uses the open-source Torch machine learning platform, and has been extending its use of convolutional neural nets, using GPU computing to speed up the process of building and running them. Torch is very much an academic AI tool, but it can help you design tooling that can quickly analyse images and text, and extract meaning from unstructured data.

FAIR's open-source tooling can be compared with initiatives like Microsoft Research's Project Adam image recognition deep learning project -- technologies focused on delivering the next big bang in AI. We're already seeing the fruits of this in services like Skype Translator, with real-time speech recognition and translation, and you can start to use tools like this with Microsoft Research's Project Oxford APIs. Here you'll get access to face detection, speech recognition, and computer vision (with an invite-only natural language service).

Outlook

Machine learning technologies have quickly become mainstream tools, building on the compute capabilities of cloud services and the API-based service development model. RESTful APIs mean they're easy to add to your applications, and integration with common cloud storage platforms makes them easy to train -- and cloud pricing models mean they're surprisingly economical to use, too. With tools like these, adding predictive analytics to your applications makes sense -- especially if you're focusing on personalisation or on identifying outlying events.

What seemed like science fiction just a few years ago is now commonplace and easy to use. With AI research at the core of many companies' R&D, it's going to be interesting to see what comes next.