Scientists demonstrate mind-controlled cursor

Scientists have harnessed electrical signals in the speech centres of the brain to move a cursor horizontally across a screen.

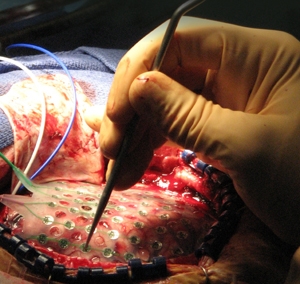

Test patients were able to move a cursor on a screen by thinking of or saying aloud different phonemes. Photo credit: Eric Leuthardt

The research, led by Dr Eric C Leuthardt at Washington University in St Louis, tested four patients who were undergoing monitoring for intractable epilepsy. The patients, who had sections of their skulls removed and electrodes placed on their brains, managed to move a cursor on a screen by thinking of or saying aloud different phonemes.

The speech monitoring technique could be used in conjunction with techniques that attach electrodes to the parts of the brain that control movement, Leuthardt told ZDNet UK on Friday.

"Early applications are a complementary control feature to motor-systems control: controlling a cursor on a screen, [or] controlling a robotic arm in 3D space," he said.

During the experiment, electrode arrays were placed on parts of the brain linked to speech (Wernicke's area and Broca's area) and to movement (the motor cortex). Signals from the brain were recorded and associated with different phonemes that the patients either said or imagined: 'oo', 'ah', 'eh' and 'ee'.

Spoken and imagined phonemes produced different signals, which were fed into a Dell computer running the BCI2000 software package. Patients were asked to move a cursor to a target position on the left or the right of a screen, and achieved target accuracies of between 68 and 91 percent, according to a paper published by the researchers in the Journal of Neural Engineering on Thursday.

The sensor arrays had 64 electrodes in an 8-by-8 matrix with a 10mm pitch. The patients took part in the experiment for a week, under 24-hour video monitoring. In addition, one of the patients had an experimental microarray with a 4-by-4 matrix of electrodes at a 1mm pitch.

Leuthardt said the next stage of the research would be to refine the system so that it recognises combinations of sounds and commands, and eventually meaning.

"We want to look at higher level speech decoding," Leuthardt said. "To start semantic decoding will be the main step. We want to work up the semantic tree. Could you truly have a conversation with your computer?"

The technique is designed for people who are quadriplegic (also known as tetraplegic) and will eventually become less invasive, said Leuthardt. The electrode arrays could be 'dime-sized', he said, but for now the skull has to remain open, with the brain exposed.