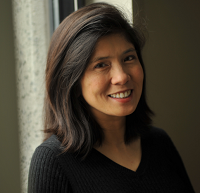

TechLines panelist profile: Archimedes' Katrina Montinola

Katrina Montinola's company both creates and consumes big data, and it builds and uses the tools needed to get the most out of that data for health care simulations.

Montinola, vice president of engineering for Archimedes, just completed a Hadoop implementation. To understand why Archimedes needs big data to thrive as a business, it helps to ponder health care, evidence-based medicine and simulating clinical trials for multiple medical decisions. Montinola will be a panelist at ZDNet's TechLines roundtable focused on big data Oct. 4 in New York City. If you're local, work in the big data market and are interested in attending, ping me via the contact form.

Archimedes has a simulator that takes various humans, along with all of their biomarkers, and examines what happens with cancer, diabetes and other illnesses under various care assumptions. These simulations (think SIMs for health care to some degree) can spit out data for 1 million people wrestling with a disease for two decades.

"We run trials on everything from diseases to drug policies and smaller decisions made by insurance companies," explained Montinola. "These trials weren't possible before. Today we can do those trials in software."

Montinola, who manages the software engineers behind Archimedes' simulator, had a major scalability problem. Archimedes could run a simulation in hours, but it took days to produce results. The problem: Archimedes would take large data sets and load them into the Postgres relational database. The bigger the data became, the more the relational database hit its scale limitations.

"Hadoop allows us to have a customer design a simulation, run it and view results in hours instead of days," she said.

As for big data, "we can't get away from it," said Montinola. "Our business is creating these big data sets and there's no choice but to process them with technologies like Hadoop and put them in forms our customers can use."

Going forward, Montinola reckons the story for Archimedes will become more interesting with new product launches based on the swelling river of big data. Among her key points:

- Big data feeds off itself. The more data you collect, the more potential there is to glean insight. "If the process is quick enough big data becomes more useful," said Montinola.

- The challenge is finding your way through all that data. As in a house full of pack rats, you can save a lot of stuff and find nothing.

- Storage is an ongoing issue. "We can give our scientists more storage and they just fill it up. They fill up whatever we give them based on 'you never know when we may need it,'" she said. "Storing the data is one thing, but you need a system to find everything when you need it. It's a constant battle on the IT side." Montinola offers the flip side to Ford's Michael Cavaretta, who wants to store everything.

- Recruiting talent in big data requires a nose for people who can understand how scientists think as well as how engineers work. "The people who have engineering backgrounds and can grasp some of the mathematics are very sought after," said Montinola.