Fighter pilots, periscopes and device software optimisation

You’ve got to admit it: nothing numbs the brain quite like terms such as device software optimisation, or DSO to those in the know. It sounds like one of those vendor-created terms designed to sell us something we already have the capability for, or can already do with our existing toolset.

So why do we need it? We already have version control and change management software to help re-engineer desktop software for ‘devices’ in their various forms. And if the software is being developed for a device from its first iteration, why should it need optimising in some special way?

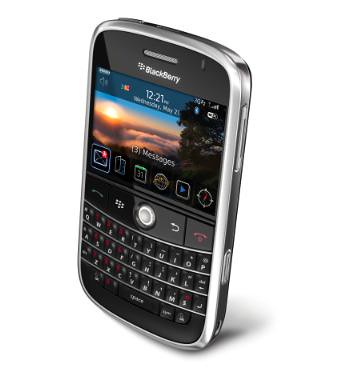

Palm and the BlackBerry gang at Research in Motion have been telling us for years about the various challenges of developing for ‘devices’. You know the kind of thing: smaller screens, restricted user input, limited battery life, slower processors and so on. We already know that developing for these and other devices is different, so why do vendors like Wind River keep pushing the whole optimisation programme?

Image courtesy of: Research in Motion

According to Wind River, DSO now encompasses multi-core software development and virtualisation techniques. The argument is that virtualising hardware allows multiple operating environments to share the underlying processing cores, memory and other hardware resources. The company says that virtualisation gives device manufacturers the opportunity to reduce hardware costs and power consumption as they add new capabilities to existing devices.

I think the problem here is what we mean by the term ‘devices’. It’s not just mobile phones, PDAs and other handhelds. The kind of device Wind River’s toolset would be used for could be a head-up display in a fighter pilot’s helmet or some kind of monitor inside a submarine periscope. Basically, this is the stuff of mission-critical aerospace and defence applications.

Maybe it’s just the way the term is structured that makes it feel uncomfortable. Wouldn’t DSO be better as Optimisation Software for Devices? It’s too late now, I guess.