Google figures out how to make VR headsets vanish

No matter how good the virtual reality technology is, demonstrating its capabilities can often look farcical.

Demonstrators may prance around on a stage or in a booth, but unless interested consumers try on headsets for themselves, they cannot really experience the VR world on show.

Until now.

Google's research unit, together with Daydream Labs and YouTube Spaces, has developed a way for spectators to see a VR user's full face while that person is immersed in a virtual world.

By making the headset 'vanish,' as it were, Google and the tech giant's partners hope to give VR demonstrations a bit more dignity and immerse watchers more fully in the experience, giving them a "complete picture" of the VR world, according to a Google blog post.

On Tuesday, Google said that part of the disconnect between a VR headset user and onlookers can be bridged with what is known as "Mixed Reality," which combines the VR user's virtual surroundings with 2D video of the user, letting others experience something of that world.

However, VR headsets always hide the user's face, so important cues in a demonstration, such as facial expressions and emotion, are lost, which in turn lessens the impact a VR demo can have.

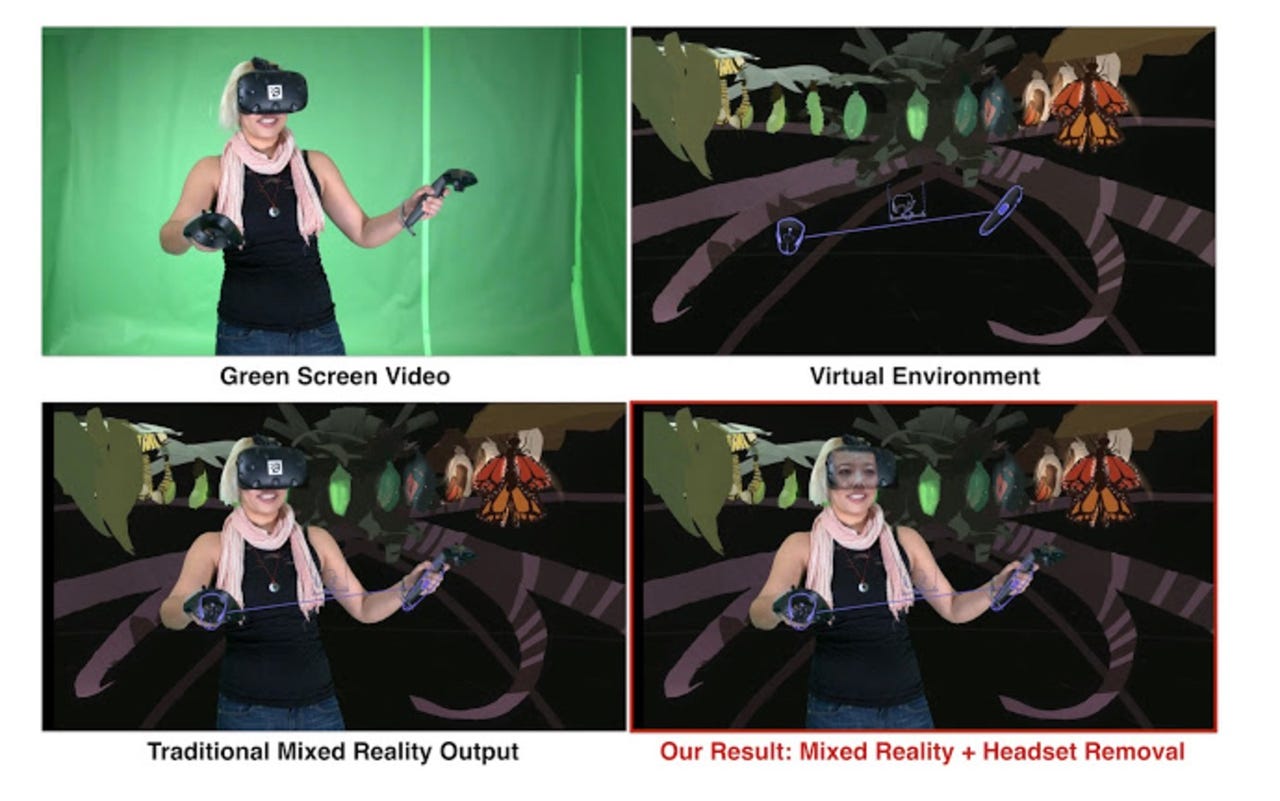

Using a combination of 3D vision, machine learning (ML) and graphics techniques, Google, Daydream Labs and YouTube Spaces have been working on solutions that "reveal the user's face by virtually 'removing' the headset and create a realistic see-through effect."

The technology consists of three main components. The first is what Google calls "Dynamic face model capture," which utilizes a 3D model of the user's face as a "proxy" for the hidden face. This proxy is then used to synthesize the face in the Mixed Reality video, which makes it appear that the headset is being removed.

To create the face model, Google uses what it calls "gaze-dependent dynamic appearance": the user sits in front of a monitor and follows a marker with their eyes. This calibration takes under a minute and produces a database that maps each gaze state to a matching face texture.

In the next stage, an external camera captures a video stream of the VR user in front of a green screen, and the 3D face model is aligned with that camera stream so the headset appears to have been removed.
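Shooting against a green screen is what makes it possible to separate the user from the background. As a rough illustration only (this is not Google's code), a simple chroma key might flag a pixel as backdrop when its green channel clearly dominates red and blue. The pixel format and the `threshold` value below are assumptions chosen for the example.

```python
# Minimal chroma-key sketch (illustrative only; not Google's implementation).
# Assumption: a frame is a list of rows of (R, G, B) tuples with values 0-255,
# and a pixel is "backdrop" when green beats both red and blue by more than
# a hypothetical threshold.
from typing import List, Tuple

Pixel = Tuple[int, int, int]


def green_screen_mask(frame: List[List[Pixel]], threshold: int = 40) -> List[List[bool]]:
    """Return True for pixels that belong to the foreground (the user)."""
    mask = []
    for row in frame:
        mask_row = []
        for r, g, b in row:
            is_backdrop = g - max(r, b) > threshold
            mask_row.append(not is_backdrop)
        mask.append(mask_row)
    return mask


# Tiny 2x2 example: left column is green screen, right column is the user.
frame = [
    [(20, 200, 30), (180, 150, 140)],
    [(10, 250, 10), (90, 100, 95)],
]
print(green_screen_mask(frame))  # [[False, True], [False, True]]
```

Real keying has to handle lighting, green spill and soft edges, so a production pipeline would be considerably more involved.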

Finally, the composition is rendered and checked to make sure the face model is consistent with the content of the camera stream. Google says it was able to capture the "true eye-gaze of the user" by combining the gaze database with an HTC Vive headset equipped with eye-tracking technology.

"Using the live gaze data from the tracker, we synthesize a face proxy that accurately represents the user's attention and blinks," Google says. "At run-time, the gaze database, captured in the preprocessing step, is searched for the most appropriate face image corresponding to the query gaze state."
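The run-time search Google describes is, in essence, a nearest-neighbour lookup. The sketch below shows one way such a lookup could work. The gaze parameterisation (yaw, pitch, eye openness), the `blink_weight` factor and the texture names are all hypothetical, chosen to show how a blink can be matched alongside gaze direction. Google has not published these details here.

```python
# Hypothetical nearest-neighbour lookup in a gaze database (illustrative only).
# Each entry pairs a gaze state with an identifier standing in for a face image.
import math
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class GazeState:
    yaw_deg: float    # horizontal gaze angle (assumed parameterisation)
    pitch_deg: float  # vertical gaze angle
    openness: float   # 0.0 = eyes closed (blink), 1.0 = fully open


@dataclass(frozen=True)
class GazeEntry:
    gaze: GazeState
    texture_id: str   # placeholder for the captured face image


def distance(a: GazeState, b: GazeState, blink_weight: float = 30.0) -> float:
    # Scale openness so a blink outweighs a few degrees of gaze difference.
    return math.sqrt(
        (a.yaw_deg - b.yaw_deg) ** 2
        + (a.pitch_deg - b.pitch_deg) ** 2
        + (blink_weight * (a.openness - b.openness)) ** 2
    )


def lookup_face(database: List[GazeEntry], query: GazeState) -> str:
    """Return the stored face image whose gaze state is closest to the query."""
    best = min(database, key=lambda entry: distance(entry.gaze, query))
    return best.texture_id


db = [
    GazeEntry(GazeState(0, 0, 1.0), "center_open"),
    GazeEntry(GazeState(15, 0, 1.0), "right_open"),
    GazeEntry(GazeState(0, 0, 0.0), "blink"),
]
print(lookup_face(db, GazeState(12, 2, 0.9)))  # right_open
print(lookup_face(db, GazeState(1, 0, 0.1)))   # blink
```

A database built from a minute of calibration footage would hold many more entries. A real system might also use a spatial index rather than a linear scan, but the principle of matching the live gaze reading to the closest captured face is the same.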

The camera stream, displaying a see-through headset and the user's face and eye-gaze, is then merged with the VR environment to create the Mixed Reality video.
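The final merge can be pictured as two layering steps. First, the synthesised face is blended into the headset region with partial opacity, producing the see-through look. Second, wherever the green-screen mask marks backdrop, the VR scene shows through. The per-pixel `face_alpha` map and the flat pixel lists below are simplifying assumptions for illustration, not a description of Google's renderer.

```python
# Simplified two-layer composite (illustrative sketch, not Google's renderer).
# Assumptions: all frames are equal-sized lists of rows of (R, G, B) tuples;
# face_alpha gives a hypothetical per-pixel opacity for the synthesised face
# (0.0 outside the headset region, between 0 and 1 inside it).
from typing import List, Tuple

Pixel = Tuple[int, int, int]
Frame = List[List[Pixel]]


def blend(top: Pixel, bottom: Pixel, alpha: float) -> Pixel:
    # "Over" blend: alpha=1.0 shows only top, alpha=0.0 shows only bottom.
    r, g, b = (round(alpha * t + (1 - alpha) * u) for t, u in zip(top, bottom))
    return (r, g, b)


def composite(vr_frame: Frame, camera_frame: Frame, foreground_mask: List[List[bool]],
              face_frame: Frame, face_alpha: List[List[float]]) -> Frame:
    out = []
    for y, row in enumerate(camera_frame):
        out_row = []
        for x, cam_px in enumerate(row):
            # Step 1: reveal the synthesised face through the headset region.
            px = blend(face_frame[y][x], cam_px, face_alpha[y][x])
            # Step 2: keep the user where the mask says foreground, else show VR.
            if not foreground_mask[y][x]:
                px = vr_frame[y][x]
            out_row.append(px)
        out.append(out_row)
    return out


vr = [[(0, 0, 255), (0, 0, 255)]]
cam = [[(100, 100, 100), (10, 250, 10)]]
mask = [[True, False]]
face = [[(200, 150, 120), (0, 0, 0)]]
alpha = [[0.5, 0.0]]
print(composite(vr, cam, mask, face, alpha))  # [[(150, 125, 110), (0, 0, 255)]]
```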

However, Google says that the application of this technology can go far beyond Mixed Reality.

"Headset removal is poised to enhance communication and social interaction in VR itself with diverse applications like VR video conference meetings, multiplayer VR gaming, and exploration with friends and family," the firm says. "Going from an utterly blank headset to being able to see, with photographic realism, the faces of fellow VR users promises to be a significant transition in the VR world, and we are excited to be a part of it."