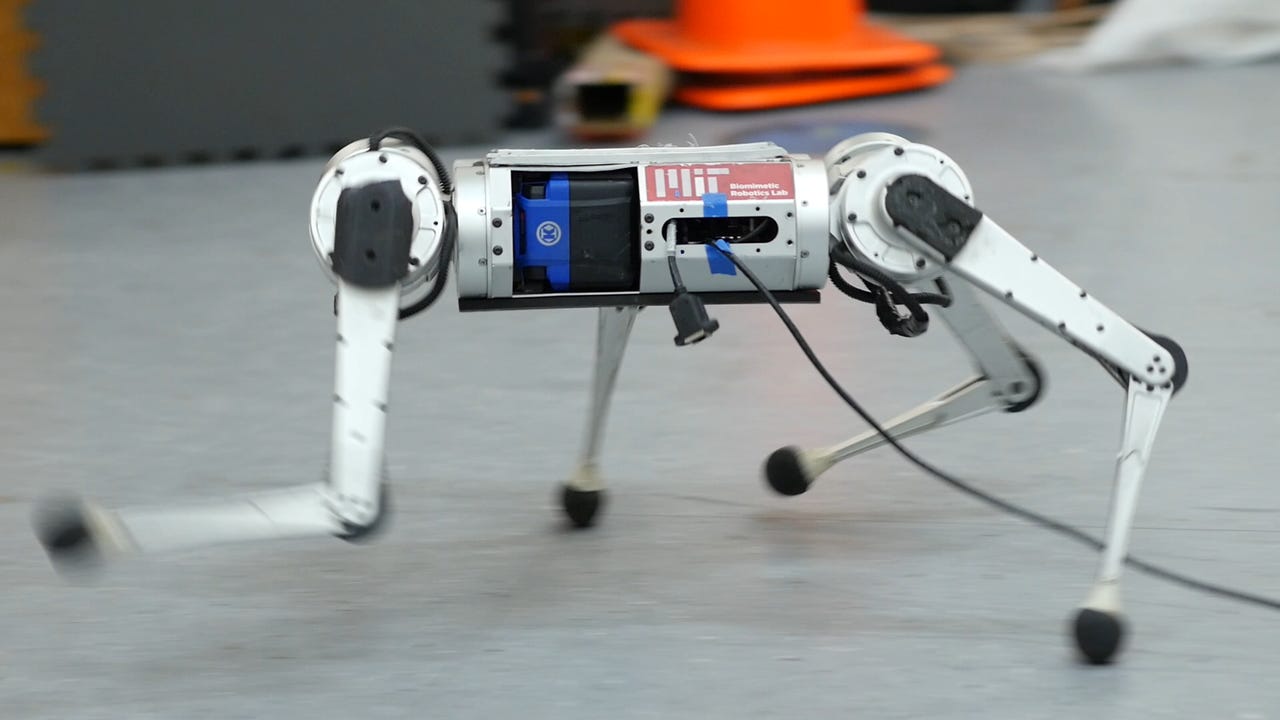

How MIT's robot Cheetah got its speed

There's a new version of a very quick quadrupedal robot from MIT's Computer Science and Artificial Intelligence Laboratory (CSAIL). While four-legged robots have garnered no end of attention over the last couple of years, one surprisingly quotidian skill has eluded them: running.

That's because running in a real-world environment is phenomenally complex. The quick pace leaves scant room for a robot to encounter, recover from, and adapt to challenges (e.g., slippery surfaces, physical obstacles, or uneven terrain). What's more, running pushes the hardware to its torque and stress limits. As MIT CSAIL PhD student Gabriel Margolis and Institute for Artificial Intelligence and Fundamental Interactions (IAIFI) postdoctoral fellow Ge Yang recently told MIT News:


In such conditions, the robot dynamics are hard to analytically model. The robot needs to respond quickly to changes in the environment, such as the moment it encounters ice while running on grass. If the robot is walking, it is moving slowly and the presence of snow is not typically an issue. Imagine if you were walking slowly, but carefully: you can traverse almost any terrain. Today's robots face an analogous problem. The problem is that moving on all terrains as if you were walking on ice is very inefficient, but is common among today's robots. Humans run fast on grass and slow down on ice - we adapt. Giving robots a similar capability to adapt requires quick identification of terrain changes and quickly adapting to prevent the robot from falling over. In summary, because it's impractical to build analytical (human designed) models of all possible terrains in advance, and the robot's dynamics become more complex at high velocities, high-speed running is more challenging than walking.

What sets the latest MIT Mini Cheetah apart is how it copes. Previously, the MIT Cheetah 3 and Mini Cheetah used agile running controllers designed by human engineers who analyzed the physics of locomotion, formulated efficient abstractions, and implemented a specialized hierarchy of controllers to make the robot balance and run. Boston Dynamics' Spot robot operates in much the same way.

The new system instead learns from experience. By training a simple neural network in a simulator, the MIT robot can acquire 100 days' worth of experience on diverse terrains in just three hours, and it then adapts to the terrain it encounters in real time.
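To put that figure in perspective, a quick back-of-the-envelope calculation using only the two numbers above shows the simulator accumulates experience roughly 800 times faster than real time:

$$
\frac{100\ \text{days} \times 24\ \text{h/day}}{3\ \text{h}} = \frac{2400\ \text{h}}{3\ \text{h}} = 800
$$

In other words, every second of training corresponds to more than 13 minutes of simulated running.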

"We developed an approach by which the robot's behavior improves from simulated experience, and our approach critically also enables successful deployment of those learned behaviors in the real world," explain Margolis and Yang.

"The intuition behind why the robot's running skills work well in the real world is: Of all the environments it sees in this simulator, some will teach the robot skills that are useful in the real world. When operating in the real world, our controller identifies and executes the relevant skills in real time," they added.
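The article doesn't spell out the training recipe, but the intuition Margolis and Yang describe matches a widely used technique called domain randomization: every simulated episode runs on freshly sampled terrain, so the controller practices across conditions broad enough that some resemble the real world. The toy sketch below illustrates that idea under heavy, hypothetical assumptions that do not come from the MIT work: terrain is reduced to a single made-up "friction" number, the robot "falls" whenever it runs faster than four times that number, and a small lookup table of speeds stands in for the neural network.

```python
import random

# Toy assumptions (hypothetical, not from the MIT work):
# - terrain is summarized by one "friction" number in [0.1, 1.0]
# - the robot falls if it runs faster than 4 m/s per unit of friction
MAX_SPEED_PER_FRICTION = 4.0
SPEEDS = [0.25, 0.5, 1.0, 2.0, 3.0, 4.0]  # candidate running "skills", in m/s
N_BINS = 4  # how finely the controller can tell terrains apart


def friction_bin(friction: float) -> int:
    """Map a friction estimate to one of N_BINS terrain buckets."""
    return max(0, min(int(friction * N_BINS), N_BINS - 1))


def reward(speed: float, friction: float) -> float:
    """Reward speed, but penalize a fall far more than any speed can earn."""
    return speed if speed <= MAX_SPEED_PER_FRICTION * friction else -10.0


def train(episodes: int = 20_000, seed: int = 0) -> list[float]:
    """Learn one speed per terrain bucket from randomized simulated episodes."""
    rng = random.Random(seed)
    totals = [[0.0] * len(SPEEDS) for _ in range(N_BINS)]
    counts = [[0] * len(SPEEDS) for _ in range(N_BINS)]
    for _ in range(episodes):
        # Domain randomization: every episode runs on freshly sampled terrain.
        friction = rng.uniform(0.1, 1.0)
        b = friction_bin(friction)
        i = rng.randrange(len(SPEEDS))  # try a random skill (pure exploration)
        totals[b][i] += reward(SPEEDS[i], friction)
        counts[b][i] += 1
    policy = []
    for b in range(N_BINS):
        # Keep the skill with the best average reward on this kind of terrain.
        avg = [t / c if c else float("-inf") for t, c in zip(totals[b], counts[b])]
        policy.append(SPEEDS[max(range(len(SPEEDS)), key=avg.__getitem__)])
    return policy


def choose_skill(policy: list[float], estimated_friction: float) -> float:
    """At run time, execute the skill learned for the terrain the robot senses."""
    return policy[friction_bin(estimated_friction)]


if __name__ == "__main__":
    policy = train()
    print(policy)                      # → [0.25, 1.0, 2.0, 3.0]
    print(choose_skill(policy, 0.9))   # high-friction terrain → 3.0
    print(choose_skill(policy, 0.15))  # low-friction terrain → 0.25
```

After training on randomized terrain, the table runs at 3 m/s on high-friction ground and slows to 0.25 m/s on slippery ground, the toy equivalent of running on grass and slowing down on ice. A learned controller would presumably replace both the lookup table and the explicit friction estimate with what a neural network infers from the robot's sensor history; the sketch only shows why training across many randomized environments can leave a robot with skills that transfer.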

Of course, like any good academic research endeavor, the Mini Cheetah is more a proof of concept than an end product; the point is to show how efficiently a robot can be taught to cope with the real world. Margolis and Yang point out that approaches to robot development and deployment that depend on human oversight and input to run efficiently do not scale.

Put simply, manual programming is labor-intensive, and we're reaching a point where simulations and neural networks can do the job astoundingly faster. The hardware and sensors developed over previous decades are now beginning to live up to their full potential, and that heralds a day when robots will walk among us.

In fact, they might even run.