SPEC launches standardized energy efficiency benchmark

SPEC (Standard Performance Evaluation Corporation) launched its first standardized energy efficiency benchmark, SPECpower_ssj2008, this week. It tackles something that the computer industry has struggled to define in recent years. With datacenter energy costs spiraling out of control, server customers have struggled to sort out the conflicting messages from technology vendors about who is the energy efficiency leader. Now the industry has a standardized way to measure the energy efficiency of computer servers.

Even though this first version of SPECpower only addresses server side Java performance, it is one of the most comprehensive standards for energy efficiency to date, which gives it instant credibility. Other energy efficiency metrics like the Green500 list simply take the theoretical aggregate FLOPS (Floating Point Operations Per Second) of a cluster of computers and divide it by the measured peak power consumption, or even the peak rated power consumption if measurements aren't given. Since FLOPS aren't a good real-world measurement of performance to begin with, and most people don't operate their servers at peak loads or run massive clusters, the Green500 list simply isn't that useful a metric.
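To make the contrast concrete, here is a minimal sketch of the peak-style calculation described above. The cluster figures are made up purely for illustration, and `flops_per_watt` is a hypothetical helper name, not part of any Green500 tooling.

```python
def flops_per_watt(theoretical_flops: float, peak_power_watts: float) -> float:
    """Peak-style efficiency: aggregate theoretical FLOPS divided by peak power."""
    if peak_power_watts <= 0:
        raise ValueError("peak power must be positive")
    return theoretical_flops / peak_power_watts


# Hypothetical cluster (assumed numbers): 10 TFLOPS theoretical, 50 kW at peak.
cluster_flops = 10e12
cluster_peak_watts = 50e3

mflops_per_watt = flops_per_watt(cluster_flops, cluster_peak_watts) / 1e6
print(f"{mflops_per_watt:.0f} MFLOPS/watt")
```

Note that a single number like this says nothing about how the machine behaves at partial load or at idle.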

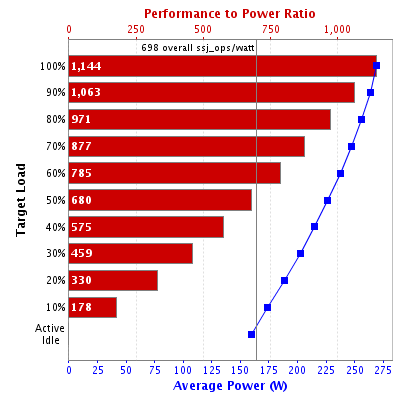

SPECpower_ssj2008 is basically a measure of ssj_ops/watt (server side Java operations per second per watt). I would personally prefer to call it ssj_opj (server side Java operations per Joule of energy) since "per second per watt" is by definition "per Joule". SPECpower_ssj2008 factors in the fact that servers usually aren't operated at peak capacity and are even idle at times. To account for both idle and peak load power consumption, average power consumption is measured and disclosed at 0, 10, 20, 30, and so on up to 100 percent of load capacity. At each 10% increment, server side Java operations per second are divided by the average power consumption in watts to give an efficiency figure for that load level. The "overall" ssj_ops/watt metric then combines all the levels: the ssj_ops results are summed across the load levels and divided by the sum of the average power readings, idle included. The accompanying graph is from the current SPECpower_ssj2008 performance leader as of Dec 12th 2007 and it illustrates how this benchmark works.
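The arithmetic can be sketched in a few lines of Python. The readings below come from an invented linear model (throughput proportional to load, 150 watts at idle rising to 250 watts at full load) and are not taken from any published result. The function names are hypothetical. The sketch prints two candidate "overall" figures because they differ: the ratio of totals, which is how I understand SPEC's run rules to define the overall score, and a plain mean of the per-level ratios.

```python
# Hypothetical readings: (target load %, ssj_ops, average watts).
# Assumed model: ssj_ops = 2500 * load, watts = 150 + load.
readings = [(load, 2500 * load, 150.0 + load) for load in range(100, -1, -10)]


def per_level_ops_per_watt(rows):
    """ssj_ops divided by average watts at each target load."""
    return {load: ops / watts for load, ops, watts in rows}


def overall_ratio_of_totals(rows):
    """Sum of ssj_ops over all levels divided by sum of average watts (idle included)."""
    total_ops = sum(ops for _, ops, _ in rows)
    total_watts = sum(watts for _, _, watts in rows)
    return total_ops / total_watts


def overall_mean_of_ratios(rows):
    """Plain average of the per-level ssj_ops/watt figures (idle counts as zero)."""
    ratios = per_level_ops_per_watt(rows)
    return sum(ratios.values()) / len(ratios)


levels = per_level_ops_per_watt(readings)
for load in sorted(levels, reverse=True):
    print(f"{load:3d}% load: {levels[load]:7.1f} ssj_ops/watt")

print(f"ratio of totals: {overall_ratio_of_totals(readings):.1f}")
print(f"mean of ratios:  {overall_mean_of_ratios(readings):.1f}")
```

Either way, the idle and low-load levels pull the overall figure well below the peak-load figure, which is exactly the behavior a peak-only metric hides.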

I spoke to SPEC President Walter Bays yesterday about this new power benchmark, and my preference for ssj_opj was one of the topics that came up. I also asked Bays why there couldn't also be a SPECint_rate2006/watt or SPECfp_rate2006/watt measurement. Although Bays couldn't comment specifically on the availability or existence of future benchmarks, he did explain that the SPEC CPU (SPECint and SPECfp) benchmarks are peak throughput only, so a power version would be fairly simple to measure and interesting. The resulting metric would be a lot more valuable than the FLOPS/watt rating used in the Green500 list since SPEC CPU is much more comprehensive than a simple FLOPS measurement. Bays also explained that SPECweb2005 might be a good candidate, but it is a more complex benchmark (due to the multiple systems involved), making it too much to tackle for the initial version of SPECpower.

First server comparisons for SPECpower_ssj2008

On opening day (12/12/2007) of the benchmark, exactly a dozen submissions were published for SPECpower_ssj2008. Eleven of the systems are Intel based and one system is AMD based. The fastest dual-processor quad-core Intel system is based on the recently launched 3.0 GHz XEON E5450 45nm processor. It is capable of 1144 server side Java operations per Joule at peak load and it has an overall score of 698. The fastest single-processor quad-core Intel server is based on the year-old XEON X3220 2.4 GHz 65nm processor and it has an overall score of 667. Even though its absolute performance is less than half that of the fastest dual-processor system, it also uses less than half the power, which makes the efficiency score comparable.

The only AMD based system submitted so far is a dual-processor server using the dual-core Opteron 2216HE (High Efficiency model) chip running at 2.4 GHz. That system has an overall score of 203, though readers should note that the new High Efficiency 1.9 GHz Barcelona quad-core Opterons will undoubtedly do better once they get past their launch problems. Also note that Colfax International used 8 memory DIMMs in that system instead of 4 like all the other vendors that submitted results, which probably added an unnecessary ~15 watts to the power consumption. If we factor out the extra power consumed by the 4 extra DIMMs, the efficiency score might have been closer to 212.

A quad-core 1.9 GHz "Barcelona" won't double the performance of a dual-core 2.4 GHz "K8" Opteron, and the power consumption won't change (same TDP for the chips, and all other components remain the same), so the ssj_ops/watt score for AMD's 1.9 GHz Barcelona server will improve but won't double.
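The DIMM adjustment above can be checked with some back-of-the-envelope arithmetic. This sketch makes a simplifying assumption: it treats the overall score as a single throughput-to-power ratio, with throughput unchanged when the extra DIMMs are removed. Under that assumption, the three figures in the text (203, 212, and ~15 watts) imply a representative average power draw, which is a derived number and not a measured one. The function names are hypothetical.

```python
def implied_average_power(score_before, score_after, watts_saved):
    """Solve score_before * P == score_after * (P - watts_saved) for P."""
    return score_after * watts_saved / (score_after - score_before)


def adjusted_score(score, avg_power_watts, watts_saved):
    """Rescale an ops/watt score for a lower power draw at the same throughput."""
    return score * avg_power_watts / (avg_power_watts - watts_saved)


# Figures from the text: score of 203, estimated 212 after removing ~15 watts.
implied_watts = implied_average_power(203, 212, 15)
print(f"implied average power: {implied_watts:.0f} watts")
print(f"adjusted score: {adjusted_score(203, implied_watts, 15):.0f}")
```

In other words, the jump from 203 to 212 is consistent with a system averaging roughly 350 watts across the load levels, so a 15 watt saving moves the score by only about 4 percent.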

Comparison of peak load efficiencies for the systems mentioned above

This first version of SPECpower is only representative of server side Java performance and energy efficiency, and it doesn't necessarily translate to other server applications. Server side Java has traditionally favored Intel even at comparable core counts and clock speeds (based on SPECjbb2005 results), so it's understandable that SPECpower_ssj2008 doesn't show well for AMD. AMD isn't at a clock-for-clock, core-for-core disadvantage in SPECweb2005, so it is reasonable to expect that a hypothetical web server version of SPECpower would be more competitive for AMD if they can catch up on core count and clock speed. A hypothetical SPECfp_rate2006/watt metric (of interest to HPC customers), which is weighted more heavily toward memory bandwidth, would also be more competitive for AMD because of AMD's faster memory subsystem, but only if AMD can deliver higher clocked quad-core chips.

In conclusion, the new SPECpower_ssj2008 benchmark will likely be welcomed with open arms by server side Java customers. As a start, SPECpower_ssj2008 is an important milestone in the quest for a good energy metric. The market may also demand additional versions of SPECpower that encompass web serving, 2D/3D graphics applications, high performance computing, and general purpose computing. At least now we have a standardized framework from which to proceed.