Algorithms soon will run your life - and ruin it, if trained incorrectly

Something is happening in the design of AI models right now that -- unless it's fixed soon -- may have disastrous consequences for us humans, according to a recent experiment by an inter-university team of computer scientists from the University of Toronto (UoT) and the Massachusetts Institute of Technology (MIT).

All AI models need to be trained on vast amounts of data. However, the team's findings suggest that the way this data is being labeled is deeply flawed.


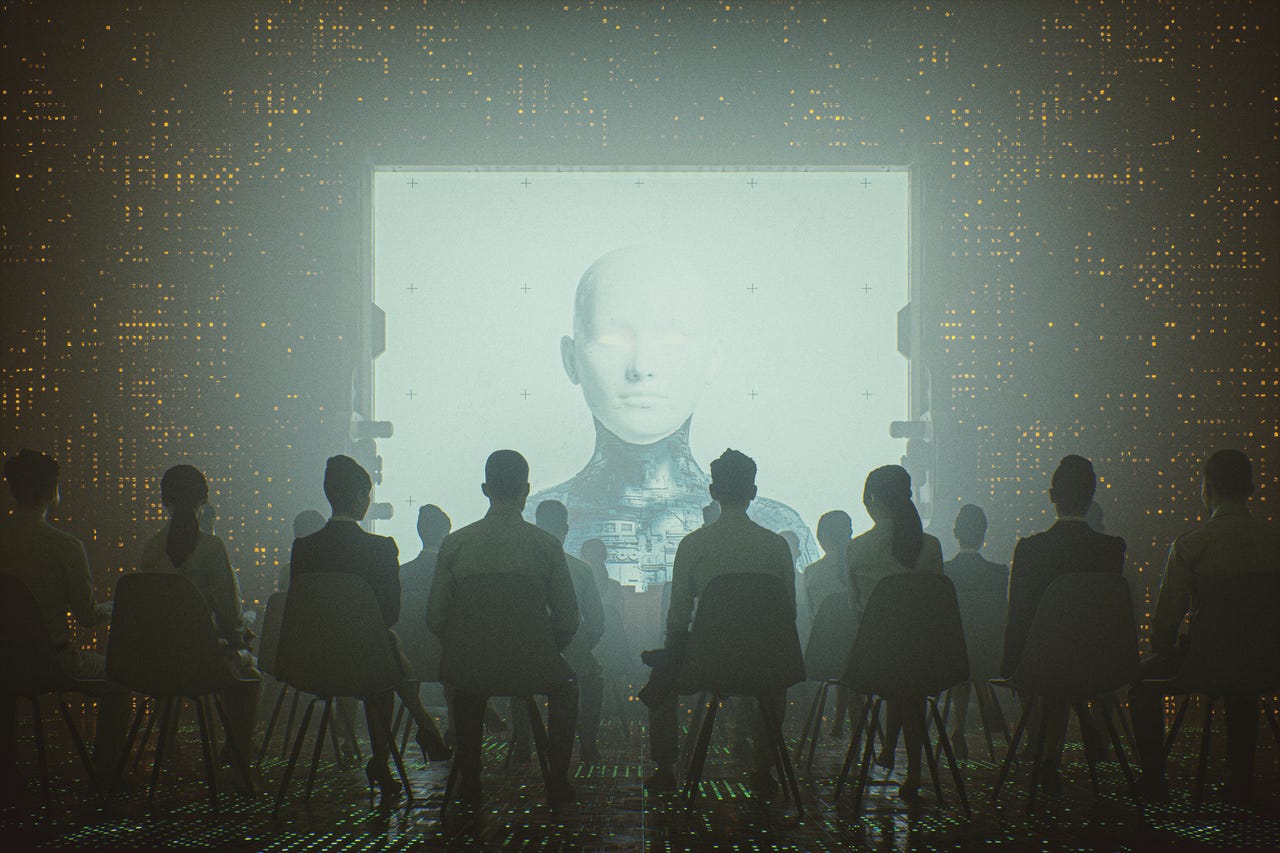

Take a look around you and behold the many major and minor ways that AI has already insinuated itself into your existence. Alexa reminds you about your appointments, health bots diagnose your fatigue, sentencing algorithms suggest prison time, and many AI tools have begun screening financial loans, to name just a few.

Imagine a decade from now when practically everything you do will have an algorithm as gatekeeper.

So, when you send in your application for a home rental or loan, or are waiting to be selected for a surgical procedure or a job that you are perfect for -- and are repeatedly rejected for all of them -- it may not be simply a mysterious and unfortunate spate of bad luck.

Instead, the cause of these negative outcomes could be that the company behind the AI algorithms did a shoddy job in training them.

Specifically, as these scientists (Aparna Balagopalan, David Madras, David H. Yang, Dylan Hadfield-Menell, Gillian Hadfield, and Marzyeh Ghassemi) have highlighted in their recent paper in Science Advances, AI systems trained on descriptive data tend to make much harsher decisions than humans would make.

Unless corrected, these findings suggest, such AI systems could cause havoc in areas of decision-making.

Training daze

In an earlier project that focused on how AI models justify their predictions, the aforementioned scientists became aware that humans in the study sometimes gave different responses when they were asked to attach descriptive versus normative labels to the data.

Normative claims are statements that flat out say what should or shouldn't happen ("He should study much harder to pass the exam."). What is at work here is a value judgment. Descriptive claims dwell on the 'is' with no opinion attached ("The rose is red.").


This puzzled the team, so they decided to probe further with another experiment; this time they put together four different datasets to test drive different policies.

One was a dataset of dog images, used to test a hypothetical apartment rule against allowing aggressive canines in.

The scientists then assembled a group of study participants to attach "descriptive" or "normative" labels to the data, in a process that mirrors how training data is labeled.

Here's where things got interesting.

The descriptive labelers were asked to decide whether certain factual features were present or not – such as whether the dog was aggressive or unkempt. If the answer was "yes," then the rule was essentially violated -- but the participants had no idea that this rule existed when weighing in and therefore weren't aware that their answer would eject a hapless canine from the apartment.


Meanwhile, another group of normative labelers was told about the policy prohibiting aggressive dogs, and then asked to pass judgment on each image.

It turns out that humans are far less likely to label an object as a violation when aware of a rule, and much more likely to register a dog as aggressive (albeit unknowingly) when asked to label things descriptively.

The difference wasn't by a small margin either. Descriptive labelers (those who didn't know the apartment rule but were asked to weigh in on aggressiveness) had unwittingly condemned 20% more dogs to doggy jail than those who were asked if the same image of the pooch broke the apartment rule or not.
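To make the comparison concrete, here is a minimal sketch of how such a gap between the two labeling conditions could be measured. The label lists below are invented for illustration and are not the study's data; the function name `violation_rate` is likewise hypothetical.

```python
def violation_rate(labels):
    """Return the fraction of images flagged (1 = flagged, 0 = not flagged)."""
    return sum(labels) / len(labels)


# Hypothetical labels for the same 10 dog images (NOT the study's data).
# Descriptive labelers answered: "Is this dog aggressive?"
descriptive = [1, 1, 1, 0, 1, 0, 1, 0, 0, 1]
# Normative labelers answered: "Does this dog break the apartment rule?"
normative = [1, 1, 0, 0, 1, 0, 1, 0, 0, 0]

desc_rate = violation_rate(descriptive)
norm_rate = violation_rate(normative)

# Gap in percentage points between the two labeling conditions.
gap_points = (desc_rate - norm_rate) * 100

# Number of images on which the two groups disagree.
disagreements = sum(d != n for d, n in zip(descriptive, normative))

print(f"descriptive: {desc_rate:.0%}, normative: {norm_rate:.0%}")
print(f"gap: {gap_points:.0f} points, disagreements: {disagreements}")
```

In this toy setup, a model trained on the descriptive labels would inherit the higher flag rate, even though no labeler ever intended to eject those extra dogs.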

Machine mayhem

The results of this experiment have serious implications for practically every aspect of how humans live, and especially so if you are not in a dominant sub-group.

For instance, consider the dangers of a "machine learning loop" where an algorithm is designed to evaluate PhD candidates. The algorithm is fed thousands of previous applications and -- under supervision -- learns who are successful candidates and who are not.

It then distills what a successful candidate should look like: high grades, top university pedigree, and white.


The algorithm isn't racist, but the data that has been fed to it is biased in such a manner as to further perpetuate the same skewed view "whereby, for example, poor people have less access to credit because they are poor; and because they have less access to credit, they remain poor," says legal scholar Francisco de Abreu Duarte.
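The self-reinforcing loop in that quote can be sketched as a toy simulation. Everything here is an assumption made for illustration: the function name `simulate_credit_loop`, the approval threshold, and the growth rate are invented, and real credit models are far more complex.

```python
def simulate_credit_loop(wealth, threshold=50.0, growth=1.10, rounds=5):
    """Toy feedback loop: credit is approved only above a wealth threshold,
    and only approved applicants see their wealth grow.

    All parameters are hypothetical values chosen for illustration.
    """
    history = [wealth]
    for _ in range(rounds):
        approved = wealth >= threshold  # the gatekeeping decision
        if approved:
            wealth *= growth  # access to credit lets wealth grow
        history.append(wealth)
    return history


poor = simulate_credit_loop(40.0)  # starts below the threshold
rich = simulate_credit_loop(60.0)  # starts above the threshold

print("poor:", [round(w, 1) for w in poor])
print("rich:", [round(w, 1) for w in rich])
```

The applicant who starts below the threshold is denied every round and never moves, while the one who starts above it keeps pulling ahead, which is the pattern the quote describes.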

Today, this problem is all around us.

ProPublica, for instance, has reported that an algorithm used widely in the US for sentencing defendants would falsely flag black defendants as likely to re-offend at almost twice the rate of white defendants, even though all evidence was to the contrary both at the time of sentencing and in years to come.
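A disparity of this kind is usually expressed as a difference in false positive rates: among people who did not go on to re-offend, what share were flagged as high risk? The sketch below shows the arithmetic with made-up counts; the numbers and the helper name `false_positive_rate` are hypothetical and are not ProPublica's figures.

```python
def false_positive_rate(false_positives, true_negatives):
    """Share of people who did NOT re-offend but were still flagged high risk."""
    return false_positives / (false_positives + true_negatives)


# Hypothetical counts per group (invented for illustration only).
group_a_fpr = false_positive_rate(false_positives=40, true_negatives=60)
group_b_fpr = false_positive_rate(false_positives=20, true_negatives=80)

# How many times more often group A is wrongly flagged than group B.
ratio = group_a_fpr / group_b_fpr

print(f"group A: {group_a_fpr:.0%}, group B: {group_b_fpr:.0%}, ratio: {ratio:.1f}x")
```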

Five years ago, MIT researcher Joy Buolamwini exposed how algorithms from the university's lab, which are in use globally, could not actually detect a black face, including hers. This changed only when she wore a white mask.


Other biases, against gender, ethnicity, or age, are rife in AI -- but there's one big difference that makes them even more dangerous than biased human judgment.

"I think most artificial intelligence/machine-learning researchers assume that the human judgments in data and labels are biased, but this result is saying something worse," says Marzyeh Ghassemi, an assistant professor at MIT in Electrical Engineering and Computer Science and Institute for Medical Engineering & Science.

"These models are not even reproducing already-biased human judgments because the data they're being trained on has a flaw: Humans would label the features of images and text differently if they knew those features would be used for a judgment," she adds.

They are in fact delivering verdicts that are much worse than existing societal biases, and that makes the relatively rudimentary process of AI dataset labeling a ticking time bomb if done improperly.