Cerebras continues 'absolute domination’ of high-end compute, it says, with world’s hugest chip two-dot-oh

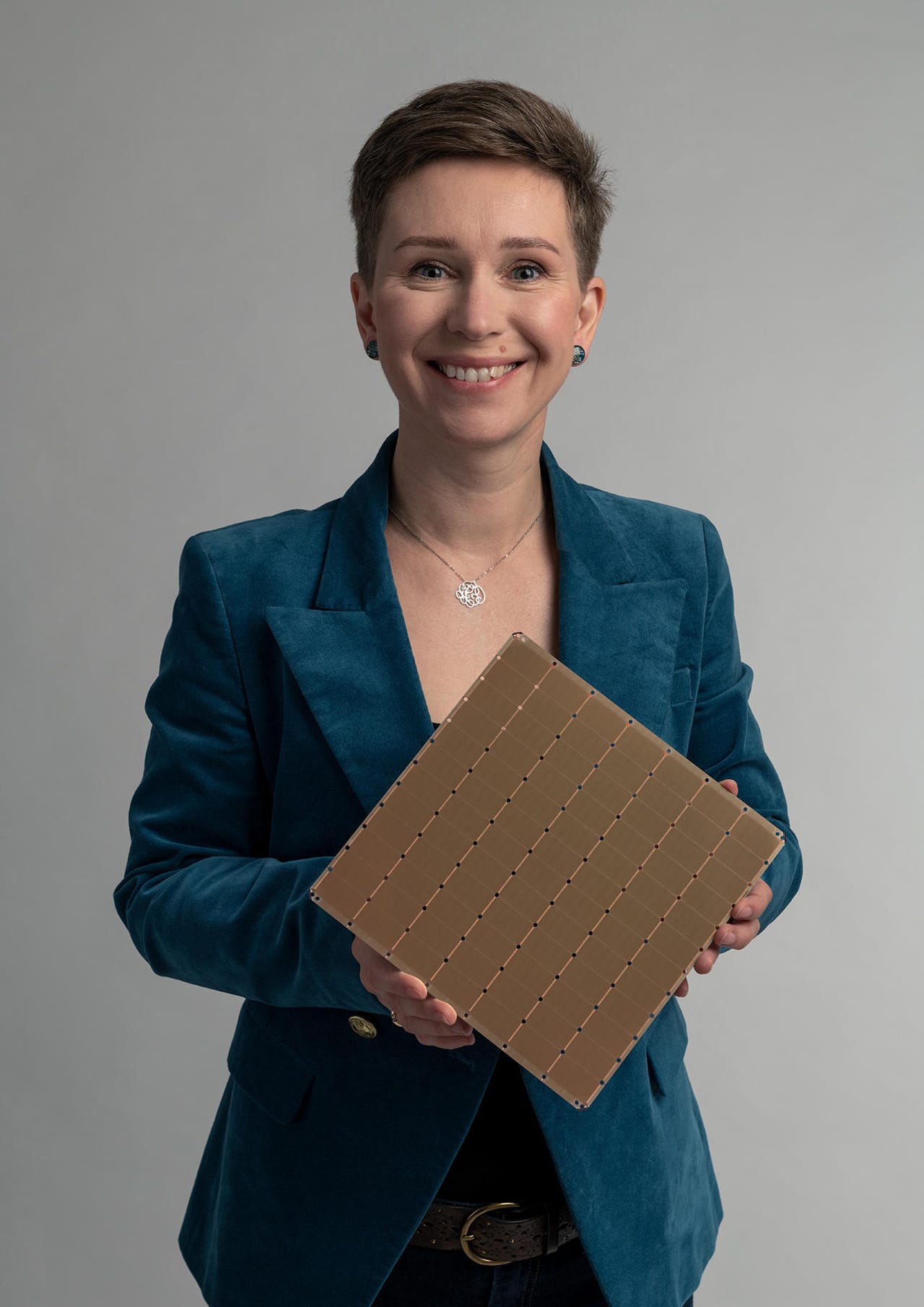

Cerebras Systems product manager for AI Natalia Vassilieva holds the company's WSE-2, a single chip measuring almost the entire surface of a twelve-inch semiconduor wafer.

Cerebras Systems, the Sunnyvale, California startup that stunned the world in 2019 by introducing a chip taking up almost the entirety of a twelve-inch semiconductor wafer, on Tuesday unveiled the second version of the part, the Wafer Scale Engine 2, or WSE-2.

The chip, measuring he same forty-six square millimeters, holds 2.6 trillion transistors, which is more than double the count of the original WSE chip. It is also 48 times the number of transistors of the world's biggest GPU, the "A100" from Nvidia.

The WSE-2 has 48 times as many transistors as Nvidia's latest GPU for AI, the A100.

The WSE-2, like its predecessor, was designed to crunch in parallel the linear algebra computations that make up the bulk of artificial intelligence tasks in machine learning and deep learning, where Cerebras competes with Nvidia's GPUs. The technology has also expended to high-performance computing tasks of the supercomputing variety, such as high-energy physics problems, regardless of whether they have any machine learning component.

Like its predecessor, the WSE-2 is utilized by being part of a pint-sized supercomputer, a dedicated machine that comes with all the networking and power supplies and water cooling required to run the thing. The new version, called the CS-2, fits in the same 26-inch high space in a standard telco rack as its predecessor, known as a 15RU slot. Three CS-2 machines can fit in a single rack. The machine will be generally available in the third quarter of this year.

The WSE-2 is in a new version of the company's dedicated AI computer, the CS-2. It features four fans on the bottom, and two pumps for the cooling system, on the upper right. Power supplies sit to the left, and ethernet ports above them.

In an interview with ZDNet, co-founder and CEO Andrew Feldman boasted of the company's ability to achieve a landmark in both chip and systems engineering in two years.

"We were able, in less time than most companies can make the next generation of a normal chip, to make the next generation of a wafer-scale chip," said Feldman.

"What it tells the world is this is a technical trajectory; we will do this again at smaller and smaller geometries, we've solved this problem."

More important, said Feldman, the increase in power of the part comes in the same size and the same power budget as the predecessor. "Whenever you can get an increase in the compute world of 2.3-x over a couple year period, you take that in a heartbeat," he said.

"That's just a monstrous jump, and it just continuous our absolute domination of the high end" of computing, he said.

The part was first teased during a presentation last year at a chip conference. And Cerebras's chief architect, Sean Lie, gave a technical talk Tuesday at a prestigious conference, The Linley Group Spring Processor Conference, to lay out the details.

The expanded transistor budget is made possible by shrinking transistors from the 16-nanometer process technology used in the original to 7-nanometer. Both the original and the new chip are fabricated by Taiwan Semiconductor Manufacturing, the world's largest contract electronics manufacturer.

The extra transistors more than double the key features of the WSE: the number of AI cores that can crunch linear algebra in parallel is now 850,000, up from 400,000; the on-chip SRAM has expanded from 18 gigabytes to 40 gigabytes; and the memory bandwidth has expanded from 9 petabytes to 20 petabytes per second. The total "fabric" bandwidth of the chip is now 220 petabits per second, up from 100.

In an homage to the neocortex, the youngest part of the brain, which is roughly the size of a dinner napkin, Cerebras promoted the chip Tuesday with a photograph showing it sitting between a knife and fork like a dinner plate.

"One of the things we avoid completely is this mess of trying to tie together lots of little machines, which is extraordinarily painful," said Feldman.

The main proposition of the WSE-2 and CS-2, as with the original model, is to avoid the complexity that makes tying together many CPUs or GPUs not only difficult but also a process of diminishing returns.

"You can't take a model designed for one GPU and run that model on many GPUs," said Feldman, referring to a deep learning neural network program, such as a natural language processing algorithm like Google's BERT.

To spread such an application across many systems, "you have to use different distributions of Pytorch and TensorFlow, you have think about the size of the memory, the memory bandwidth and the communications, the synchronization of overheads," he said. All those factors make accuracy and performance decline, he said.

"It's a mess, and that's all what you avoid." The WSE-2 has the appeal that the numerous parallel operations can be automatically distributed across its 850,000 cores without any extra work by the AI researcher, using the Cerebras software suite that automates how to break the workload into units that can be dispatched to each core.

The WSE-2 has the appeal that the numerous parallel operations can be automatically distributed across its 850,000 cores without any extra work by the AI researcher.

Cerebras has raised over $475 million in venture capital financing and has 330 employees. Feldman declined to say if the company is currently pursuing additional financing.

Aside from competing with the industry heavyweight, Nvidia, Cerebras is going up agains other startups in chip development, and also startups that have pursued a systems business. That includes the Bristol, U.K. startup Graphcore, and another Silicon Valley name, SambaNova Systems, which has received over $1 billion in financing.

Cerebras will step up its marketing efforts this year by having recently brought on board a highly experienced chief marketing officer, Rupal Hollenbeck, who was previously CMO at Oracle.