Everything announced at Nvidia's GTC 2021: A data center CPU, SDK for quantum simulations and more

After years in the making, Nvidia on Monday unveiled "Grace," an Arm-based CPU for the data center. The processor was one of several announcements delivered on Day One of Nvidia's GPU Technology Conference (GTC) 2021, where the chipmaker will lay out its plans to advance accelerated computing. Along with Grace, Nvidia announced a next-generation data processing unit, new enterprise GPUs, a new autonomous driving SOC, a new cybersecurity application framework, a new SDK to speed up quantum circuit simulations and more.

GTC is all virtual this year, running Monday through Friday, giving Nvidia ample time to highlight its several product innovations. Nvidia has played a key role in advancing AI via GPUs, but its grander ambitions are no secret: In September, the company announced its intent to acquire chip IP vendor Arm for $40 billion.

"Leading-edge AI and data science are pushing today's computer architecture beyond its limits – processing unthinkable amounts of data," Nvidia founder and CEO Jensen Huang said in a statement Monday. "Using Arm's IP licensing model, Nvidia has built Grace, a CPU designed for giant-scale AI and HPC. Coupled with the GPU and DPU, Grace gives us the third foundational technology for computing, and the ability to rearchitect the data center to advance AI. Nvidia is now a three-chip company."

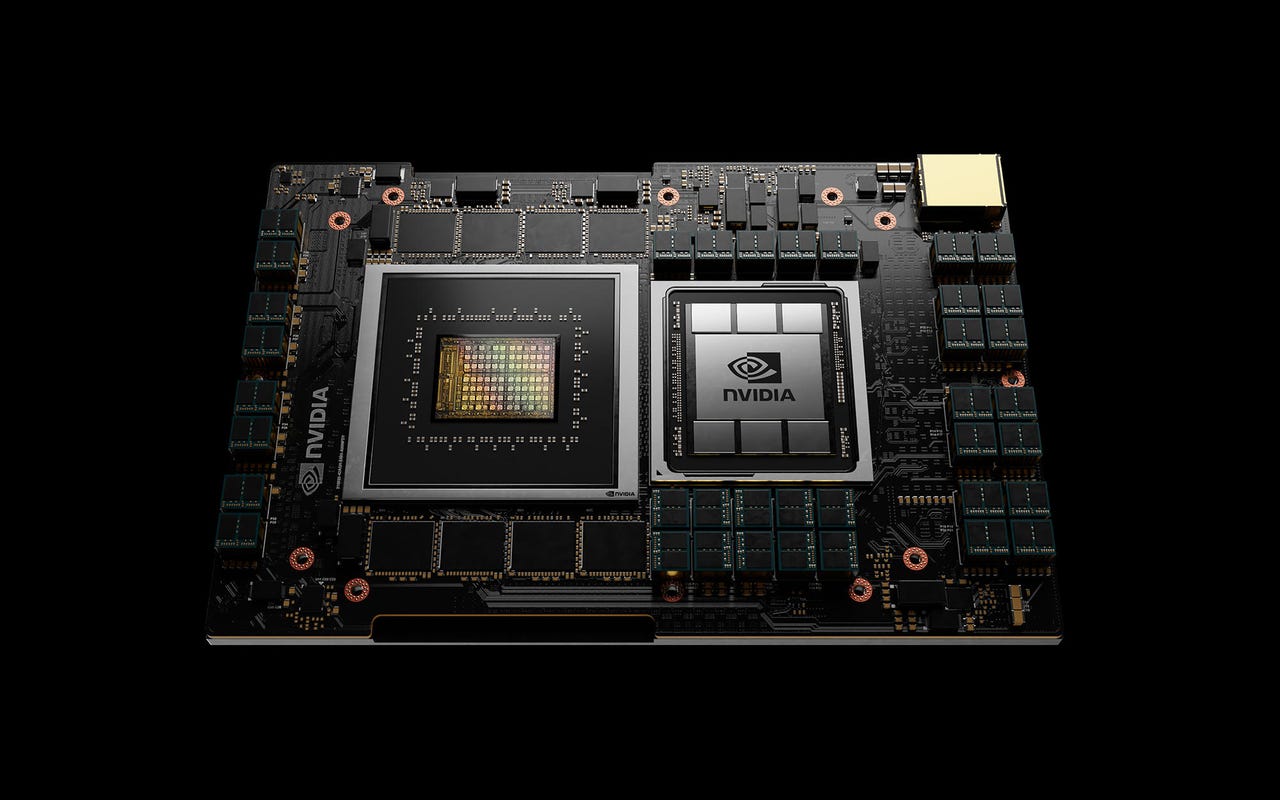

Grace, Nvidia's first data center CPU

Grace, Nvidia's Arm-based processor, is designed to power advanced applications that tap into large datasets and rely on ultra-fast compute and significant memory. That could include natural language processing, recommender systems and AI supercomputing. Huang called Grace "the basic building block of the modern data center."

A Grace-based system will be able to train a one trillion parameter natural language processing (NLP) model 10x faster than today's state-of-the-art Nvidia DGX-based systems, which run on x86 CPUs.

Nvidia's 4th Gen NVLink interconnect technology provides a 900 GB/s connection between the Grace CPU and Nvidia GPUs, enabling 30x higher aggregate bandwidth compared to today's leading servers.

The chip's LPDDR5x memory subsystem will deliver twice the bandwidth and 10x better energy efficiency compared with DDR4 memory. In addition, the new architecture provides unified cache coherence with a single memory address space, combining system and HBM GPU memory to simplify programmability.

The first customers to announce plans to deploy Grace are the Swiss National Supercomputing Centre (CSCS) and the US Department of Energy's Los Alamos National Laboratory. Both labs plan to launch Grace-powered supercomputers, built by HPE, in 2023.

Grace's broader availability is slated for 2023.

Huang explained how Grace, along with Nvidia's GPUs and DPUs, fits into Nvidia's data center roadmap.

Grace vs. Intel, AMD

On a call with reporters after his keynote, Huang discouraged the notion that Grace would negatively impact Nvidia's relationship with other data center chipmakers. Nvidia has "excellent" partnerships with Intel, AMD and others, he said.

"These are all companies who have really great products, and our strategy is to support them," he said. "By connecting our platform, Nvidia AI or RTX or Omniverse and all of our platform technologies, to their CPU, we can expand the overall market."

Asked whether Grace would move beyond the data center, Huang said that Nvidia builds technology "that's intended for the entire industry to be able to use, however they see fit. So Grace will be commercial in exactly the same way Nvidia GPUs are commercial."

He added, "Our primary preference is that we don't build something. If somebody else is building it, we're delighted to use it. That allows us to spare our critical resources and focus on advancing the industry in a way that is rather unique."

Grace, Huang continued, was built to solve the unique problem of training gigantic AI models.

"It takes months to train 1 trillion parameters, and the world would like to train 100 trillion parameters on multi-modal data, looking at video and text at the same time," he said. "The journey there is not going to happen by using today's architecture."

Huang said that hundreds, or "it could be thousands," of companies will need giant systems powered by technology like Grace.

A problem like natural language processing, for example, needs to cover dozens of languages, as well as industry-specific language for different industries. Meanwhile, NLP requires constant training, since language naturally evolves.

"My sense is it will be a very, very large new market, just as GPUs were [initially] a $0 billion market," Huang said. "We tend to go after $0 billion markets because that's how we make a contribution to the industry. My intuition is it's going to be very large, but I don't know exactly" how large.

Omniverse Enterprise

After opening the Omniverse design and collaboration platform for open beta in December, Nvidia is now announcing Omniverse Enterprise. It brings the 3D design platform to the enterprise community through a familiar licensing model. It includes the Omniverse Nucleus server to create and view apps, as well as virtual workstation capabilities. It's designed to be deployed across organizations of any size, enabling teams to work together on complex projects.

So far, Nvidia has had more than 400 major companies using Omniverse. BMW Group created a complete digital twin of one of their factories, where more than 300 cars are passing through at any given time. The game publisher Activision is using it to organize more than 100,000 3D assets.

Meanwhile, Bentley Systems, the infrastructure engineering software company, is the first third party to develop a suite of applications on the Omniverse platform. Bentley software is used to design, simulate and model the largest infrastructure projects in the world.

The BlueField-3 DPU

Nvidia announced BlueField-3, the next generation of its data processing unit (DPU) -- an accelerator designed to isolate the infrastructure services from applications running on x86 CPUs. In doing so, it accelerates software-defined networking, storage and cybersecurity.

The BlueField-3 DPU has 16 Arm A78 cores and accelerates networking traffic at a 400 Gbps line rate. It would take up to 300 CPU cores to deliver the equivalent data center services. The chip features 10x the accelerated compute power of the previous generation, as well as 4x the acceleration for cryptography. BlueField-3 is also the first DPU to support fifth-generation PCIe and offer time-synchronized data center acceleration.

Nvidia is also making available DOCA 1.0, its SDK to program BlueField.

Dell Technologies, Inspur, Lenovo and Supermicro are all integrating BlueField DPUs into their servers. Cloud service providers using DPUs include Baidu, JD.com and UCloud. Hybrid cloud platform partners supporting the BlueField-3 include Canonical, Red Hat and VMware. It's also supported by cybersecurity leaders Fortinet and Guardicore; storage providers DDN, NetApp and WekaIO; and edge platform providers Cloudflare, F5 and Juniper Networks.

BlueField-3 is fully backward-compatible with BlueField-2 and is expected to sample in Q1 2022.

New DGX SuperPOD

Nvidia unveiled the next generation of its DGX SuperPOD, which is a system comprising 20 or more DGX A100 systems along with Nvidia's InfiniBand HDR networking. The latest SuperPOD is cloud-native and multi-tenant. It uses Nvidia's BlueField-2 DPUs to offload, accelerate and isolate users' data. The new SuperPOD will be available in Q2 through Nvidia partners.

Meanwhile, Nvidia introduced Base Command to control AI training and operations on DGX SuperPOD infrastructure. It allows multiple users and IT teams to securely access, share and operate the infrastructure. Base Command will be available in Q2.

Nvidia also announced a new subscription offering for the DGX Station A100 -- a desktop model of an AI computer. The new offering should make it easier for organizations to work on AI development outside of the data center. Subscriptions start at a list price of $9,000 per month.

The cuQuantum SDK

While Nvidia isn't building a quantum computer, it is introducing the cuQuantum SDK to speed up quantum circuit simulations running on GPUs. Classical computers are already finding ways to host quantum simulations, and the cuQuantum library is designed to advance that research.

Researchers at Caltech working with cuQuantum set a world record for simulating the Google Sycamore circuit, achieving 9x better performance per GPU.

Nvidia expects the performance gains and ease of use of cuQuantum will make it a foundational element for quantum frameworks and simulators. "I'm hoping cuQuantum would do for quantum computing what cuDNN did for deep learning," Huang said, referring to Nvidia's CUDA deep neural network library.
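To make "quantum circuit simulation" on a classical machine concrete, here is a minimal state-vector sketch in plain Python. It does not use cuQuantum or a GPU, and every function name in it is made up for illustration. It shows the general technique of storing one complex amplitude per basis state and updating those amplitudes gate by gate.

```python
import math

def apply_single_qubit_gate(state, gate, target):
    """Apply a 2x2 gate to qubit `target` (qubit 0 is the least significant bit)."""
    new_state = list(state)
    bit = 1 << target
    for i in range(len(state)):
        if (i & bit) == 0:
            j = i | bit  # same basis state, but with the target bit set
            a0, a1 = state[i], state[j]
            new_state[i] = gate[0][0] * a0 + gate[0][1] * a1
            new_state[j] = gate[1][0] * a0 + gate[1][1] * a1
    return new_state

def apply_cnot(state, control, target):
    """Flip the target bit of every basis state whose control bit is 1."""
    new_state = list(state)
    cbit, tbit = 1 << control, 1 << target
    for i in range(len(state)):
        if (i & cbit) and not (i & tbit):
            j = i | tbit
            new_state[i], new_state[j] = state[j], state[i]
    return new_state

# Hadamard gate
_S = 1 / math.sqrt(2)
H = [[_S, _S], [_S, -_S]]

def bell_state():
    """Two-qubit circuit: H on qubit 0, then CNOT(0 -> 1), starting from |00>."""
    state = [0j] * 4
    state[0] = 1 + 0j
    state = apply_single_qubit_gate(state, H, 0)
    return apply_cnot(state, control=0, target=1)

def probabilities(state):
    """Measurement probability of each basis state."""
    return [abs(amplitude) ** 2 for amplitude in state]

def amplitudes_needed(num_qubits):
    """A full state vector holds 2**n complex amplitudes."""
    return 2 ** num_qubits
```

Calling `probabilities(bell_state())` splits the probability evenly between the `00` and `11` outcomes. `amplitudes_needed` shows why this gets expensive: the vector doubles with every added qubit, which is the kind of workload GPU acceleration is aimed at.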

The EGX AI platform for the enterprise

Nvidia is introducing a new wave of Nvidia-Certified Servers featuring new enterprise GPUs, the A30 and the A10. The A30 is effectively a toned-down version of the Nvidia A100. It supports a broad range of AI inference, training and traditional enterprise compute workloads. It can power AI use cases such as recommender systems, conversational AI and computer vision systems.

The A10 Tensor Core GPU is effectively a toned-down version of the A40. It powers accelerated graphics, rendering, AI and compute workloads in mainstream Nvidia-Certified Systems. Both the A10 and A30 are built on the Nvidia Ampere architecture, and they provide 24 GB of memory and PCIe Gen 4 memory bandwidth.

With the Nvidia EGX platform, enterprises can run AI workloads on infrastructure used for traditional business applications. Among those offering Nvidia-certified mainstream servers supporting EGX: Atos, Dell Technologies, GIGABYTE, H3C, Inspur, Lenovo, QCT and Supermicro. Lockheed Martin and Mass General Brigham are among the first incorporating these systems into their data centers.

Meanwhile, the high-volume enterprise servers announced in January are now certified to run the Nvidia AI Enterprise software suite, which is exclusively certified for VMware vSphere 7.

AI-on-5G partnerships

The EGX platform is ideal for Nvidia's Aerial SDK, a development kit for software-defined 5G virtual radio access networks (vRANs).

"The Aerial 5G stack will allow us to extend [AI] all the way to the enterprise industrial edge... where AI will have the biggest impact," Huang told reporters this week. "Healthcare, warehouse logistics, manufacturing, retail... we haven't had the ability to bring AI to [these] industries until now."

Nvidia announced a number of partners that are developing solutions for Nvidia's "AI-on-5G platform" -- which is made up of the EGX platform, the Aerial SDK, and enterprise AI applications such as the Nvidia Isaac SDK. Fujitsu, Google Cloud, Mavenir, Radisys and Wind River are developing solutions for the platform.

Fine-tune AI models with TAO

Nvidia is diving into "a brand new endeavor... called pre-trained models," Huang told reporters this week. "They're like new college grads... trained for different tasks and skills."

Customers can choose pre-trained neural networks from the NGC catalog. They span the spectrum of AI jobs from computer vision and conversational AI to natural-language understanding. From there, a customer can fine-tune the model to meet their specific needs using Nvidia TAO.

TAO enables transfer learning -- taking features from an existing neural network and transferring them into a new one. It leverages small datasets users have on hand to give models a custom fit.
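The transfer-learning idea can be sketched in a few lines of plain Python. This is not the TAO API. The "pre-trained" weights, the toy dataset and all function names are hypothetical stand-ins. The sketch keeps an existing feature layer frozen and trains only a small new output layer on a handful of task-specific examples.

```python
import math

# Frozen "pre-trained" layer: hypothetical weights standing in for features
# learned elsewhere on a large dataset. They are never updated below.
PRETRAINED_WEIGHTS = [[1.0, -1.0], [0.5, 0.5]]

def extract_features(x):
    """Frozen feature extractor: a linear layer followed by tanh."""
    return [math.tanh(sum(w * xi for w, xi in zip(row, x)))
            for row in PRETRAINED_WEIGHTS]

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def train_head(samples, labels, epochs=500, lr=0.5):
    """Fit only a new logistic-regression head on top of the frozen features."""
    feats = [extract_features(x) for x in samples]
    weights, bias = [0.0] * len(feats[0]), 0.0
    for _ in range(epochs):
        for f, y in zip(feats, labels):
            pred = sigmoid(sum(w * fi for w, fi in zip(weights, f)) + bias)
            err = pred - y  # gradient of the log loss with respect to the logit
            weights = [w - lr * err * fi for w, fi in zip(weights, f)]
            bias -= lr * err
    return weights, bias

def predict(x, weights, bias):
    f = extract_features(x)
    score = sigmoid(sum(w * fi for w, fi in zip(weights, f)) + bias)
    return 1 if score >= 0.5 else 0

# Small task-specific dataset: label 1 when the first value exceeds the second.
SAMPLES = [[2.0, 0.0], [1.5, 0.5], [3.0, 1.0], [0.0, 2.0], [0.5, 1.5], [1.0, 3.0]]
LABELS = [1, 1, 1, 0, 0, 0]

weights, bias = train_head(SAMPLES, LABELS)
```

Only `weights` and `bias` of the new head change during training. The reused layer stays fixed, which is why six examples are enough here.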

Data center security framework Morpheus

Nvidia is announcing a new AI application framework for cybersecurity called Morpheus. It can perform real-time inspection of all packets flowing through the data center.

Deploying Morpheus with security applications takes advantage of Nvidia AI computing, as well as BlueField-3 DPUs. Because BlueField is effectively a server running at the edge of every data center server, it acts as a sensor to monitor all the traffic between all the containers and VMs in a data center. It sends telemetry data to an EGX server for deeper analysis. Using AI on the EGX server, it can inspect every packet for unencrypted data, for example.

Hardware, software and cybersecurity vendors are working with Nvidia to optimize and integrate data center security offerings with the Morpheus AI framework. This includes ARIA Cybersecurity Solutions, Cloudflare, F5, Fortinet, and Guardicore. Hybrid cloud platform providers Canonical, Red Hat and VMware are also working with Nvidia.

Jarvis availability

Nvidia announced its Jarvis conversational AI framework is now available to anyone on the NGC platform. Developers can access pre-trained deep learning models and software tools to create interactive conversational AI services.

Jarvis models offer highly accurate automatic speech recognition, language understanding, real-time translations for multiple languages, and new text-to-speech capabilities for conversational AI agents. Utilizing GPU acceleration, the end-to-end speech pipeline (listening, understanding and generating a response) can be run in under 100 milliseconds.

Thousands of companies have asked to join Jarvis's early access program since it began last May, with T-Mobile as one of its early users, Nvidia said.

The DRIVE Atlan platform, more automotive news

Nvidia announced the next generation of its DRIVE platform, called Atlan. It's the first 1000-TOPS automotive processor, offering a 4x performance increase over the previous generation Orin.

The Atlan SOC also features a next-generation GPU architecture, new Arm CPU cores, and new deep learning and computer vision accelerators. It's equipped with BlueField, with the full programmability required to prevent data breaches and cyberattacks.

Nvidia is targeting automakers' 2025 models with the new SOC, ensuring it won't cannibalize sales of Orin, the previous generation of the platform. The Orin platform (254 TOPS) has already been selected by leading automakers for production timelines starting in 2022.
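As a quick check on the generational comparison, the short calculation below divides the two TOPS figures quoted in this section. The constant and function names are illustrative, and the numbers are only the ones stated above.

```python
ATLAN_TOPS = 1000  # figure quoted for Atlan
ORIN_TOPS = 254    # figure quoted for Orin

def speedup(new_tops, old_tops):
    """Ratio of the newer chip's quoted throughput to the older chip's."""
    return new_tops / old_tops

ratio = speedup(ATLAN_TOPS, ORIN_TOPS)
print(f"Atlan / Orin = {ratio:.2f}x")  # prints "Atlan / Orin = 3.94x"
```

The result is just under the "4x" headline number, consistent with rounding.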

Nvidia also said that Volvo Cars is expanding its collaboration with the company to use the Orin SOC to power the autonomous driving computer in next generation Volvo models. The first car featuring this SOC is expected to be the next-gen Volvo XC90.

Meanwhile, Nvidia's DRIVE Sim is now powered by Omniverse for high-fidelity autonomous vehicle simulation. It can generate datasets to train the vehicle's perception system and provide a virtual proving ground to test the vehicle's decision-making process. The platform can be connected to the AV stack in software-in-the-loop or hardware-in-the-loop configurations to test the full driving experience.

Raised revenue outlook for Q1 2022

During its annual Investor Day conference, Nvidia raised its first quarter fiscal 2022 revenue expectations, citing outperformance in all four of its market platforms. Previously, the company gave an outlook of $5.3 billion in revenue, plus or minus 2 percent, for Q1.
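For reference, the prior outlook of "$5.3 billion, plus or minus 2 percent" translates into a dollar range as sketched below. The helper name is made up for illustration, and amounts are kept in whole millions of dollars to avoid floating-point rounding.

```python
def outlook_range_millions(midpoint_millions, tolerance_percent):
    """Return (low, high) for a 'midpoint plus or minus N percent' outlook."""
    delta = midpoint_millions * tolerance_percent // 100
    return midpoint_millions - delta, midpoint_millions + delta

low, high = outlook_range_millions(5300, 2)
print(f"${low:,}M to ${high:,}M")  # prints "$5,194M to $5,406M"
```

In other words, the earlier guidance covered roughly $5.19 billion to $5.41 billion.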

Nvidia's market platforms include Gaming, Data Center, Professional Visualization and Automotive. The company is also raising its outlook for revenue from its new cryptocurrency mining processor to $150 million, up from $50 million.

"Within Data Center we have good visibility, and we expect another strong year. Industries are increasingly using AI to improve their products and services," Colette Kress, Nvidia EVP and CFO, said in a statement. "We expect this will lead to increased consumption of our platform through cloud service providers, resulting in more purchases as we go through the year. Our EGX platform has strong momentum, and we expect this will drive increased revenue from enterprise and edge computing deployments in the second half of the year."