Global survey: Most people expect humans will grow to trust, even love, AI

In the movie "Her," a man falls in love with his operating system, and romance ensues. In reality, people may or may not expect to build romantic relationships with AI systems in the future. They do, however, expect that humans will one day love and trust AI systems enough to depend on them for their well-being.

A new global survey on attitudes toward AI, commissioned by ARM, illustrates how AI use cases may evolve, given the level of trust humans expect to have in the technology. The survey polled only those who have a basic understanding of AI, finding that most expect humans to develop emotional connections with their AI-powered tools.

More than seven in 10 believe humans will grow to trust AI devices to the point where they could replace some human relationships, such as serving as a caretaker for the elderly. Fifty-seven percent said they personally would trust AI that much.

In fact, more than six in 10 people expect that, by the year 2050, humans will love AI as they would love a pet. Nearly five in 10 said they themselves would grow to love AI like a pet by that point.

The survey also reveals that people expect their interactions with AI to become more natural. As many as 94 percent of global consumers think it is important that AI devices can understand and communicate using natural human language.

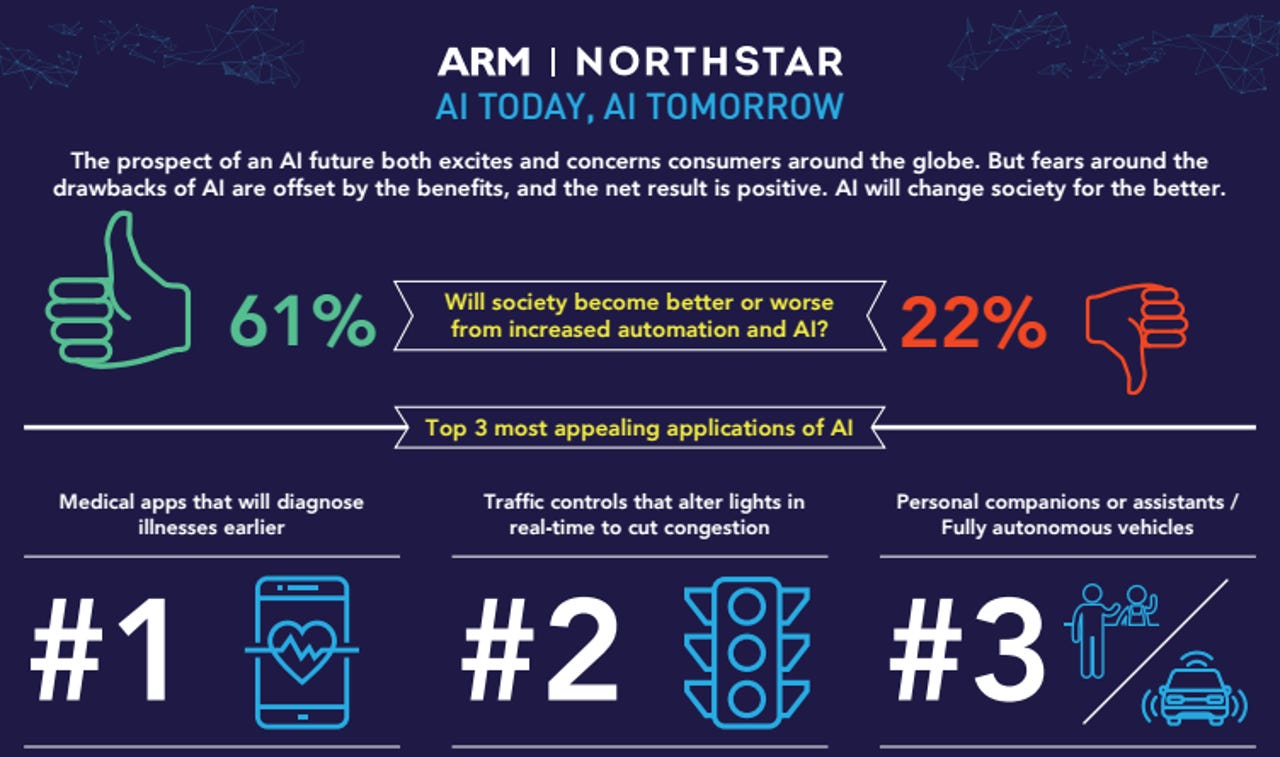

It may come as no surprise that people expect to build relationships with AI, given that most survey respondents see its most promising applications in the medical field. Out of a list of several ways AI could be used in the future, most people (67 percent) said they were most interested in medical applications that offer early diagnosis of illness.

As many as 54 percent said they were interested in using AI to serve as a personal companion or assistant -- another scenario that could benefit from an emotional connection. Other popular applications, such as traffic control (appealing to 60 percent), were less personal.

While respondents in the survey were largely interested in AI-powered medical applications, the idea of visiting an AI doctor over a human doctor received a more mixed reaction. Just 47 percent said they'd probably prefer an AI doctor by the year 2050; the other 53 percent said they'd expect to prefer a human doctor.

The survey, conducted by Northstar Research Partners on behalf of ARM, polled 3,938 consumers globally. The survey included only respondents who had at least a basic understanding of AI.

ARM CEO Simon Segars said in the report that he expected the survey would show "more concern that machines might take over the world." However, he continued, "it appears that people who know anything about AI seem to park the dystopian view in the realms of fiction."

The survey did show different attitudes in different regions of the world. For instance, 74 percent of respondents in Asia said AI will have a positive impact on society, while just 42 percent of people in Europe said so.

"Planning for the exact nature and speed of change is likely to depend on the local economy," Segars said.

The survey shows people expect to see a major shift to AI within the coming years: Just over a third said AI is already having a notable impact on their daily lives, yet three-quarters said they expect AI to have a big impact on their lives by 2022.

While there's a lot of optimism about AI, respondents also expressed concern about a variety of issues, including AI's impact on the job market. Asked about the biggest drawback of a future in which AI significantly impacts human life, 30 percent cited fewer or different jobs for humans. Another 12 percent cited societal issues around fewer opportunities for humans. Twenty percent were most concerned about giving some control over our lives to machines, while 18 percent were most concerned about more data being shared and potentially stolen.