Informatica Introduces AI Model Governance

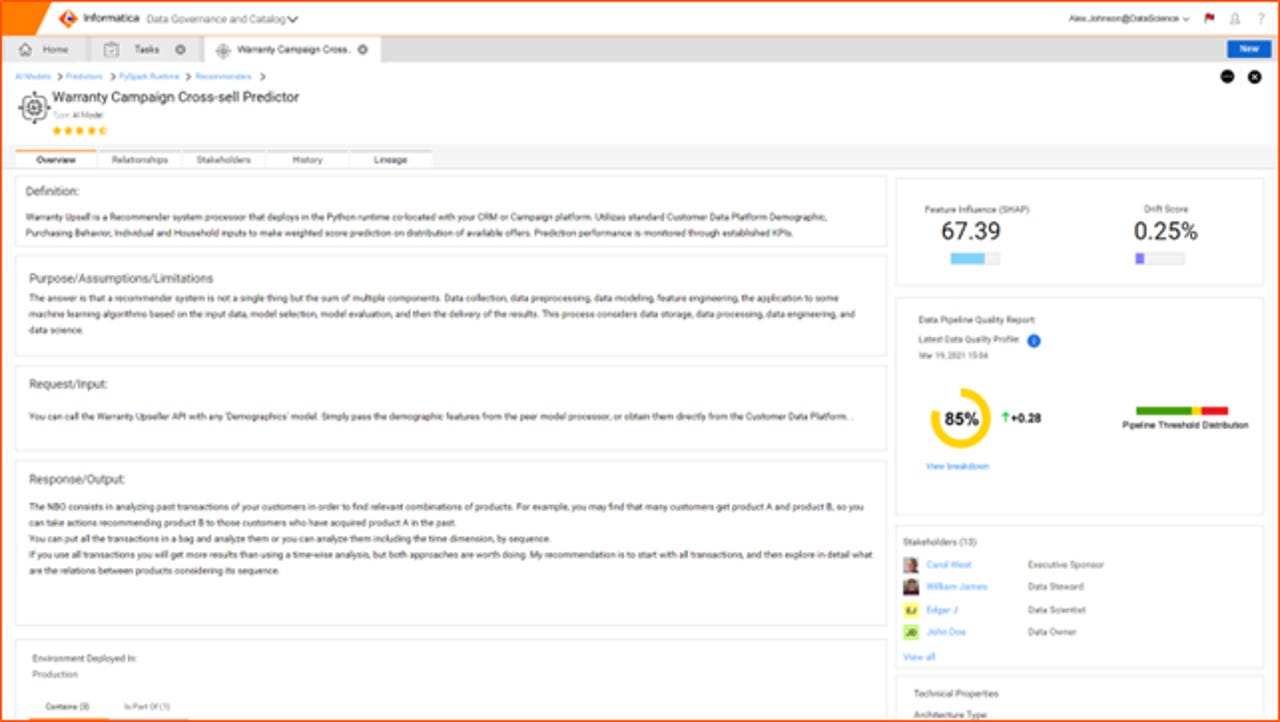

Informatica AI Model Governance model view

Today, Informatica is announcing the launch of Cloud Data Governance and Catalog (CDGC), which unites many prior features of the Informatica platform with new capabilities, all packaged into a cloud-native solution. My ZDNet Big on Data colleague Tony Baer has a great write-up of the CDGC offering overall.

Among the new features in CDGC is an AI Model Governance (AIMG) capability, which seeks to map DevOps principles to the machine learning (ML)/AI lifecycle. We cover AIMG in this post.

The More You Know

AIMG is intended to provide traceability of an AI asset throughout its lifecycle, from inception through production, iteration and training, deployment and "KPI delivery" – which is Informatica's characterization of its model monitoring capabilities. In AIMG, models are documented with such metadata as their processing types, staging, weights and algorithm. Also tracked are prediction input data and KPIs around predictive outcome/accuracy, risk, drift and performance.

In AIMG's approach, the ML model and its data dependencies are treated as a single unit of governance control and managed across the deployment lifecycle. There might be several instances of a model in deployment, each of which is individually monitored in terms of data quality, data drift and corresponding impact on the model's performance.
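To make the "single unit of governance" idea concrete, here is a minimal Python sketch. It is not Informatica's API or metamodel: every class, field and function name below is hypothetical, and the drift measure (relative shift in the mean of one input) is a deliberately crude stand-in for whatever statistics a real monitoring product would use.

```python
from dataclasses import dataclass, field
from statistics import mean


@dataclass
class DeployedInstance:
    """One deployment of a model, monitored on its own (hypothetical structure)."""
    name: str
    baseline_inputs: list[float]  # input values seen at training time
    recent_inputs: list[float]    # input values seen in production

    def drift(self) -> float:
        """Relative shift in the mean input value; a crude illustrative drift signal."""
        base = mean(self.baseline_inputs)
        return abs(mean(self.recent_inputs) - base) / (abs(base) or 1.0)


@dataclass
class GovernedModel:
    """A model and its data dependencies, treated as one governed unit."""
    name: str
    algorithm: str
    stage: str
    data_dependencies: list[str]
    instances: list[DeployedInstance] = field(default_factory=list)

    def drifting_instances(self, threshold: float = 0.2) -> list[str]:
        """Names of deployed instances whose input drift exceeds the threshold."""
        return [i.name for i in self.instances if i.drift() > threshold]


# Illustrative data only: two deployments of the same hypothetical model.
model = GovernedModel(
    name="churn",
    algorithm="gradient boosting",
    stage="production",
    data_dependencies=["crm.customers"],
    instances=[
        DeployedInstance("eu", [10, 12, 11], [10, 11, 12]),
        DeployedInstance("us", [10, 12, 11], [15, 16, 17]),
    ],
)
print(model.drifting_instances())
```

The point of the sketch is the shape of the record: the data dependencies live on the same object as the model's metadata, while drift is evaluated separately for each deployed instance.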

Collaboration is Key

In a typical machine learning project, many individuals with differing organizational roles interact. For example, a line-of-business executive might initiate a project by engaging a data scientist to test a hypothesis about a business process. The data scientist then obtains the operational data, synthesizes it, and produces a model as output.

But afterwards, other participants, including ML engineers, data engineers, data stewards and citizen analysts, may become involved as well. And it's these non-data-scientist participants who are the intended audience for AI Model Governance, with the goal of providing model visibility to stakeholders within the project.

Practically Speaking

From a practical perspective, a data steward or other professional can use Informatica's AI Governance Metamodel to view the model and access information including definitions, documentation of inputs/outputs, semantic relationships, feature content and related tags, ownership information, change management and data flows.

AIMG also provides AI model lineage, which includes data pipeline flows, data dependency highlights and lineage, through test, validation and production. According to the company, a feature coming in the future will be an ML-oriented extension of the self-service marketplace experience in Informatica Axon Data Governance, wherein users will be able to browse governed ML models and request access to them by "purchasing" them.

All Together Now

With its inclusion of AI Model Governance, Informatica's new Cloud Data Governance and Catalog is working to unify MLOps with DataOps, and, by extension, data science with data management. The disciplines within these pairings are often siloed within an organization; Informatica hopes to bust these silos by increasing documentation and providing visibility to other individuals involved in ML projects.

Informatica joins Cloudera in extending a base data governance platform to handle ML models. Cloudera achieved this through enhancements to the Apache Atlas open source technology underlying its data catalog and lineage capabilities, just as Informatica is doing with its corresponding commercial platform capabilities. Hopefully, the two enterprise-focused companies are anchoring a trend, rather than forming an outlier pair. Governing data and ML models together makes sense for vendors and should start to make sense for more customers, too, as they make ML model deployment and use part of their regular operations.

Pamela Steger contributed to the reporting in this post