Nvidia's fabulous fakes unpack the black box of AI

What are neural networks actually doing?

That is the question of the "black box" of AI that has been much debated in recent years. This week, the machine learning scientists at Nvidia report some progress in understanding what's going on.

In a bravura display of fake facial images, the researchers claim a novel way to separate out the aspects of pictures that the neural net "sees" at a high level, such as the orientation of an object, and at a low level, such as details of texture.

The results, shown in a video posted by the team, are some of the most stunning fakes ever conjured by the now-familiar techniques of "generative adversarial networks," or GANs, an innovation first introduced back in 2014.

Also: Nvidia AI research points to an evolution of the chip business

In the paper, "A Style-Based Generator Architecture for Generative Adversarial Networks," posted Thursday on the arXiv pre-print servers, Nvidia researchers Tero Karras, Samuli Laine, and Timo Aila build on work they did a year earlier making fake headshots with GANs.

The motivation, expressed up front, is to get a handle on what GANs are doing.

As a result, there's "no quantitative way to compare different generators against each other."

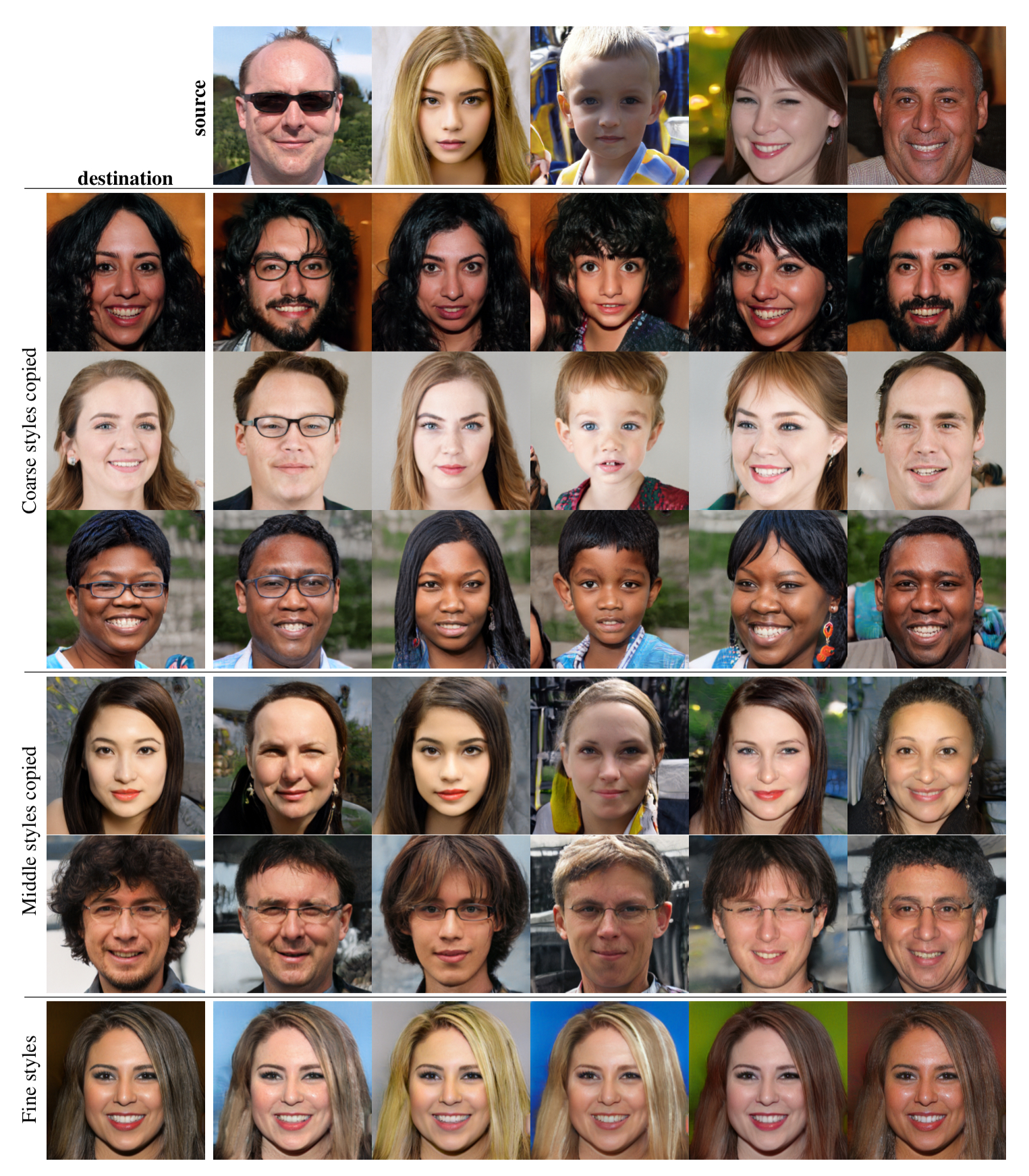

Fake faces along the top row provide "style" features that can be combined with source fakes in the left-hand column, creating a vast array of fakes in the middle of the picture.

The crux of their solution is to insert an extra piece into their neural network that separates out what they term "high-level" features of faces, such as the angle of the head, from low-level features such as skin tone.

By so doing, the authors were able to train the network to create its new images by adjusting those features, or properties, independently of one another. They base the approach on something called "style transfer," a way of generating images that can copy the brush techniques of a Vincent Van Gogh, say, and map it onto a photo of a street scene to create a new picture in the style of the artist.

Also: Facebook Oculus research crafts strange mashup of John Oliver and Stephen Colbert

Using a technique called "adaptive instance normalization," or "AdaIN," introduced last year by Cornell University researchers Xun Huang Serge Belongie, the high-level and low level features can be extracted from each image to create a style.

The Nvidia team added a twist: they can manipulate the different levels of features, high-level to low-level freely, a much more nimble way to mix and match the properties of the faces, from head size on down to the freckles.

By tuning the images in such a way, the theoretical payoff is that it's clearer what the network is doing at each instance of its process, a kind of window into its operation.

The practical effect is the ability to rapidly, effortlessly "morph" fake headshots by adjusting controls in software as easily as you would change the color of a picture in Photoshop, which you can see demonstrated in the video.

Must read

- 'AI is very, very stupid,' says Google's AI leader (CNET)

- Baidu creates Kunlun silicon for AI

- Unified Google AI division a clear signal of AI's future (TechRepublic

A striking additional discovery is that the GAN they created is now working on much less information than past fakes. Rather than laboriously "mapping" from pixels of one image to another to transfer styles, it's using only the style cues it gets from the AdaIN.

As the authors write, "We find it quite remarkable that the synthesis network is able to produce meaningful results even though it receives input only through the styles that control the AdaIN operations."

Think of it as the Mr. Potato Head of headshots. Fakes will never be the same again.

Innovative artificial intelligence, machine learning projects to watch

Previous and related coverage:

What is AI? Everything you need to know

An executive guide to artificial intelligence, from machine learning and general AI to neural networks.

What is deep learning? Everything you need to know

The lowdown on deep learning: from how it relates to the wider field of machine learning through to how to get started with it.

What is machine learning? Everything you need to know

This guide explains what machine learning is, how it is related to artificial intelligence, how it works and why it matters.

What is cloud computing? Everything you need to know about

An introduction to cloud computing right from the basics up to IaaS and PaaS, hybrid, public, and private cloud.