OpenAI spent $160,000 on Upwork for Minecraft gamers to train a neural net

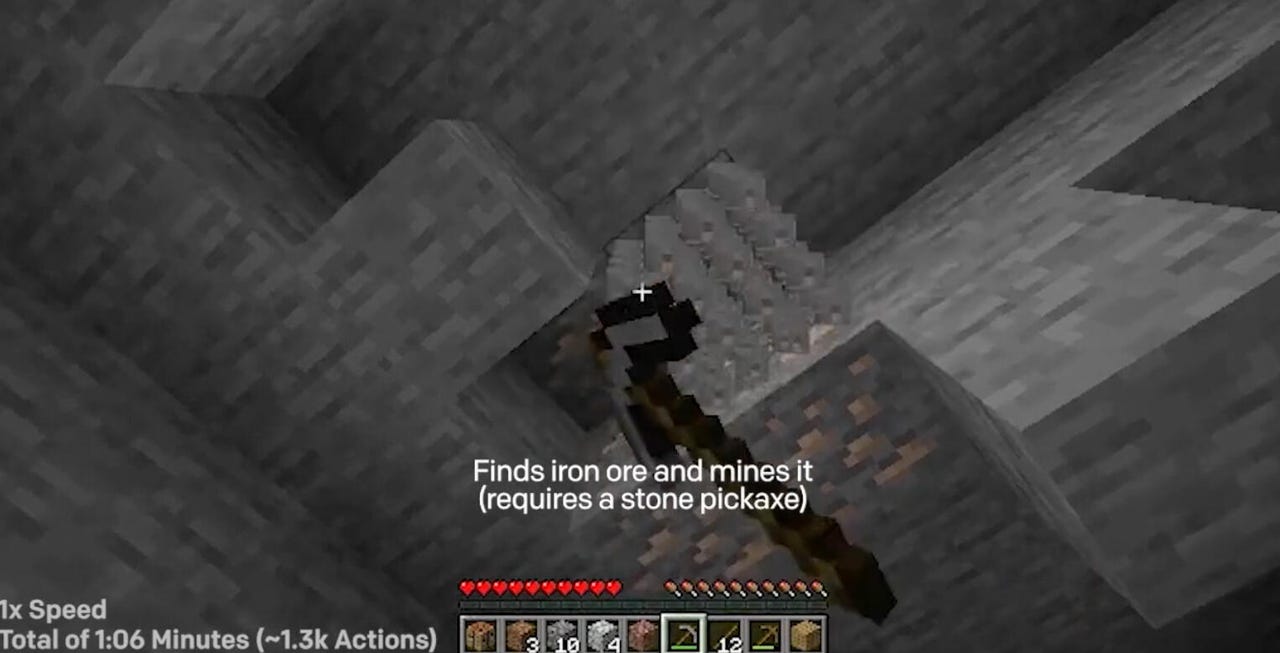

From a video of VPT working toward a diamond pickaxe in Minecraft. The computer program achieved the feat in ten minutes, half the time it would take a proficient human player.

How important might it be to master the "diamond tool" in Minecraft?

Important enough to spend $160,000, according to OpenAI, the artificial intelligence startup.

That is the amount of money that a team at OpenAI spent to hire players of Minecraft on the online job listings platform Upwork to submit videos of themselves playing the game.


In a paper unveiled this week, "Video PreTraining (VPT): Learning to Act by Watching Unlabeled Online Videos," OpenAI researcher Bowen Baker and team break new ground in using large datasets to train a neural network that mimics human keystrokes to solve different tasks in the video game. (OpenAI has also published a blog post about the work.)

A plethora of neural networks have conquered various types of games in recent years via what's called reinforcement learning, including DeepMind's AlphaZero, which took on chess, Go, and shogi, and the subsequent MuZero program, which added the ability to handle Atari games.

Baker and team wanted to develop a neural network for the more complex "open world" game environment of Minecraft, where an array of keystrokes allows players far greater degrees of freedom than in chess or Atari games.


The research literature, the authors write, includes a "vast amount" of work on Minecraft. But the VPT work is unique, they write, for its scope and scale: "To the best of our knowledge, there is no published work that operates in the full, unmodified human action space, which includes drag-and-drop inventory management and item crafting."

The work of building the neural network, called VPT, took place in two stages. The first stage required human game players, hired as contractors, who recorded 4,500 hours of gameplay. The researchers later concluded that they really needed only about 2,000 of those hours.

Baker and team describe the process:

We had the applications open for a day, and then randomly selected 10 applicants for the first round of contractors. Later in the project, as we needed more data and as some contractors asked to terminate their contracts, we added more applicants from the original pool as well as referrals from the currently working contractors. The contractors were paid $20 per hour (minus Upwork platform fees and applicable taxes). All of the results presented in this paper are based on about 4,500 hours of data (including data recorded to gather statistics of human play that was not used for training), which cost us around $90,000. Over the course of the project, we collected some data we did not use due to bugs in the recorder and for some ideas we ultimately did not pursue. In total, we spent about $160k for contractor compensation over the course of the project. However, as we discuss in Sec. 4.6, we could likely obtain most of our results with an IDM trained using only $2000 worth of data, i.e. the foundation VPT model, BC fine-tuning to the earlygame_keyword dataset, and the RL fine-tuning results. Collecting the contractor_house dataset cost about $8000. Because we used the IDM trained on about 2000 hours of contractor data, the actual cost of contractor data for those results was around $40,000.
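The dollar figures in that passage all follow from the $20 hourly rate. A small Python sketch of the arithmetic, assuming the gross $20 rate (before Upwork fees and taxes) applies uniformly, so the hour counts it derives are approximations rather than figures reported by the authors:

```python
# Gross contractor rate quoted in the paper, before Upwork fees and taxes.
HOURLY_RATE_USD = 20


def cost_usd(hours):
    """Contractor pay for a given number of recorded hours."""
    return hours * HOURLY_RATE_USD


def hours_for(budget_usd):
    """Recorded hours a given budget buys at the hourly rate."""
    return budget_usd / HOURLY_RATE_USD


if __name__ == "__main__":
    print(cost_usd(4_500))     # 90000: data behind the reported results
    print(cost_usd(2_000))     # 40000: data used to train the IDM
    print(hours_for(2_000))    # 100.0: the cheaper IDM discussed in Sec. 4.6
    print(hours_for(8_000))    # 400.0: the contractor_house dataset
    print(hours_for(160_000))  # 8000.0: all contractor pay over the project
```

At that rate, the "$2000 worth of data" the authors mention corresponds to only about 100 hours of contractor play.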

For those 4,500 hours, the contractors' keystrokes and mouse movements were recorded alongside the video and serve as labels for actions such as "inventory," which opens a player's collection of objects, using the "E" key; and "sneak," which moves "carefully" in the current direction, using the SHIFT key. Those actions are stored as JSON text strings for each moment of gameplay, together with the video frames.
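To make the recording format concrete, here is a minimal Python sketch of writing one moment of play as a JSON string and reading it back. The field names (`frame`, `actions`, `mouse`) and every key binding other than E for "inventory" and SHIFT for "sneak" are hypothetical; OpenAI's actual recorder schema may differ.

```python
import json

# Hypothetical key-to-action mapping. Only E -> "inventory" and
# SHIFT -> "sneak" come from the article; the rest are illustrative.
KEY_TO_ACTION = {
    "e": "inventory",
    "shift": "sneak",
    "w": "forward",
    "space": "jump",
}


def record_frame(frame_index, pressed_keys, mouse_dx=0.0, mouse_dy=0.0):
    """Serialize one moment of play as a JSON string (hypothetical schema)."""
    # Map raw keys to named actions, ignoring keys we have no name for.
    actions = sorted(KEY_TO_ACTION[k] for k in pressed_keys if k in KEY_TO_ACTION)
    return json.dumps({
        "frame": frame_index,
        "actions": actions,
        "mouse": {"dx": mouse_dx, "dy": mouse_dy},
    })


def load_frame(line):
    """Parse one recorded JSON string back into a dictionary."""
    return json.loads(line)


if __name__ == "__main__":
    line = record_frame(1042, ["shift", "w"], mouse_dx=3.5)
    print(line)
    print(load_frame(line)["actions"])  # ['forward', 'sneak']
```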

The frames of gameplay with their labeled actions were used to train a neural net called an inverse dynamics model, or IDM, which learns which actions go with which frames. The IDM is a mash-up of several kinds of neural nets, including a 3-D convolutional neural net and a ResNet to parse the video frames, and several Transformer attention layers that predict the action taken at each frame, drawing on the frames both before and after it.
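The idea behind an inverse dynamics model can be shown with a deliberately tiny stand-in. The Python sketch below assumes a hypothetical one-dimensional world in which each observation is just a position, and "trains" by counting which action most often explains each observed change between one frame and the next. It is not OpenAI's architecture; it only illustrates the core trick of inferring an action from what happened before and after it.

```python
from collections import Counter, defaultdict


class ToyInverseDynamicsModel:
    """Count-based stand-in for an IDM: predicts the action from the
    change between the frame before and the frame after it."""

    def __init__(self):
        # Maps an observed change to a tally of the actions that caused it.
        self.counts = defaultdict(Counter)

    def fit(self, labeled_transitions):
        # labeled_transitions: iterable of (obs_before, obs_after, action)
        for before, after, action in labeled_transitions:
            self.counts[after - before][action] += 1
        return self

    def predict(self, before, after):
        counter = self.counts.get(after - before)
        if not counter:
            return None  # transition never seen in the labeled data
        return counter.most_common(1)[0][0]


if __name__ == "__main__":
    # Hypothetical "contractor" data: 1-D positions plus the key pressed.
    contractor_data = [
        (0, 1, "right"), (1, 2, "right"), (2, 2, "noop"),
        (2, 1, "left"), (1, 1, "noop"), (1, 2, "right"),
    ]
    idm = ToyInverseDynamicsModel().fit(contractor_data)
    print(idm.predict(5, 6))  # right
```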


That IDM's trained ability is then used on a much larger set of video footage, a total of 70,000 hours of unlabeled Minecraft footage gathered from the Web. The IDM applies "pseudo-labels" to that vastly larger collection. In other words, the IDM, and the contractor fees, are a way to bootstrap a huge video training set.
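Pseudo-labeling itself is a simple loop: run the IDM over every pair of consecutive frames in an unlabeled video and keep the action it infers. The sketch below assumes a hypothetical `toy_idm` function on integer "frames" in place of a trained network; any callable with the same shape could be dropped in.

```python
from typing import Callable, List, Optional, Sequence, Tuple

Obs = int  # toy observation; real frames would be images


def pseudo_label(video: Sequence[Obs],
                 idm: Callable[[Obs, Obs], Optional[str]]) -> List[Tuple[Obs, str]]:
    """Turn an unlabeled sequence of observations into (observation, action)
    pairs by asking the IDM which action explains each transition."""
    labeled = []
    for before, after in zip(video, video[1:]):
        action = idm(before, after)
        if action is not None:  # drop transitions the IDM cannot explain
            labeled.append((before, action))
    return labeled


def toy_idm(before: Obs, after: Obs) -> Optional[str]:
    # Hypothetical stand-in for a trained IDM in a 1-D toy world.
    return {1: "right", -1: "left", 0: "noop"}.get(after - before)


if __name__ == "__main__":
    web_videos = [[0, 1, 2, 2, 1], [5, 5, 6]]
    dataset = [pair for video in web_videos for pair in pseudo_label(video, toy_idm)]
    print(dataset)
```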

The training regimen for VPT.

As expensive as the contractor payments might seem, the approach represents a big saving, the authors write. Had they collected contractor data equivalent to the 70,000 hours of Web videos, it would have been vastly more expensive: at $20 an hour, 70,000 hours of play comes to about $1.4 million in wages alone.

"If we could cheaply collect a labeled contractor dataset of a similar order of magnitude as web_clean, then this would not be important; however, collecting that scale of data would have cost millions of dollars."

Using the 70,000 hours, the authors then train a second neural network, also made up of Transformer layers, to mimic the user actions in the videos, a common practice known as "behavioral cloning."
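Behavioral cloning is ordinary supervised learning on (observation, action) pairs. As a minimal sketch, assuming discrete toy observations, the Python below fits a lookup-table policy that imitates the most frequent demonstrated action for each observation. VPT's actual model is a Transformer conditioned on past frames; the names `behavioral_cloning` and `act` are hypothetical.

```python
from collections import Counter, defaultdict
from typing import Dict, Hashable, Iterable, Tuple


def behavioral_cloning(pairs: Iterable[Tuple[Hashable, str]]) -> Dict[Hashable, str]:
    """Fit a tabular policy by supervised learning on (observation, action)
    pairs: for each observation, imitate the most frequent demonstrated action."""
    counts = defaultdict(Counter)
    for obs, action in pairs:
        counts[obs][action] += 1
    return {obs: c.most_common(1)[0][0] for obs, c in counts.items()}


def act(policy, obs, default="noop"):
    # Fall back to a default action for observations never seen in the data.
    return policy.get(obs, default)


if __name__ == "__main__":
    # Hypothetical pseudo-labeled pairs, e.g. produced by an IDM.
    demos = [("tree_ahead", "attack"), ("tree_ahead", "attack"),
             ("tree_ahead", "forward"), ("lava_ahead", "turn")]
    policy = behavioral_cloning(demos)
    print(act(policy, "tree_ahead"))  # attack
    print(act(policy, "cave_ahead"))  # noop (never seen in the data)
```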

The point of the work is to find a way to train a general-purpose computer "agent" that can use the wealth of unlabeled data on the Internet to solve tasks that involve causality, meaning sequences of actions that have a necessary relationship from one to the next.

"The results presented in this paper help pave the path to utilizing the wealth of unlabeled data on the web for sequential decision domains," they write.

The work can conceivably be used for numerous computer tasks that require sequences of mouse clicks and other human operator controls, they suggest.

"While we only experiment in Minecraft, we believe that VPT provides a general recipe for training behavioral priors in hard, yet generic, action spaces in any domain that has a large amount of freely available unlabeled data, such as computer usage."

OpenAI is best known for the large language model GPT-3, which also uses a "pre-trained" approach based on tons of unlabeled Web data. In a sense, the Minecraft work extends that approach to mimicking behavior in sequential computer tasks captured on video.

The ultimate achievement is that, in some cases, the agent beats the time a human needs to complete one of the hardest tasks, obtaining a diamond pickaxe.

In Minecraft, diamond tools last longer and do more damage, and of those tools the pickaxe is the one that matters most to most gamers. You need a diamond pickaxe to mine obsidian and ancient debris, the source of a fictional material called netherite; both are important for endgame activities such as building enchanting tables and making netherite equipment.

After training VPT to learn all sorts of Minecraft tasks, the authors used a "fine-tuning" approach, applying reinforcement learning so that the network could craft a diamond pickaxe faster than a typical human player.
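As a rough illustration of RL fine-tuning, the sketch below starts from a "pretrained" softmax policy and adjusts it with plain REINFORCE, so that actions earning reward become more likely. This is a swapped-in textbook technique on a hypothetical one-step, three-option problem, not the algorithm or reward scheme OpenAI used; the logits and reward function are made up for illustration.

```python
import math
import random


def softmax(logits):
    """Numerically stable softmax over a list of floats."""
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def reinforce_finetune(logits, reward_fn, episodes=500, lr=0.1, seed=0):
    """Fine-tune a softmax policy on a one-step task with plain REINFORCE."""
    rng = random.Random(seed)
    logits = list(logits)  # copy so the pretrained logits are left untouched
    for _ in range(episodes):
        probs = softmax(logits)
        # Sample an action from the current policy.
        action = rng.choices(range(len(logits)), weights=probs)[0]
        reward = reward_fn(action)
        # Gradient of log pi(action) w.r.t. logit i is 1[i == action] - p_i.
        for i, p in enumerate(probs):
            indicator = 1.0 if i == action else 0.0
            logits[i] += lr * reward * (indicator - p)
    return logits


if __name__ == "__main__":
    # Hypothetical pretrained preferences over three high-level options;
    # only option 2 earns reward in this toy task.
    pretrained = [1.0, 0.5, 0.0]
    tuned = reinforce_finetune(pretrained, lambda a: 1.0 if a == 2 else 0.0)
    print(round(softmax(pretrained)[2], 3), round(softmax(tuned)[2], 3))
```

Because only the rewarded option ever triggers an update here, its probability can only rise; with no reward at all, the pretrained logits come back unchanged.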

"To demonstrate the efficacy of RL fine-tuning, we chose the challenging goal of obtaining a diamond pickaxe within 10 minutes starting from a fresh Minecraft survival world," they write.

This is challenging for humans, who usually take twice as long to do it, if they can do it at all:

Doing so involves acquiring a sequence of difficult-to-obtain items that require complex skills like mining, inventory management, crafting with and without a crafting table, tool use, operating a furnace, and mining at the lowest depths, where many hazards like enemies and lava exist (Fig. 6). Adding to the difficulty, progress can be easily lost by dropping items, destroying items, or dying. Obtaining a diamond pickaxe more often than not takes a proficient human over 20 minutes (24,000 actions).
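The figures in that passage imply a pace of roughly 20 actions per second: about 24,000 actions over about 20 minutes. A short Python sketch of the arithmetic, assuming a constant action rate, also shows that the 10-minute goal leaves a budget of about 12,000 actions.

```python
def actions_per_second(actions, minutes):
    """Average action rate implied by a count of actions over a duration."""
    return actions / (minutes * 60)


def action_budget(minutes, rate_per_second):
    """Number of actions available in a time limit at a constant rate."""
    return round(minutes * 60 * rate_per_second)


if __name__ == "__main__":
    rate = actions_per_second(24_000, 20)  # 20.0 actions per second
    print(rate)
    print(action_budget(10, rate))  # 12000 actions in the 10-minute episode
```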

In assembling both the contractor data and the unlabeled 70,000 hours of Web video, the authors were mindful of the prospect of offensive content. "The contractors could theoretically use Minecraft's open-world property to generate personally identifiable information and/or offensive content (e.g. by using Minecraft blocks to write their name or offensive messages, then finding a spot from which the message would be visible)," they write, though they saw no such behavior in the contractor videos they watched.

"Of course, we train our BC [behavioral cloning] models on videos from the internet of people playing Minecraft, and if such behavior is in those videos our model could also potentially learn it, although we expect such behavior is rare enough that our model would not be likely to reproduce it," they write.

Where does such a general agent go next? The idea is that, having conquered the diamond pickaxe, VPT, or its offspring, could do all kinds of things a person might do with a mouse and keyboard, including booking tickets, surfing social media, or navigating maps.