Stuck with a lo-res image? Google's real 'zoom in, enhance' can fix it

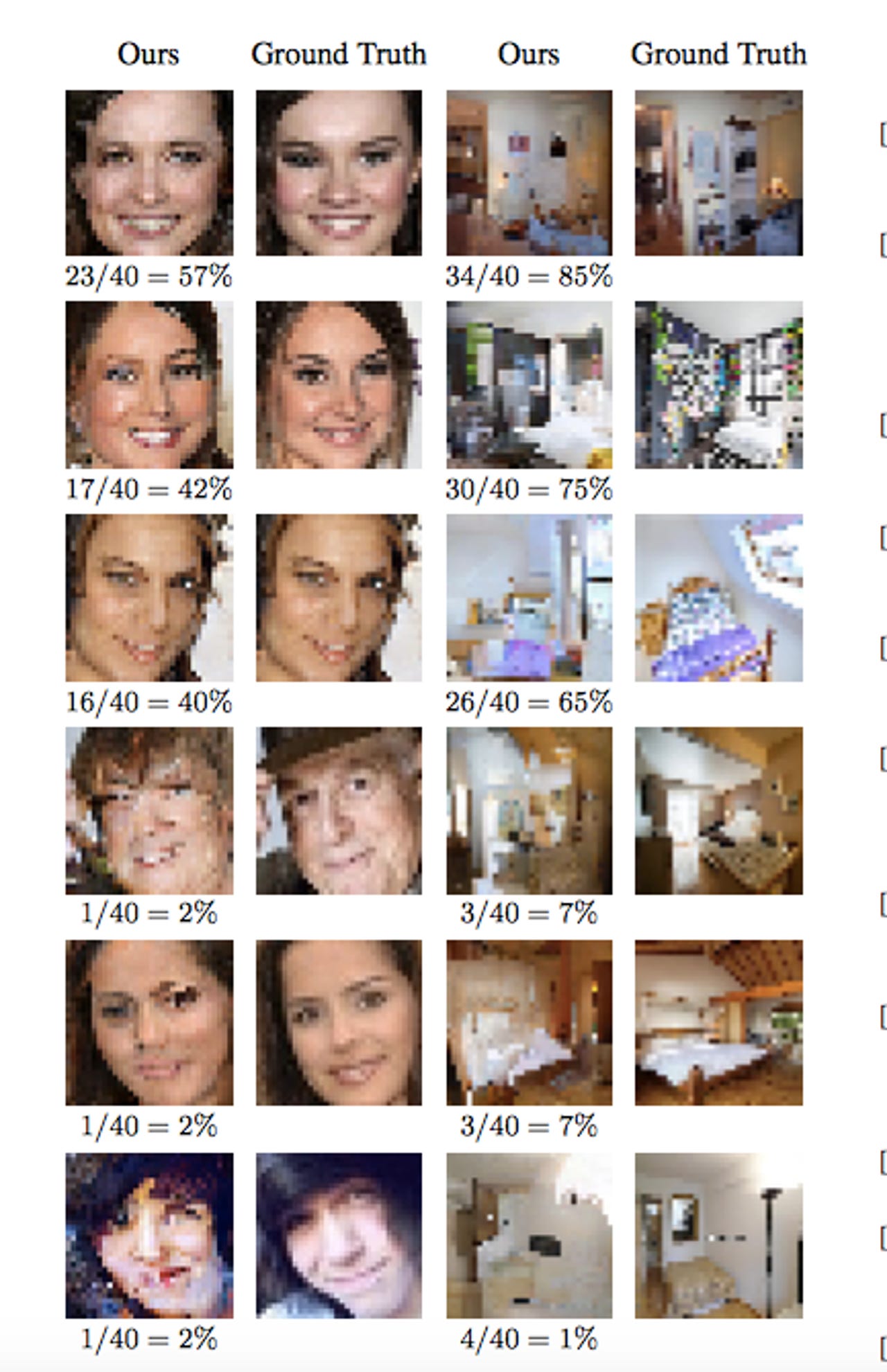

Human study: The fractions below the images show how many times a person chose the enhanced image over the real image.

Until now, only Hollywood could zoom and enhance a grainy image to move a plot along with newly clear photographic evidence, but Google's neural network can now do it too. Almost.

Researchers from the Google Brain team have devised a pair of neural networks that look at a blurry eight-pixel by eight-pixel image of a face and reconstruct it. While the system can't actually recover details that aren't in the original image, it can, like an artist who has prior knowledge of a face, fill in the blanks in a plausible way.

According to Google's researchers, the model they built plays artist by taking details from a real 64-pixel by 64-pixel image, such as a person's hair and facial features, to synthesize a 32-pixel by 32-pixel image. In this case, the training data was a large number of photos of celebrity faces and bedrooms.

"The conditioning network effectively maps a low-resolution image to a distribution over corresponding high-resolution images, while the prior models high-resolution details to make the outputs look more realistic," Google's Brain researchers explain.

As Ars Technica notes, the conditioning network compares the blurry image against other high-resolution images that have been shrunk to eight by eight pixels, looking for a match.
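To make the shrink-and-match idea concrete, here is a toy sketch in plain Python. It is not Google's conditioning network, which is a trained neural network; the helper names `downsample`, `distance` and `best_match`, the block-averaging method and the squared-difference score are all assumptions chosen for illustration.

```python
def downsample(image, factor):
    """Shrink a square grayscale image by averaging factor x factor blocks."""
    size = len(image) // factor
    return [
        [
            sum(
                image[r * factor + dr][c * factor + dc]
                for dr in range(factor)
                for dc in range(factor)
            ) / (factor * factor)
            for c in range(size)
        ]
        for r in range(size)
    ]


def distance(a, b):
    """Sum of squared pixel differences between two equally sized images."""
    return sum((pa - pb) ** 2 for ra, rb in zip(a, b) for pa, pb in zip(ra, rb))


def best_match(low_res, candidates, factor=4):
    """Index of the candidate whose shrunken version is closest to low_res."""
    return min(
        range(len(candidates)),
        key=lambda i: distance(downsample(candidates[i], factor), low_res),
    )
```

Given a list of 32-by-32 candidates and an eight-by-eight query, `best_match(query, candidates)` returns the position of the candidate that looks most like the query once shrunk. The real system learns this relationship from training data rather than searching a list.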

Meanwhile, the prior network has learned that, for example, a brown pixel on the top of the source image is likely to be an eyebrow and so applies hi-res eyebrow-shaped brown pixels to that part of the source.
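How might the two networks' opinions be merged into a single guess for each pixel? The sketch below assumes each network emits one score per candidate pixel value and that the scores are added before being turned into probabilities with a softmax. The article does not spell out this step, so treat the function names, the three colour labels and the numbers as hypothetical.

```python
import math


def softmax(scores):
    """Turn raw scores into probabilities that sum to 1."""
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def combine(conditioning_scores, prior_scores):
    """Add the two networks' scores per candidate value, then normalise."""
    summed = [c + p for c, p in zip(conditioning_scores, prior_scores)]
    return softmax(summed)


# Hypothetical candidate values for one output pixel.
labels = ["skin", "brown", "black"]
conditioning = [1.0, 1.0, 0.0]  # blurry source fits skin or brown equally
prior = [0.0, 2.0, 0.0]         # learned detail: an eyebrow is likely here
probs = combine(conditioning, prior)
choice = labels[probs.index(max(probs))]
```

In this made-up case the blurry source alone cannot decide between skin and brown, and the prior's preference for an eyebrow tips the result to `"brown"`.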

When human observers were shown the real image of a celebrity face alongside the computer-enhanced version, they were fooled 10 percent of the time. Ars notes that 50 percent would be a perfect score, since at that rate observers would be doing no better than guessing.

Given that the enhanced images are impressions of what the network thinks a blurry image would look like, they probably couldn't be used as evidence in a criminal investigation. However, they may still help move an inquiry along.