A round of Web 2.0 reductionism

The idea of reductionism holds that the nature of complex things can always be reduced to simpler, more fundamental ideas. In contrast, Tim O'Reilly's now-famous meme-map of Web 2.0 is a terrific piece of largely holistic analysis. Holism, which is the opposite of reductionism, says that the properties of any given system cannot be determined by the mere sum of its parts. In a small but important way, this contrast captures an essential aspect of the debates that swirl around Web 2.0 and the next generation of the Web in general.

In these terms, we have one set of thinkers attempting to explain what's happening on the Web by exploring its fundamental precepts. At the same time, others explain it in terms of what we're actually seeing happen on the Web (online software as a service, self-organizing communities, Wikipedia, BitTorrent, Salesforce, Amazon Web Services, etc.). Neither view is complete, of course, though combining them could possibly help.

Over the last week, in light of the many folks continuing to grouse about the nebulosity of Web 2.0, we've seen some attempts to reduce Web 2.0 to its constituent elements, and with some success. Tim Bray weighed in with a heartfelt analysis a few days ago:

Every day that goes by I believe more and more that the only important new thing is that the Net is read-write. Everything that matters follows from that.

And so did Tim O'Reilly himself in his recent UC Berkeley speech, which Greg Linden wrote about yesterday, where Tim clarified things a little:

A true Web 2.0 application is one that gets better the more people use it. Google gets smarter every time someone makes a link on the web. Google gets smarter every time someone makes a search. It gets smarter every time someone clicks on an ad. And it immediately acts on that information to improve the experience for everyone else.

It's for this reason that I argue that the real heart of Web 2.0 is harnessing collective intelligence.

O'Reilly, one of the principal explainers of Web 2.0, actually showed his hand on this line of thinking earlier, when he wrote about Ajit Jaokar's work to uncover the root ideas of Web 2.0, which identified harnessing collective intelligence as the most fundamental component. This is a key refinement of the over-reduced version of Web 2.0 that Tim Bray espouses.

Even Dave Winer chimed in on this topic today, also pointing out that a read-write Web is nothing new. Though this is obviously true, it misses this key refinement as well.

This last step, this last piece of the puzzle, is almost certainly the central difference between the Web of yesterday and the Web of tomorrow. The read-write aspect of the Web that Bray and Winer refer to has been with us since the first forms-capable browser was widely available (circa 1994). In this specific sense, Web 2.0 is certainly nothing new at all. But it's only when this read-write aspect is used in a way that actually creates greater shared knowledge for all that it makes a noticeable, even dramatic, difference.

However, up until recently, most uses of the read-write Web were for publishers, online shopping, or other one-to-many and solitary, point-to-point uses. And while that may still be the case today, the trends are now telling us a dramatically different story: the read-write Web is increasingly being used in a widespread, participatory way.

It was the widespread adoption of blogs, wikis, and other read-write techniques that ushered in a common I-write-and-everyone-reads-it usage pattern. This is the harnessing part that a pure conception of a read-write Web does not focus on, and that the public at large is just starting to understand.

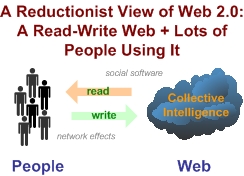

Thus, Read-write Web + People Using It = Web 2.0.

Now, that's not to say that other important trends haven't been happening simultaneously to enable this. I was recently on a panel with Google's profoundly insightful Adam Bosworth (full video here) where we debated the state of Web 2.0. In his mind, the most important change over the last few years has actually been the improvement of the underlying physics of the Internet. The widespread penetration of broadband Internet access now allows many things that weren't possible before.

From a read-write Web perspective, much better connectivity enables user contributions (try uploading pictures to Flickr or videos to YouTube on a dial-up connection) and rich internet applications (RIAs) that provide simple yet powerful interaction interfaces that can capture knowledge from a user's UI gestures, to name just two.

So in a reductionist view, the existence of a read-write Web is necessary but not sufficient to trigger what we're seeing on the Web today. What completes the picture is the existence of widely accessible and heavily used read-write areas of the Web, together with a very large and skilled Web population that is interested, willing, and able to contribute and consume read-write content.

I've said time and again that Web 2.0 requires people at its core to work. Not-so-coincidentally, this is true of enterprises as well (some good coverage of this by Om Malik over the weekend). The Web and enterprises are both made of people, and overlaying them in a non-disruptive way could change the way we run our businesses forever.

Note: This is just a brief interlude between our explorations of Web 2.0 strategies in the enterprise (Part 1, Part 2, Part 3, Part 4 here). Stay tuned for the next round shortly.