Academics hide humans from surveillance cameras with 2D prints

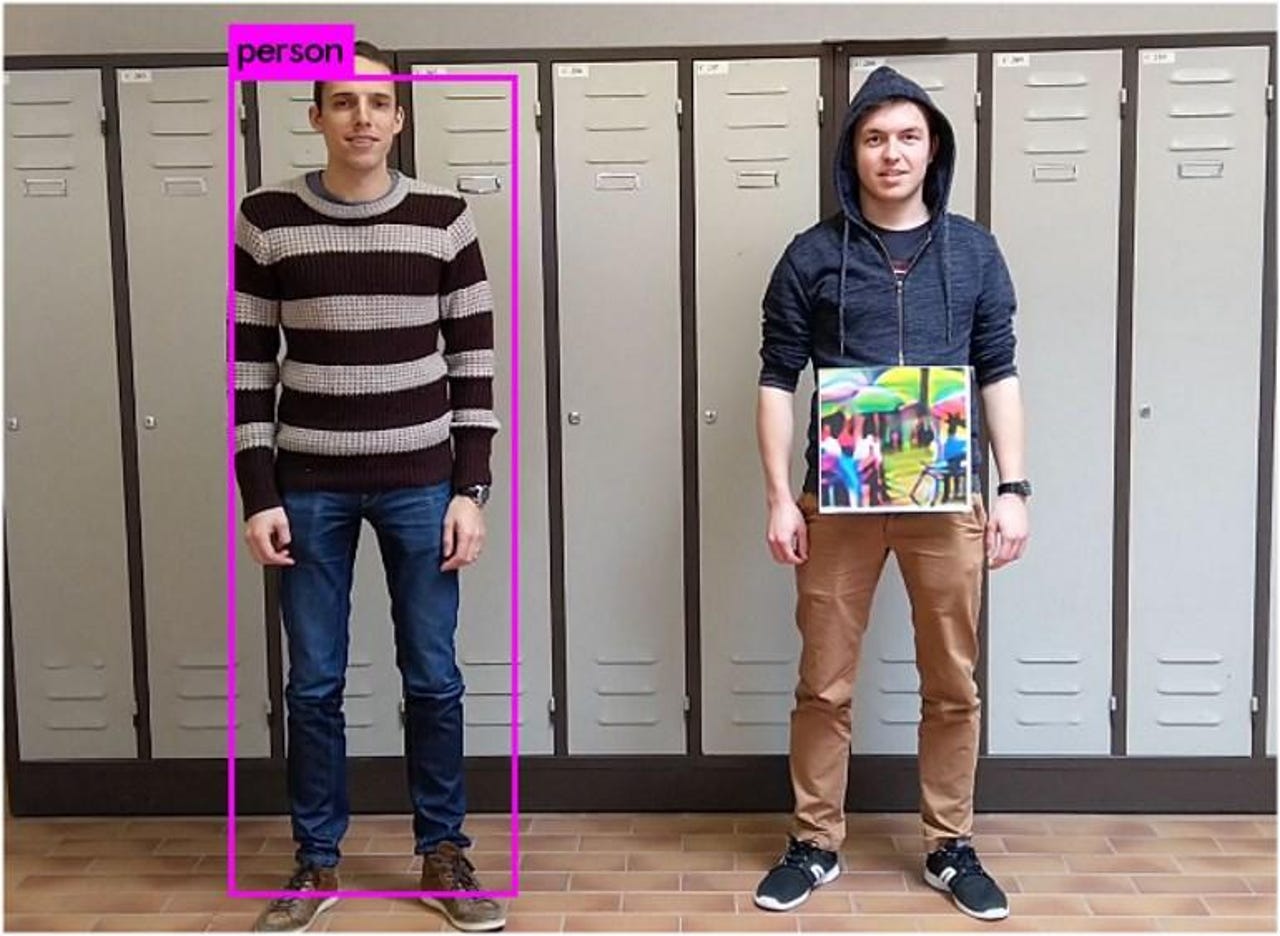

Academics from a Belgian university have devised a method that uses a simple 2D image that can be printed on shirts or bags to make wearers invisible to camera surveillance systems that rely on machine learning to recognize humans in live video feeds.

The 2D image (called a "patch" in the research paper) must be placed around the middle of a person's "detection box" and must face the surveillance camera at all times.
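The placement requirement can be pictured with a little geometry. The following is a minimal sketch, not the researchers' code: it assumes the detection box is given as pixel coordinates `(left, top, right, bottom)`, and the helper name `centered_patch_box` and its `patch_fraction` parameter are hypothetical, introduced only for illustration.

```python
def centered_patch_box(box, patch_fraction=0.25):
    """Return (left, top, right, bottom) of a square patch centred in `box`.

    `box` is a detection box as (left, top, right, bottom) in pixels.
    `patch_fraction` is a hypothetical parameter: the patch side length
    expressed as a fraction of the detection box width.
    """
    left, top, right, bottom = box
    side = patch_fraction * (right - left)
    # Centre of the detection box, where the patch should sit.
    cx = (left + right) / 2.0
    cy = (top + bottom) / 2.0
    half = side / 2.0
    return (cx - half, cy - half, cx + half, cy + half)


# Example: a person detected in a 100 x 300 pixel box.
patch_box = centered_patch_box((100, 50, 200, 350), patch_fraction=0.25)
```

The patch box shares its centre with the detection box, which roughly corresponds to a print worn at the middle of the torso.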

This does not hide a person's real face from the video feed (unless the person also wears a mask), but it prevents a human detection algorithm from spotting a human body when the person enters the frame, so a subsequent facial recognition check is never triggered.

Looking for your next t-shirt?

The need for a method that dodges video detection has arisen now that machine-learning-powered surveillance systems are being widely deployed in some areas of the globe, mainly by law enforcement, oppressive regimes, or large retailers.

For the past few months, academics from the Catholic University in Leuven (KU Leuven) have experimented with the idea of overlaying a malformed image (called a "patch") on top of a person's detection box in order to trick machine learning systems into misclassifying a human as a nondescript object.

The research team experimented with various types of patches, such as randomly generated image noise or blurred-out images, but found that photos of random objects that go through multiple image processing operations fare better than others.

For example, the image patch they came up with (Fig. 4, c) was created by taking a random image, which they rotated up to 20 degrees each way, scaled up and down randomly, and then altered by adding random noise and modifying brightness and contrast at random.
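The transformations listed above can be sketched in a few lines. This is an illustrative example under stated assumptions, not the researchers' released code: the image is a small grayscale grid of floats in [0, 1], the function name `random_transform` is hypothetical, and every range except the 20-degree rotation limit is an assumed value.

```python
import math
import random


def random_transform(image, rng):
    """Randomly rotate, scale, and recolour a grayscale image, then add noise.

    `image` is a list of rows of floats in [0, 1]. All ranges except the
    +/-20 degree rotation are illustrative assumptions.
    """
    height, width = len(image), len(image[0])
    angle = math.radians(rng.uniform(-20.0, 20.0))  # rotation, per the article
    scale = rng.uniform(0.8, 1.2)        # assumed scale range
    brightness = rng.uniform(-0.1, 0.1)  # assumed additive shift
    contrast = rng.uniform(0.8, 1.2)     # assumed multiplicative gain
    noise_level = 0.1                    # assumed noise amplitude

    cy, cx = (height - 1) / 2.0, (width - 1) / 2.0
    cos_a, sin_a = math.cos(angle), math.sin(angle)
    out = []
    for y in range(height):
        row = []
        for x in range(width):
            # Map each output pixel back to its source (nearest neighbour).
            dx, dy = (x - cx) / scale, (y - cy) / scale
            sx = round(cx + cos_a * dx + sin_a * dy)
            sy = round(cy - sin_a * dx + cos_a * dy)
            if 0 <= sx < width and 0 <= sy < height:
                value = image[sy][sx]
            else:
                value = 0.5  # neutral grey where the source has no pixel
            value = contrast * value + brightness
            value += rng.uniform(-noise_level, noise_level)
            row.append(min(1.0, max(0.0, value)))  # clamp to [0, 1]
        out.append(row)
    return out


rng = random.Random(0)
patch = [[rng.random() for _ in range(32)] for _ in range(32)]
augmented = random_transform(patch, rng)
```

Each call draws a fresh set of parameters, so calling the function repeatedly yields many differently distorted versions of the same starting image.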

The result was a pattern that can be applied to clothing or bags, or carried on other objects, and the person wearing this "patch" would become invisible to surveillance systems that employ human detection algorithms.

The same technique can also be adapted to hide certain objects. For example, a "patch" could hide cars or bags if the surveillance system is configured to detect those objects instead of humans.

The current "patch" is not foolproof, as the surveillance system can very quickly re-acquire a person if the patch is not clearly visible or the angle changes, but it is a first step towards designing a system that fools some of the smart surveillance systems that are currently being deployed across the world.

More details about this work are available in the research paper titled "Fooling automated surveillance cameras: adversarial patches to attack person detection," published last week. Researchers also released the source code they used to generate the image patches.

This work is also one of the first times academics have tried to hide people from surveillance systems using 2D prints. Previous work has focused on hiding people from facial recognition software using eyeglasses with a special frame [1, 2]. Facial recognition was already struggling with people wearing hats.

Tricking image classification and object detection systems isn't a new trend. For years, academics have been fooling the object detection systems installed on autonomous cars, usually via stickers placed on road signs [1, 2] or paint dots left on the road.

