Android phones can now read books, signs, business cards via Google's Mobile Vision

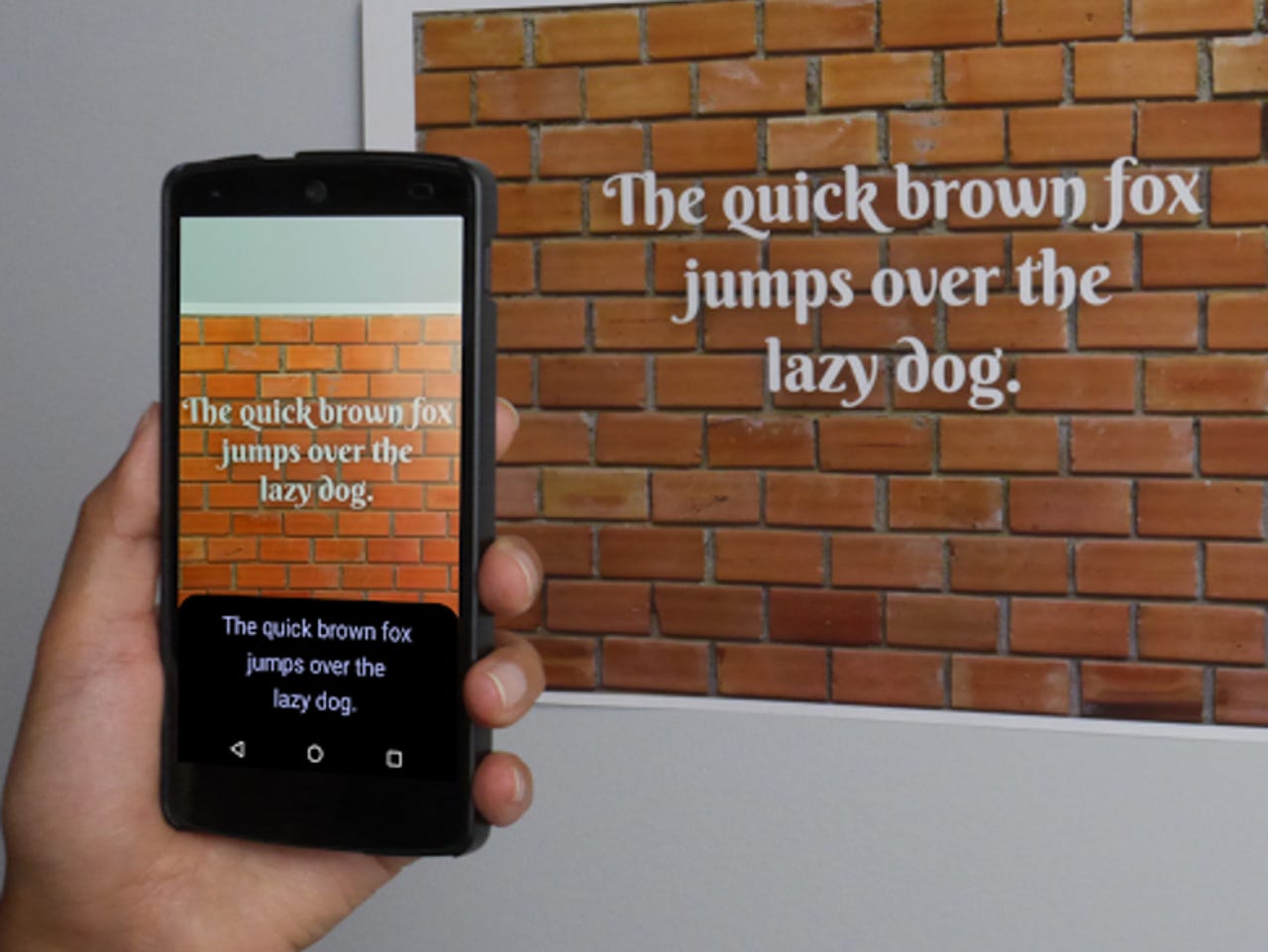

Google's Mobile Vision can now read text.

Google has introduced a new Text API for its Mobile Vision framework that lets Android developers add optical character recognition (OCR) to their apps.

The new Text API ships in the recently updated Google Play Services version 9.2, which also restores Mobile Vision, Google's framework for making it easy to add face detection and barcode reading to Android apps.

The Text API can currently recognize text in any Latin-based language. That covers most European languages, including English, German, and French, as well as Turkish.

Like the face and barcode detectors, it runs on the device and detects text in real time. That makes it suited to live camera applications and gives developers capabilities similar to those in the Google Translate app.

Google suggests a number of use cases for the technology. Developers can use it to organize photos that contain text, or to automate data entry from credit cards, receipts, and business cards.

It can also be combined with Google's Cloud Translate API to add translation features, or used to keep track of objects. Developers can also pair it with Android's TextToSpeech engine to build accessibility features.

The company has also provided an overview of how to integrate the OCR feature into Android apps.

The OCR engine segments what it finds into blocks (roughly paragraphs), lines, and words, and returns the recognized text for each.
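To make the block, line, and word hierarchy concrete, here is a minimal plain-Java sketch. It does not call Play Services: `OcrHierarchy`, `Node`, `word`, `line`, `block`, and `words` are hypothetical stand-ins for Mobile Vision's `TextBlock`, `Line`, and `Element` types, under the assumption that a block is made of lines and a line is made of words. It shows how an app might walk that hierarchy to pull out individual words, for example from a business card.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;

// Hypothetical model of an OCR result: blocks contain lines, lines contain
// words. These are NOT the real Play Services classes, only a sketch.
final class OcrHierarchy {

    // One recognized unit. A word has no components; a line's components
    // are words; a block's components are lines.
    static final class Node {
        final String value;
        final List<Node> components;

        Node(String value, List<Node> components) {
            this.value = value;
            this.components = components;
        }
    }

    // Builds a word (a leaf with no components).
    static Node word(String text) {
        return new Node(text, new ArrayList<Node>());
    }

    // Builds a line from its words; its text is the words joined by spaces.
    static Node line(Node... words) {
        List<Node> parts = Arrays.asList(words);
        List<String> texts = new ArrayList<>();
        for (Node w : parts) texts.add(w.value);
        return new Node(String.join(" ", texts), parts);
    }

    // Builds a block from its lines; its text is the lines joined by newlines.
    static Node block(Node... lines) {
        List<Node> parts = Arrays.asList(lines);
        List<String> texts = new ArrayList<>();
        for (Node l : parts) texts.add(l.value);
        return new Node(String.join("\n", texts), parts);
    }

    // Collects every word in reading order by walking block -> line -> word.
    static List<String> words(List<Node> blocks) {
        List<String> out = new ArrayList<>();
        for (Node b : blocks)
            for (Node l : b.components)
                for (Node w : l.components)
                    out.add(w.value);
        return out;
    }

    public static void main(String[] args) {
        Node card = block(
            line(word("Jane"), word("Doe")),
            line(word("Sales"), word("Manager")));
        System.out.println(card.value);                     // block text, two lines
        System.out.println(words(Arrays.asList(card)));     // [Jane, Doe, Sales, Manager]
    }
}
```

Keeping the text at every level lets an app choose its granularity: a translation feature might work per block, while data entry for a business card might look at individual lines or words.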

Google had pulled Mobile Vision from version 9.0 because of a serious bug in the service, and told developers not to add new features that relied on it until the issue was fixed.

The Mobile Vision API arrived last year with just the Barcode and Face APIs. Besides detecting faces, apps could locate facial features such as the eyes, nose, and mouth, and tell whether a person is smiling or has their eyes open.