Apple details gesture control for Apple Watch in patent filing

Apple's gesture sensor system for the Apple Watch

The Apple Watch could get even more personal in future with Apple exploring ways to sense movements in tendons and bones to read hand gestures as input commands.

When announcing the Apple Watch, Apple boasted that it was the most personal device ever, primarily because it could be used to capture and transmit the wearer's heartbeat by interrogating the veins beneath the wrist.

Apple appears to be keen on extending the device's sensing capabilities to other parts of the wrist area, including bones and tendons, but rather than measuring heart rate, it wants to detect movements of the user's hand, arm, wrist and fingers and interpret them as commands.

The gesture sensor system it outlines in a new patent application would offer an alternative to voice or touch input, the main ways of giving instructions to the Watch and iPhone today.

Apple's heart rate sensor for the Apple Watch, for example, works in tandem with a semiconductor and LEDs, which rapidly flash green light at the user's veins to gauge the volume of blood coursing through the wrist.

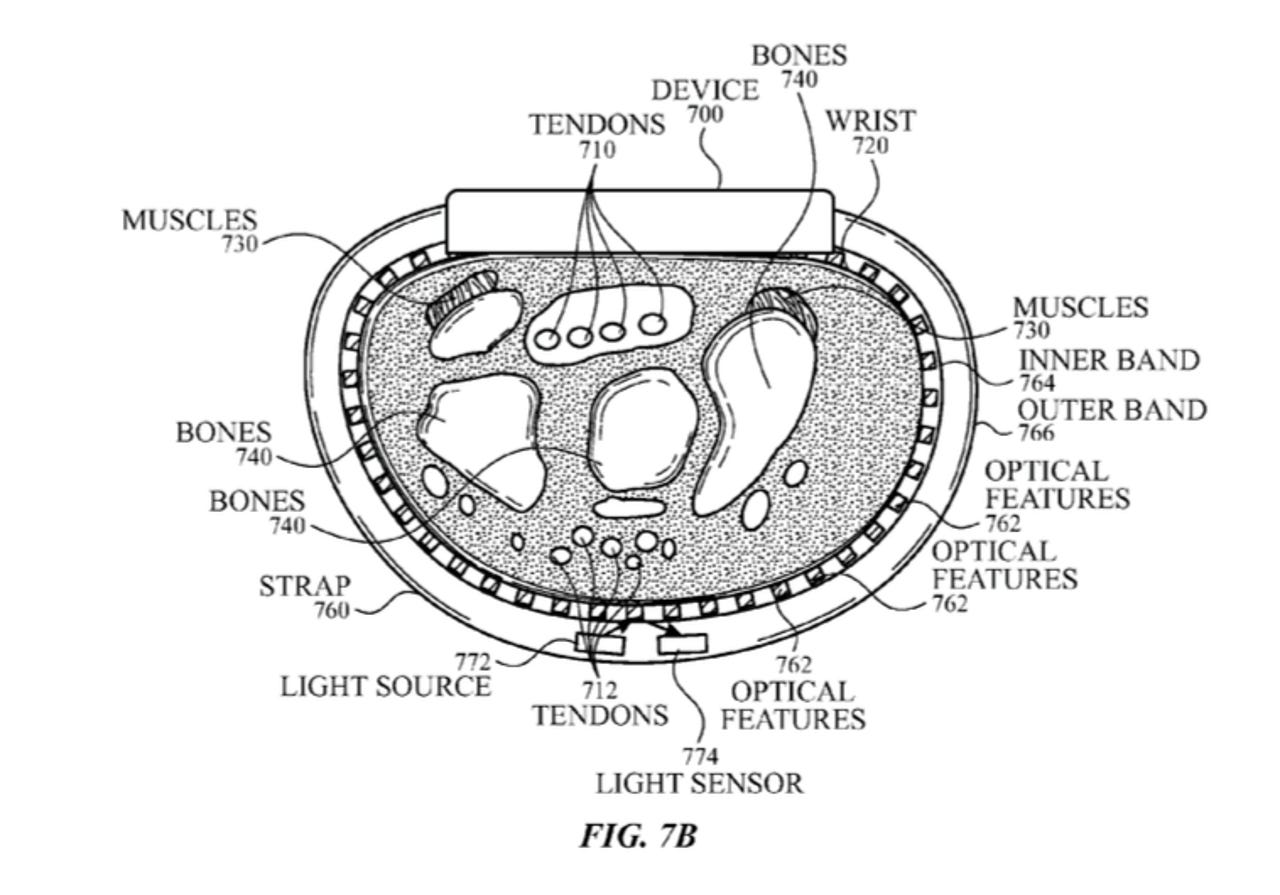

Instead of going under the skin, Apple is looking at an optical system that works on the surface of the skin to find out what's going on beneath it. The light sensors on a wrist strap and the device would "generate a reflectance profile from the reflectance of the light off the user's tendons, skin, muscles, and bones".

As Apple explains: "When the user flexes or extends the fingers, tendons can cause a ripple in the surface of the user's skin located at wrist. Each of the fingers can cause a ripple at a different location, and the light source and light sensor pair can detect the corresponding tendon moving closer to or away from the skin surface. As a tendon moves, the gap between the tendon and the light source and light sensor pair can change, resulting in a change in the reflectance profile."
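The idea in that passage can be illustrated with a minimal Python sketch. It assumes one reading per light source and light sensor pair and compares the current reflectance profile against a resting baseline. The sensor-to-finger layout, the threshold and the function name are all hypothetical and are not taken from the patent application.

```python
# Hypothetical mapping from sensor-pair index to the finger whose tendon
# passes closest to it; the patent application does not give such a layout.
SENSOR_TO_FINGER = ["thumb", "index", "middle", "ring", "little"]


def detect_moved_finger(baseline, current, threshold=0.05):
    """Return the finger whose sensor pair shows the largest reflectance change.

    `baseline` and `current` are reflectance profiles: one reading per
    light source and light sensor pair. Returns None when no reading
    differs from its baseline by more than `threshold` (an assumed value).
    """
    if len(baseline) != len(current) or len(baseline) != len(SENSOR_TO_FINGER):
        raise ValueError("profiles must have one reading per sensor pair")

    # Absolute change per sensor pair between the two profiles.
    deltas = [abs(c - b) for b, c in zip(baseline, current)]
    strongest = max(range(len(deltas)), key=deltas.__getitem__)
    if deltas[strongest] <= threshold:
        return None
    return SENSOR_TO_FINGER[strongest]
```

A real system would presumably need calibration per wearer and filtering for noise; this sketch only shows the comparison of two profiles.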

Other sensors that could be used to detect gestures include a myoelectric sensor to measure changes in electric signals in tendons when coupled with the user's movements, as well as sensors already found in phones, such as an accelerometer and a gyroscope.

In the case of the accelerometer and gyroscope, the device could be configured to read predefined gestures such as hand waving, palm up and down movements, or hand up and hand down movements.
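As a rough illustration of how predefined gestures might be read from motion data, the Python sketch below classifies a short window of accelerometer samples. The axis convention (z pointing out of the palm, x running side to side), the thresholds and the gesture names are assumptions made for this example; the patent application does not specify an algorithm.

```python
def classify_gesture(samples, tilt_threshold=0.7, wave_amplitude=0.5, min_swings=3):
    """Classify a window of accelerometer samples into a predefined gesture.

    `samples` is a list of (x, y, z) readings in units of g. All thresholds
    are illustrative guesses, not values from the patent application.
    """
    if not samples:
        return "unknown"

    # Count direction reversals of strong side-to-side (x axis) motion.
    swings = 0
    last_sign = 0
    for x, _, _ in samples:
        if abs(x) < wave_amplitude:
            continue  # ignore weak motion
        sign = 1 if x > 0 else -1
        if last_sign and sign != last_sign:
            swings += 1
        last_sign = sign
    if swings >= min_swings:
        return "hand_wave"

    # Otherwise use the average pull of gravity along z to judge palm direction.
    mean_z = sum(z for _, _, z in samples) / len(samples)
    if mean_z > tilt_threshold:
        return "palm_up"
    if mean_z < -tilt_threshold:
        return "palm_down"
    return "unknown"
```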

The device would also include a programmable interface that lets apps record gestures, such as pointing with one finger, and link them to specific commands. Alternatively, the device could collect data on gestures and the actions that typically follow them in order to anticipate the user's intended commands.
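Both approaches can be sketched in a few lines of Python. The class below is a hypothetical registry, not Apple's interface: `bind` stands in for an app explicitly linking a gesture to a command, while `observe` records which action followed a gesture so that the most frequent one can be suggested later. All names are invented for illustration.

```python
from collections import Counter, defaultdict


class GestureCommands:
    """Hypothetical registry linking gesture names to commands."""

    def __init__(self):
        self._bindings = {}                   # explicit links set by apps
        self._history = defaultdict(Counter)  # gesture -> follow-up action counts

    def bind(self, gesture, command):
        """Explicitly link a recorded gesture to a command."""
        self._bindings[gesture] = command

    def observe(self, gesture, action):
        """Record that `action` followed `gesture`."""
        self._history[gesture][action] += 1

    def resolve(self, gesture):
        """Return the bound command, else the most frequent follow-up, else None."""
        if gesture in self._bindings:
            return self._bindings[gesture]
        seen = self._history.get(gesture)
        if not seen:
            return None
        return seen.most_common(1)[0][0]
```

An explicit binding takes priority over learned behaviour here, which is a design choice of this sketch rather than anything stated in the filing.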

The system could also serve as a translator between deaf people who sign and those who cannot, with the device interpreting hand signals and using a speaker to announce the corresponding phrase or letter.

As usual, Apple's patent applications don't always turn up in actual products, but this one does signal one of the ways Apple is thinking about developing features that could further the Watch's potential as the most personal device.