C3.ai lands IBM partnership and more customers for its artificial intelligence and IoT platform

There are plenty of tools and point solutions that address bits and pieces of the challenge of delivering artificial intelligence (AI) and Internet of things (IoT) applications. C3.ai's focus is on delivering an end-to-end platform for developing, deploying and running these applications in production at scale.

Whether customers use every aspect of the C3.ai platform or not, big enterprise-scale companies seem to be attracted by that promise of quickly developing and running innovative, data-driven applications at scale. There was plenty of evidence of that fact at C3.ai's February 25-27 Transform conference in San Francisco, where customers including Bank of America, Shell, 3M and Engie detailed their deployments.

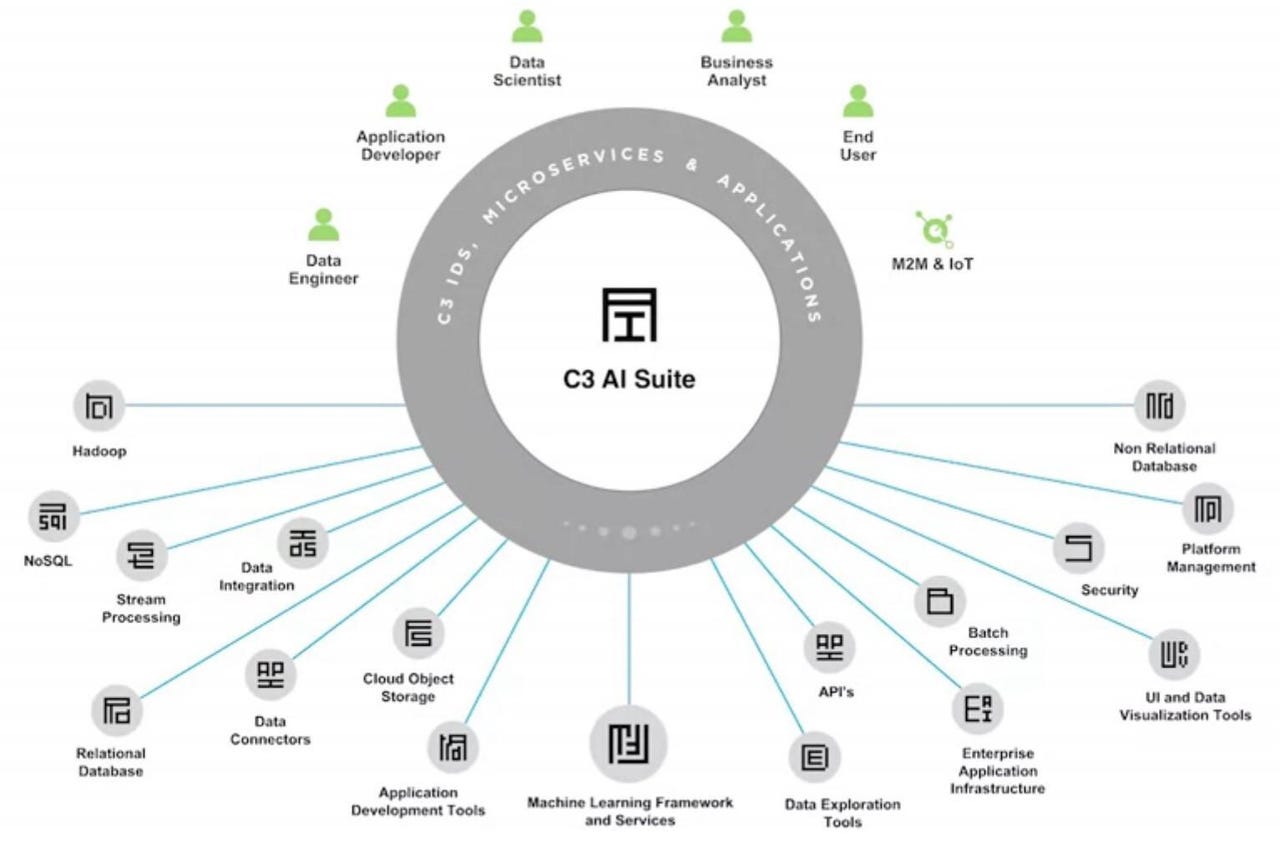

C3.ai's cloud-first platform is comprehensive, addressing the needs of developers, data engineers and data scientists, and the operational teams challenged with bringing applications into production at scale. Most of the components are based on open source software, such as PostgreSQL, Cassandra, Kafka and Hadoop. Yet the platform is also designed to be modular and open, so customers can swap in preferred tools and components, whether those might be integrated development environments, data platforms, AI and machine learning (ML) frameworks and tools, or DevOps components (see integrations slide, below).

The C3.ai platform is comprehensive and built on open-source software, yet it's also modular, so customers can use favored components.

C3.ai offers connectors and integrations to these and other popular integrated development environments, frameworks, suites, tools and DevOps options. (Source: C3.ai)

The core, differentiating aspect of the C3.ai platform is its "type system" architecture, which ensures development speed, repeatability and scalability. The type system uses metadata to represent everything in the end-to-end development-to-deployment process: all data and data sources, underlying storage technologies, data science models, data-processing services, applications and application services. Everything is represented in a consistent, abstracted way that hides the complexity of behind-the-scenes technologies and that simplifies application development, deployment and ongoing optimization.
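To make the idea of a metadata-driven type layer more concrete, here is a minimal Python sketch. It is not C3.ai's API: the `TypeDef` and `Registry` names, the field layout and the in-memory store are all hypothetical, chosen only to illustrate how application code can request data by logical type while the metadata decides which backend serves it.

```python
from dataclasses import dataclass
from typing import Any, Callable, Dict, List

# Hypothetical names throughout: this is NOT C3.ai's API, only an
# illustration of metadata-driven abstraction over storage backends.

Row = Dict[str, Any]
FetchFn = Callable[[str], List[Row]]


@dataclass
class TypeDef:
    name: str                # logical type name, e.g. "Compressor"
    fields: Dict[str, type]  # field name -> expected Python type
    store: str               # name of the backing store that holds the data


class Registry:
    """Maps logical type names to metadata and to a storage backend."""

    def __init__(self) -> None:
        self._types: Dict[str, TypeDef] = {}
        self._stores: Dict[str, FetchFn] = {}

    def register_store(self, name: str, fetch_fn: FetchFn) -> None:
        self._stores[name] = fetch_fn

    def register_type(self, type_def: TypeDef) -> None:
        # Refuse types that point at a backend nobody has registered.
        if type_def.store not in self._stores:
            raise KeyError(f"unknown store: {type_def.store}")
        self._types[type_def.name] = type_def

    def fetch(self, type_name: str) -> List[Row]:
        # The caller names a type; the metadata picks the backend.
        type_def = self._types[type_name]
        rows = self._stores[type_def.store](type_name)
        for row in rows:
            for field_name, expected in type_def.fields.items():
                if not isinstance(row[field_name], expected):
                    raise TypeError(f"{type_name}.{field_name} is not {expected.__name__}")
        return rows


def in_memory_store(type_name: str) -> List[Row]:
    # Stand-in for a real backend such as PostgreSQL or Cassandra.
    data = {"Compressor": [{"id": "c-1", "pressure": 101.5}]}
    return data.get(type_name, [])


registry = Registry()
registry.register_store("memory", in_memory_store)
registry.register_type(TypeDef("Compressor", {"id": str, "pressure": float}, "memory"))
compressors = registry.fetch("Compressor")
```

In this sketch, moving `Compressor` to a different backend would only change the `store` entry in its metadata; the `registry.fetch("Compressor")` call in application code would stay the same.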

C3.ai offers its platform as a service running on Microsoft Azure, but it can also run on private clouds or other public clouds. It's also possible to straddle hybrid and multi-cloud deployment, with clustered, multitenant instances running on-premises and on one or more public clouds.

C3.ai's combination of agility and scalability, along with flexibility and openness to third-party tools, seems to be gaining traction. At Transform 2020, company founder and CEO Tom Siebel, who also founded and long served as CEO of Siebel Systems, reported that C3.ai is on track for a 143% increase in bookings to $447 million in fiscal year 2020, up from $184 million in FY 2019. That's a respectable scale for an 11-year-old company with just under 500 employees. Yet, to realize the company's ambitions, Siebel acknowledged that C3.ai will need the help of larger partners.
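As a quick check on the growth figure, using only the two bookings numbers quoted above (in millions of dollars):

$$\frac{447 - 184}{184} = \frac{263}{184} \approx 1.43$$

That works out to roughly a 143% increase, matching the reported percentage.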

C3.ai founder and CEO Tom Siebel keynoting at the company's February 25-27 Transform event in San Francisco.

To that end, Siebel used Transform to announce C3.ai's latest partnership: a strategic alliance with IBM Services, which becomes C3.ai's first preferred global systems integrator. The two companies said the deal will "fast-track the delivery of enterprise-scale industry and domain specific AI applications."

The IBM deal follows on the heels of C3.ai's 2019 joint venture with Baker Hughes to deliver C3.ai's AI platform and applications, along with combined industry and technology expertise, to the oil and gas industry. And in 2018, C3.ai announced a strategic partnership with Microsoft to use Azure as its preferred public cloud platform (although it also announced separate partnerships that year with Amazon Web Services and Google Cloud Platform).

Daniel Jeavons, Shell's general manager of data science, details the company's data-driven predictive maintenance and optimization apps built on C3.ai.

What most impressed me at C3.ai's event was the number and depth of customer presentations detailing extensive deployments and ambitious deployment plans:

- Shell executive Daniel Jeavons, general manager of data science, updated attendees on a now-two-year-old C3.ai deployment. It started with predictive maintenance IoT applications, which now predict and prevent unplanned failures of literally thousands of valves and compressors. These now-monitored assets are in use in onshore and offshore oil and gas production facilities in more than 20 locations around the globe. Avoidance of outages at just one of these locations is, thus far, credited with saving $2 million. Next up, Shell is using the same data foundation and reusable components to develop a power-optimization application for electrical submersible pumps.

- 3M executive Jennifer Austin, manufacturing & supply chain analytics implementation leader, detailed progress on data-science-driven inventory optimization, pricing analytics and supply chain risk-management applications developed and delivered over the last two years. Austin acknowledged that 3M is still working on the challenges of change management and user adoption in certain areas. But the inventory optimization application alone is expected to generate as much as $200 million in annual savings while improving service levels.

- Bank of America executive Brice Rosenzweig, co-head of the bank's Data and Innovation Group, detailed progress on building out a more business-user-accessible data platform and early (not-yet-in-production) progress on cash management and lending optimization applications built on the C3.ai platform. A customer since last fall, Bank of America is now considering at least a dozen additional use cases that would leverage the C3.ai platform, Rosenzweig said.

Bank of America executive Brice Rosenzweig discusses progress on cash management and lending optimization applications in development.

My Take on C3.ai's Progress

C3.ai detailed its plans to push further into the banking and financial services sector, addressing use cases including anti-money laundering, customer churn management, cash management, securities lending and more. But executives stressed that C3.ai will emphasize "accelerants" of development and delivery rather than building out every possible application. It's a sound approach, as financial services firms resist one-size-fits-all solutions for what might be differentiating capabilities. At the same time, these accelerants, including reusable data objects and analytic objects, will all be built on the C3.ai platform. Thus, customers will have the advantages of rapid development and deployment, but their customized applications won't break when C3.ai updates its platform.

A key strength for C3.ai – and one that every customer I talked to seems to be exploiting – is the openness to swap in and use preferred tools for a given task while relying on the platform and type system architecture for abstracting complexity and running data-driven applications at scale. Bank of America, for example, uses DataRobot for data science exploration, while Shell uses a combination of Alteryx, Databricks and TIBCO Spotfire for rapid proof-of-concept work before bringing models and applications into production at scale on the C3.ai platform. C3.ai's platform and ambitions are big and broad, so I see the company's pragmatic openness to best-of-breed options and cloud-native services as sensible, realistic and in tune with the openness and flexibility that customers are after.

C3.ai executives repeatedly emphasized that the company's roadmap is heavily customer driven. In 2019 the focus was on adding customer-requested connectors and integrations, low-code development options and user-interface improvements. A Spring 2020 upgrade will deliver improvements to the data-exploration and hyperparameter optimization features of C3.ai's Ex Machina data science studio. The company's Summer 2020 release will focus on the developer experience, with improvements supporting serverless computing, self-healing capabilities and sub-second deployment speeds.

With its deals with IBM, Microsoft and Baker Hughes, C3.ai is clearly scaling up its sales and delivery capacity, yet it remains a midsized company that obviously takes pride in staying close to its customers. "There's no customer where we have a traditional arm's-length [vendor-to-customer] relationship," Siebel concluded in his keynote address. "All of our customers are on speed dial."
