Dallas Police's killer robot sparks debate

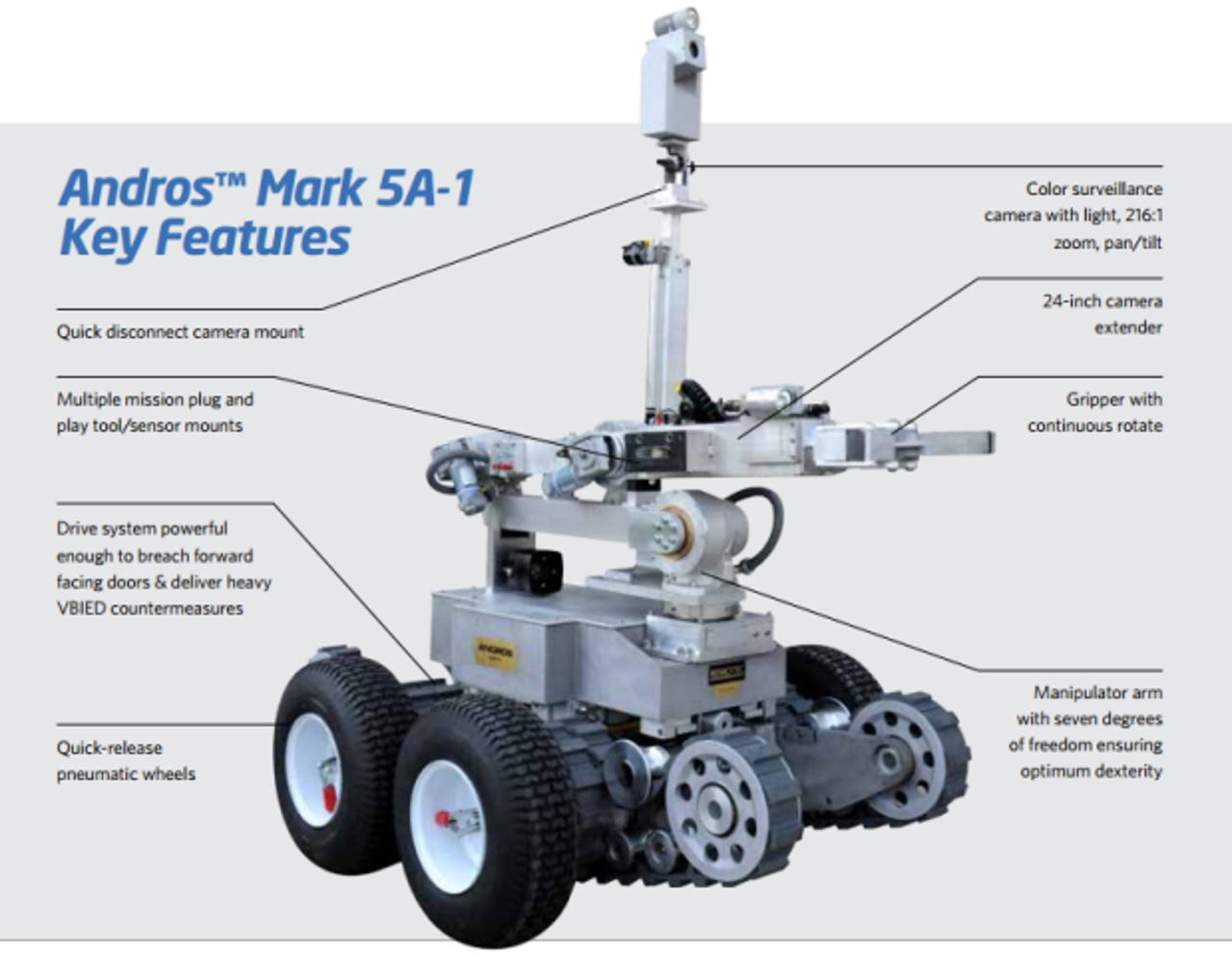

The robot was a Remotec Andros Mark V-A1, which the Dallas Police Department purchased in 2008 for $151,000. (Image: Northrop Grumman)

Several Dallas police officers had already been killed, and the shooter told negotiators he wanted to kill a few more. They had him cornered, but he kept firing shots, so Dallas Police Chief David Brown instructed officers to find a creative way to end the standoff.

Fifteen minutes later, they sent a robot to deliver a handful of explosives that quickly killed the suspect. This event, which took place last Thursday, was the first known instance in American history of police using a robot to kill.

"We saw no other option but to use our bomb robot and place a device on its extension for it to detonate where the suspect was. Other options would have exposed our officers to grave danger," said Chief Brown in a press conference. "The suspect is deceased as a result of detonating the bomb."

The action unfolded at a peaceful protest to honor the lives of Alton Sterling and Philando Castile. A sniper, who has since been identified as 25-year-old Micah Xavier Johnson, shot and killed five police officers and injured seven more.

During the standoff, Johnson told negotiators he wanted to kill white police officers because he was angry after seeing viral videos in which Sterling and Castile appear to have been killed by police. According to Chief Brown, during hours of negotiations, Johnson lied to negotiators, laughed, sang, and excitedly asked how many police he had killed. He also bragged about his plans to kill more.

Chief Brown said he wouldn't hesitate to use the lethal robot again, if he had to. "This wasn't an ethical dilemma for me," he said in a press conference. "I'd do it again. I'd do it to save our officers' lives."

This unprecedented police use of a robot to kill puts a spotlight on technology in law enforcement and raises important questions about how institutions could and should use robots.

We asked Shannon Vallor, a technology ethics expert based at Santa Clara University, to share her thoughts on the matter. She said, "Although the lethal use of the bomb robot in the Dallas case may well have been justified legally and morally, it opens up a far more disturbing possibility: the normalization, and significant expansion in use, of new forms of remote lethality in law enforcement."

The Dallas robot has started a long overdue public discussion about the policies that can be put in place to ensure responsible and safe use of robots in policing and other institutions. Robots are becoming less expensive and easier to use, so their widespread adoption is inevitable.

What will happen as robots are used by more cops in the future? What about hospitals, schools, airports, and private businesses? Vallor is president of the Society for Philosophy and Technology, a board member of the Foundation for Responsible Robotics, and author of a forthcoming book on the philosophy of technology. Not surprisingly, she had a strong opinion about the Dallas events. She told ZDNet:

"Of course, police snipers already allow for a form of 'killing at a distance'; but a bomb robot takes this a step further, and in a direction that requires immediate public attention and discussion. We already have significant problems ensuring that lethal force is used with appropriate restraint and justification by human officers in the field. Now we must face the prospect of adding a powerful and dangerous new means of killing into the mix."

The robot used in Dallas was wirelessly controlled by a human, but robotic technology is becoming more autonomous every day. This makes the debate about the appropriate use of robots even more urgent. Vallor points out that although there is already a fierce debate about the legal and moral status of lethal autonomous robots in military settings, the same conversation should be happening about domestic law enforcement.

"As is so often the case," she said, "the technology here is running far ahead of the legal and ethical constraints that will be needed to ensure that uses of police robots promote the already fragile goods of justice, safety, and public trust."