Facebook upgrades Iowa data center with M2M-friendly fabric

Facebook's homegrown engineering efforts are well known within the tech community -- perhaps to the extent that the social network's social graphs and data troves often feel like a tangled mess.

That could come with the territory for a technology company that has ballooned to encompass more than 1.35 billion users worldwide in just over a decade.

Big data management is a work in progress for virtually any business these days, and Facebook's momentum in this regard shows no sign of slowing down.

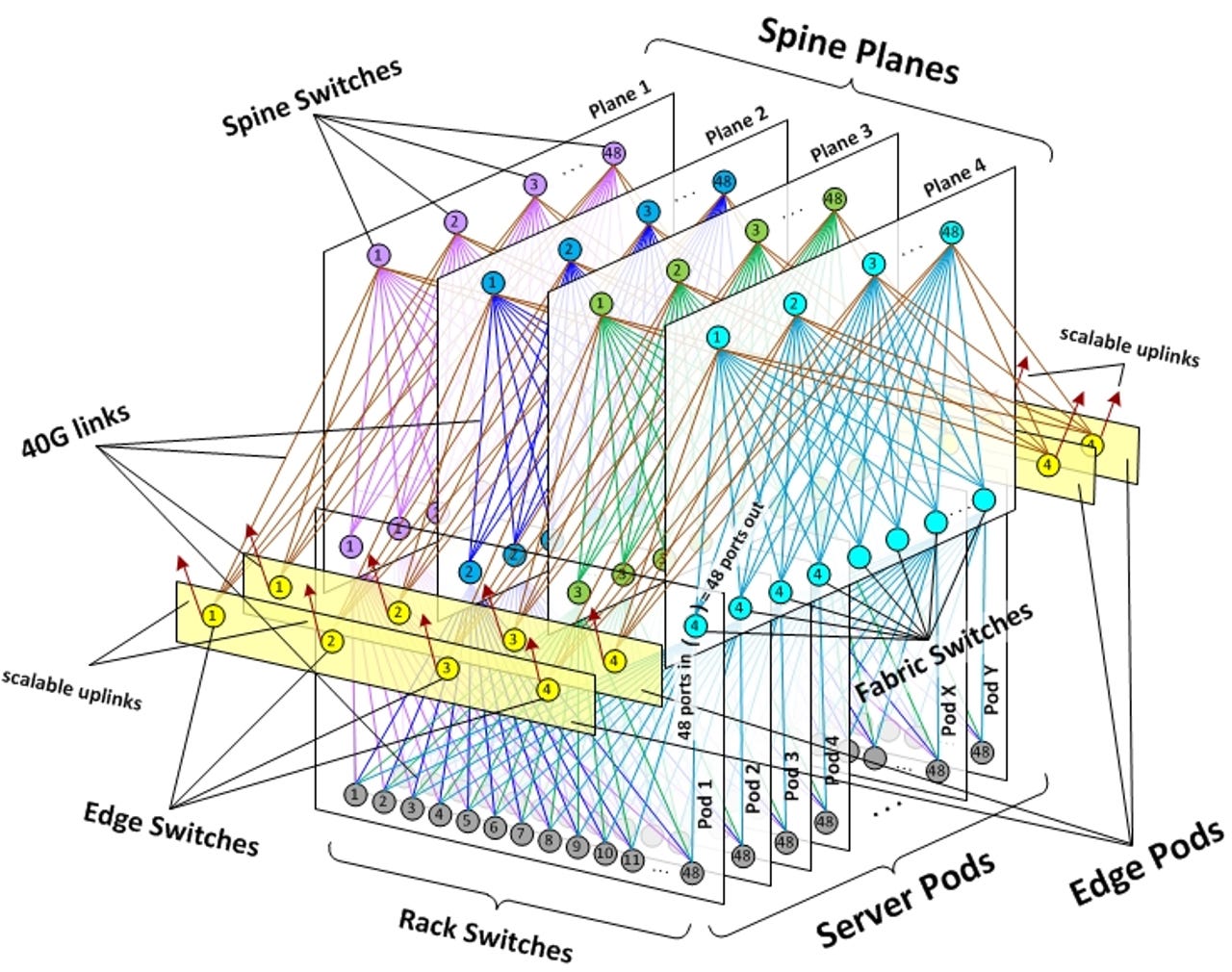

The Menlo Park, Calif.-headquartered company is bolstering its data center in Altoona, Iowa with a data center fabric, described as a next-generation architecture developed to speed up machine-to-machine traffic while making growth at scale easier to manage.

Such objectives are critical for Facebook as the social media brand continues to rely on mobile channels for much of its revenue.

According to its third quarter earnings report published in October, Facebook serves more than 1.12 billion monthly mobile users. Furthermore, revenue from mobile advertising jumped by 49 percent, now accounting for 66 percent of all advertising revenue as of Q3.

Following the establishment of Altoona 1 more than a year ago, Facebook unveiled plans earlier this year to add a second building, aptly named Altoona 2, to the compound.

Facebook has plotted data centers elsewhere in Prineville, Oregon; Forest City, North Carolina; and Luleå, Sweden.

With the new fabric installation, Facebook now touts Altoona as its most advanced data center in speeding up machine-to-machine traffic.

Alexey Andreyev, a network engineer at Facebook, explained in a blog post on Friday how the fabric was designed in order to adapt to rapidly changing needs of users, apps, and the network:

> The amount of traffic from Facebook to Internet – we call it “machine to user” traffic – is large and ever increasing, as more people connect and as we create new products and services. However, this type of traffic is only the tip of the iceberg. What happens inside the Facebook data centers – “machine to machine” traffic – is several orders of magnitude larger than what goes out to the Internet.

Andreyev noted Facebook's rate of machine-to-machine traffic growth "remains exponential, and the volume has been doubling at an interval of less than a year."
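To put that doubling rate in perspective, here is a rough back-of-the-envelope sketch in Python. It is not from Facebook's post: the function name `growth_factor` and the one-year default interval are assumptions chosen for illustration. Because Andreyev says the doubling interval is less than a year, treating it as exactly one year gives a conservative lower bound on growth.

```python
def growth_factor(years: float, doubling_interval_years: float = 1.0) -> float:
    """Multiplier on traffic volume after `years`, assuming the volume
    doubles once every `doubling_interval_years` (hypothetical parameter)."""
    if doubling_interval_years <= 0:
        raise ValueError("doubling interval must be positive")
    return 2.0 ** (years / doubling_interval_years)


if __name__ == "__main__":
    # One-year doubling is an assumed upper bound on the interval,
    # so these multipliers are lower bounds on the implied growth.
    for years in (1, 3, 5):
        print(f"after {years} year(s): at least {growth_factor(years):.0f}x")
```

Under that assumption, traffic would grow at least eightfold in three years and at least 32-fold in five, which helps explain why a network design that can be extended incrementally matters.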

But Andreyev admitted that building the fabric initially seemed "complicated and intimidating because of the number of devices and links."

"We took a 'top down' approach – thinking in terms of the overall network first, and then translating the necessary actions to individual topology elements and devices," Andreyev continued.

Interestingly, as Andreyev highlighted, the fabric’s physical and cabling infrastructure is actually less complex than the network topology drawings suggest, because the infrastructure teams and building designs accounted for shorter cabling lengths and other details throughout the process.

"In dealing with some of the largest-scale networks in the world, the Facebook network engineering team has learned to embrace the 'keep it simple, stupid' principle," Andreyev quipped. "By nature, the systems we are working with can be large and complex, but we strive to keep their components as basic and robust as possible, and we reduce the operational complexity through design and automation."

Images via Facebook