Facebook vows stronger action against repeat misinformation-sharing offenders

Facebook has promised to take stronger action against people who repeatedly share misinformation, introducing penalties such as account restrictions.

"Whether it's false or misleading content about COVID-19 and vaccines, climate change, elections, or other topics, we're making sure fewer people see misinformation on our apps," Facebook said in a blog post.

"Starting today, we will reduce the distribution of all posts in News Feed from an individual's Facebook account if they repeatedly share content that has been rated by one of our fact-checking partners."

Facebook claims to already reduce a single post's reach in News Feed if it has been debunked.

The social media giant is simultaneously launching a new alert tool that lets users know if they're interacting with content that's been rated by a fact checker.

"We currently notify people when they share content that a fact-checker later rates, and now we've redesigned these notifications to make it easier to understand when this happens," Facebook said.

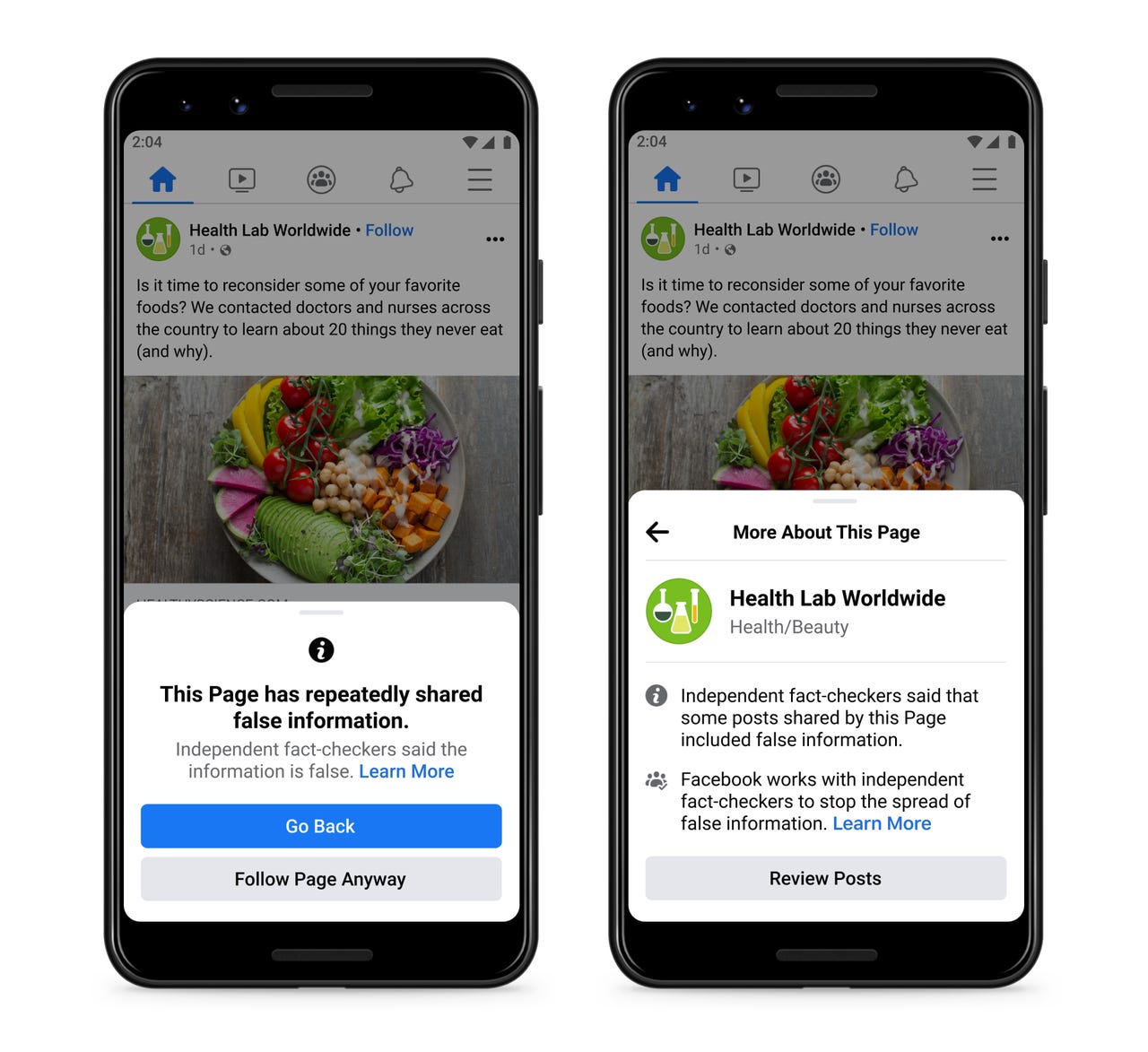

"We want to give people more information before they like a Page that has repeatedly shared content that fact-checkers have rated, so you'll see a pop up if you go to like one of these Pages."

The notification includes the fact checker's article debunking the claim, as well as a prompt to share that article with the user's followers. It also includes a notice that people who repeatedly share false information may have their posts moved lower in News Feed so other people are less likely to see them.

Users can then view further information, such as a notice that fact checkers have found that some posts shared by the Page include false information, as well as a link to more information about the platform's fact-checking program.

"This will help people make an informed decision about whether they want to follow the Page."
