Google Cloud partners with AMD for 'Tau' virtual machines for scale-out workloads

Google's Cloud unit on Thursday announced a new family of virtual machine offerings that it claims can offer large percentage improvements in performance and economics for the biggest cloud workloads compared to VMs from other providers, in particular Amazon's Graviton chip-based instances.

The Tau virtual machines offer the "best price-performance in the industry for scale-out applications," said Google Cloud vice president of infrastructure Sachin Gupta in an interview with ZDNet.

Scale-out applications, in this case, include internet applications of the kind run by social media companies. Twitter and Snap are debut customers, and both were quoted praising the Tau VMs. Both companies use the VMs with containers, as Google provides its Google Kubernetes Engine running on top of the instances.

Those scale-out applications will commonly be microservices, where details of the underlying machine instance are abstracted away. Examples include web serving, media transcoding, and large log file processing.

The Tau instances can provide up to 60 virtual CPUs per instance, and are based on AMD's third-generation EPYC server processor, "Milan". Google already offers the EPYC Rome part for numerous instances. The instances can have up to four gigabytes of memory per virtual CPU.

Tau will go live in the third quarter of this year. As an example of pricing, Google offers, "A 32vCPU VM with 128GB RAM will be priced at $1.3520 per hour for on-demand usage in us-central1." (See Google pricing here.)
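To put the quoted price in perspective, the short sketch below works out the per-vCPU hourly rate, the memory per vCPU, and a rough monthly bill for the 32-vCPU example. The price, vCPU count, and memory size come from Google's quote above; the 730-hour month is an assumption added here for illustration, and the function names are hypothetical.

```python
# Figures quoted by Google for on-demand usage in us-central1.
HOURLY_PRICE_USD = 1.3520
VCPUS = 32
MEMORY_GB = 128

# Assumption (not from the article): roughly 730 hours in an average month.
HOURS_PER_MONTH = 730


def per_vcpu_hourly(price_usd: float, vcpus: int) -> float:
    """Hourly price divided evenly across the instance's virtual CPUs."""
    return price_usd / vcpus


def monthly_cost(price_usd: float, hours: float = HOURS_PER_MONTH) -> float:
    """Cost of running one instance continuously for the given number of hours."""
    return price_usd * hours


price_per_vcpu = per_vcpu_hourly(HOURLY_PRICE_USD, VCPUS)
memory_per_vcpu = MEMORY_GB / VCPUS
monthly = monthly_cost(HOURLY_PRICE_USD)

print(f"Per-vCPU hourly price: ${price_per_vcpu:.5f}")
print(f"Memory per vCPU: {memory_per_vcpu:.0f} GB")
print(f"Approximate monthly cost: ${monthly:.2f}")
```

The memory-per-vCPU result matches the four-gigabyte ceiling described earlier. The monthly figure ignores any sustained-use or committed-use discounts, which the quote does not cover.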

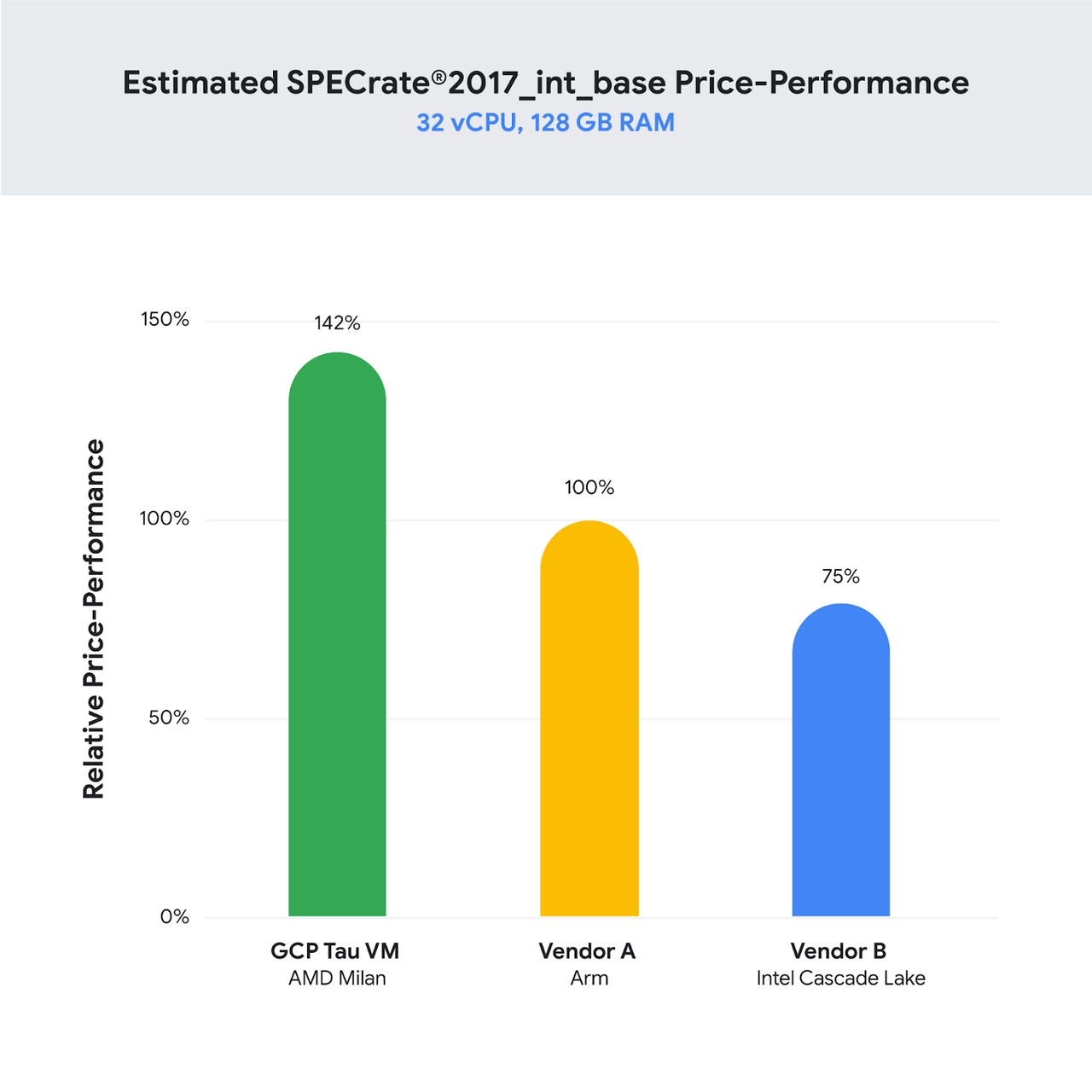

Google argues it delivers a 56% improvement in raw performance, as measured by the common SPECint processor benchmark, compared to services such as Amazon's Graviton-based ARM processor VMs. Google's own testing, it claims, also shows a 42% improvement in price-performance versus Amazon and others. (Details on the company's testing methodology can be found here.)
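Price-performance is usually taken to mean performance divided by price, so the two percentages can be related with simple arithmetic. The sketch below is only an illustration under a strong assumption that the article does not confirm: that both of Google's figures were measured against the same baseline VM and workload. The function names are hypothetical.

```python
def price_performance(performance: float, price: float) -> float:
    """Performance delivered per unit of price (higher is better)."""
    return performance / price


def implied_price_ratio(perf_gain: float, price_perf_gain: float) -> float:
    """Relative price implied by a performance gain and a price-performance gain.

    Both gains are fractions (0.56 means 56%) measured against one baseline,
    which is assumed to have performance 1.0 and price 1.0.
    """
    return (1.0 + perf_gain) / (1.0 + price_perf_gain)


# Google's claimed figures; the shared baseline is an assumption.
ratio = implied_price_ratio(perf_gain=0.56, price_perf_gain=0.42)
print(f"Implied price relative to baseline: {ratio:.3f}")

# Sanity check: the implied price reproduces the claimed price-performance gain.
print(f"Price-performance vs. baseline: {price_performance(1.56, ratio):.2f}")
```

If the assumption holds, a raw performance gain that is larger than the price-performance gain implies a somewhat higher price than the baseline. If the two figures come from different comparisons, the calculation says nothing about actual pricing.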

The Tau VMs appear to sit between Google's E2 instances, which scale to 32 virtual CPUs and focus on general-purpose uses, and its N2 instances, which scale higher, to 80 virtual CPUs per instance.

The Tau VMs "are particularly optimized, in the combination of CPU, memory, I/O, etc., that have been fine tuned to work best for scale-out environments."

Google Cloud expects it may incorporate other chips over time beyond the AMD part. "It's a bit of a race" in the CPU world at the moment, noted Gupta. "Somebody has a new CPU, and they're ahead for a bit."

"A thing that is particularly important for our customers is that we are able to deliver that price-performance improvement without a redesign of the application," said Gupta, in contrast to AWS Graviton-based instances, which require customers to port code from x86 to the ARM instruction set.

"Until now, the message they heard was, if you want something for scale-out, first you have to go do some surgery in your application, re-tune it, and maintain two stacks for your environment," said Gupta.

Google Cloud won't rule out other architectures, however. "If an answer in the future is we need to have an Intel-based, or an ARM-based instance in the Tau family, whatever it is that's best for our customers for scale-out, we would look to build against that and optimize for their application need."

That could include Google's custom silicon; Google Cloud already offers instances that use its TPU processors for machine learning.

"We are absolutely going to continue making investments on differentiating through silicon," said Gupta.