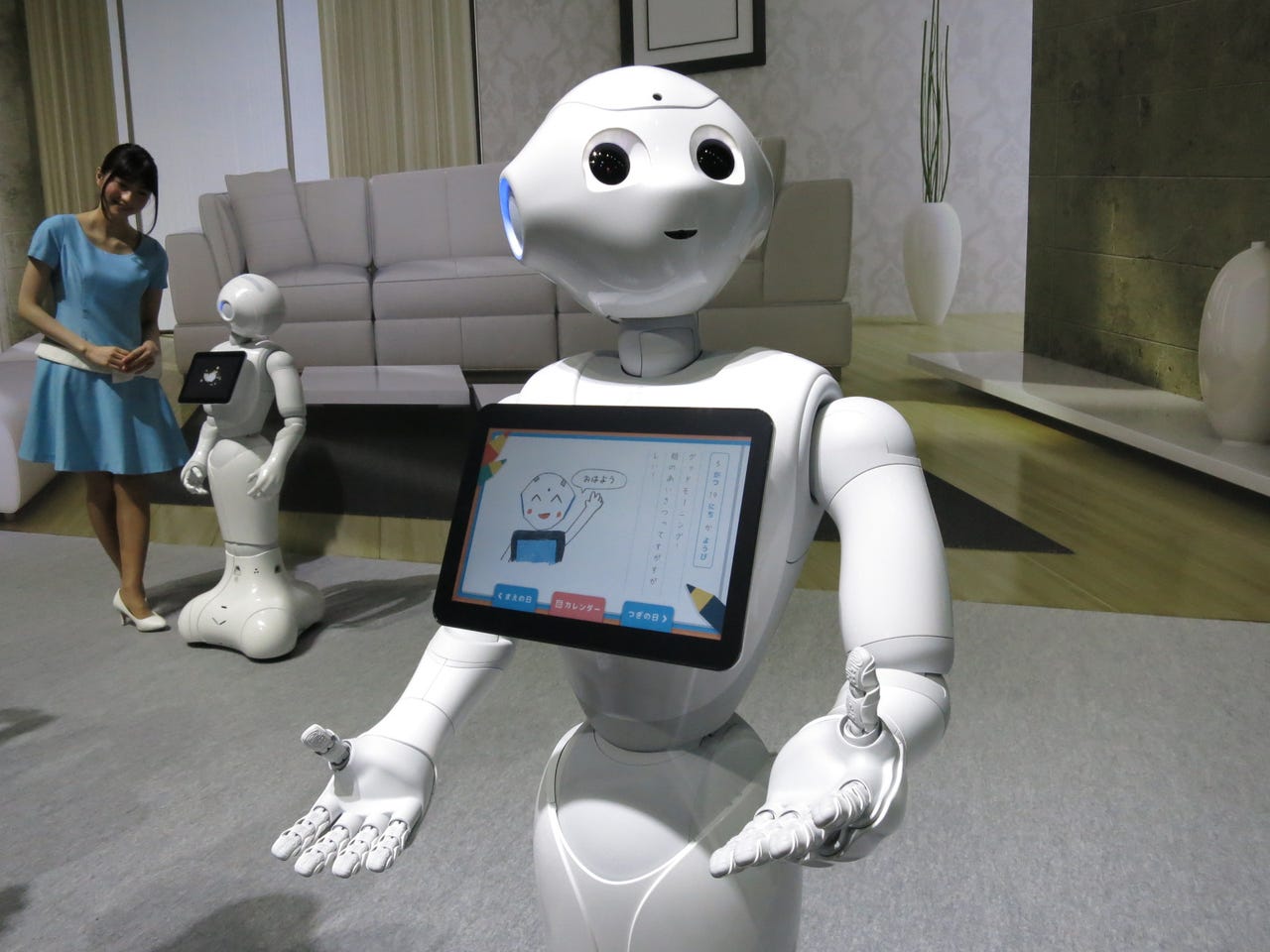

Google I/O: SoftBank, maker of the AI-powered Pepper robot, has news for U.S. developers

When Japanese mobile phone company SoftBank offered 1,000 of its emotionally intelligent Pepper robots to consumers last summer, the entire run sold out in under a minute.

At CES this year, SoftBank announced that IBM would be bringing Watson's artificial intelligence to Pepper, a bid to ready the robot for broad adoption in the home.

Now SoftBank is planning to branch into the U.S. At Google I/O today, the company announced that it is opening a new developer portal and adding an SDK for Android Studio to enable the development of custom applications for Pepper. The move continues to evolve the robot's capabilities ahead of its U.S. launch, which is planned for later this year.

"We'll also be announcing the opening of SoftBank's U.S. office, headquartered in San Francisco, which will be driving the efforts surrounding the launch of Pepper in the U.S.," a company spokesman told me.

Today's announcement was accompanied by a demonstration of Pepper's functionality and features for developers.


Pepper was developed for SoftBank by Aldebaran, a French robotics company specializing in emotionally intelligent humanoids that can function in unstructured environments like homes, shops, and specialized care facilities. Pepper has been working in SoftBank stores in Japan, assisting customers with cell phone purchases.

Because Japan's population is aging, the push to develop artificially intelligent humanoids could be an existential necessity. There simply won't be enough working-age adults to support the service and healthcare industries in the coming decades.

Aldebaran's partnership with SoftBank began in 2012. The mobile phone company wanted a cute, emotionally intelligent robot to interact with customers in its stores. Development took two years, and Pepper reported for work in 2014. In the months since, Aldebaran has upgraded key hardware, such as the camera and microphone systems, and has improved the robot's reliability.

Aldebaran has also used the data from its in-store bots to refine Pepper's emotional intelligence, a humanistic way of describing a machine's ability to recognize and interpret emotion by picking up on facial and voice cues.