Humans can now use mind control to direct swarms of robots

There have been some amazing breakthroughs that enable humans to control a single machine with their thoughts. The next step is figuring out how to operate an entire fleet of robots with mind control.

A team of researchers at Arizona State University's (ASU) Human-Oriented Robotics and Control Lab has developed a system for managing swarms of robots with brain power.

ASU's new system can be used to direct a group of small, inexpensive robots to complete a task. If one robot breaks down, it's not a big loss, and the rest can continue with their mission. ASU researcher Panagiotis Artemiadis tells ZDNet that swarms of robots can be used for "tasks that are dirty, dull, or dangerous."

In the future, humans could use their thoughts to manage a team of robots working together to accomplish a goal. Artemiadis says:

"Applications of this research can be found in a plethora of tasks that include delivery of medical help to remote areas, search and rescue to inaccessible environments and disaster areas or exploration of unknown and remote environments, ranging from underwater to space. Since most of the applications require the human in the loop, our work focuses on the optimization of the human control interface in order to increase the operation efficiency and accuracy."

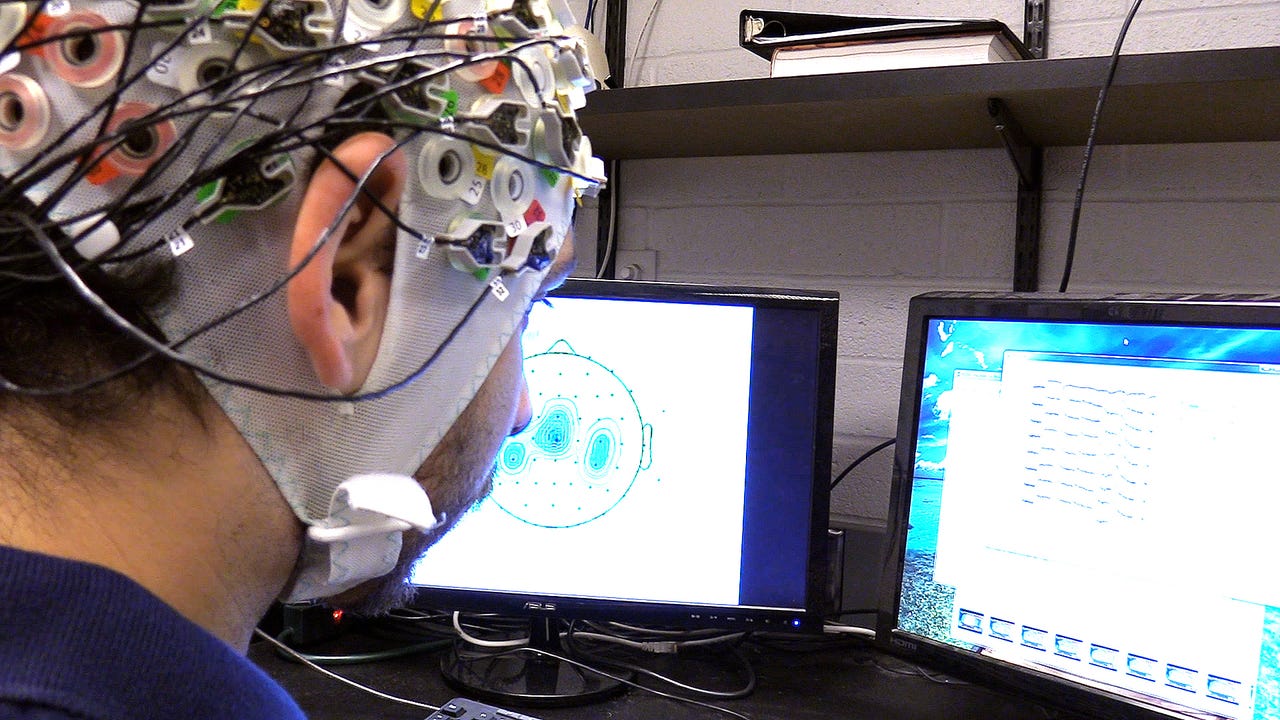

In the prototype system, a user wears a skull cap with 128 electrodes wired to a computer. The cap records electrical brain activity, which advanced learning algorithms then translate into commands that are sent wirelessly to the robots. The user watches the robots and mentally pictures them doing different tasks, such as spreading out or moving in a certain direction. A conventional joystick controls only one robot at a time, but the mind can direct an entire flock.


It's much easier for the brain to control objects that resemble human limbs, which is why this study is so surprising. Artemiadis says, "The complexity of a system that requires the brain to activate areas to control robotic artifacts that do not resemble natural limbs, in our case a swarm of drones, is significant and so far unexplored." His research group discovered that specific areas of the brain are activated when we observe collective behaviors, such as a flock of birds or a school of fish. He explains, "The fact that the brain can adapt to output control actions for a swarm of multiple robots is fascinating and quite useful for human-robots interaction."

The challenge is that people have to stay completely focused on the robots; they can't let their minds wander, or the system won't work. The ASU team also had to make sure the decoding of brain activity was accurate and repeatable. This wasn't easy, since brain recordings are time-varying and quite sensitive to the precise location of the electrodes.

"We tackled this challenge by developing advanced learning algorithms that adapt to changes of the recordings in real-time," says Artemiadis. "So even in cases the brain signals are varying with respect to time, our decisions for controlling the robot swarm are robust and accurate."

Although this research is still at an early stage, the researchers estimate that this kind of brain-swarm interface will start to complement or replace joysticks within the next 10 years. Artemiadis says, "We are going to see those interfaces used in applications ranging from military to entertainment and missions that involve exploration of unknown and/or dangerous environments."