'If it ain't broke don't fix it'? Bad advice can break your business

"If it ain't broke, don't fix it."

I heard this phrase originally from my father. Many of you also heard it for the first time from a family member, or maybe even a co-worker or a supervisor at one of your first jobs.

The phrase is widely attributed to Gainesville, Georgia-born Thomas Bertram Lance, the Director of the Office of Management and Budget during Jimmy Carter's presidency, who resigned during his first year in office amid a banking scandal and was acquitted of the charges in 1980.

Bert Lance is quoted by an unidentified author on page 33 of Nation's Business, the newsletter of the US Chamber of Commerce, in May of 1977.

"Bert Lance believes he can save Uncle Sam billions if he can get the government to adopt the motto, 'If it ain't broken, don't fix it!' He explains, 'That's the trouble with government: Fixing things that aren't broken and not fixing things that are broken.'"

Lance is certainly known for popularizing the saying in print, but it is believed that it had been used in the American South for many years before.

Regardless of the origin, I think the full quote, particularly the second half about not fixing things that are broken, merits further examination.

It's certainly true that some things don't need replacing, but we often put off replacing or fixing things out of complacency, being penny wise and pound foolish.

Or we take a cavalier attitude toward threats to business continuity, naively assuming that horrible things will never happen to us, only to other people.

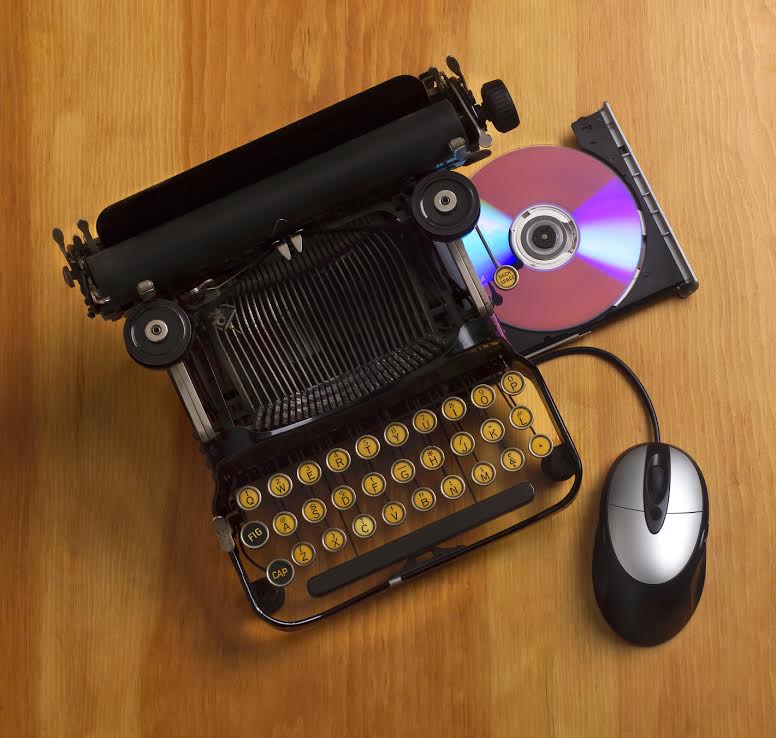

It's one thing not to replace your refrigerator, your car, or your TV set because it works fine. But keeping around End-of-Life system software and aging computer systems in general? I'm not sure even Bertram Lance would agree with that one.

The Windows XP End-of-Life event has been perplexing as I've heard old Bert's quote again and again as the reason for keeping those old PCs and software around.

Bert died in August of 2013 at the age of 82, but had it been explained to him that his widely used quote was serving as justification for potentially exposing government, businesses and individuals to malware that could result in financial damages and leaks of Personally Identifiable Information (PII), he'd probably have asked that it be stricken.

During my 20 years as an IT industry consultant, both as a freelancer and as a systems architect at IBM and Unisys, I encountered countless examples of organizations putting off upgrades of clunky old stuff because it was inconvenient or nobody wanted to spend the money.

Many of those things turned out to be single points of failure that held up large migrations and application modernization efforts, and ended up causing delays that were considerably more expensive than the money that the organization thought they would be saving by putting upgrades off.

Like, I dunno, the huge insurance company I once worked with that decided not to update its mainframe's 3270 communications stack to TCP/IP and instead left its physically bus-connected (and unsupported) OS/2 2.0 SNA gateway in place, only to face a massive datacenter consolidation and VMware virtualization effort a decade later.

Or the Internet Service Provider whose provisioning and billing system was tied to home-grown scripts and off-the-shelf software written for an EOL version of SunOS on an ancient SPARC 10 that was left running for over a decade, only to discover when the hardware failed that the backup of the script code and the orphaned off-the-shelf software would not run so easily on a modernized UNIX OS.

Or the public transit authority of a large city, which had a 30-year-old mainframe still in service operating the switching system for its train cars. That system needed code modifications whenever alterations to the train lines were made. They actually had to pull the person who wrote the original program out of retirement, because the system wasn't supported anymore by the original vendor and nobody on staff there had those skill sets anymore.

I could go on with dozens of examples of systems that weren't "broken" but were left in place, and the consequences that followed.

But sometimes IT gets on the cluetrain because the consequences of avoiding remediation of a problem are so dire that any inaction could risk a catastrophic business continuity loss.

If prolonged, such a loss or a data exposure could undermine your company's reputation and result in loss of customer confidence, or even cause you to be fined heavily by the government. Not to mention the damages caused by the downtime alone.

The last time the industry hopped on the cluetrain was the Year 2000 problem.

XP's End of Life compared to Y2K

Windows XP's End of Life has been compared to the Y2K event because of the cavalier attitude many end-users as well as businesses have about it. Just yesterday, a follower of mine on Twitter remarked that Y2K was "overblown."

I take great offense to that, because I and countless IT professionals worked full time for several years on the remediation of software and systems in anticipation of that event. Unlike with Windows XP, however, the consequences to IT business continuity of avoiding remediation were well understood.

Had the date field correction not been made, transactions on many systems on the morning of January 1, 2000 would have reverted to January 1, 1900. The results would have been catastrophic for infrastructure, banking, and any system that was transaction-oriented or date-dependent.
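To make the failure mode concrete, here is a minimal Python sketch of how a two-digit year field rolls back to 1900, along with date windowing, one common remediation technique of the era. The `YYMMDD` record layout, the function names, and the pivot value of 50 are assumptions for illustration only; they are not taken from any particular system I worked on.

```python
from datetime import date


def expand_year_naive(yy: int) -> int:
    """Legacy-style expansion: assume every two-digit year is 19xx."""
    return 1900 + yy


def expand_year_windowed(yy: int, pivot: int = 50) -> int:
    """Windowing fix (hypothetical pivot): years below the pivot become 20xx."""
    return (2000 if yy < pivot else 1900) + yy


def parse_yymmdd(text: str, expand=expand_year_naive) -> date:
    """Parse a six-character YYMMDD field using the given year expansion."""
    yy, mm, dd = int(text[0:2]), int(text[2:4]), int(text[4:6])
    return date(expand(yy), mm, dd)


# A transaction opened on New Year's Eve 1999 and posted the next morning.
opened = parse_yymmdd("991231")
posted_naive = parse_yymmdd("000101")
posted_fixed = parse_yymmdd("000101", expand_year_windowed)

print(posted_naive)                  # 1900-01-01
print((posted_naive - opened).days)  # negative: posted "before" it was opened
print((posted_fixed - opened).days)  # 1
```

With the naive expansion, anything that computes elapsed time, such as interest, billing periods or expiry checks, suddenly sees a span of roughly minus one hundred years. Windowing only postpones the problem to the pivot year, which is why many shops widened the field to four digits instead where they could.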

The worldwide cost of remediating that bug has been estimated at over $300 billion USD. It included programming services to fix mainframe, minicomputer, UNIX and PC application code; OS upgrades and patches for mainframes, minicomputers and UNIX systems; and mass patches and upgrades to PC software and operating systems.

All of us who were working in IT support during the evening of December 31, 1999 stayed up all night with our pagers on, ready to run into work at a moment's notice. Many of us were even told to stay at work and forgo the usual New Year's Eve festivities.

On the morning of January 1, 2000, nothing happened. Very few systems around the world were affected.

The reason nothing happened, for the most part, is not that the Y2K bug was overblown. It's that IT was proactive and did its job. And I can assure you everyone was well aware that a business continuity loss due to a lack of proactivity would have meant heads rolling in many organizations.

Windows XP: The end of the line

Just like Y2K, ignoring XP's End-of-Life is another catastrophe waiting to happen. But unlike Y2K, there's no magic date where systems are going to just blow up. Instead, after today, security patches and updates for Windows XP will cease.

And then it will be open season for hackers armed with zero-day attacks that the OS can no longer be defended against.

XP was an operating system designed for a previous decade, when malware attacks were far less sophisticated and the vectors for exposure to threats were less complicated. Most of us did not have residential broadband thirteen years ago when the OS made its debut, and even corporate Internet access was mostly confined to email in most organizations.

Arguably, with service packs and patches the old boy has held up well, but when compared to the modern systems architecture and security framework of Windows 7 and Windows 8.1, there's no comparison. It's like comparing reinforced concrete to wet toilet paper.

If you haven't begun the remediation process for XP within your organization, now would be a good time to start. Because you don't want to be the person responsible for prolonged inaction when the bad guys come out to play.

Have you begun remediation of Windows XP within your organization yet? Talk Back and Let Me Know.