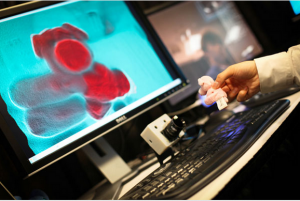

Microsoft showcases new Kinect-centric projects at its TechFest research fair

Enthusiasts and third-party developers aren't the only ones dabbling with Microsoft's Kinect sensor. Microsoft's own researchers are, too.

On March 6 at the opening TechFest day -- where Microsoft allows some of its press pals and invited guests to get a preview before closing the doors and turning the event into an employee-only one -- Microsoft showed off some additional Kinect-centric research projects. (Note: As I am not at the event, I can only link to Microsoft's descriptions and photos.)

Additional Microsoft Research projects using Kinect:

Beamatron: Another augmented-reality concept that combines a projector and a Kinect camera on a pan-tilt moving head. The moving head can place the projected image almost anywhere in a room, while the depth camera enables the correct warping of the displayed image for the shape of the projection surface. How could this be used in the real world? "A projected virtual car can be driven on the floor of the room but will bump into obstacles or run over ramps," the Redmondians wrote.

SpatialEase: An Xbox 360 Kinect game for learning the language of space using 'embodied' learning that connects language with thought and action. From the description: "The learner must quickly interpret second-language commands, such as the translation of 'move your left hand right,' and move his or her body accordingly."

Shake n' Sense: A Microsoft Cambridge project that looks to mitigate interference when two or more Kinect cameras point at the same scene. It makes use of mechanical augmentation of the Kinect and doesn't require modification of the Kinect firmware, host software or inner guts.

While Kinect and natural-user-interface technology are the darlings of Microsoft Research brass these days, Microsoft is also showing off a number of non-Kinect-based projects at TechFest this week. Among them are several projects making use of new search techniques. There are also a few Azure-based demos, including something Microsoft is describing as "Bing-enabled Azure data services for the enterprise."

From Microsoft's write-up of this Bing/Azure/services mash-up:

This project "identifies key Azure data services that have the potential to be widely useful for enterprises by leveraging the combination of Bing data assets, the Microsoft cloud computing infrastructure and deep data analytics. To bring home the opportunities, the project shows how Microsoft’s enterprise software can leverage these data services, and illustrates Bing-enabled enhancements that SharePoint Search and Microsoft Office products and services can potentially leverage."