Microsoft trains machine to answer, 'What's that animal in your basket?'

Microsoft Research and a team at Carnegie Mellon University have developed a system that trains machines to examine an image and answer questions about it, phrased the way a human might ask them.

Microsoft's latest effort in developing the tools for artificial intelligence focuses on the field of so-called 'image-question answering', which aims to automatically answer natural-language questions about the content of a given image.

That's harder than it sounds, even for a seemingly simple image of a dog sitting in a bike basket. Answering the question, 'What's sitting in the basket on a bicycle?' requires multi-step reasoning, the researchers from Carnegie Mellon and Microsoft Research noted.

The system would, they pointed out, "need to first locate those objects (eg basket, bicycle) and concepts (eg sitting in) referred to in the question, then gradually rule out irrelevant objects, and finally pinpoint the regions that are most indicative to infer the answer (ie dogs in the example)."

The area of study builds on advances in computer vision and natural-language processing -- areas in which Google has also boasted progress recently using artificial neural networks.

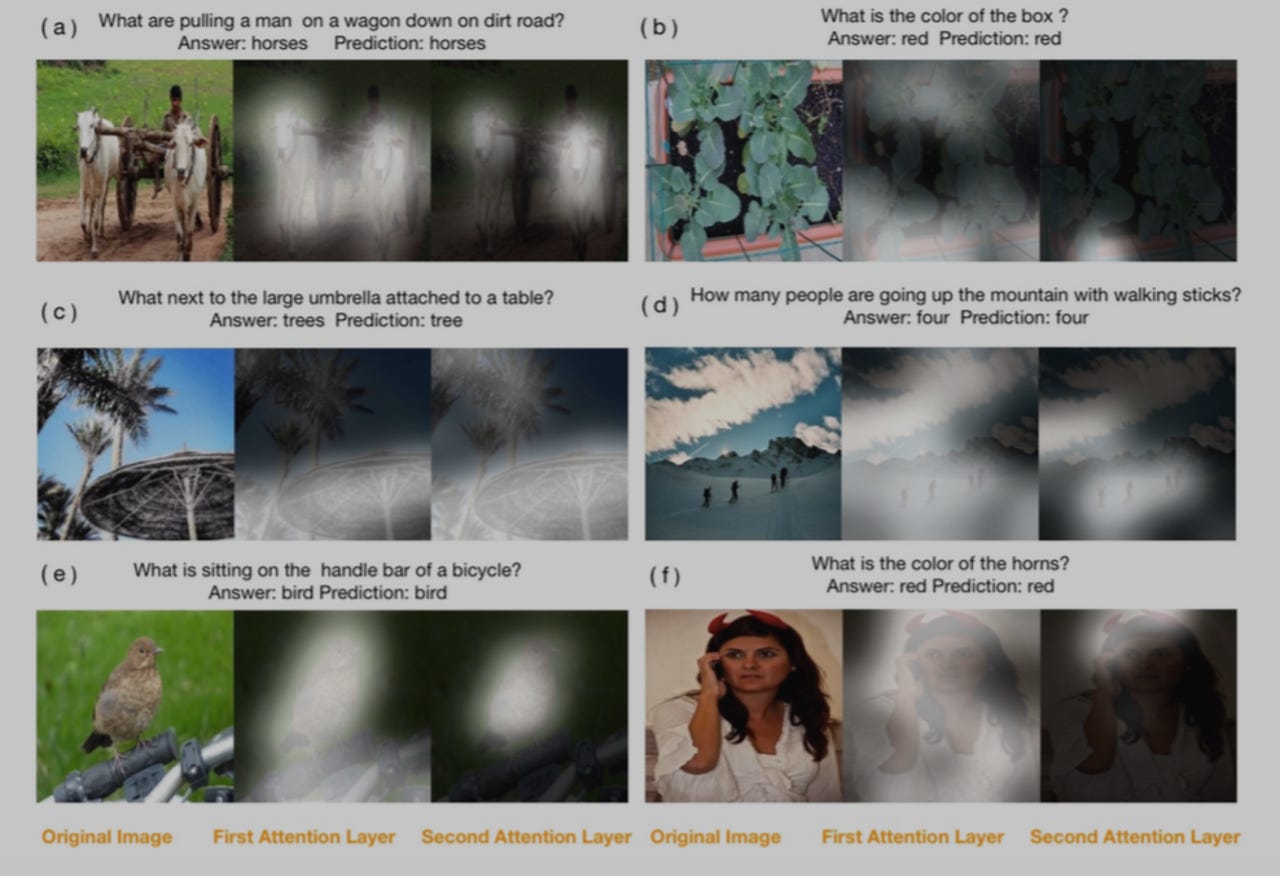

Microsoft's answer to the multi-step reasoning challenge in image-question answering is Stacked Attention Networks, which offer a multi-layered approach to the "attention mechanism" that has been used to solve image captioning and machine-translation challenges. The researchers offer a deep dive into how their model works in their paper.
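To make the layered attention idea above more concrete, here is a minimal Python sketch. It is not the researchers' implementation: the function name `stacked_attention`, the hand-made two-number region features and the plain dot-product scoring are all assumptions chosen for illustration, whereas the real model learns its features and scoring with deep neural networks. The sketch only shows the general pattern of scoring image regions against the question, blending the best-matching regions into the query, and then attending again with the refined query.

```python
import math


def softmax(scores):
    """Turn raw scores into weights that sum to 1."""
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def stacked_attention(regions, question, num_layers=2):
    """Hypothetical helper: repeated attention over image regions.

    regions  -- list of feature vectors, one per image region
    question -- feature vector for the question
    Returns the final query vector and the attention weights per layer.
    """
    query = list(question)
    history = []
    for _ in range(num_layers):
        # Score each region against the current query (assumed: dot product).
        weights = softmax([dot(region, query) for region in regions])
        # Weighted sum of region features: the "attended" image summary.
        attended = [
            sum(w * region[d] for w, region in zip(weights, regions))
            for d in range(len(query))
        ]
        # Refine the query with what was just attended to.
        query = [q + a for q, a in zip(query, attended)]
        history.append(weights)
    return query, history


# Toy, made-up features for three regions: bicycle, background, dog.
regions = [[1.0, 0.0], [0.0, 0.0], [1.0, 1.0]]
question = [0.5, 1.0]

query, history = stacked_attention(regions, question)
for layer, weights in enumerate(history, start=1):
    print(f"layer {layer}:", [round(w, 2) for w in weights])
```

In this toy run, the second layer places more weight on the third ("dog") region than the first layer does, which mirrors the idea of gradually ruling out irrelevant regions before pinpointing the one that answers the question.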

Microsoft sees the "breakthrough" as potentially leading to new applications that make real-time recommendations and anticipate human needs -- for example, a warning system for bicyclists that relies on a helmet-mounted camera.

The system could continually ask itself questions such as, 'What is on the left side behind me?' or 'Are any other bikes going to pass me from the left?' or 'Are there any runners close to me that I might not see?', Microsoft Research points out in a blog post.

The idea is to model human behaviour to solve problems, with the answers relayed as suggestions to the biker via a speech synthesiser. Those answers could include directions to avoid accidents.

Microsoft doesn't mention autonomous vehicles, but it recently made a roundabout commitment to work with Volvo on the technology, which presumably could benefit from this too.

"With this system, the image goes through deep neural networks, deciding which regions are relevant to the question, and suppressing irrelevant information," Xiaodong He, a deep-learning researcher at Microsoft Research who contributed to the paper, said.