Nvidia says it can prevent chatbots from hallucinating

Nvidia, the tech giant responsible for inventing the first GPU, a now-crucial piece of technology for generative AI models, unveiled new software on Tuesday that has the potential to solve a big problem with AI chatbots.

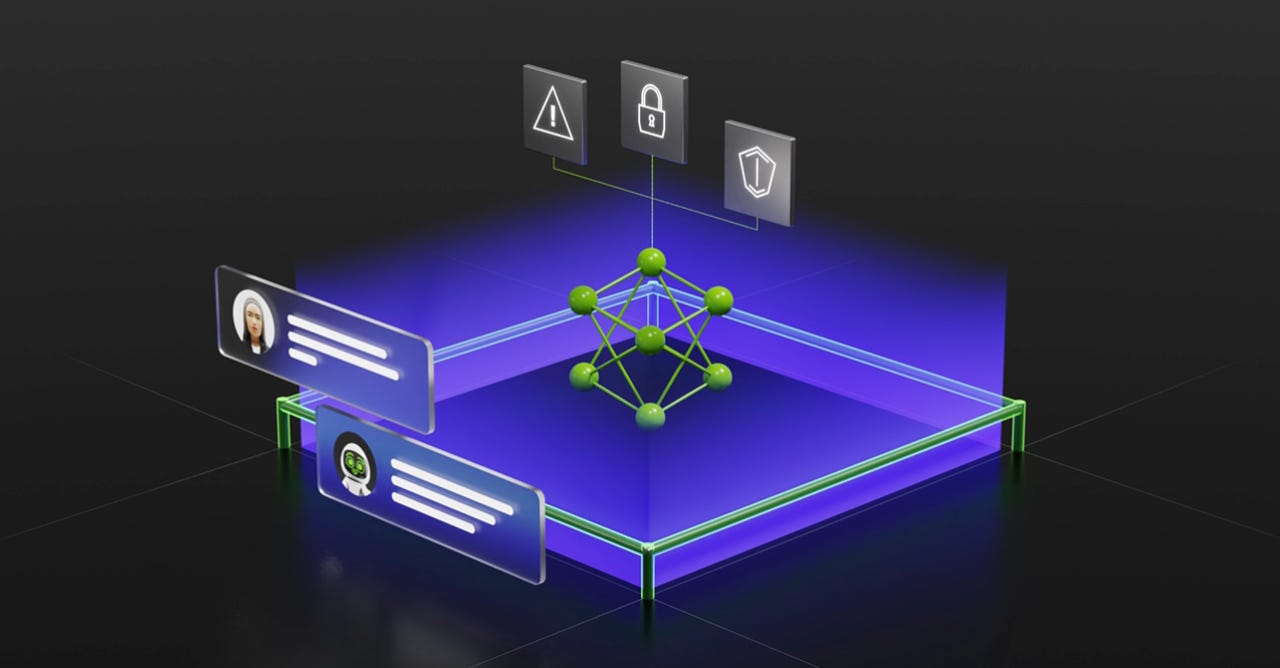

The software, NeMo Guardrails, is supposed to ensure that smart applications, such as AI chatbots, powered by large language models (LLMs) are "accurate, appropriate, on topic and secure," according to Nvidia.

AI developers can use the open-source software to set up three types of boundaries for AI models: topical, safety, and security guardrails.

The topical guardrails would prevent the AI application from exploring topics in areas that are not necessary or desirable for the intended use. Nvidia gives the example of a customer service assistant not answering questions about the weather.

This type of guardrail would have been useful for Bing Chat when it was first released and began divulging company secrets.
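To make the idea of a topical guardrail concrete, here is a minimal sketch in Python. It is not the NeMo Guardrails API; the topic names, keyword lists, and the `match_topic` and `guarded_reply` helpers are all hypothetical, and a real system would likely rely on something more robust than keyword matching.

```python
import re
from typing import Callable, Optional

# Hypothetical topics and keywords for a customer service assistant.
# Illustration only: this is not how NeMo Guardrails is configured.
ALLOWED_TOPICS = {
    "billing": {"invoice", "refund", "charge", "payment"},
    "shipping": {"delivery", "tracking", "package", "shipping"},
}

REFUSAL = "Sorry, I can only help with billing and shipping questions."


def match_topic(message: str) -> Optional[str]:
    """Return the first allowed topic whose keywords appear in the message."""
    words = set(re.findall(r"[a-z]+", message.lower()))
    for topic, keywords in ALLOWED_TOPICS.items():
        if words & keywords:
            return topic
    return None


def guarded_reply(message: str, answer_fn: Callable[[str], str]) -> str:
    """Pass the message to the underlying model only if it is on topic."""
    if match_topic(message) is None:
        return REFUSAL
    return answer_fn(message)
```

With this sketch, a question about the weather never reaches the model and gets the refusal message, while a question about a refund is passed through to `answer_fn`.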

The safety guardrails are an attempt to tackle the issue of misinformation and hallucinations.

When employed, these guardrails are meant to ensure that AI applications respond with accurate and appropriate information. For example, the software can be used to enforce bans on inappropriate language and to require citations of credible sources.

The security guardrails would simply restrict apps from reaching external applications that are deemed unsafe.
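One simple way to picture a security guardrail is an allowlist check that runs before an app contacts any external service. The Python sketch below is an assumption-laden illustration, not Nvidia's implementation; the host names and the `is_allowed` helper are hypothetical.

```python
from urllib.parse import urlparse

# Hypothetical set of external hosts the app is permitted to reach.
TRUSTED_HOSTS = {"api.example.com", "search.example.org"}


def is_allowed(url: str) -> bool:
    """Allow only HTTPS requests to hosts on the trusted list."""
    parsed = urlparse(url)
    return parsed.scheme == "https" and parsed.hostname in TRUSTED_HOSTS
```

The check compares the full host name rather than a prefix, so a lookalike address such as `https://api.example.com.evil.net/` is rejected.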

Nvidia claims that virtually all software developers will be able to use NeMo Guardrails since it is simple to use, works with a broad range of LLM-enabled applications, and works with all the tools that enterprise app developers use, such as LangChain.

The company will be incorporating NeMo Guardrails into its Nvidia NeMo framework, which is already mostly available as open-source code on GitHub.

It will also be offered as part of the Nvidia AI Enterprise software platform and as a service through Nvidia AI Foundations.