Nvidia updates GeForce graphics, Tegra mobile roadmaps

Nvidia’s annual GPU Technology Conference (GTC), which takes place this week in San Jose, has always been about showing the world how graphics processors are for more than just playing Titanfall. With GPUs now widely used in supercomputers, energy exploration, life sciences and genomics, molecular dynamics and big data analytics, Nvidia can credibly claim that it has grown from a chip company to a visual computing company.

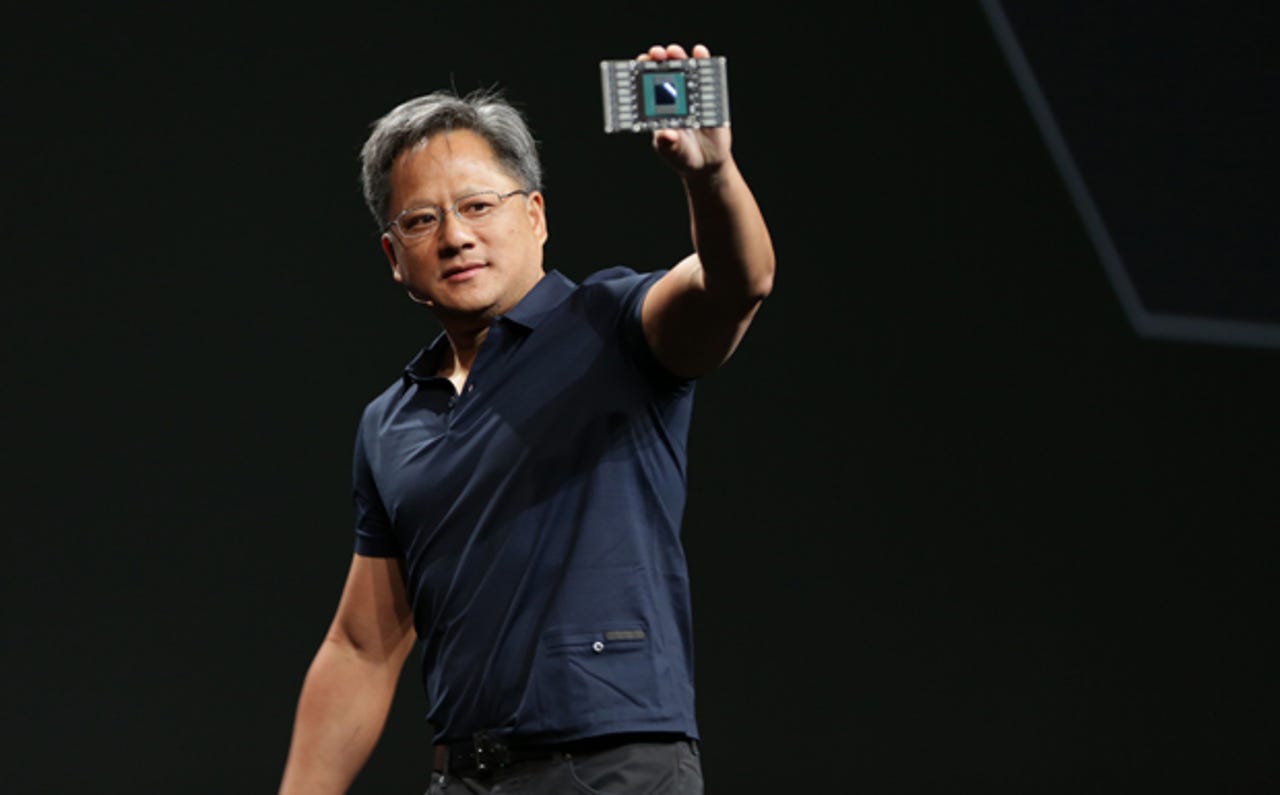

But at its core Nvidia still designs powerful GPUs and mobile processors. In his opening keynote, CEO Jen-Hsun Huang updated the company’s technology roadmap--adding some new products and removing others--and announced new products for the PC and workstation, mobile and the cloud.

Nvidia made several announcements related to its core GeForce GPUs. The first was a new high-end graphics card, the GeForce GTX Titan Z, which has two Kepler GPUs (a total of 5,760 CUDA cores) and 12GB of memory, and is capable of 8 teraflops of performance. The Titan Z, which will cost $3,000, is designed for “next-generation 5K and multi-monitor gaming,” according to Nvidia.

The replacement for Kepler is the Maxwell architecture, which will be the company’s first to support Microsoft’s DirectX 12 graphics. In February, Nvidia announced its first Maxwell GPUs, the lower-end GeForce GTX 750 (about $120 with 1GB of memory) and the GTX 750 Ti ($150 with 2GB). This was followed earlier this month by a mobile family, the GeForce 800M.

At GTC, Nvidia announced some details of the next GPU architecture, known as Pascal. Huang said that the compute capabilities of GPUs are increasingly held back by system bottlenecks. He noted that a GPU has memory bandwidth of up to 288GB per second (in the current GeForce GTX Titan), but CPU memory has bandwidth of up to 60GB per second and the PCI-Express bus can handle only 16GB per second. “If we want to increase performance, we’re going to have to solve these bottlenecks,” Huang said.
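To put those three figures side by side, here is a minimal Python sketch that works out how much slower each link is than the GPU's own memory, and how long a transfer would take at each peak rate. The bandwidth numbers are the ones Huang quoted; the 6GB payload and the function names are illustrative assumptions, and real transfers rarely reach peak bandwidth.

```python
# Peak bandwidth figures quoted in the keynote, in GB per second.
GPU_MEMORY = "GPU memory (GTX Titan)"
BANDWIDTH_GB_PER_S = {
    GPU_MEMORY: 288.0,
    "CPU memory": 60.0,
    "PCI-Express bus": 16.0,
}


def transfer_seconds(size_gb, bandwidth_gb_per_s):
    """Best-case time to move size_gb of data at the given peak bandwidth."""
    return size_gb / bandwidth_gb_per_s


def slowdown_vs_gpu_memory(link):
    """How many times slower a link is than the GPU's local memory."""
    return BANDWIDTH_GB_PER_S[GPU_MEMORY] / BANDWIDTH_GB_PER_S[link]


if __name__ == "__main__":
    payload_gb = 6.0  # hypothetical dataset size, not from the keynote
    for link, bandwidth in BANDWIDTH_GB_PER_S.items():
        seconds = transfer_seconds(payload_gb, bandwidth)
        ratio = slowdown_vs_gpu_memory(link)
        print(f"{link}: {seconds * 1000:.1f} ms, {ratio:.1f}x slower than GPU memory")
```

By these numbers the PCI-Express bus is 18 times slower than the Titan's memory, which is the gap Huang was pointing to.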

Toward that end, he announced two new GPU technologies. The first, NVLink, is a new high-speed interconnect that will increase the bandwidth between the CPU and GPU (and among multiple GPUs) by five to 12 times. The second is a new chip packaging technology that places 3D stacks of DRAM memory chips, connected with through-silicon vias (TSVs), in the same package as the GPU. With this packaging technology, Nvidia claims it can double the memory capacity, deliver a “huge leap” in memory bandwidth and increase energy efficiency by up to four times.

Pascal will be the first to incorporate these two technologies. It will have five to 12 times the interconnect bandwidth of PCIe 3.0 and two to four times the memory capacity and bandwidth (a graph indicated that it would deliver up to 1,000GB per second). But it will only be about a third of the size of current PCIe cards. Pascal will also support unified memory with CUDA 6, meaning that both the GPU and CPU will be able to directly and quickly access data stored in memory for better system performance. Scheduled to ship in 2016, Pascal apparently replaces the Volta GPU with stacked DRAM that Nvidia announced at last year’s GPU Technology Conference.
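As a rough sanity check on those multipliers, the sketch below applies them to the figures Huang cited earlier in the keynote. It assumes the baselines are the 16GB-per-second PCI-Express bus and the 288GB-per-second GeForce GTX Titan memory; Nvidia did not spell out its baselines, so treat the results as ballpark numbers rather than specifications. The function name is hypothetical.

```python
# Assumed baselines, taken from the figures Huang quoted in the keynote.
PCIE_GB_PER_S = 16.0           # PCI-Express bus
TITAN_MEMORY_GB_PER_S = 288.0  # GeForce GTX Titan memory bandwidth


def scaled_range(baseline, low_multiplier, high_multiplier):
    """Return the (low, high) bandwidth implied by a multiplier range."""
    return (baseline * low_multiplier, baseline * high_multiplier)


# NVLink: five to 12 times the interconnect bandwidth of PCIe.
nvlink_low, nvlink_high = scaled_range(PCIE_GB_PER_S, 5, 12)

# Stacked DRAM: two to four times the memory bandwidth.
memory_low, memory_high = scaled_range(TITAN_MEMORY_GB_PER_S, 2, 4)

if __name__ == "__main__":
    print(f"NVLink: {nvlink_low:.0f} to {nvlink_high:.0f} GB per second")
    print(f"Stacked DRAM: {memory_low:.0f} to {memory_high:.0f} GB per second")
```

Under those assumptions NVLink would land between 80GB and 192GB per second, and the 1,000GB per second shown on Nvidia's graph sits inside the 576GB to 1,152GB range implied for stacked memory.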

Like all Nvidia GPUs, Pascal will be used for gaming. But Huang emphasized other applications, in particular machine learning for image detection, face and gesture recognition, video search and analytics, speech recognition and translation, recommendation engines, and imaging and search. Adobe, Baidu, Yahoo’s Flickr, IBM, Netflix and Yandex are among the companies using GPU acceleration to enable these kinds of applications.

Earlier this year, at the Consumer Electronics Show, Nvidia announced the Tegra K1, its first mobile GPU to use the same Kepler architecture as its PC graphics processors. The Tegra K1 boasts some impressive specs: 192 CUDA cores, 328 gigaflops of performance, and four times better energy efficiency than an ARM Cortex-A15, according to Nvidia. But given that the current Tegra 4 and Tegra 4i have struggled to find major design wins in smartphones and tablets, the question is what applications Tegra K1 will be used for. Huang emphasized embedded applications such as computer vision for driver assistance technology.

Nvidia announced a development kit, called the Jetson TK1, with the Tegra K1 GPU and VisionWorks SDK for $192 (192 cores, get it?). The kit can be used to develop embedded applications for driver assistance, computational photography, augmented reality and robotics. In one of the highlights of the keynote, an Audi A7 with the company’s self-driving technology drove itself onto the stage and rolled to a stop. This technology will be powered by a small computer in the trunk with an upgradable Nvidia Visual Computing Module (VCM) with a Tegra K1 chip, Huang said.

Huang announced that the next Tegra mobile GPU, known as Erista, will be based on the Maxwell GPU architecture and will of course deliver better energy efficiency and higher performance. Huang didn’t provide many details but simply said, “I’ll tell you more about it as we get closer.” Erista is scheduled to ship sometime in 2015, and as with the GeForce roadmap, it replaces (or pushes out) a previously announced chip, in this case the Parker SoC (system-on-chip). Announced at last year’s GTC, Parker featured Nvidia’s Denver CPU based on the ARMv8 64-bit instruction set and Maxwell graphics, and was to be manufactured using a more advanced process with 3D FinFET transistors. It isn’t clear whether Parker’s push-out has to do with changes in product strategy, delays in foundry TSMC’s 16nm FinFET process, or perhaps a little of both.

There were a couple of other product announcements. The Iray Visual Computing Appliance (VCA) is a “rendering appliance” with eight Kepler GPUs (a total of 23,040 cores), 12GB of memory per GPU and integrated networking (10GbE and InfiniBand). The appliance provides more horsepower than a typical workstation (40 times more than a Quadro K5000 workstation) to accelerate Nvidia Iray for ray tracing photo-realistic 3D designs from applications such as Dassault’s Catia, Autodesk’s 3ds Max and Maya, and RTT’s Bunkspeed Drive. Honda’s R&D group is using an Iray VCA cluster with its RTT 3D design tools to render photo-realistic models to refine car designs. This “virtual prototyping” saves manufacturers money and helps them get products to market faster. The Iray VCA will be available this summer starting at $50,000.

Finally, Huang and Ben Fathi, VMware’s Chief Technology Officer, announced that VMware’s Horizon Desktop-as-a-Service platform will use Nvidia’s GRID “GPU in the cloud” for applications that are hard to virtualize because they require low latency and detailed 3D graphics. Nvidia already has a similar vGPU (virtual GPU) partnership with Citrix. VMware’s Horizon DaaS is available from one service provider (Navisite), but integration with GRID won’t roll out until next year. Many companies already use VMware’s server virtualization technology, but haven’t rolled out desktop virtualization because of cost or a poor user experience. Nvidia says those issues have been resolved and that its vGPU technology will allow enterprises to shift to end-to-end virtualization.